Cisco Crosswork Situation Manager 8.0.x Implementer Guide

Available Languages

Cisco Crosswork Situation Manager 8.0.x Implementer Guide

(Powered by Moogsoft AIOps 8.0)

The Implementer Guide contains instructions to help you plan, install, configure, and maintain Cisco Crosswork Situation Manager.

Planning and Installation

System Requirements lists operating systems, browsers and third-party software required to run Cisco Crosswork Situation Manager. It also provides sizing recommendations for small, medium and large Cisco Crosswork Situation Manager systems.

Install Cisco Crosswork Situation Manager tells you how to install Cisco Crosswork Situation Manager using the various deployment options, how to install Add-ons and provides information on troubleshooting an installation.

Secure Your Installation tells you how to apply various security measures to your Cisco Crosswork Situation Manager system, including SSL certificates, external authentication, single sign-on with LDAP and SAML, and how to encrypt database communications. It also tells you how to manage users, roles and teams in Cisco Crosswork Situation Manager.

Data Ingestion and Event Processing

Before Ingesting Data outlines the steps to take before your Cisco Crosswork Situation Manager system can begin to ingest data. These include configuring logging, changing passwords for default users, analyzing your data and performing a business analysis to determine your Situation design goals.

Ingest Event Data from Monitoring Tools tells you how to prepare your data for ingestion, including how to select, clean, format, integrate and construct the data. It tells you how to map, parse and normalize data and describes the types of Link Access Module (LAM) and LAMbots you will use to achieve this.

Alert Creation and Enrichment

Configure Alert Creation tells you how to configure the Alert Builder, which creates alerts by processing event data from the Message Bus.

Process Alerts describes the components responsible for adding information to alerts and reducing noise. It tells you how to use enrichment processes to add supplemental data to alerts and Situations, and how to use topologies to view alerts and Situations according to the relationships that are important to your users.

Alert Clustering and Ticketing

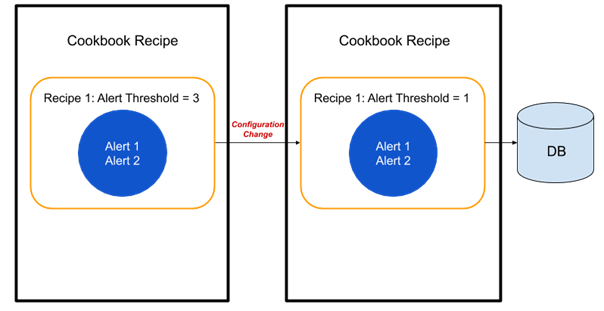

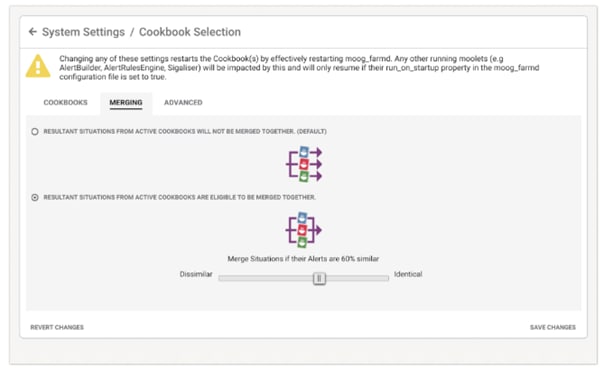

Situation Design tells you how to use Cisco Crosswork Situation Manager features to create insightful, informative Situations for your users and teams. These features include Cookbook, Tempus, Merge groups, and topologies.

Process Situations tells you how to create a Situation action Workflow engine to trigger workflows based on Situation actions. For example, when a Situation is created, updated, or closed.

Integrate with Ticketing Services tells you how to integrate with ticketing services including ServiceNow.

Operational Administration

Configure Operator Experience tells you how to configure the UI to best suit your operators, including the landing page, hotkeys, alert and Situation columns, and ChatOps. It also tells you how to configure and retrain Probable Root Cause (PRC).

Reporting and Dashboards tells you how to use Insights to analyze trends in operational performance. You can use the default dashboard or you can use Grafana to create a custom dashboard.

Customize Cisco Crosswork Situation Manager Further tells you how to use customization options in Cisco Crosswork Situation Manager including server and client tools. It also contains information on how to troubleshoot problems in Cisco Crosswork Situation Manager and how to run diagnostic tools.

Housekeeping Tasks provides instructions on maintaining your Cisco Crosswork Situation Manager system, including upgrading the software, maintaining Situation design, configuring historic data retention, archiving Situations and alerts, and scheduling system downtime.

Planning and Installation

System Requirements lists operating systems, browsers and third-party software required to run Cisco Crosswork Situation Manager. It also provides sizing recommendations for small, medium and large Cisco Crosswork Situation Manager systems.

Install Cisco Crosswork Situation Manager tells you how to install Cisco Crosswork Situation Manager using the various deployment options, how to install Add-ons and provides information on troubleshooting an installation.

Secure Your Installation tells you how to apply various security measures to your Cisco Crosswork Situation Manager system, including SSL certificates, external authentication, single sign-on with LDAP and SAML, and how to encrypt database communications. It also tells you how to manage users, roles and teams in Cisco Crosswork Situation Manager.

System Architecture Overview

You can think of the Cisco Crosswork Situation Manager architecture in different ways:

1. The data processing modules that handle the various processing tasks like data ingestion and core data processing. To learn about data processing, see Data Processing Flow.

2. The individual software components that comprise and support data processing and the User Interface, like the Percona database, RabbitMQ, Apache Tomcat, Ngnix, etc. To learn about the software components and their relationship to data processing, see Server Roles.

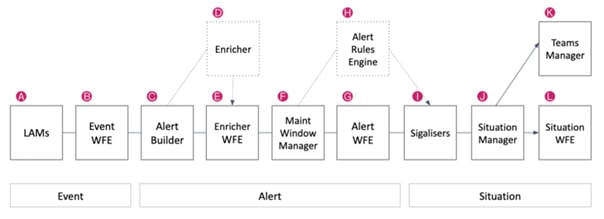

Data Processing Flow

Before you configure or customize data processing in Cisco Crosswork Situation Manager, take some time to learn the components that comprise the basic flow for processing event, alert, and Situation data.

Except for the Link Access Modules (LAMs) that perform data ingestion, the rest of the data processing components are individual Moolets that run as part of the Moogfarmd. For more information, see Configure Data Processing.

A) LAMs / Data Ingestion

The LAMs or Integrations ingest raw event data from your monitoring sources. LAMs do one of the following with the event data:

1. Map raw events into Cisco Crosswork Situation Manager events.

2. Discard events based upon system configuration. For example a blacklisting rule.

3. See Introduction to Integrations for more information.

B) Event Workflow Engine

See Workflow Engine for an overview of how the Workflow Engine UI works. See Workflow Engine Moolets for information on the Moolet.

C) Alert Builder

The Alert Builder deduplicates events into alerts and calculates the entropy value for alerts. Deduplicated events are visible in the UI after passing through the Alert Builder.

See Configure Event De-duplication in the Alert Builder for more information.

D) Enricher

The Enricher is an optional moolet that you can use to enrich alert data from external data sources such as a CMDB. See Enrichment Overview for information about the enrichment process.

See Enricher Moolet for information on the Moolet.

E) Enrichment Workflow Engine

The Enrichment Workflow Engine listens for alerts on the message bus and processes them based upon any active workflows. See Workflow Engine for an overview of how the Workflow Engine UI works. See Workflow Engine Moolets for information on the Moolet.

See Workflow Engine for an overview of how the Workflow Engine UI works. See Workflow Engine Moolets for information on the Moolet.

F) Maintenance Window Manager

To learn how to create a maintenance window, see Maintenance Window Manager for information on the Moolet.

G) Alert Workflow Engine

See Workflow Engine works for an overview of how the. See Workflow Engine Moolets for information on the Moolet.

H) Alert Rules Engine

If you upgraded from a previous version, you may have data processing configurations that use the Alert Rules Engine. The Alert Rules Engine lets you define criteria to process alerts according to different Transitions to move these alerts to different Action States. Before you start an implementation with the Alert Rules Engine, see if the Workflow Engine meets your needs.

See Alert Rules Engine for more information.

I) Clustering Algorithms

See the Clustering Algorithm Guide for an overview of the algorithms. To configure a clustering algorithm, see Configure Clustering Algorithms.

J) Situation Manager

The Situation Manager listens for Situation creation, update, and closure actions and lets you automate processes like data enrichment, assignment, or notification to a ticketing system.

The Labeler is part of the Situation Manager. See Situation Manager for more information.

K) Teams Manager

The Teams Manager Moolet listens for new Situation creation, update, and closure actions. It handles the team assignments you create in the Settings UI. See Manage Teams.

See Teams Manager Moolet for information on the Moolet.

L) Situation Workflow Engine

See Workflow Engine for an overview of how the Workflow Engine UI works. See Workflow Engine Moolets for information on the Moolet.

The following video further explains the data processing flow:

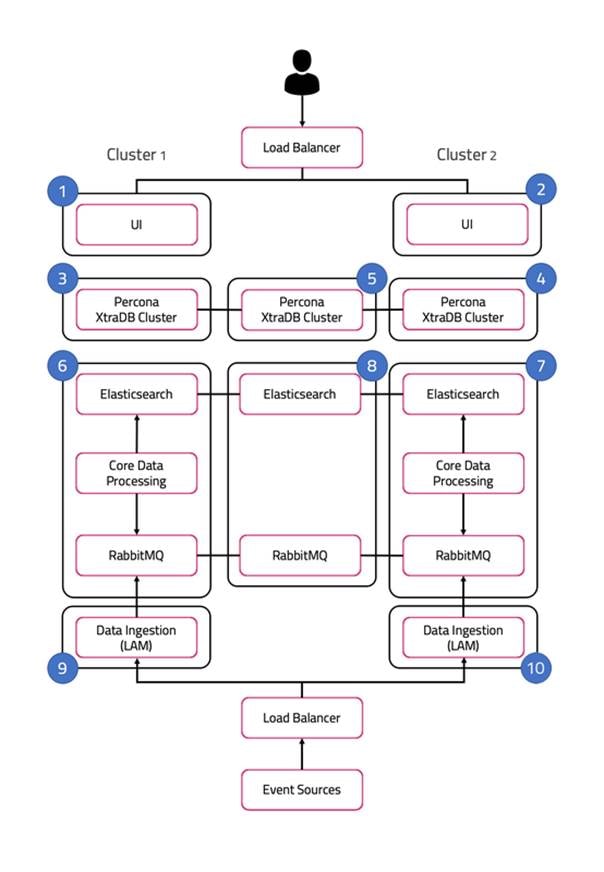

Server Roles

In order to plan your Cisco Crosswork Situation Manager deployment, it helps to understand the different components of Cisco Crosswork Situation Manager and the options for distributing them among multiple physical or virtual machines.

A server role within an Cisco Crosswork Situation Manager installation is a functional entity containing components that must be installed on the same machine. You can distribute different roles to different machines.

The following diagram illustrates the typical deployment strategy for the components of Cisco Crosswork Situation Manager in an Highly Available configuration:

The architecture is built upon two clusters with software components that serve several roles. See also HA Reference Architecture.

In the case of a single-server installation, you install all the roles on one machine.

UI role

The UI role comprises Nginx and Apache Tomcat, represented in the diagram as numbers 1 and 2. The Cisco Crosswork Situation Manager servlets groups run in active / active configuration.

Ngnix is the proxy for the web application server and for integrations.

Tomcat is the web application server. It reads and writes to the Message Bus and the database.

Database role

Percona XtraDB Cluster serves the database role, represented in the diagram as numbers 3, 4, and 5. The cluster runs in active / active standby / active standby mode.

Percona Xtra Db Cluster is the system datastore that handles transactional data from other parts of the system: LAMs (integrations), data processing, and the web application server.

HA Proxy handles database query routing and load balancing.

Core role

The Core role, represented by numbers 6 and 7 in the diagram comprises the following:

1. Moogfarmd, the Cisco Crosswork Situation Manager data processing component. Moogfarmd consumes messages from the Message Bus. It processes event data in a series of servlet-like modules called Moolets. Moogfarmd reads and writes to the database and publishes messages to the bus.

2. RabbitMQ which provides the message queue. It receives published messages from integrations. It publishes messages destined for data processing (Moogfarmd) and the web application server.

3. Elasticsearch which provides the UI search capability. It indexes documents from the indexer Moolet in the data processing series. It returns search results to Tomcat.

In HA deployments, Moogfarmd automatically runs in active / passive mode. See High Availability Overview for more information.

In concert with the the Redundancy Role server, RabbitMQ and Elasticsearch run in active / active / active mode.

Redundancy role

The redundancy role, represented by number 8 in the diagram, provides the third node required for true HA for RabbitMQ and Elasticsearch.

Data ingestion role

Link Access Modules (LAMs) make up the data ingestion role represented by numbers 9 and 10 in the diagram. Receiving LAMs listen for events from monitoring sources and Polling LAMs poll monitoring sources for events. Both parse and encode raw events into discrete events, and then write the discrete events to the Message Bus.

In HA deployments, receiving LAMs run in active / active mode, but polling LAMs run in active / passive mode.

Load balancers

The load balancers in front of the UI server role and the data ingestion server role are the customer's responsibility.

Scale Your Cisco Crosswork Situation Manager Implementation

Cisco Crosswork Situation Manager supports several options to help you scale your implementation to meet your performance needs. Monitor and Troubleshoot Cisco Crosswork Situation Manager to monitor your system for signs that it is time to scale.

For information on the performance tuning capabilities of individual Cisco Crosswork Situation Manager components, see Monitor Component Performance.

Horizontal Scaling

Cisco Crosswork Situation Manager currently supports horizontal scaling at the integration (LAM) and visualization (Ngnix + Tomcat) layers.

1. You can add more LAMs, either on additional servers or on the same server, to achieve higher event rates. In this case, you have the option to configure event sources to send to the parallel LAMs separately or to implement a load balancer in front of the LAMs.

2. You can add Nginx/Tomcat UI "stacks" behind a load balancer to increase performance for UI users. Adding UI stacks does not always provide better performance. It can degrade performance by adding more connection pressure to the database.

The following are typical horizontal scaling scenarios:

1. You can add an additional LAM to process incoming events if you see that, despite attempts to tune the number of threads for an individual LAM, its event rate hits a plateau. This is a sign that the LAM is the bottleneck, so adding other instances of the LAM behind a load balancer will allow a higher event processing rate.

2. You can add an additional UI stack if database pool diagnostics for Tomcat suggest that all or most of the database connections are constantly busy with long running connections, but the database itself is performing fine.

The data processing layer (moogfarmd) is not currently well suited to horizontal scaling. Moolets of the same type cannot currently share processing. Adding more Moolets like the AlertBuilder in an attempt to increase the event processing rate is likely to lead to database problems.

Vertical Scaling

All Cisco Crosswork Situation Manager components ultimately benefit from being run on the best available hardware, but the data processing layer (moogfrarmd) benefits most from this approach. Depending on the number and complexity of Moolets in your configuration, you will see performance benefits in data processing on servers having the fastest CPUs with numerous cores and a large amount of memory. This enables you to increase the number of threads for moogfarmd to improve processing speed. You should also locate the database on the most feasibly powerful server (clock speed, number of cores and memory) with the biggest/fastest disk.

Distributed Installations

In some cases you distribute Cisco Crosswork Situation Manager components among different hosts to gain performance because it reduces resource contention on a single server: The most common distribution is to install the database on a separate server, ideally within the same fast network to minimize risk of latency. An additional benefit of this move is that it allows you to run a clustered or master/slave database for redundancy.

Another common distribution is to install the UI stack (Nnginx) on a separate server within the same fast network.

Some integrations (LAMs) benefit in being closer to the source so are a candidates for distribution.

See Server Roles and HA Installation for more information.

System Requirements

Cisco Crosswork Situation Manager 8.0 Supported Environments

The following operation systems, browsers and third-party software are either supported or are required in order to run Cisco Crosswork Situation Manager.

Any operating systems and browsers not listed in the sections below are not officially recommended or supported.

Operating systems

You can run Cisco Crosswork Situation Manager on the following versions of Red Hat Enterprise Linux®(RHEL) and CentOS Linux:

| OS |

Version |

| CentOS |

v7 |

| RHEL |

v7 |

Note

No other Linux distributions are currently supported

Browsers

You can use the following browsers for the Cisco Crosswork Situation Manager UI:

| Browser |

Version |

| Chrome |

Latest |

| Firefox |

Latest |

| Safari |

Latest |

| Edge |

Latest |

Note

Due to a known issue in the Safari web browser, you must take additional steps if you've enabled the enhanced Content Security Policy in v8.0 and you want to access the UI with Safari. For more information, see RPM - Upgrade UI components or Tarball - Upgrade UI components depending on your implementation type.

After upgrading macOS to Catalina, the UI is inaccessible in Chrome, Safari and Edge browsers because self-signed certificates are no longer trusted. For workaround instructions see Catalina Browser Certificate Workaround.

Supported Third Party software

Cisco Crosswork Situation Manager v8.0 ships with the following third-party applications:

| Application |

Version |

| Apache Tomcat® |

v9.0.35 |

| Elasticsearch |

v6.8.1 (LTS version) |

| Nginx |

v1.14.0 or above |

| RabbitMQ |

v3.7.4 |

| Percona XtraDb Cluster |

v5.7.28 |

| Percona XtraBackup |

v2.4.20 |

| HA Proxy |

v1.5.18-9.el7.x86_64 |

Other supported application packages include:

| Application |

Version |

| Erlang |

v20.1.7 |

| JDK |

java-11-openjdk-devel >= 1:11.0.5 |

| Apache Tomcat® Native |

v1.2.23 or above |

| MySQL |

v5.7.28 |

You can do a minor upgrade of the JDK (for example, from 11.0.4 to 11.0.5) without having to also update the Cisco Crosswork Situation Manager RPM files.

Note

MySQL is supported for upgrading customers only. New Cisco Crosswork Situation Manager installations use Percona XtraDB Cluster for database management.

Integration support

The following table outlines the vendor supported integrations for the current version of Cisco Crosswork Situation Manager alongside the corresponding supported software versions.

Integrations support IPv6 connectivity.

| Integration Version |

Supported Software / Version |

| Ansible Tower Integration v1.11 |

Ansible Tower v3.0, 3.1 |

| Apache Kafka Integration v1.14 |

Apache Kafka v0.9, 1.1, 2.2 |

| AppDynamics Integration v2.2 |

AppDynamics v4.0, 4.1 |

| AWS CloudWatch Integration v2.1 |

aws-java-sdk v1.11 |

| AWS SNS Integration v1.3 |

Runtime Node.js 8.10, Node.js 10.x, and Node.js 12.x |

| BMC Remedy Integration v1.9 |

Remedy v9.1 |

| CA UIM Integration v1.8 |

CA Nimsoft UIM v8.4 |

| CA Spectrum Integration v2.3 |

CA Spectrum v10.2 |

| Catchpoint Integration v1.1 |

Catchpoint v2019 |

| Cherwell Service Management Integration v1.6 |

Cherwell v9.3 |

| Datadog Polling Integration v1.4 |

Datadog v2018 |

| Datadog Webhook Integration v1.12 |

Datadog v5.21 |

| Dynatrace APM Plugin Integration v1.9 |

Dynatrace v6.5, 7.0 |

| Dynatrace APM Polling Integration v2.4 |

Dynatrace v7.2.0.1697 |

| Dynatrace Notification Integration v1.6 |

Dynatrace v1.187.132.20200224-165652 |

| Email Integration v2.6 |

IMAP, IMAPS, POP3, POP3S |

| EMC Smarts Integration v1.5 |

RabbitMQ v3.7.4 and Smarts v9.5 |

| ExtraHop Integration v1.2 |

ExtraHop v2018 |

| AWS FireLens v1.01 |

AWS FireLens (New in 8.0) |

| FluentD Integration v1.11 |

FluentD v0.12 |

| Grafana Integration v1.2 |

Grafana v5.2.4 |

| HP NNMi Integration v2.6 |

HP NNMi v10.30 |

| HP OMi Plugin Integration v1.9 |

HP OMi v10.1 |

| HP OMi Polling Integration v2.6 |

HP OMi v10.1 |

| JIRA Service Desk Integration v1.12 |

JIRA Service Desk v. 4.7.1 and JIRA Cloud Rest API v. 2 |

| JIRA Software Integration v1.12 |

JIRA Software v. 7, v. 8.7.1 and JIRA Cloud Rest API v. 2 |

| JMS Integration v1.12 |

ActiveMQ v5.14, JBoss v10, WebLogic v12.0 |

| Moogsoft Express Polling Integration v1.0 |

Moogsoft Express (new in 8.0) |

| Moogsoft Express Webhook Integration v1.0 |

Moogsoft Express (new in 8.0) |

| Microsoft Azure Integration v1.2 |

Microsoft Azure Monitor v2018 |

| Microsoft Azure Classic Integration v1.2 |

Microsoft Azure Classic v2018 |

| Microsoft SCOM Integration v2.7 |

Microsoft SCOM v2012, 2016 and 2019 |

| Microsoft Teams Integration v1.1 |

Microsoft Teams v1.2.00.3961 |

| Nagios Integration v2.10 |

Nagios vXI |

| New Relic Integration v1.10 |

New Relic v2016 |

| New Relic Polling Integration v2.1 |

New Relic v2.3 |

| New Relic Insights Polling Integration v1.1 |

New Relic v2.3 |

| Node.js Integration v1.10 |

Node.js v1.6 |

| NodeRED Integration v1.10 |

Nagios Red v016, 017 |

| OEM Integration v2.3 |

Oracle Enterprise Manager v12c, 13c |

| Office 365 Email Integration v1.0 |

|

| OpsGenie v1.0 |

Opsgenie's Alerts v2 REST API (new in 8.0) |

| PagerDuty v1.0 |

PagerDuty SaaS (new in 8.0) |

| Pingdom Integration v1.9 |

Pingdom v2017 |

| Sensu Integration v1.0 |

Sensu Core v1.8 |

| ServiceNow Integration v4.5 |

ServiceNow vNew York, Madrid, London, Kingston |

| SevOne Integration v1.5 |

SevOne v5.7.2.0 |

| Site24x7 Integration v1.1 |

Site24x7 June-2019 |

| Slack Integration v1.7 |

Slack v3.1 |

| SolarWinds Integration v3.3 |

SolarWinds v. 11.5, v. 12.2, v. 12.3, v. 2019.4 |

| Splunk Integration v2.6 |

Splunk v. 6.5, v. 6 6, v. 7.0, v. 7.1, v. 7.2, v. 7.3, v. 8.0 |

| Splunk Streaming Integration v1.1 |

Splunk v. 7.1, v. 7.2, v. 7.3, v. 8.0 |

| Sumo Logic Integration v1.2 |

Sumo Logic v2018 |

| VMware vCenter Integration v2.4 |

VMware vCenter v. 6.0, v. 6.5, v. 6.7 |

| VMware vROps Integration v2.4 |

VMware vROps v6.6, v7.5.0 |

| VMware vSphere Integration v2.5 |

VMware vSphere v. 6.0, v. 6.5, v. 6.7 |

| VMware vRealize Log Insight Integration v2.5 |

VMware vRealize Log Insight v4.3 |

| WebSphere MQ Integration v1.13 |

WebSphere MQ v8 |

| xMatters Integration v2.0 |

xMatters v5.5 |

| Zabbix Integration v1.0 |

Zabbix v3.4 |

| Zabbix Polling Integration v3.5 |

Zabbix v3.2, v4.0, v4.4 |

| Zenoss Integration v2.6 |

Zenoss v4.2, v6.3.2 |

Add-ons

Cisco Crosswork Situation Manager v8.x supports Cisco Add-ons v2.0 and later. See Add-ons.

Sizing Recommendations

The sizing recommendations below are guidelines for small, medium and large Cisco Crosswork Situation Manager systems based on input data rate and volume. Event calculations depend on the number of events sent to the Alert Builder.

In the context of this guide, Managed Devices (MDs) are all of the components in the network infrastructure that generate and emit events:

Small

| Environment |

CPU |

File System |

| 1000 to 5000 Managed Devices (MDs) Less than 20 users Up to 5 integrations Less than 20 events per second to Alert Builder |

8 Cores 32GB RAM 2 x 1GB Ethernet Physical or Virtual Server |

1 TB Local or SAN See Retention policy below. |

Medium

| Environment |

CPU |

File System |

| 5000 to 20,000 MDs Between 20 and 40 users Between 6 and 10 integrations Between 20 and 100 events per second to Alert Builder |

16 Cores 64GB RAM 2 x 1GB Ethernet Physical or Virtual Server |

1 TB Local or SAN See Retention policy below. |

Large

| Environment |

CPU |

File System |

| More than 20,000 MDs More than 40 users More than 10 integrations More than 100 events per second to Alert Builder |

24+ Cores 128GB RAM 2 x 1GB Ethernet Physical or Virtual Server |

1 TB Local or SAN See Retention policy below. |

Virtualization restrictions

Consider the following restrictions for virtual environments:

· Ideally all Cisco Crosswork Situation Manager servers (guests) should be on the same compute node (host) sharing a hypervisor or virtual machine monitor. This minimizes latency between Cisco Crosswork Situation Manager guests.

· If servers are liable to automated resource balancing (for example vMotion) and liable to move compute nodes, then all Cisco Crosswork Situation Manager servers should be moved at the same time. If this is not possible, then Cisco Crosswork Situation Manager servers should be constrained to movements that minimize the resulting network distance.

· If Cisco Crosswork Situation Manager servers are distributed amongst compute nodes then the network “distance” (logical hops) between the nodes should be minimized.

· Network latency between components may affect event processing throughput. This is especially true of the core to db servers.

Shared storage

On any shared compute platform Cisco makes the following recommendations:

The minimum resource requirements are multiplied by at least 33% to account for shared resource usage and allocation.

Storage latency will reduce effective throughput at the core processing layer and should be minimised within the available constraints of a SAN.

Cisco Crosswork Situation Manager should be treated as a highly transactional system and not placed on the same compute node as other highly transactional applications that may cause SAN resource contention.

SAN port and array port contention should be minimized.

Storage medium should be as fast as possible to minimize the transaction times to the database.

Retention policy

You can determine the amount of disk space in GB required for the database server using the following calculation:

(es x eps x d x 86,400) x 1.2 / 1,000,000

For this calculation: es = average event size in KB, eps = average events per second, d = number of days of retention and 86,400 represents the number of seconds per day.

For the majority of event sources, you can reasonably estimate a 2KB event size. However, some sources have larger than average events. For example, Microsoft SCOM. A 2KB base takes account of the other event and alert based storage such as an alert's Situation membership and Situation room thread sizes.

The average event rate is across all LAMs and integrations.

Note

If you do not enable the Archiver tool, the historic database will grow indefinitely. See Archive Situations and Alerts for more information.

For example, the following calculation represents a 400 day retention period with an average event size of 2KB at 300 events per second:

(2 x 300 x 400 x 86,400) x 1.2 / 1,000,000 = 24,883.2 GB.

Install Cisco Crosswork Situation Manager

Use this guide to learn how to install Cisco Crosswork Situation Manager:

If you are installing another version, see Welcome to the Cisco Docs! for more information. Refer to the following topics to help choose the right environment for your Cisco Crosswork Situation Manager deployment:

1. The Cisco Crosswork Situation Manager Cisco Crosswork Situation Manager 8.0 Supported Environments topic details supported operating systems and system requirements.

2. The Sizing Recommendations topic will help you make sure you select hardware to support your data ingestion and user requirements.

If you are upgrading Cisco Crosswork Situation Manager, see Upgrade Cisco Crosswork Situation Manager.

Deployment options

You have the option to install all Cisco Crosswork Situation Manager packages on a single machine. However, the modular approach of the Cisco Crosswork Situation Manager distribution means fewer dependencies between individual packages. This means you have the flexibility to install different components to different machines. See Server Roles for a description of how you can distribute the different components amongst multiple machines.

1. For smaller deployments, you can run all the components in on a single machine.

— If you have root access to the machine and want to use Yum to install, see RPM Installation.

1. For most production deployments, you may install different components to different machines in order to distribute the workload. See High Availability Overview for more information.

Install Cisco Add-Ons

Cisco periodically releases add-ons to extend and enhance the core Cisco Crosswork Situation Manager functionality. For example, new Workflow Engine functions, new Workflow Engines, or Integrations tiles. All add-ons releases are cumulative and include the fixes from previous releases.

Once you have finished upgrading or installing Cisco Crosswork Situation Manager, you should install the Cisco Crosswork Situation Manager add-ons to ensure you have the latest version.

See Install Cisco Add-ons for more information on how to install the Cisco Crosswork Situation Manager add-ons.

Troubleshoot the installation

You may encounter issues when installing or upgrading Cisco Crosswork Situation Manager.

See Troubleshoot Installation and Upgrade for more information on how to resolve these issues.

For more information on message bus or Elasticsearch configuration, see Configure the Message Bus or Configure Search and Indexing.

Prepare to Install Cisco Crosswork Situation Manager

Before you start to install Cisco Crosswork Situation Manager v8.0.x, you must perform certain pre-installation tasks.

The instructions to follow depends on your preferred mode of deployment:

1. RPM: Use this method if you have root access to your Cisco Crosswork Situation Manager server(s) and you do not want to change the default installation locations.

2. Use the offline instructions if your Cisco Crosswork Situation Manager server(s) do not have access to the internet.

For pre-installation instructions, refer to one of the following topics:

1. Online RPM pre-installation

2. Cisco Crosswork Situation Manager - Offline RPM pre-installation

Cisco Crosswork Situation Manager - Offline RPM pre-installation

You must perform certain preparatory tasks before you install Cisco Crosswork Situation Manager v8.0.x.

Follow these steps if you have root access to the machine or machines on which you will install or upgrade Cisco Crosswork Situation Manager, but you cannot connect to Yum repositories outside your network from those machines.

If you are performing another type of installation, see:

a. Online RPM pre-installation: #.

b. Online Tarball pre-installation: #.

c. Offline Tarball pre-installation: #.

Before you begin

Before you begin to prepare for the installation, verify the following:

1. You have root access to the system where you plan to install Cisco Crosswork Situation Manager.

2. You are familiar with the supported versions of third party software, as outlined in Cisco Crosswork Situation Manager 8.0 Supported Environments.

Download the installation files

Complete the following steps before you perform an offline RPM installation of Cisco Crosswork Situation Manager v8.0.x:

· Download the Percona and dependency packages using cURL on an internet-connected host:

curl -L -O http://repo.percona.com/percona/yum/release/7/RPMS/x86_64/Percona-XtraDB-Cluster-shared-57-5.7.28-31.41.1.el7.x86_64.rpm

curl -L -O http://repo.percona.com/percona/yum/release/7/RPMS/x86_64/Percona-XtraDB-Cluster-client-57-5.7.28-31.41.1.el7.x86_64.rpm

curl -L -O http://repo.percona.com/percona/yum/release/7/RPMS/x86_64/Percona-XtraDB-Cluster-server-57-5.7.28-31.41.1.el7.x86_64.rpm

curl -L -O http://repo.percona.com/percona/yum/release/7/RPMS/x86_64/Percona-XtraDB-Cluster-shared-compat-57-5.7.28-31.41.1.el7.x86_64.rpm

curl -L -O http://repo.percona.com/percona/yum/release/7/RPMS/x86_64/percona-xtrabackup-24-2.4.19-1.el7.x86_64.rpm

· Copy the Percona install_percona_nodes.sh install script and RPM install files to all servers that will house a database node.

· Copy the tar.gz files to all servers that will run Cisco Crosswork Situation Manager components.

· Download the HA Proxy RPM on an internet-connected host (requires root permissions):

yum install --downloadonly --downloaddir ./ haproxy

Copy the HA Proxy RPM to the servers that will have the Core, UI and LAM server roles.

See Server Roles for more information on the Core, UI and LAM server roles.

Prepare the local Yum repositories

Follow these steps to create local Yum repositories to house the installation packages. If you are running a distributed installation, perform these steps on each machine that will run Cisco Crosswork Situation Manager components.

· Create two directories to house the repositories. For example:

sudo mkdir -p /media/localRPM/BASE/

sudo mkdir -p /media/localRPM/ESR/

· Extract the two Tarball files into separate directories and move the HA Proxy RPM to /media/localRPM/BASE/. For example:

tar xzf *-MoogsoftBASE7_offline_repo.tar.gz -C /media/localRPM/BASE/

tar xzf *-MoogsoftESR_8.0.0.1_offline_repo.tar.gz -C /media/localRPM/ESR/

mv haproxy*rpm /media/localRPM/BASE/

Back up the existing /etc/yum.repos.d directory. For example:

mv /etc/yum.repos.d /etc/yum.repos.d-backup

· Create an empty /etc/yum.repos.d directory. For example:

mkdir /etc/yum.repos.d

· Create a local.repo file ready to contain the local repository details:

vi /etc/yum.repos.d/local.repo

· Edit local.repo and configure the baseurl paths for BASE and ESR to point to the your directories. For example:

[BASE]

name=MoogCentOS-$releasever - MoogRPM

baseurl=file:///media/localRPM/BASE/RHEL

gpgcheck=0

enabled=1

[ESR]

name=MoogCentOS-$releasever - MoogRPM

baseurl=file:///media/localRPM/ESR/RHEL

gpgcheck=0

enabled=1

· Clean the Yum cache:

yum clean all

Verify that Yum can detect the newly created repositories. For example:

yum info "moogsoft-*"

Available Packages

Arch : x86_64

Version : 8.0.0.1

Release : XYZ

Size : 76 M

Repo : ESR

Summary : Algorithmic Intelligence for IT Operations

URL : https://www.moogsoft.com

License : Proprietary

Description : Moogsoft AIOps (8.0.0.1) - Build: XYZ - (Revision: XYZ)

The results should include the following packages:

Name : moogsoft-db

Name : moogsoft-integrations

Name : moogsoft-integrations-ui

Name : moogsoft-mooms

Name : moogsoft-search

Name : moogsoft-server

Name : moogsoft-ui

Name : moogsoft-utils

Name : moogsoft-common

Name : moogsoft-ccsm

· Install the downloaded Percona RPMs on all servers that will house a database node:

yum -y install Percona-XtraDB-Cluster-*.rpm percona-xtrabackup-24-2.4.19-1.el7.x86_64.rpm

· Install Java 11:

VERSION=11.0.7.10; yum -y install java-11-openjdk-headless-${VERSION} java-11-openjdk-${VERSION} java-11-openjdk-devel-${VERSION}

· Set SELinux to permissive mode or disable it completely. For example, to set SELinux to permissive mode:

setenforce 0

If you want to disable SELinux at boot time, edit the file /etc/sysconfig/selinux.

· Ensure the current user, or the user that will be running the Moogsoft processes, has sufficient resource limits by running the following commands as that user:

ulimit -n

ulimit -u

If either of the values returned are less than 65536, add the following to the /etc/security/limits.conf file as root:

moogsoft soft nofile 65536

moogsoft hard nofile 65536

moogsoft soft nproc 65535

moogsoft hard nproc 65535

Optional: GPG key validation of the RPMs

To validate the RPMs before installation:

· Download the key. For 8.0.0.1 and prior:

https://keys.openpgp.org/vks/v1/by-fingerprint/2529C94A49E42429EDAAADAEC7A2253BFC50512A

· Copy the key to the server onto which the RPMs or tarball will be installed (it will be an .asc file)

· Import the key. For example, for 8.0.0.1 and prior:

gpg --import 2529C94A49E42429EDAAADAEC7A2253BFC50512A.asc

· You can download the CCSM RPMs from Cisco eDelivery.

· Move the RPMs and .sig files into the same folder. For example, /tmp, as used in the example below.

· Copy the following code into a bash terminal and run it to perform the validation:

while read RPM

do

echo "Current RPM: $RPM"

gpg --verify ${RPM}.sig ${RPM} 2>&1

done < <(find /media/localRPM/ESR/RHEL/ -name '*.rpm');

· Confirm that all the commands for each RPM report:

Good signature from "Moogsoft Information Security Team "<security@moogsoft.com>"

Your local Yum repositories are now ready. Proceed with your offline installation or upgrade. See the upgrade instructions relevant to your deployment.

RPM Installation

This topic describes how to install Cisco Crosswork Situation Manager v8.0.x on a single host.

Follow these steps if you have root access to the machine or machines on which you will install Cisco Crosswork Situation Manager, and you can connect to Yum repositories outside your network from those machines.

To install Cisco Crosswork Situation Manager in a highly available distributed environment, see HA Installation.

Before you begin

Before you start to install Cisco Crosswork Situation Manager, complete all steps in one of the following documents:

· Online RPM pre-installation: If you have root access to the machine or machines on which you will install Cisco Crosswork Situation Manager, and you can connect to Yum repositories outside your network from those machines.

· Cisco Crosswork Situation Manager - Offline RPM pre-installation: If you have root access to the machine or machines on which you will install or upgrade Cisco Crosswork Situation Manager, but you cannot connect to Yum repositories outside your network from those machines.

Install Cisco Crosswork Situation Manager

To complete an RPM installation of Cisco Crosswork Situation Manager v8.0.x, perform the following steps:

· Download and install the Cisco Crosswork Situation Manager RPM packages, using one of the following methods according to your deployment type:

· If you are performing an RPM installation:

VERSION=8.0.0.1; yum -y install moogsoft-server-${VERSION} \

moogsoft-db-${VERSION} \

moogsoft-utils-${VERSION} \

moogsoft-search-${VERSION} \

moogsoft-ui-${VERSION} \

moogsoft-ccsm-${VERSION} \

moogsoft-common-${VERSION} \

moogsoft-mooms-${VERSION} \

moogsoft-integrations-${VERSION} \

moogsoft-integrations-ui-${VERSION}

· If you are performing an offline RPM installation, navigate to the location where you copied the RPM files and install them:

yum install *.rpm

· Edit your ~/.bashrc file to contain the following lines:

export MOOGSOFT_HOME=/usr/share/moogsoft

export APPSERVER_HOME=/usr/share/apache-tomcat

export JAVA_HOME=/usr/java/latest

export PATH=$PATH:$MOOGSOFT_HOME/bin:$MOOGSOFT_HOME/bin/utils

· Source the ~/.bashrc file:

source ~/.bashrc

· Run the Percona install script:

bash install_percona_nodes.sh;

The script guides you through the installation process. To configure a single database node on the same server as Cisco Crosswork Situation Manager, use these settings:

— Configure Percona as "Primary".

— Do not set the server to "DB only".

— Set the first database node IP address to the server IP address.

— When prompted to enter the IP addresses of the second and third nodes, press Enter to skip these settings.

Initialize Cisco Crosswork Situation Manager

When the installation process is complete, initialize Cisco Crosswork Situation Manager as follows:

· Run the initialization script moog_init, replacing <zone name> with your desired RabbitMQ VHOST:

$MOOGSOFT_HOME/bin/utils/moog_init.sh -I <zone_name> -u root

The script prompts you to accept the End User License Agreement (EULA) and guides you through the initialization process.

When asked if you want to change the configuration hostname, say yes and enter the public URL for the server. The public URL is the URL the instance will be connected to through a browser.

Note

When prompted for a password, enter the password for the root database user (not the UNIX system user). If you are installing Percona on this machine for the first time, leave the password blank and press Enter to continue.

The zone_name sets up a virtual host for the Message Bus. If you have multiple systems sharing the same bus, set a different zone name for each.

If you are deploying more than one database, configure HA Proxy to load-balance the database nodes. The following script requires root privileges. Run this script on any host running any Cisco Crosswork Situation Manager components after you have installed the RPMs:

$MOOGSOFT_HOME/bin/utils/haproxy_installer.sh

· Restart Moogfarmd:

service moogfarmd restart

Configure Elasticsearch heap size

The minimum and maximum JVM heap sizes must be large enough to ensure that Elasticsearch starts.

See Finalize and Validate the Upgrade for more information.

Enable the enhanced Content Security Policy (optional)

Cisco has provided an optional enhanced Content Security Policy (CSP) as part of this release. CSP is a security standard introduced to prevent Cross Site Scripting (XSS) and other data injection attacks. For more information, see the Mozilla document on Content Security Policy.

The CSP is controlled by Nginx and is disabled by default. To enable it:

· Edit the following file:

/etc/nginx/conf.d/moog-ui-headers.conf

· Uncomment the line that starts with add_header Content-Security-Policy and save the file.

· Restart Nginx:

service nginx reload

Note

If you enable the enhanced CSP you must follow the steps below to allow access to external domains. If you want to access the UI with the Safari web browser, you must follow the steps below to configure Cisco Crosswork Situation Manager for use with Safari.

Allow access to external domains

If you enable the enhanced CSP, the following features require additional configuration to allow access to external domains:

1. Situation Room plugins to external domains

2. Situation client tools to external URLs

To allow access to required external domains:

· Edit the following file:

/etc/nginx/conf.d/moog-ui-headers.conf

· Add a frame-src directive to the Content-Security-Policy header for the required domain. For example, run the following command to allow Google domains:

sed -i "s/add_header Content-Security-Policy\(.*\)\" always/add_header Content-Security-Policy\1; frame-src 'self' *.google.com\" always/" /etc/nginx/conf.d/moog-ui-headers.conf

· Restart Nginx:

service nginx reload

Note

Cisco Crosswork Situation Manager allows access to Pendo and WalkMe domains by default.

Configure Cisco Crosswork Situation Manager for use with Safari

Due to a known issue in the Safari web browser, you must take additional steps if you've enabled the enhanced CSP and you want to access the UI with Safari:

· Edit the following file:

/etc/nginx/conf.d/moog-ui-headers.conf

· Add the following websocket URLs to the Content-Security-Policy section of the file. Substitute your hostname for <webhost>:

wss://<webhost>/moogpoller/ws

wss://<webhost>/integrations/ws/v1

You can update the configuration using a command similar to the following. Substitute your hostname for <webhost>:

sed -i.bak "s;connect-src 'self' app;connect-src 'self' wss://<webhost>/moogpoller/ws wss://<webhost>/integrations/ws/v1 app;g" /etc/nginx/conf.d/moog-ui-headers.conf

· Restart Nginx:

service nginx reload

Confirm system ulimits

Ensure the 'moogsoft' system user has sufficient limits by running the following command as root:

runuser -l moogsoft -c 'ulimit -n; ulimit -u;'

If either of the values returned are less than 65536, add the following to the /etc/security/limits.conf file as root:

moogsoft soft nofile 65536

moogsoft hard nofile 65536

moogsoft soft nproc 65535

moogsoft hard nproc 65535

Verify the installation

To verify that the installation has completed successfully, follow the steps outlined in Validate the Installation.

Change passwords for default users

When the installation is complete, it is critical that you change the passwords for the default users created during the installation process. See Change passwords for default users for more information.

Install Cisco Add-Ons

Cisco periodically releases add-ons to extend and enhance the core Cisco Crosswork Situation Manager functionality. For example, new Workflow Engine functions, new Workflow Engines, or Integrations tiles. All add-ons releases are cumulative and include the fixes from previous releases.

Once you have finished upgrading or installing Cisco Crosswork Situation Manager, you should install the Cisco Crosswork Situation Manager add-ons to ensure you have the latest version.

See Install Cisco Add-ons for more information on how to install the Cisco Crosswork Situation Manager add-ons.

High Availability Overview

Cisco Crosswork Situation Manager supports high availability (HA) architectures to improve the fault tolerance of Cisco Crosswork Situation Manager. Each component supports a multi-node architecture to enable redundancy, failover, or both to minimize the risk of data loss, for example, in the case of a hardware failure

This topic covers the architectures you can use to achieve HA with Cisco Crosswork Situation Manager. For an example of how to set up a single site HA system, see HA Installation. See HA Reference Architecture for a detailed diagram of the components in a single site HA configuration.

Distributed HA architectures

Cisco Crosswork Situation Manager supports high availability in distributed architectures where different machines host a subset of the stack. You can run one or more of the server roles on its own machine.

See Server Roles for details of the HA architecture server roles in Cisco Crosswork Situation Manager.

If you run more than one server role on a machine, choose a primary role for the server. The primary role dictates which additional roles are supported on the machine as follows:

| Primary Role |

Supported Secondary Roles |

| Core |

UI, Data ingestion and Database |

| UI |

Data ingestion |

| Data Ingestion |

UI |

| Database |

Redundancy |

| Redundancy |

Database |

See Scale Your Cisco Crosswork Situation Manager Implementation for information on how to increase capacity within the HA architecture, you can.

Contact your Cisco technical representative to discuss scaling your deployment.

See Sizing Recommendations for more information on hardware sizes and capacity.

After you decide on the best HA architecture for your environment, you can plan your implementation.

Resilience and failover

Cisco Crosswork Situation Manager provides support for automatic failover between the two nodes within an HA pair. For example from one instance of Moogfarmd to another, or from one instance of a LAM to another. When an active instance in an HA pair fails, Cisco Crosswork Situation Manager persists any affected messages. The passive instance of the HA pair automatically takes over, and processes those messages without any interruption or loss of data. See Message Persistence for more information.Configure the Message Bus

There is no automatic failover between multiple HA pairs. For example, there is no failover from a primary site to a second site, such as a disaster recovery replica.

Cisco Crosswork Situation Manager does not support automated fail-back for any architecture. For example, consider an HA pair of Moogfarmd instances. When the instance of Moogfarmd in cluster 1 becomes unavailable, the instance in cluster 2 enters an active state. When the instance from cluster 1 recovers and becomes available, the instance in cluster 2 remains active.

High Availability Configuration Hierarchy

Cisco Crosswork Situation Manager deployments use a tiered hierarchy of clusters, groups, instances and roles to achieve High Availability.

A cluster is a collection of Cisco Crosswork Situation Manager components that can deliver an uninterrupted processing workflow. To achieve HA you need at least two clusters that include all the Cisco Crosswork Situation Manager components. You need an additional, third machine, for message queue and search components.

A group comprises a single component or two identical components that provide resilience over two or more clusters. Cisco Crosswork Situation Manager automatically controls the active or passive behaviour and failover of the instances within a group.

An example of a group is a Socket LAM configured for the same source in two separate clusters. Other groups include the following;

· Servlets for the UI.

· Moogfarmd for data processing.

· Individual LAMs for data ingestion. For example the REST LAM.

An instance is an individual component running within a group. Each instance in a group provides resilience for the other instance. For example the primary instance of a Socket LAM pairs with a secondary instance in the second cluster to make a group.

A role within a Cisco Crosswork Situation Manager installation is a functional entity containing components that must be installed on the same machine. You can distribute different roles to different machines. For example, the Core role.

HA Reference Architecture

The diagram in this topic represents a Cisco Crosswork Situation Manager High Availability deployment to a single site: one datacenter, LAN, or availability zone. To support this architecture, all servers must have sufficient connection speed amongst themselves so that latency between hosts does not exceed 5 ms.

A) Load balancers / VIPs

All Cisco Crosswork Situation Manager components have their own HA mechanism that provides failover capabilities , but it is also a best practice to use a load balancer or load balancers. You can use either software or hardware load balancers with the following requirements and recommendations:

· Load balancers must use TCP.

· You must implement health checks using your preferred approach to remove unhealthy servers from the cluster.

· The load balancer should provide load balancing capabilities and a VIP for each server role. For example: one UI VIP per site, one LAM VIP per site.

· Sticky sessions are recommended.

· You can choose your preferred load balancing approach. For example, round robin or least-connection.

B) User interface

The Cisco Crosswork Situation Manager UI comprises the following components:

1. Nginx: The web server that provides static UI content and acts as a proxy for the application server. For HA deployments, install a minimum of two Nginx instances on separate servers and optionally cluster the Nginx instances.

2. Apache Tomcat: The web server that provides servlet and API support. For HA deployments, install a minimum of two Tomcat instances on separate servers and optionally cluster the instances.

The UI components run in active/active configuration, so configure servlet instances to run in separate groups.

Required Ports: 80, 443

C) Database

Cisco Crosswork Situation Manager uses Percona XtraDB as the system database. HA requires a minimum of three server nodes configured in each cluster with latency between them not exceeding 5 ms.

Required Ports: 3306

D) Search and indexing

Cisco Crosswork Situation Manager uses Elasticsearch to store active alert and Situation data to provide search functionality within the product. For HA deployments install a cluster of a minimum of three data servers with one active master server.

Required Ports: 9200, 9300

E) Core data processing

Moogfarmd is the core data processing application that controls all other services in Cisco Crosswork Situation Manager. It manages the clustering algorithms and other applets (Moolets) that run as part of the system. For HA deployments, install a minimum of two Moogfarmd services on separate servers. Moogfarmd can only run as a two-instance group in an active/passive mode.

Required Ports: 5701, 8901 for Hazelcast: the in-memory data grid that provides fault tolerance.

F) Message Bus

Cisco Crosswork Situation Manager uses RabbitMQ as the system Message Bus. It requires a minimum of three servers for HA. RabbitMQ relies on its native clustering functionality and mirrored queues to handle failover; it does not use the Cisco Crosswork Situation Manager load balancing feature.

Required Ports: 5672, 4369, 15672, 25672

G) Data ingestion

Cisco Crosswork Situation Manager uses the following types of Link Access Modules (LAMs) to ingest data:

· Polling LAMs that periodically connect to a data source using an integration API to collect event data.

· Receiving LAMs that provide an endpoint for data sources to post event data.

For HA deployments:

1. Install two instances of each LAM. When both instances are in the same group, they run in active/passive mode.

2. For LAMs deployed over an unreliable link such as a WAN, or across data centers, you should deploy a caching LAM strategy that includes a database and message queue on the LAM Servers.

3. You can load balance receiving LAMs and configure them as active/active to increase capacity.

HA Architecture for LAMs

The Cisco Crosswork Situation Manager HA architecture provides increased resilience against LAM and server restarts by caching ingested data to the disk. It requires installing a local RabbitMQ cluster which is used by LAMs for publishing.

A remote caching LAM, located next to the Core role, connects to the local RabbitMQ cluster, picks the events from the queue and publishes them to the central RabbitMQ cluster for Moogfarmd to process.

If no caching LAM is available to consume the events from the local RabbitMQ cluster, the data is cached to disk until the server runs out of memory.

HA architecture

This architecture is recommended for hybrid installations, where the core processing is located in the cloud and LAMs are on-premise, or for a full on-premise configuration where LAMs are housed remotely to the core components.

Polling LAMs run in an active / passive mode and must connect to a local database in order to negotiate their state. This requires a local MySQL instance that runs with master / master replication.

Installation steps

If you are installing the LAMs in a non-SaaS version of Cisco Crosswork Situation Manager, see Install LAMs (non-SaaS).

If you are installing the LAMs in a SaaS version of Cisco Crosswork Situation Manager, see Install LAMs (SaaS).

High Availability for Third Party Component Dependencies

You can configure Cisco Crosswork Situation Manager dependencies such as Percona XTraDB Cluster, Elasticsearch, RabbitMQ, and Grafana to work effectively in highly available deployments.

See High Availability for details on high availability deployments of Cisco Crosswork Situation Manager and deployment scenarios.

Configure Percona XtraDB Cluster for HA

For an example Percona XtraDB Cluster configuration, see Set Up the Database for HA. For further information, refer to the documentation about Percona XtraDB Cluster.

Configure RabbitMQ for HA

You can improve the performance and reliability of your Cisco Crosswork Situation Manager deployment by:

1. Distributing your RabbitMQ brokers on different hosts.

2. Clustering your multiple RabbitMQ brokers.

3. Mirroring your message queues across multiple nodes.

See Set Up the Core Role for HA and Set Up the Redundancy Server Role for an example configuration. For more information see See Message System Deployment. Refer to the RabbitMQ documentation on Clustering and Mirrored Queues for more information.Message System Deployment

Configure Elasticsearch for HA

There are different ways to configure Elasticsearch for distributed installations. See Set Up the Core Role for HA and Set Up the Redundancy Server Role for an example configuration.

Refer to the Elasticsearch documentation on Clustering for more details.

Configure Grafana for HA

To set up Grafana for distributed installations, you should configure each Grafana instance to connect to a Cisco Crosswork Situation Manager UI load balancer such as HA Proxy rather than the Cisco Crosswork Situation Manager UI stack.

Alternatively you can point it at the Apache Tomcat server or Nginx server. Refer to the Grafana documention on Setting Up Grafana for High Availability.

HA Control Utility Command Reference

The Cisco Crosswork Situation Manager HA Control Utility ha_cntl is a command line utility to:

1. Control instance, process group, or cluster failover. For example, to switch from passive to active mode.

2. View the current status of all clusters, process groups, and instances. See High Availability Configuration Hierarchy for more information.

Normally you should configure groups in HA to use automatic failover in production. Use the HA Control utility to check the status of the HA system or to initiate failover in non-production scenarios.

Usage

ha_cntl [ --activate cluster[.group[.instance]] | --deactivate cluster[.group[.instance]] | --diagnostics cluster[.group[.instance]] [ --assumeyes ] | --view ] [ --loglevel (INFO|WARN|ALL) ] [ --time_out <seconds> ] | --help

| Argument |

Input |

Description |

| -a, --activate |

String <cluster[.group[.instance_name]]> |

Activate all groups within a cluster, a specific group within a cluster, or a single instance. |

| -d, --deactivate |

String <cluster[.group[.instance_name]]> |

Deactivate all groups within a cluster, a specific group within a cluster or a single instance. |

| -i, --diagnostics |

String <arg> |

Print additional diagnostics where available to process log file. |

| -l,--loglevel |

String, one of INFO | WARN | ALL |

Log level controlling the amount of information logged by the utility. |

| -t,--time_out |

String <number of seconds> |

Amount of time in seconds to wait for the last answer. Defaults to 2. |

| -v,--view |

- |

View the current status of all instances, process groups, and clusters. |

| -y,--assumeyes |

- |

Answer "yes" for all prompts. |

Example

$MOOGSOFT_HOME/bin/ha_cntl -v

Getting system status

Cluster: [SECONDARY] passive

Process Group: [UI] Passive (no leader - all can be active)

Instance: [servlets] Passive

Component: moogpoller - not running

Component: moogsvr - not running

Component: toolrunner - not running

Process Group: [moog_farmd] Passive (only leader should be active)

Instance: FARM Passive Leader

Moolet: AlertBuilder - not running (will run on activation)

Moolet: AlertRulesEngine - not running (will run on activation)

Moolet: Cookbook - not running (will run on activation)

Moolet: Speedbird - not running (will run on activation)

Moolet: TemplateMatcher - not running

Process Group: [rest_lam] Passive (no leader - all can be active)

Instance: REST2 Passive

Process Group: [socket_lam] Passive (only leader should be active)

Instance: SOCK2 Passive Leader

Cluster: [PRIMARY] active

Process Group: [UI] Active (no leader - all can be active)

Instance: [servlets] Active

Component: moogpoller - running

Component: moogsvr - running

Component: toolrunner - running

Process Group: [moog_farmd] Active (only leader should be active)

Instance: FARM Active Leader

Moolet: AlertBuilder - running

Moolet: AlertRulesEngine - running

Moolet: Cookbook - running

Moolet: Default Cookbook - running

Moolet: Speedbird - running

Moolet: TemplateMatcher - not running

Process Group: [rest_lam] Active (no leader - all can be active)

Instance: REST1 Active

Process Group: [socket_lam] Active (only leader should be active)

Instance: SOCK1 Active Leader

HA Installation

This topic summarises the different types of high availability (HA) installation available. There are three type of HA installation:

1. Basic.

2. Minimally distributed.

3. Fully distributed.

Before you begin

Before you start an HA installation:

1. Read the High Availability Overview section to familiarise yourself with HA concepts.

2. Complete the appropriate Prepare to Install Cisco Crosswork Situation Manager.

Basic HA installation

This installation configuration has three servers on a single machine; two for the primary and secondary clusters, and a redundancy server.

You can do a basic HA installation using either RPM or tarball.

See Basic HA (RPM) Install and Basic HA Installation - Tarball for more information.

Minimally distributed HA installation

For a minimally distributed HA installation, follow the fully distributed installation steps.

The instructions list the steps for a specific role installation. If you need to collocate multiple roles on the same server according to a minimally distributed installation of your choice, you may need to run multiple sets of instructions on the same server for the corresponding collocated roles. There might be an overlap in terms of steps and if this is the case you only need to perform those steps once. For instance, if you collocate Core 1 and UI 1 roles, you only need to configure HA Proxy once.

Fully distributed HA installation

This installation splits the different roles across different servers or virtual machines. To perform a fully distributed HA install:

· Set up Percona XtraDB Cluster. See Set Up the Database for HA for more information.

· Set up Core 1 and 2 roles. See Set Up the Core Role for HA for more information.

· Set up HAProxy on the Core, UI and LAM nodes. See Set Up HA Proxy for the Database Role for more information.

· Set up UI 1 and 2 roles. See Set Up the User Interface Role for HA for more information.

· Set up the Redundancy server role. See Set Up the Redundancy Server Role for more information.

· Set up the LAM 1 and 2 roles for an on-premise version of Cisco Crosswork Situation Manager. See Install LAMs (on-premise) for more information.

Set up the LAM 1 and 2 roles for a SaaS version of Cisco Crosswork Situation Manager. See Install LAMs (SaaS) for more information.

Install Cisco Add-Ons

Cisco periodically releases add-ons to extend and enhance the core Cisco Crosswork Situation Manager functionality. For example, new Workflow Engine functions, new Workflow Engines, or Integrations tiles. All add-ons releases are cumulative and include the fixes from previous releases.

Once you have finished upgrading or installing Cisco Crosswork Situation Manager, you should install the Cisco Crosswork Situation Manager add-ons to ensure you have the latest version.

See Install Cisco Add-ons for more information on how to install the Cisco Crosswork Situation Manager add-ons.

Basic HA Installation

The basic HA installation configuration has three servers on a single machine; two for the primary and secondary clusters, and a redundancy server.

You can do a basic HA install using either RPM or tarball.

Before you start, you must complete the appropriate Prepare to Install Cisco Crosswork Situation Manager process.

See Basic HA Installation - Tarball and Basic HA (RPM) Install for instructions on how to complete the basic HA install.

Basic HA Installation - RPM

This topic describes the basic High Availability (HA) installation for Cisco Crosswork Situation Manager using RPM. This installation configuration has three servers; two for the primary and secondary clusters, and a redundancy server. A three-server installation is good for user acceptance testing (UAT) or pre-production. For production installations Ciscorecommends five, seven, or nine servers.

This topic describes how to perform the following tasks for the core Cisco Crosswork Situation Manager components:

1. Install the Cisco Crosswork Situation Manager packages and set the environment variables.

2. Set up the Percona XtraDB database and HA Proxy.

3. Configure the RabbitMQ message broker and Elasticsearch search service.

4. Configure high availability for the Cisco Crosswork Situation Manager core processing components.

5. Initialize the user interface (UI).

6. Configure high availability for data ingestion.

Before you begin

Before you start to configure your highly available deployment of Cisco Crosswork Situation Manager:

1. Familiarize yourself with the single-server deployment process: Install Cisco Crosswork Situation Manager and Upgrade Cisco Crosswork Situation Manager.

2. Read the High Availability Overview and review the HA Reference Architecture.

3. Verify that the hosts can access the required ports on the other hosts in the group. See HA Reference Architecture for more information.

4. Verify that you have root access to all three servers. You must perform this installation as the root user.

5. Complete either the Online RPM pre-installation or Cisco Crosswork Situation Manager - Offline RPM pre-installation instructions.

Prepare to install Cisco Crosswork Situation Manager

Before you install the Cisco Crosswork Situation Manager packages, perform the pre-installation tasks on all three servers.

Install Cisco Crosswork Situation Manager packages

Install the Cisco Crosswork Situation Manager packages on all three servers. Make sure you install the version you want by changing the VERSION number (8.0.0.1 in the following example):

Primary, Secondary and Redundancy servers:

VERSION=8.0.0.1; yum -y install moogsoft-server-${VERSION} \

moogsoft-db-${VERSION} \

moogsoft-ccsm-${VERSION} \

moogsoft-utils-${VERSION} \

moogsoft-search-${VERSION} \

moogsoft-ui-${VERSION} \

moogsoft-common-${VERSION} \

moogsoft-mooms-${VERSION} \

moogsoft-integrations-${VERSION} \

moogsoft-integrations-ui-${VERSION}

Edit the ~/.bashrc file to contain the following lines:

export MOOGSOFT_HOME=/usr/share/moogsoft

export APPSERVER_HOME=/usr/share/apache-tomcat

export JAVA_HOME=/usr/java/latest

export PATH=$PATH:$MOOGSOFT_HOME/bin:$MOOGSOFT_HOME/bin/utils

Source the .bashrc file:

source ~/.bashrc

Initialize the database

Install the Percona nodes and initialize the database on the primary server. Substitute the IP addresses of your servers and choose the password for the sstuser. Press <Enter> at the password prompt during initialization.

Primary server:

bash install_percona_nodes.sh -p -i <PRIMARY_IP>,<SECONDARY_IP>,<REDUNDANCY_IP> -u sstuser -w <SSTPASSWORD>

moog_init_db.sh -qIu root

Install the Percona nodes on the secondary and redundancy servers. Substitute the IP addresses of your servers and use the same sstuser password as the primary server. Do not initialize the database on these servers.

Secondary and Redundancy servers:

bash install_percona_nodes.sh -i <PRIMARY_IP>,<SECONDARY_IP>,<REDUNDANCY_IP> -u sstuser -w <SSTPASSWORD>

To verify that the Percona initialization was successful, run the following command on all three servers. Substitute the IP address of your primary server:

curl http://<PRIMARY_IP>:9198

If successful, you see the following message:

Percona XtraDB Cluster Node is synced

Set up HA Proxy

Install HA Proxy on the primary and secondary servers. Substitute the IP addresses of your servers.

Primary and Secondary servers:

$MOOGSOFT_HOME/bin/utils/haproxy_installer.sh -l 3309 -c -i <PRIMARY_IP>:3306,<SECONDARY_IP>:3306,<REDUNDANCY_IP>:3306

Run the following script to confirm successful installation:

$MOOGSOFT_HOME/bin/utils/check_haproxy_connections.sh

If successful, you see a script output similar to the following example:

HAProxy Connection Counts

Frontend:

0.0.0.0:3309 : 27

Backend:

mysql_node_1 172.31.82.211:3306 : 27

mysql_node_2 172.31.82.133:3306 : 0

mysql_node_3 172.31.85.42:3306 : 0

Set up RabbitMQ

Initialize and configure RabbitMQ on all three servers.

Primary, Secondary and Redundancy servers:

Substitute a name for your zone.

moog_init_mooms.sh -pz <MY_ZONE>

The primary erlang cookie is located at /var/lib/rabbitmq/.erlang.cookie. The erlang cookie must be the same for all RabbitMQ nodes. Replace the erlang cookie on the secondary and redundancy servers with the erlang cookie from the primary server. Make the cookies on the secondary and redundancy servers read-only:

chmod 400 /var/lib/rabbitmq/.erlang.cookie

You may need to change the file permissions on the secondary and redundancy erlang cookies first to allow those files to be overwritten. For example:

chmod 406 /var/lib/rabbitmq/.erlang.cookie

Restart RabbitMQ on the secondary and redundancy servers and join the cluster. Substitute the short hostname of your primary server and the name of your zone.

The short hostname is the full hostname excluding the DNS domain name. For example, if the hostname is ip-172-31-82-78.ec2.internal, the short hostname is ip-172-31-82-78. To find out the short hostname, run rabbitmqctl cluster_status on the primary server.

Secondary and Redundancy servers:

systemctl restart rabbitmq-server

rabbitmqctl stop_app

rabbitmqctl join_cluster rabbit@<PRIMARY_SHORT_HOSTNAME>

rabbitmqctl start_app

rabbitmqctl set_policy -p <MY_ZONE> ha-all ".+\.HA" '{"ha-mode":"all"}'

Run rabbitmqctl cluster_status to get the cluster status. Example output is as follows:

Cluster status of node rabbit@ip-172-31-93-201 ...

[{nodes,[{disc,['rabbit@ip-172-31-82-211','rabbit@ip-172-31-85-42','rabbit@ip-172-31-93-201']}]},

{running_nodes,['rabbit@ip-172-31-85-42','rabbit@ip-172-31-82-211','rabbit@ip-172-31-93-201']},

{cluster_name,<<"rabbit@ip-172-31-93-201.ec2.internal">>},

{partitions,[]},

{alarms,[{'rabbit@ip-172-31-85-42',[]},{'rabbit@ip-172-31-82-211',[]},{'rabbit@ip-172-31-93-201',[]}]}]

Set up Elasticsearch

Initialize and configure Elasticsearch on all three servers.

Primary, Secondary and Redundancy servers:

moog_init_search.sh

Uncomment and edit the properties in the Elasticsearch YAML file /etc/elasticsearch/elasticsearch.yml on all three servers as follows:

cluster.name: aiops

node.name: <SERVER_HOSTNAME>

...

network.host: 0.0.0.0

http.port: 9200

discovery.zen.ping.unicast.hosts: ["<PRIMARY_HOSTNAME>","<SECONDARY_HOSTNAME>","<REDUNDANCY_HOSTNAME>"]

discovery.zen.minimum_master_nodes: 1

gateway.recover_after_nodes: 1

node.master: true

Restart Elasticsearch:

systemctl restart elasticsearch

Get the health status of the cluster.

Primary server:

curl -X GET "localhost:9200/_cat/health?v&pretty"

Example cluster health status:

epoch timestamp cluster status

node.total node.data shards pri relo init

unassign pending_tasks

max_task_wait_time

active_shards_percent

1580490422 17:07:02 aiops green 3 3 0 0 0 0 0 0 - 100.0%

The minimum and maximum JVM heap sizes must be large enough to ensure that Elasticsearch starts.

See Finalize and Validate the Install for more information.

Elasticsearch Encryption

You can enable password authentication on Elasticsearch by editing the $MOOGSOFT_HOME/config/system.conf configuration file. You can use either an unencrypted password or an encrypted password, but you cannot use both.

You should use an encrypted password in the configuration file if you do not want users with configuration access to be able to access integrated systems.

Enable password authentication

To enable unencrypted password authentication on Elasticsearch, set the following properties in the system.conf file:

"search":

{

...

“username” : <username>,

“password” : <password>,

...

}

To enable encrypted password authentication on Elasticsearch, set the following properties in the system.conf file:

"search":

{

...

“username” : <username>,

“encrypted_password” : <encrypted password>

...

}

Initialize Elasticsearch

To initialize Elasticsearch with password authentication, run:

moog_init_search.sh -a username:password

or:

moog_init_search.sh --auth username:password

If you run moog_init_search without the -a/--auth parameters, you will not enable password authentication in Elasticsearch.

See Moog Encryptor for more information on how to encrypt passwords stored in the system.conf file.

You can also manually add authentication to the Elasticsearch configuration. You should do this if you have your own local Elasticsearch installation. See the Elasticsearch documentation on configuring security for more information.

Configure Cisco Crosswork Situation Manager

Configure Cisco Crosswork Situation Manager by editing the Moogfarmd and system configuration files.

Primary and Secondary servers:

Edit $MOOGSOFT_HOME/config/system.conf and set the following properties. Substitute the name of your RabbitMQ zone, the server hostnames, and the cluster names.

"mooms" :

{

...

"zone" : "<MY_ZONE>",

"brokers" : [

{"host" : "<PRIMARY_HOSTNAME>", "port" : 5672},

{"host" : "<SECONDARY_HOSTNAME>", "port" : 5672},

{"host" : "<REDUNDANCY_HOSTNAME>", "port" : 5672}

],

...

"cache_on_failure" : true,

...

"search" :

{

...

"nodes" : [

{"host" : "<PRIMARY_HOSTNAME>", "port" : 9200},

{"host" : "<SECONDARY_HOSTNAME>", "port" : 9200},

{"host" : "<REDUNDANCY_HOSTNAME>", "port" : 9200}

]

...

"failover" :

{

"persist_state" : true,

"hazelcast" :

{

"hosts" : ["<PRIMARY_HOSTNAME>","<SECONDARY_HOSTNAME>"],

"cluster_per_group" : true

}

"automatic_failover" : true,

}

...

"ha":

{ "cluster": "<CLUSTER_NAME, PRIMARY or SECONDARY>" }

Uncomment and edit the following properties in $MOOGSOFT_HOME/config/moog_farmd.conf. Note the importance of the initial comma. Delete the cluster line in this section of the file.

Primary server

,

ha:

{

group: "moog_farmd",

instance: "moog_farmd",

default_leader: true,

start_as_passive: false

}

Secondary server

,

ha:

{

group: "moog_farmd",

instance: "moog_farmd",

default_leader: false,

start_as_passive: false

}

Start Moogfarmd on the primary and secondary servers:

systemctl start moogfarmd

After starting Moogfarmd on the primary and secondary servers, run the HA Control command line utility ha_cntl -v to check the status of Moogfarmd. Example output is as follows:

Moogsoft AIOps Version 8.0.0.1

(C) Copyright 2012-2020 Moogsoft, Inc.

All rights reserved.

Executing: ha_cntl

Getting system status

Cluster: [PRIMARY] active

Process Group: [moog_farmd] Active (only leader should be active)

Instance: [primary] Active Leader

Component: Alert Workflows - running

Component: AlertBuilder - running

Component: AlertMgr - not running

Component: AlertRulesEngine - not running

Component: Default Cookbook - running

Component: Enricher - not running

Component: Enrichment Workflows - running

Component: Event Workflows - running

Component: Feedback - not running

Component: Housekeeper - running

Component: Indexer - running

Component: MaintenanceWindowManager - running

Component: Notifier - not running

Component: Scheduler - not running

Component: Situation Workflows - running

Component: SituationMgr - running

Component: SituationRootCause - running

Component: TeamsMgr - running

Cluster: [SECONDARY] partially active

Process Group: [moog_farmd] Passive (only leader should be active)

Instance: [secondary] Passive Leader

Component: Alert Workflows - not running (will run on activation)

Component: AlertBuilder - not running (will run on activation)

Component: AlertMgr - not running

Component: AlertRulesEngine - not running

Component: Enricher - not running

Component: Enrichment Workflows - not running (will run on activation)

Component: Event Workflows - not running (will run on activation)

Component: Feedback - not running

Component: Housekeeper - not running (will run on activation)

Component: Indexer - not running (will run on activation)

Component: MaintenanceWindowManager - not running (will run on activation)

Component: Notifier - not running

Component: Scheduler - not running

Component: Situation Workflows - not running (will run on activation)