Cisco Nexus 1000V System Management Configuration Guide, Release 4.2(1)SV1(5.1)

Updated: April 7, 2013

Chapter: Configuring iSCSI Multipath

Configuring iSCSI Multipath

This chapter describes how to configure iSCSI multipath for multiple routes between a server and its storage devices and includes the following topics:

- Information About iSCSI Multipath
- Verifying the iSCSI Multipath Configuration
- Managing Storage Loss Detection
- Feature History for iSCSI Multipath

Information About iSCSI Multipath

This section includes the following topics:

- iSCSI Multipath Setup on the VMware Switch

Overview

The iSCSI multipath feature sets up multiple routes between a server and its storage devices for maintaining a constant connection and balancing the traffic load. The multipathing software handles all input and output requests and passes them through on the best possible path. Traffic from host servers is transported to shared storage using the iSCSI protocol that packages SCSI commands into iSCSI packets and transmits them on the Ethernet network.

iSCSI multipath provides path failover. In the event a path or any of its components fails, the server selects another available path. In addition to path failover, multipathing reduces or removes potential bottlenecks by distributing storage loads across multiple physical paths.

The Cisco Nexus 1000V DVS performs iSCSI multipathing regardless of the iSCSI target. The iSCSI daemon on an ESX server communicates with the iSCSI target in multiple sessions using two or more VMkernel NICs on the host and pinning them to physical NICs on the Cisco Nexus 1000V. Uplink pinning is the only function of multipathing provided by the Cisco Nexus 1000V. Other multipathing functions such as storage binding, path selection, and path failover are provided by VMware code running in the VMkernel.

Setting up iSCSI multipath is accomplished in the following steps:

1. Uplink Pinning

   Each VMkernel port created for iSCSI access is pinned to one physical NIC. This overrides any NIC teaming policy or port bundling policy. All traffic from the VMkernel port uses only the pinned uplink to reach the upstream switch.

2. Storage Binding

   Each VMkernel port is bound to the VMware iSCSI host bus adapter (VMHBA) associated with the physical NIC to which the VMkernel port is pinned.

   The ESX or ESXi host creates the following VMHBAs for the physical NICs:

   - In software iSCSI, only one VMHBA is created for all physical NICs.
   - In hardware iSCSI, one VMHBA is created for each physical NIC that supports iSCSI offload in hardware.

For detailed information about how to use VMware ESX and VMware ESXi systems with an iSCSI storage area network (SAN), see the iSCSI SAN Configuration Guide.

Supported iSCSI Adapters

This section lists the available VMware iSCSI host bus adapters (VMHBAs) and indicates those supported by the Cisco Nexus 1000V.

iSCSI Multipath Setup on the VMware Switch

Before enabling or configuring multipathing, networking must be configured for the software or hardware iSCSI adapter. This involves creating a VMkernel iSCSI port for the traffic between the iSCSI adapter and the physical NIC.

On the vSwitch, uplink pinning is done manually by the administrator directly on the vSphere client.

Storage binding is also done manually by the administrator, either directly on the ESX host or using RCLI.

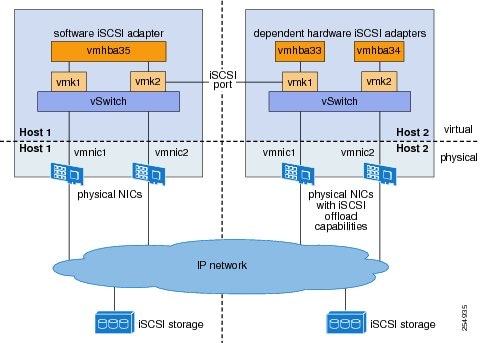

For software iSCSI, only one VMHBA is required for the entire implementation. All VMkernel ports are bound to this adapter. For example, in Figure 13-1, both vmk1 and vmk2 are bound to VMHBA35.

For hardware iSCSI, a separate adapter is required for each NIC. Each VMkernel port is bound to the adapter of the physical NIC to which it is pinned. For example, in Figure 13-1, vmk1 is bound to VMHBA33, the iSCSI adapter associated with vmnic1, to which vmk1 is pinned. Similarly, vmk2 is bound to VMHBA34.

Figure 13-1 iSCSI Multipath on VMware Virtual Switch

The following are the adapters and NICs used in the hardware and software iSCSI multipathing configuration shown in Figure 13-1.

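| iSCSI adapter (VMHBA) | VMkernel NIC | VM NIC |
|---|---|---|
| VMHBA35 (software iSCSI) | 1 | 1 |
| VMHBA35 (software iSCSI) | 2 | 2 |
| VMHBA33 (hardware iSCSI) | 1 | 1 |
| VMHBA34 (hardware iSCSI) | 2 | 2 |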
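The binding rule illustrated in Figure 13-1 can be written as a small lookup. The following Python sketch is illustrative only: the function name and dictionary layout are assumptions made for this example, while the adapter, VMkernel NIC, and physical NIC names are the ones used in the figure.

```python
def vmhba_for(vmk, pinning, mode, software_vmhba=None, hardware_vmhbas=None):
    """Return the VMHBA that a VMkernel NIC should be bound to.

    pinning maps each VMkernel NIC to the physical NIC it is pinned to.
    Software iSCSI: every VMkernel NIC shares the single software VMHBA.
    Hardware iSCSI: the VMHBA is the one associated with the pinned NIC.
    """
    if mode == "software":
        return software_vmhba
    if mode == "hardware":
        return hardware_vmhbas[pinning[vmk]]
    raise ValueError(f"unknown iSCSI mode: {mode!r}")


# Values taken from Figure 13-1.
pinning = {"vmk1": "vmnic1", "vmk2": "vmnic2"}
hardware_vmhbas = {"vmnic1": "VMHBA33", "vmnic2": "VMHBA34"}

software = {
    vmk: vmhba_for(vmk, pinning, "software", software_vmhba="VMHBA35")
    for vmk in pinning
}
hardware = {
    vmk: vmhba_for(vmk, pinning, "hardware", hardware_vmhbas=hardware_vmhbas)
    for vmk in pinning
}
```

With software iSCSI both VMkernel NICs resolve to the same adapter; with hardware iSCSI each one follows its pinned physical NIC.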

Guidelines and Limitations

The following are guidelines and limitations for the iSCSI multipath feature.

- Only port profiles of type vEthernet can be configured with capability iscsi-multipath.
- The port profile used for iSCSI multipath must be an access port profile, not a trunk port profile.
- The following are not allowed on a port profile configured with capability iscsi-multipath:
  - Also configuring the port profile with capability l3 control.
  - A system VLAN change when the port profile is inherited by a VMkernel NIC.
  - An access VLAN change when the port profile is inherited by a VMkernel NIC.
  - A port mode change to trunk mode.
- Only VMkernel NIC ports can inherit a port profile configured with capability iscsi-multipath.
- The Cisco Nexus 1000V imposes the following limitations if you try to override its automatic uplink pinning:
  - A VMkernel port can be pinned to only one physical NIC.
  - Multiple VMkernel ports can be pinned to a software physical NIC.
  - Only one VMkernel port can be pinned to a hardware physical NIC.

- The iSCSI initiators and storage must already be operational.
- ESX 4.0 Update 1 or later supports only software iSCSI multipathing.
- ESX 4.1 or later supports both software and hardware iSCSI multipathing.
- VMkernel ports must be created before enabling or configuring the software or hardware iSCSI for multipathing.
- VMkernel networking must be functioning for the iSCSI traffic.
- Before removing from the DVS an uplink to which an active VMkernel NIC is pinned, you must first remove the binding between the VMkernel NIC and its VMHBA. The following system message displays as a warning:

  vsm# 2010 Nov 10 02:22:12 sekrishn-bl-vsm %VEM_MGR-SLOT8-1-VEM_SYSLOG_ALERT: sfport : Removing Uplink Port Eth8/3 (ltl 19), when vmknic lveth8/1 (ltl 49) is pinned to this port for iSCSI Multipathing

- Hardware iSCSI is new in Cisco Nexus 1000V Release 4.2(1)SV1(5.1). If you configured software iSCSI multipathing in a previous release, the following are preserved after upgrade:
  - multipathing
  - software iSCSI uplink pinning
  - VMHBA adapter bindings
  - host access to iSCSI storage

  To leverage the hardware offload capable NICs on ESX 4.1, use the "Converting to a Hardware iSCSI Configuration" procedure.
- An iSCSI target and initiator should be in the same subnet.
- iSCSI multipathing on the Nexus 1000V currently only allows a single vmknic to be pinned to one vmnic.
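Some of the port profile guidelines above lend themselves to a simple pre-check. The following Python sketch is an illustration under stated assumptions: the `PortProfile` class and the `iscsi_multipath_violations` function are hypothetical names invented for this example and do not correspond to any Cisco Nexus 1000V API. It covers only the static rules (profile type, port mode, and the l3 control capability).

```python
from dataclasses import dataclass, field


@dataclass
class PortProfile:
    # Hypothetical, simplified view of a port profile (not an NX-OS structure).
    name: str
    profile_type: str                      # "vethernet" or "ethernet"
    mode: str                              # "access" or "trunk"
    capabilities: set = field(default_factory=set)


def iscsi_multipath_violations(profile):
    """Return the guideline violations for an iscsi-multipath port profile."""
    problems = []
    if "iscsi-multipath" not in profile.capabilities:
        return problems  # the guidelines apply only to iscsi-multipath profiles
    if profile.profile_type != "vethernet":
        problems.append("profile must be of type vEthernet")
    if profile.mode != "access":
        problems.append("profile must be an access port profile, not trunk")
    if "l3 control" in profile.capabilities:
        problems.append("capability l3 control cannot be combined with iscsi-multipath")
    return problems


good = PortProfile("iscsi-profile", "vethernet", "access", {"iscsi-multipath"})
bad = PortProfile("iscsi-trunk", "ethernet", "trunk", {"iscsi-multipath", "l3 control"})
```

Calling `iscsi_multipath_violations(bad)` returns one message per broken guideline, which makes it easy to review a planned profile before configuring it.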

Prerequisites

The iSCSI Multipath feature has the following prerequisites.

- You must understand VMware iSCSI SAN storage virtualization. For detailed information about how to use VMware ESX and VMware ESXi systems with an iSCSI storage area network (SAN), see the iSCSI SAN Configuration Guide.
- You must know how to set up the iSCSI Initiator on your VMware ESX/ESXi host.
- The host is already functioning with one of the following:
  - VMware ESX 4.0.1 Update 01 for software iSCSI
  - VMware ESX 4.1 or later for software and hardware iSCSI
- You must understand iSCSI multipathing and path failover.
- VMware kernel NICs configured to access the SAN external storage are required.

Default Settings

Table 13-1 lists the default settings in the iSCSI Multipath configuration.

Configuring iSCSI Multipath

Use the following procedures to configure iSCSI Multipath.

- "Uplink Pinning and Storage Binding" procedure
- "Converting to a Hardware iSCSI Configuration" procedure
- "Changing the VMkernel NIC Access VLAN" procedure

Uplink Pinning and Storage Binding

Use this section to configure iSCSI multipathing between hosts and targets over the iSCSI protocol by assigning the vEthernet interface to an iSCSI multipath port profile configured with a system VLAN.

Process for Uplink Pinning and Storage Binding

Step 1 Complete the "Creating a Port Profile for a VMkernel NIC" procedure.

Step 2 Complete the "Creating VMkernel NICs and Attaching the Port Profile" procedure.

Step 3 Do one of the following:

- If you want to override the automatic pinning of NICs, go to the "Manually Pinning the NICs" procedure.
- If not, continue with storage binding.

You have completed uplink pinning. Continue with the next step for storage binding.

Step 4 Complete the "Identifying the iSCSI Adapters for the Physical NICs" procedure.

Step 5 Complete the "Binding the VMkernel NICs to the iSCSI Adapter" procedure.

Step 6 Complete the "Verifying the iSCSI Multipath Configuration" procedure.

Creating a Port Profile for a VMkernel NIC

You can use this procedure to create a port profile for a VMkernel NIC.

BEFORE YOU BEGIN

Before starting this procedure, you must know or do the following.

- You have already configured the host with one port channel that includes two or more physical NICs.
- Multipathing must be configured on the interface by using this procedure to create an iSCSI multipath port profile and then assigning the interface to it.
- You are logged in to the CLI in EXEC mode.
- You know the VLAN ID for the VLAN you are adding to this iSCSI multipath port profile.
  - The VLAN must already be created on the Cisco Nexus 1000V.
  - The VLAN that you assign to this iSCSI multipath port profile must be a system VLAN.
  - One of the uplink ports must already have this VLAN in its system VLAN range.
- The port profile must be an access port profile. It cannot be a trunk port profile. This procedure includes steps to configure the port profile as an access port profile.

SUMMARY STEPS

1. config t
2. port-profile type vethernet name
3. vmware port-group [name]
4. switchport mode access
5. switchport access vlan vlanID
6. no shutdown
7. (Optional) system vlan vlanID
8. capability iscsi-multipath
9. state enabled
10. (Optional) show port-profile name
11. (Optional) copy running-config startup-config
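The summary steps can be assembled into an ordered command list for review before they are entered on the VSM. The following Python sketch is a convenience illustration only: the function name, its parameters, and the example profile name and VLAN ID are assumptions, and the command strings are copied from the summary steps. The system VLAN line is emitted by default because the prerequisites state that the VLAN must be a system VLAN.

```python
def iscsi_port_profile_commands(name, vlan_id, system_vlan=True, save=False):
    """Return the CLI lines from the summary steps, in order."""
    commands = [
        "config t",
        f"port-profile type vethernet {name}",
        "vmware port-group",
        "switchport mode access",
        f"switchport access vlan {vlan_id}",
        "no shutdown",
    ]
    if system_vlan:
        # Marked optional in the summary steps; required by the prerequisites.
        commands.append(f"system vlan {vlan_id}")
    commands += ["capability iscsi-multipath", "state enabled"]
    if save:
        commands.append("copy running-config startup-config")
    return commands


# Hypothetical profile name and VLAN ID, for illustration only.
commands = iscsi_port_profile_commands("iscsi-profile", 254)
print("\n".join(commands))
```

Keeping the sequence in one place makes it harder to omit `switchport mode access`, which must be set because trunk profiles are not allowed for iSCSI multipath.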

DETAILED STEPS

Creating VMkernel NICs and Attaching the Port Profile

You can use this procedure to create VMkernel NICs and attach a port profile to them. Attaching the port profile triggers the automatic pinning of the VMkernel NICs to physical NICs.

BEFORE YOU BEGIN

Before starting this procedure, you must know or do the following.

- You have already created a port profile using the "Creating a Port Profile for a VMkernel NIC" procedure, and you know the name of this port profile.
- The VMkernel ports are created directly on the vSphere client.
- Create one VMkernel NIC for each physical NIC that carries the iSCSI VLAN. The number of paths to the storage device is the same as the number of VMkernel NICs created.
- Step 2 of this procedure triggers automatic pinning of VMkernel NICs to physical NICs, so you must understand the following rules for automatic pinning:
  - A VMkernel NIC is pinned to an uplink only if the VMkernel NIC and the uplink carry the same VLAN.
  - The hardware iSCSI NIC is picked first if there are many physical NICs carrying the iSCSI VLAN.
  - The software iSCSI NIC is picked only if there is no available hardware iSCSI NIC.
  - Two VMkernel NICs are never pinned to the same hardware iSCSI NIC.
  - Two VMkernel NICs can be pinned to the same software iSCSI NIC.
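The automatic pinning rules above can be modeled in a few lines. The following Python sketch is not the algorithm that the Cisco Nexus 1000V runs; it is a minimal model of the listed rules, with a hypothetical function name and data layout. The tie-break among software iSCSI NICs (least loaded first) is an assumption, because the rules do not specify one.

```python
def auto_pin(vmks, uplinks):
    """Pin each VMkernel NIC to an uplink according to the listed rules.

    vmks:    list of (vmk_name, vlan) tuples
    uplinks: list of dicts with keys "name", "vlans" (set), "hardware_iscsi" (bool)
    Returns a dict of vmk_name -> uplink name, or None if no uplink qualifies.
    """
    load = {u["name"]: 0 for u in uplinks}  # VMkernel NICs pinned per uplink
    result = {}
    for vmk, vlan in vmks:
        # Rule: the VMkernel NIC and the uplink must carry the same VLAN.
        candidates = [u for u in uplinks if vlan in u["vlans"]]
        # Rule: a hardware iSCSI NIC never takes a second VMkernel NIC.
        hardware = [u for u in candidates
                    if u["hardware_iscsi"] and load[u["name"]] == 0]
        software = [u for u in candidates if not u["hardware_iscsi"]]
        if hardware:
            chosen = hardware[0]  # hardware iSCSI NICs are picked first
        elif software:
            # Assumption: prefer the least-loaded software iSCSI NIC.
            chosen = min(software, key=lambda u: load[u["name"]])
        else:
            chosen = None
        if chosen is not None:
            load[chosen["name"]] += 1
        result[vmk] = chosen["name"] if chosen else None
    return result


uplinks = [
    {"name": "vmnic1", "vlans": {100}, "hardware_iscsi": True},
    {"name": "vmnic2", "vlans": {100}, "hardware_iscsi": False},
    {"name": "vmnic3", "vlans": {200}, "hardware_iscsi": False},
]
pins = auto_pin([("vmk1", 100), ("vmk2", 100), ("vmk3", 100), ("vmk4", 300)], uplinks)
```

In this example the first VMkernel NIC takes the hardware iSCSI NIC, the next two share the software iSCSI NIC on the same VLAN, and the last one stays unpinned because no uplink carries its VLAN.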

Step 1 Create one VMkernel NIC for each physical NIC that carries the iSCSI VLAN.

For example, if you want to configure two paths, configure two physical NICs on the Cisco Nexus 1000V DVS to carry the iSCSI VLAN. The two physical NICs may carry other VLANs. Create two VMkernel NICs for the two paths.

Step 2 Attach the port profile configured with capability iscsi-multipath to the VMkernel ports.

The Cisco Nexus 1000V automatically pins the VMkernel NICs to the physical NICs.

Step 3 From the ESX host, display the auto pinning configuration for verification.

~ # vemcmd show iscsi pinning

Example:

~ # vemcmd show iscsi pinning

Vmknic LTL Pinned_Uplink LTL

vmk6 49 vmnic2 19

vmk5 50 vmnic1 18

Step 4 You have completed this procedure. Return to the "Process for Uplink Pinning and Storage Binding" section.
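When the pinning table from Step 3 needs to be checked on several hosts, it can be parsed into a dictionary. The following Python sketch assumes the four-column layout shown in the example output; the function name and the dictionary keys are hypothetical.

```python
def parse_iscsi_pinning(text):
    """Parse `vemcmd show iscsi pinning` output, keyed by VMkernel NIC."""
    pinning = {}
    for line in text.splitlines():
        fields = line.split()
        # Skip blank lines, the prompt line, and the header row.
        if len(fields) != 4 or fields[0] == "Vmknic":
            continue
        vmk, vmk_ltl, uplink, uplink_ltl = fields
        pinning[vmk] = {
            "vmk_ltl": int(vmk_ltl),
            "uplink": uplink,
            "uplink_ltl": int(uplink_ltl),
        }
    return pinning


# Sample text copied from the example output in Step 3.
sample = """\
~ # vemcmd show iscsi pinning
Vmknic LTL Pinned_Uplink LTL
vmk6 49 vmnic2 19
vmk5 50 vmnic1 18
"""
pinning = parse_iscsi_pinning(sample)
```

The LTL values are kept as integers because the manual pinning command takes them as arguments.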

Manually Pinning the NICs

You can use this procedure to override the automatic pinning of NICs done by the Cisco Nexus 1000V, and manually pin the VMkernel NICs to the physical NICs.

Note: If the pinning done automatically by the Cisco Nexus 1000V is not optimal, or if you want to change the pinning, use the vemcmd command on the ESX host to override it as described in this procedure.

BEFORE YOU BEGIN

Before starting this procedure, you must know or do the following:

- You are logged in to the ESX host.
- You have already created VMkernel NICs and attached a port profile to them, using the "Creating VMkernel NICs and Attaching the Port Profile" procedure.
- Before changing the pinning, you must remove the binding between the iSCSI VMkernel NIC and the VMHBA. This procedure includes a step for doing this.
- Manual pinning persists across ESX host reboots. Manual pinning is lost if the VMkernel NIC is moved from the DVS to the vSwitch and back.

Step 1 List the binding for each VMHBA to identify the binding to remove (iSCSI VMkernel NIC to VMHBA).

esxcli swiscsi nic list -d vmhbann

Example:

esxcli swiscsi nic list -d vmhba33

vmk6

pNic name: vmnic2

ipv4 address: 169.254.0.1

ipv4 net mask: 255.255.0.0

ipv6 addresses:

mac address: 00:1a:64:d2:ac:94

mtu: 1500

toe: false

tso: true

tcp checksum: false

vlan: true

link connected: true

ethernet speed: 1000

packets received: 3548617

packets sent: 102313

NIC driver: bnx2

driver version: 1.6.9

firmware version: 3.4.4

vmk5

pNic name: vmnic3

ipv4 address: 169.254.0.2

ipv4 net mask: 255.255.0.0

ipv6 addresses:

mac address: 00:1a:64:d2:ac:94

mtu: 1500

toe: false

tso: true

tcp checksum: false

vlan: true

link connected: true

ethernet speed: 1000

packets received: 3548617

packets sent: 102313

NIC driver: bnx2

driver version: 1.6.9

firmware version: 3.4.4

Step 2 Remove the binding between the iSCSI VMkernel NIC and the VMHBA.

Example:

esxcli swiscsi nic remove --adapter vmhba33 --nic vmk6

esxcli swiscsi nic remove --adapter vmhba33 --nic vmk5

Note: If active iSCSI sessions exist between the host and targets, the iSCSI port cannot be disconnected.

Step 3 From the ESX host, display the auto pinning configuration.

~ # vemcmd show iscsi pinning

Example:

~ # vemcmd show iscsi pinning

Vmknic LTL Pinned_Uplink LTL

vmk6 49 vmnic2 19

vmk5 50 vmnic1 18

Step 4 Manually pin the VMkernel NIC to the physical NIC, overriding the auto pinning configuration.

~ # vemcmd set iscsi pinning vmk-ltl vmnic-ltl

Example:

~ # vemcmd set iscsi pinning 50 20

Step 5 Verify the manual pinning.

~ # vemcmd show iscsi pinning

Example:

~ # vemcmd show iscsi pinning

Vmknic LTL Pinned_Uplink LTL

vmk6 49 vmnic2 19

vmk5 50 vmnic3 20

Step 6 You have completed this procedure. Return to the "Process for Uplink Pinning and Storage Binding" section.
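The order of operations in this procedure matters: the binding is removed before the pinning is changed. The following Python sketch collects the host commands for one VMkernel NIC in that order. The function name and parameters are hypothetical; the command strings follow the examples in the steps above, and the values passed in reproduce the vmk5 example (LTL 50 pinned to LTL 20).

```python
def repin_commands(vmk, vmhba, vmk_ltl, vmnic_ltl):
    """Return the host commands for manually repinning one VMkernel NIC."""
    return [
        # Remove the existing VMkernel NIC to VMHBA binding first.
        f"esxcli swiscsi nic remove --adapter {vmhba} --nic {vmk}",
        # Override the automatic pinning using the two LTL values.
        f"vemcmd set iscsi pinning {vmk_ltl} {vmnic_ltl}",
        # Display the pinning table to confirm the change.
        "vemcmd show iscsi pinning",
    ]


commands = repin_commands("vmk5", "vmhba33", 50, 20)
print("\n".join(commands))
```

The sketch only builds the command text; it does not check for active iSCSI sessions, which prevent the binding from being removed.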

Identifying the iSCSI Adapters for the Physical NICs

You can use one of the following procedures in this section to identify the iSCSI adapters associated with the physical NICs.

- "Identifying iSCSI Adapters on the vSphere Client" procedure
- "Identifying iSCSI Adapters on the Host Server" procedure

Identifying iSCSI Adapters on the vSphere Client

You can use this procedure on the vSphere client to identify the iSCSI adapters associated with the physical NICs.

BEFORE YOU BEGIN

Before beginning this procedure, you must know or do the following:

- You are logged in to the vSphere client.

Step 1 From the Inventory panel, select a host.

Step 2 Click the Configuration tab.

Step 3 In the Hardware panel, click Storage Adapters.

The dependent hardware iSCSI adapter is displayed in the list of storage adapters.

Step 4 Select the adapter and click Properties.

The iSCSI Initiator Properties dialog box displays information about the adapter, including the iSCSI name and iSCSI alias.

Step 5 Locate the name of the physical NIC associated with the iSCSI adapter.

The default iSCSI alias has the following format: driver_name-vmnic#, where vmnic# is the NIC associated with the iSCSI adapter.

Step 6 You have completed this procedure. Return to the "Process for Uplink Pinning and Storage Binding" section.
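Because the default alias follows the driver_name-vmnic# format, the physical NIC name can be extracted mechanically. The following Python sketch assumes the alias has not been changed from its default; the function name is hypothetical, and the alias `bnx2i-vmnic2` is an illustrative value built from the driver and NIC names used elsewhere in this chapter.

```python
import re


def vmnic_from_alias(alias):
    """Extract the vmnic name from an alias of the form driver_name-vmnic#."""
    match = re.fullmatch(r"(?P<driver>.+)-(?P<vmnic>vmnic\d+)", alias)
    if match is None:
        raise ValueError(f"alias does not follow driver_name-vmnic#: {alias!r}")
    return match.group("vmnic")


nic = vmnic_from_alias("bnx2i-vmnic2")
```

A customized alias raises `ValueError`, which signals that the NIC must be identified another way, for example on the host server.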

Identifying iSCSI Adapters on the Host Server

You can use this procedure on the ESX or ESXi host to identify the iSCSI adapters associated with the physical NICs.

BEFORE YOU BEGIN

Before beginning this procedure, you must do the following:

- You are logged in to the server host.

Step 1 List the storage adapters on the server.

esxcfg-scsidevs -a

Example:

esxcfg-scsidevs -a

vmhba33 bnx2i unbound iscsi.vmhba33 Broadcom iSCSI Adapter

vmhba34 bnx2i online iscsi.vmhba34 Broadcom iSCSI Adapter

Step 2 For each adapter, list the physical NIC bound to it.

esxcli swiscsi vmnic list -d adapter-name

Example:

esxcli swiscsi vmnic list -d vmhba33 | grep name

vmnic name: vmnic2

esxcli swiscsi vmnic list -d vmhba34 | grep name

vmnic name: vmnic3

For the software iSCSI adapter, all physical NICs in the server are listed.

For each hardware iSCSI adapter, one physical NIC is listed.

Step 3 You have completed this procedure. Return to the section that pointed you here:

- "Process for Uplink Pinning and Storage Binding" section.
- "Process for Converting to a Hardware iSCSI Configuration" section.
- "Process for Changing the Access VLAN" section.

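The adapter listing from Step 1 can be parsed to pick out the iSCSI adapters before querying each one for its physical NIC. The following Python sketch assumes the five-column layout of the example output. The column meanings (adapter name, driver, link state, UID, description) are inferred from that sample, which has no header row, and the function name is hypothetical.

```python
def parse_scsi_adapters(text):
    """Parse `esxcfg-scsidevs -a` lines into a list of dicts."""
    adapters = []
    for line in text.splitlines():
        # Split into at most five fields; the description may contain spaces.
        fields = line.split(None, 4)
        if len(fields) < 5:
            continue  # blank line or the command line itself
        name, driver, state, uid, description = fields
        adapters.append({
            "name": name,
            "driver": driver,
            "state": state,
            "uid": uid,
            "description": description,
        })
    return adapters


# Sample text copied from the example output in Step 1.
sample = """\
esxcfg-scsidevs -a
vmhba33 bnx2i unbound iscsi.vmhba33 Broadcom iSCSI Adapter
vmhba34 bnx2i online iscsi.vmhba34 Broadcom iSCSI Adapter
"""
adapters = parse_scsi_adapters(sample)
iscsi_adapters = [a["name"] for a in adapters if a["uid"].startswith("iscsi.")]
```

Each name in `iscsi_adapters` can then be used as the adapter argument in Step 2.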
Binding the VMkernel NICs to the iSCSI Adapter

You can use this procedure to manually bind the VMkernel NICs to the iSCSI adapter corresponding to the pinned physical NICs.

BEFORE YOU BEGIN

Before starting this procedure, you must know or do the following:

- You are logged in to the ESX host.
- You know the iSCSI adapters associated with the physical NICs, found in the "Identifying the iSCSI Adapters for the Physical NICs" procedure.

Step 1 Find the physical NICs to which the VEM has pinned the VMkernel NICs.

Example:

Vmknic LTL Pinned_Uplink LTL

vmk2 48 vmnic2 18

vmk3 49 vmnic3 19

Step 2 Bind each VMkernel NIC to the iSCSI adapter found in the "Identifying the iSCSI Adapters for the Physical NICs" procedure, that is, the adapter associated with the physical NIC to which the VMkernel NIC is pinned.

Example:

esxcli swiscsi nic add --adapter vmhba33 --nic vmk2

Example:

esxcli swiscsi nic add --adapter vmhba34 --nic vmk3

Step 3 You have completed this procedure. Return to the section that pointed you here:

- "Process for Uplink Pinning and Storage Binding" section.
- "Process for Converting to a Hardware iSCSI Configuration" section.
- "Process for Changing the Access VLAN" section.

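The two lookups in this procedure (VMkernel NIC to pinned physical NIC, then physical NIC to iSCSI adapter) can be combined to produce the bind commands. The following Python sketch uses the values from the examples in this section; the function name and dictionary layout are hypothetical, and the command string follows the examples in Step 2.

```python
def bind_commands(pinning, adapter_for_vmnic):
    """Build one `esxcli swiscsi nic add` command per pinned VMkernel NIC.

    pinning:           VMkernel NIC -> physical NIC it is pinned to
    adapter_for_vmnic: physical NIC -> iSCSI adapter associated with it
    """
    commands = []
    for vmk, vmnic in sorted(pinning.items()):
        adapter = adapter_for_vmnic[vmnic]
        commands.append(f"esxcli swiscsi nic add --adapter {adapter} --nic {vmk}")
    return commands


# Values from the Step 1 pinning output and the adapter identification examples.
pinning = {"vmk2": "vmnic2", "vmk3": "vmnic3"}
adapter_for_vmnic = {"vmnic2": "vmhba33", "vmnic3": "vmhba34"}
commands = bind_commands(pinning, adapter_for_vmnic)
```

A missing entry in `adapter_for_vmnic` raises `KeyError`, which indicates that the adapter for that physical NIC has not been identified yet.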
Converting to a Hardware iSCSI Configuration

You can use the procedures in this section on an ESX 4.1 host to convert from a software iSCSI configuration to a hardware iSCSI configuration.

BEFORE YOU BEGIN

Before starting the procedures in this section, you must know or do the following:

- You have scheduled a maintenance window for this conversion. Converting the setup from software to hardware iSCSI involves a storage update.

Process for Converting to a Hardware iSCSI Configuration

You can use the following steps to convert to a hardware iSCSI configuration:

Step 1 In the vSphere client, disassociate the storage configuration made on the iSCSI NIC.

Step 2 Remove the path to the iSCSI targets.

Step 3 Remove the binding between the VMkernel NIC and the iSCSI adapter using the "Removing the Binding to the Software iSCSI Adapter" procedure.

Step 4 Move the VMkernel NIC from the Cisco Nexus 1000V DVS to the vSwitch.

Step 5 Install the hardware NICs on the ESX host, if not already installed.

Step 6 Do one of the following:

- If the hardware NICs are already present on the Cisco Nexus 1000V DVS, then continue with the next step.
- If the hardware NICs are not already present on the Cisco Nexus 1000V DVS, then go to the "Adding the Hardware NICs to the DVS" procedure.

Step 7 Move the VMkernel NIC back from the vSwitch to the Cisco Nexus 1000V DVS.

Step 8 Find an iSCSI adapter, using the "Identifying the iSCSI Adapters for the Physical NICs" procedure.

Step 9 Bind the NIC to the adapter, using the "Binding the VMkernel NICs to the iSCSI Adapter" procedure.

Step 10 Verify the iSCSI multipathing configuration, using the "Verifying the iSCSI Multipath Configuration" procedure.

Removing the Binding to the Software iSCSI Adapter

You can use this procedure to remove the binding between the iSCSI VMkernel NIC and the software iSCSI adapter.

Step 1 Remove the iSCSI VMkernel NIC binding to the VMHBA.

Example:

esxcli swiscsi nic remove --adapter vmhba33 --nic vmk6

esxcli swiscsi nic remove --adapter vmhba33 --nic vmk5

Step 2 You have completed this procedure. Return to the "Process for Converting to a Hardware iSCSI Configuration" section.
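When several VMkernel NICs are bound to the same adapter, the removal commands from Step 1 can be generated instead of typed one by one. The following Python sketch only formats command strings in the form shown in the example; the function name `unbind_commands` is hypothetical, and nothing is executed on the ESX host.

```python
def unbind_commands(adapter, vmk_nics):
    """Build one 'esxcli swiscsi nic remove' command per VMkernel NIC.

    This only formats strings in the form used in this procedure;
    it does not run anything on the host.
    """
    return [
        f"esxcli swiscsi nic remove --adapter {adapter} --nic {nic}"
        for nic in vmk_nics
    ]


# Adapter and NIC names are taken from the example in this procedure.
for command in unbind_commands("vmhba33", ["vmk6", "vmk5"]):
    print(command)
```

Review the generated commands against the bindings reported for the adapter before running them.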

Adding the Hardware NICs to the DVS

If the hardware NICs are not already on the Cisco Nexus 1000V DVS, use this procedure to add them to the DVS as uplinks using the vSphere client.

BEFORE YOU BEGIN

Before starting this procedure, you must know or do the following:

• You are logged in to the vSphere client.

• This procedure requires a server reboot.

Step 1 Select a server from the inventory panel.

Step 2 Click the Configuration tab.

Step 3 In the Configuration panel, click Networking.

Step 4 Click the vNetwork Distributed Switch.

Step 5 Click Manage Physical Adapters.

Step 6 Select the port profile to use for the hardware NIC.

Step 7 Click "Click to Add NIC".

Step 8 In Unclaimed Adapters, select the physical NIC and click OK.

Step 9 In the Manage Physical Adapters window, click OK.

Step 10 Move the iSCSI VMkernel NICs from the vSwitch to the Cisco Nexus 1000V DVS.

The VMkernel NICs are automatically pinned to the hardware NICs.

Step 11 You have completed this procedure. Return to the "Process for Converting to a Hardware iSCSI Configuration" section.

Changing the VMkernel NIC Access VLAN

You can use the procedures in this section to change the access VLAN, or the networking configuration, of the iSCSI VMkernel NIC.

Process for Changing the Access VLAN

You can use the following steps to change the VMkernel NIC access VLAN:

Step 1 In the vSphere client, disassociate the storage configuration made on the iSCSI NIC.

Step 2 Remove the path to the iSCSI targets.

Step 3 Remove the binding between the VMkernel NIC and the iSCSI adapter, using the "Removing the Binding to the Software iSCSI Adapter" procedure.

Step 4 Move the VMkernel NIC from the Cisco Nexus 1000V DVS to the vSwitch.

Step 5 Change the access VLAN, using the "Changing the Access VLAN" procedure.

Step 6 Move the VMkernel NIC back from the vSwitch to the Cisco Nexus 1000V DVS.

Step 7 Find an iSCSI adapter, using the "Identifying the iSCSI Adapters for the Physical NICs" procedure.

Step 8 Bind the NIC to the adapter, using the "Binding the VMkernel NICs to the iSCSI Adapter" procedure.

Step 9 Verify the iSCSI multipathing configuration, using the "Verifying the iSCSI Multipath Configuration" procedure.

Changing the Access VLAN

BEFORE YOU BEGIN

Before starting this procedure, you must know or do the following:

• You are logged in to the ESX host.

• You cannot change the access VLAN of an iSCSI multipath port profile while it is inherited by a VMkernel NIC. Use the show port-profile name profile-name command to verify inheritance.

Step 1 Remove the path to the iSCSI targets from the vSphere client.

Step 2 List the binding for each VMHBA to identify the binding to remove (iSCSI VMkernel NIC to VMHBA).

esxcli swiscsi nic list -d vmhbann

Example:

esxcli swiscsi nic list -d vmhba33

vmk6

pNic name: vmnic2

ipv4 address: 169.254.0.1

ipv4 net mask: 255.255.0.0

ipv6 addresses:

mac address: 00:1a:64:d2:ac:94

mtu: 1500

toe: false

tso: true

tcp checksum: false

vlan: true

link connected: true

ethernet speed: 1000

packets received: 3548617

packets sent: 102313

NIC driver: bnx2

driver version: 1.6.9

firmware version: 3.4.4

vmk5

pNic name: vmnic3

ipv4 address: 169.254.0.2

ipv4 net mask: 255.255.0.0

ipv6 addresses:

mac address: 00:1a:64:d2:ac:94

mtu: 1500

toe: false

tso: true

tcp checksum: false

vlan: true

link connected: true

ethernet speed: 1000

packets received: 3548617

packets sent: 102313

NIC driver: bnx2

driver version: 1.6.9

firmware version: 3.4.4

Step 3 Remove the iSCSI VMkernel NIC binding to the VMHBA.

Example:

esxcli swiscsi nic remove --adapter vmhba33 --nic vmk6

esxcli swiscsi nic remove --adapter vmhba33 --nic vmk5

Step 4 Remove the capability iscsi-multipath configuration from the port profile.

no capability iscsi-multipath

Example:

n1000v# config t

n1000v(config)# port-profile type vethernet VMK-port-profile

n1000v(config-port-prof)# no capability iscsi-multipath

Step 5 Remove the system VLAN.

no system vlan vlanID

Example:

n1000v# config t

n1000v(config)# port-profile type vethernet VMK-port-profile

n1000v(config-port-prof)# no system vlan 300

Step 6 Change the access VLAN in the port profile.

switchport access vlan vlanID

Example:

n1000v# config t

n1000v(config)# port-profile type vethernet VMK-port-profile

n1000v(config-port-prof)# switchport access vlan 300

Step 7 Add the system VLAN.

system vlan vlanID

Example:

n1000v# config t

n1000v(config)# port-profile type vethernet VMK-port-profile

n1000v(config-port-prof)# system vlan 300

Step 8 Add the capability iscsi-multipath configuration back to the port profile.

capability iscsi-multipath

Example:

n1000v# config t

n1000v(config)# port-profile type vethernet VMK-port-profile

n1000v(config-port-prof)# capability iscsi-multipath

Step 9 You have completed this procedure. Return to the "Process for Changing the Access VLAN" section.
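The order of Steps 4 through 8 matters: the iscsi-multipath capability and the system VLAN are removed before the access VLAN changes, and then restored in reverse order. The following Python sketch lists the VSM commands in that order. It makes two assumptions that the procedure does not state: that all five commands can be entered in one configuration session, and that the new system VLAN is the same as the new access VLAN (as in the examples, which use 300 for both). The function name is hypothetical, and the sketch only builds text.

```python
def access_vlan_change_commands(profile, old_system_vlan, new_vlan):
    """Return the VSM CLI lines for Steps 4 through 8, in order.

    Assumption: the new system VLAN equals the new access VLAN.
    """
    return [
        "config t",
        f"port-profile type vethernet {profile}",
        "no capability iscsi-multipath",       # Step 4
        f"no system vlan {old_system_vlan}",   # Step 5
        f"switchport access vlan {new_vlan}",  # Step 6
        f"system vlan {new_vlan}",             # Step 7
        "capability iscsi-multipath",          # Step 8
    ]


# Profile name and VLAN ID are taken from the examples in this procedure.
for line in access_vlan_change_commands("VMK-port-profile", 300, 300):
    print(line)
```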

Verifying the iSCSI Multipath Configuration

You can use the commands in this section to verify the iSCSI multipath configuration.

| Command | Purpose |
|---|---|
| ~ # vemcmd show iscsi pinning | Displays the auto pinning of VMkernel NICs. See Example 13-1. |
| esxcli swiscsi nic list -d vmhba33 | Displays the iSCSI adapter binding of VMkernel NICs. See Example 13-2. |
| show port-profile [brief \| expand-interface \| usage] [name profile-name] | Displays the port profile configuration. See Example 13-3. |

Example 13-1 ~ # vemcmd show iscsi pinning

~ # vemcmd show iscsi pinning

Vmknic LTL Pinned_Uplink LTL

vmk6 49 vmnic2 19

vmk5 50 vmnic1 18
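To check the pinning in a script, the output in Example 13-1 can be read into a dictionary. The following Python sketch assumes the whitespace-separated, four-column layout shown above; the function name `parse_iscsi_pinning` is hypothetical, and the output format on a given VEM release may differ.

```python
SAMPLE = """\
~ # vemcmd show iscsi pinning
Vmknic LTL Pinned_Uplink LTL
vmk6 49 vmnic2 19
vmk5 50 vmnic1 18
"""


def parse_iscsi_pinning(output):
    """Map each VMkernel NIC to its LTL and pinned uplink."""
    pinning = {}
    for line in output.splitlines():
        fields = line.split()
        # Data rows have four fields and begin with a vmk name;
        # the prompt line, header row, and blank lines are skipped.
        if len(fields) == 4 and fields[0].startswith("vmk"):
            vmknic, vmk_ltl, uplink, uplink_ltl = fields
            pinning[vmknic] = {
                "ltl": int(vmk_ltl),
                "uplink": uplink,
                "uplink_ltl": int(uplink_ltl),
            }
    return pinning


for vmknic, info in parse_iscsi_pinning(SAMPLE).items():
    print(vmknic, "is pinned to", info["uplink"])
```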

Example 13-2 esxcli swiscsi nic list -d vmhbann

esxcli swiscsi nic list -d vmhba33

vmk6

pNic name: vmnic2

ipv4 address: 169.254.0.1

ipv4 net mask: 255.255.0.0

ipv6 addresses:

mac address: 00:1a:64:d2:ac:94

mtu: 1500

toe: false

tso: true

tcp checksum: false

vlan: true

link connected: true

ethernet speed: 1000

packets received: 3548617

packets sent: 102313

NIC driver: bnx2

driver version: 1.6.9

firmware version: 3.4.4
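The binding output in Example 13-2 is a VMkernel NIC name followed by "key: value" lines, so it can be grouped per NIC with a short parser. The following Python sketch assumes that layout; the function name `parse_nic_list` is hypothetical, and the sample is trimmed to a few of the fields shown above.

```python
SAMPLE = """\
esxcli swiscsi nic list -d vmhba33
vmk6
pNic name: vmnic2
ipv4 address: 169.254.0.1
ipv6 addresses:
mac address: 00:1a:64:d2:ac:94
link connected: true
"""


def parse_nic_list(output):
    """Group 'key: value' lines under the VMkernel NIC name that precedes them."""
    nics = {}
    current = None
    for raw in output.splitlines():
        line = raw.strip()
        if not line or line.startswith("esxcli"):
            continue  # skip blank lines and the echoed command
        if ":" in line:
            if current is not None:
                # Split on the first colon only, so MAC addresses stay intact.
                key, _, value = line.partition(":")
                nics[current][key.strip()] = value.strip()
        else:
            current = line  # a line without a colon starts a new NIC
            nics[current] = {}
    return nics


for name, fields in parse_nic_list(SAMPLE).items():
    print(name, "->", fields.get("pNic name"))
```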

Example 13-3 show port-profile name iscsi-profile

n1000v# show port-profile name iscsi-profile

port-profile iscsi-profile

type: Vethernet

description:

status: enabled

max-ports: 32

inherit:

config attributes:

evaluated config attributes:

assigned interfaces:

port-group:

system vlans: 254

capability l3control: no

capability iscsi-multipath: yes

port-profile role: none

port-binding: static

n1000v#
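For iSCSI multipath, the two fields of interest in Example 13-3 are "capability iscsi-multipath" and "system vlans". The following Python sketch pulls both from captured output. It assumes the "key: value" layout shown above; the function name `check_iscsi_profile` is hypothetical, and the sample is trimmed.

```python
SAMPLE = """\
n1000v# show port-profile name iscsi-profile
port-profile iscsi-profile
type: Vethernet
status: enabled
system vlans: 254
capability l3control: no
capability iscsi-multipath: yes
port-binding: static
"""


def check_iscsi_profile(output):
    """Report whether iscsi-multipath is enabled and which system VLANs are set."""
    fields = {}
    for line in output.splitlines():
        key, sep, value = line.strip().partition(":")
        if sep:  # keep only lines that contain a colon
            fields[key.strip()] = value.strip()
    return {
        "iscsi_multipath": fields.get("capability iscsi-multipath") == "yes",
        "system_vlans": fields.get("system vlans", ""),
    }


print(check_iscsi_profile(SAMPLE))
```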

Managing Storage Loss Detection

This section describes the command that configures detection of storage connectivity losses and the action taken when a loss is detected. When VSMs are hosted on remote storage systems such as NFS or iSCSI, storage connectivity can be lost, which can cause unexpected VSM behavior.

Use the following command syntax to configure storage loss detection:

system storage-loss { log | reboot } [ time <interval> ] | no system storage-loss [ { log | reboot } ] [ time <interval> ]

The time interval is how often, in seconds, the VSM checks the storage connectivity status. If a storage loss is detected, a syslog message is displayed. The default interval is 30 seconds, and you can configure an interval from 30 to 600 seconds. The default configuration for this command is:

system storage-loss log time 30
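The keyword and interval limits described above can be checked before a command is sent to the VSM. The following Python sketch builds the command string and rejects values outside the documented range of 30 to 600 seconds. The function name `storage_loss_command` is hypothetical, and the sketch does not connect to a device.

```python
MIN_INTERVAL = 30   # seconds, documented minimum and default
MAX_INTERVAL = 600  # seconds, documented maximum


def storage_loss_command(action="log", interval=MIN_INTERVAL):
    """Build a 'system storage-loss' command after validating its arguments."""
    if action not in ("log", "reboot"):
        raise ValueError(f"action must be 'log' or 'reboot', got {action!r}")
    if not MIN_INTERVAL <= interval <= MAX_INTERVAL:
        raise ValueError(
            f"interval must be {MIN_INTERVAL}-{MAX_INTERVAL} seconds, got {interval}"
        )
    return f"system storage-loss {action} time {interval}"


print(storage_loss_command())              # the documented default configuration
print(storage_loss_command("reboot", 60))
```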

Note Configure this command in EXEC mode. Do not use configuration mode.

The following describes how this command manages storage loss detection:

• Log only (log keyword): A syslog message is displayed stating that a storage loss has occurred. The administrator must take action immediately to avoid an unexpected VSM state.

• Reset (reboot keyword): The VSM on which the storage loss is detected is reloaded automatically to avoid an unexpected VSM state.

– Storage loss on the active VSM: The active VSM is reloaded. The standby VSM becomes active and takes control of the hosts.

– Storage loss on the standby VSM: The standby VSM is reloaded. The active VSM continues to control the hosts.

Note Do not keep both the active and standby VSMs on the same remote storage. Otherwise, a single storage loss can affect both VSMs.
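The behaviors listed above amount to a small decision table keyed on the configured action and on which VSM lost storage. The following Python sketch restates that table for quick reference. The function name and the returned wording are illustrative only and do not reproduce actual syslog text.

```python
def storage_loss_outcome(action, affected_vsm):
    """Describe the documented result of a storage loss.

    action: 'log' or 'reboot' (the configured keyword)
    affected_vsm: 'active' or 'standby' (the VSM that lost storage)
    """
    if affected_vsm not in ("active", "standby"):
        raise ValueError("affected_vsm must be 'active' or 'standby'")
    if action == "log":
        return "syslog message only; the administrator must take action"
    if action == "reboot":
        if affected_vsm == "active":
            return "active VSM reloads; standby VSM becomes active"
        return "standby VSM reloads; active VSM continues to control the hosts"
    raise ValueError("action must be 'log' or 'reboot'")


for action in ("log", "reboot"):
    for vsm in ("active", "standby"):
        print(f"{action:6} {vsm:8} {storage_loss_outcome(action, vsm)}")
```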

BEFORE YOU BEGIN

Before beginning this procedure, you must know or do the following:

• You are logged in to the CLI in EXEC mode.

SUMMARY STEPS

1. system storage-loss { log | reboot } [ time <interval> ]

2. copy running-config startup-config

DETAILED STEPS

EXAMPLES

The following command disables the storage-loss checking. Whenever the VSMs are installed on local storage, this is the configuration we recommend.

n1000v# no system storage-loss

Note Disable storage loss checking if both VSMs are in local storage.

The following command enables storage loss detection on an active or standby VSM and displays a syslog message when a storage loss is detected. In this example, the VSM is checked for storage loss every 60 seconds. The administrator must take action to recover the storage and avoid an inconsistent VSM state:

n1000v# system storage-loss log time 60

The following command enables storage loss detection to be performed every 50 seconds.

n1000v# system storage-loss log time 50

The following example shows the commands you use to configure logging only when storage loss is detected:

n1000v# system storage-loss log time 60

n1000v# copy run start

The following example shows the commands you use to configure the VSM to reboot when storage loss is detected:

n1000v# system storage-loss reboot time 60

n1000v# copy run start

The following example shows the commands you use to disable storage loss checking:

n1000v# no system storage-loss

n1000v# copy run start

Additional References

For additional information related to implementing iSCSI Multipath, see the following sections:

Related Documents

Standards

| Standards | Title |
|---|---|
| No new or modified standards are supported by this feature, and support for existing standards has not been modified by this feature. | — |

Feature History for iSCSI Multipath

Table 13-2 lists the release history for the iSCSI Multipath feature.
