FlashStack Data Center for Oracle RAC 19c Database on NVMe/RoCE



Deployment Guide for Oracle RAC 19c Databases on Cisco UCS with Pure Storage FlashArray//X90 R2 on NVMe over RoCE (RDMA over Converged Ethernet v2)

Published: August 2021

In partnership with:


About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries. (LDW_P).

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2021 Cisco Systems, Inc. All rights reserved.


The IT industry has been transforming rapidly to converged infrastructure, which enables faster provisioning, greater scalability, lower data center costs, and simpler infrastructure management. The current industry trend is toward pre-engineered solutions that standardize the data center infrastructure and offer the operational efficiency and agility needed to support enterprise applications and IT services. When this standardized data center spans multiple sites, it needs to be seamless rather than siloed, delivering a uniform network and storage experience to the compute systems and end users that access it.

The FlashStack solution combines best-of-breed technology from the Cisco Unified Computing System (Cisco UCS) and Pure Storage to deliver the benefits of converged infrastructure. FlashStack integrates the compute, storage, and network stack with the programmability of Cisco UCS. Cisco Validated Designs (CVDs) consist of systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of customers and to guide them from design to deployment.

This Cisco Validated Design (CVD) describes a FlashStack reference architecture for deploying a highly available Oracle Multitenant RAC 19c database environment on Pure Storage FlashArray//X90 R2 using Cisco UCS compute servers, Cisco Fabric Interconnects, Cisco Nexus switches, and Red Hat Enterprise Linux. Cisco and Pure Storage have validated the reference architecture with various database workloads, such as OLTP (Online Transaction Processing) and Data Warehouse, in Cisco's UCS data center lab. This document presents the hardware and software configuration of the components involved and the results of the tests performed, and offers implementation and best-practice guidance.

Introduction

This CVD describes how Cisco UCS can be used with the Pure Storage FlashArray//X90 R2 to implement an Oracle Multitenant Real Application Clusters (RAC) 19c database solution using NVMe over RoCE (RDMA over Converged Ethernet). Oracle Multitenant, an option introduced with Oracle Database 12c Enterprise Edition, helps customers reduce IT costs by simplifying consolidation, provisioning, upgrades, and more. The Oracle Multitenant architecture allows a container database to hold many pluggable databases, and it fully complements other options, including Oracle Real Application Clusters and Oracle Active Data Guard.

FlashStack embraces the latest technology and simplifies data center workloads, redefining the way IT delivers value:

· A cohesive, integrated system that is managed, serviced, and tested as a whole

· Customer success through prebuilt, pre-tested drivers and Oracle Database software

· Faster Time to Deployment – Leverage a pre-validated platform to minimize business disruption, improve IT agility, and reduce deployment time from months to weeks.

· Reduced Operational Risk – Highly available architecture with no single point of failure, non-disruptive operations, and no downtime.

Audience

The target audience for this document includes, but is not limited to, storage administrators, data center architects, database administrators, field consultants, IT managers, Oracle solution architects, and customers who want to implement Oracle RAC database solutions with Red Hat Enterprise Linux on a FlashStack converged infrastructure solution. A working knowledge of Oracle RAC Database, Linux, storage technology, and networking is assumed but is not a prerequisite for reading this document.

Purpose of this Document

Oracle RAC databases often manage the mission-critical components of a customer's IT department. Ensuring availability while lowering the IT department's TCO is always the database administrator's top priority. This FlashStack solution for Oracle RAC databases delivers industry-leading storage, unprecedented scalability, high availability, and simplified operational management to meet customers' business demands. The goal of this CVD is to highlight the performance, scalability, manageability, and high availability of OLTP and OLAP Oracle databases on the FlashStack CI solution.

The following are the objectives of this reference architecture document:

· Provide reference architecture design guidelines for deploying Oracle RAC Databases on FlashStack.

· Build, validate, and predict the performance of the server, network, and storage platform under various types of workload.

· Demonstrate the seamless scalability of performance and capacity to meet the growth needs of Oracle databases.

· Confirm high availability of database instances, without compromising performance, through software and hardware upgrades.

Starting with Oracle Database 12c, there are two ways to create a database: as a multitenant (container) database or as a pre-12c-style non-multitenant database. In this solution, we deploy both types (Non-Container Database and Container Database) and run various types of workloads against them to compare their performance. We demonstrate the scalability and performance of this solution by running database stress tests such as SwingBench and SLOB (Silly Little Oracle Benchmark) on OLTP (Online Transaction Processing) and DSS (Decision Support System) databases with varying numbers of users and nodes and different read/write workload characteristics.

What’s New in this Release?

This version of the FlashStack CVD introduces Pure Storage DirectFlash™ Fabric, which brings the low latency and high performance of NVMe technology to the storage network, together with Cisco UCS 5th Generation B200 M5 Blade Servers, to deploy Oracle RAC Database 19c using RDMA over Converged Ethernet (RoCE).

It incorporates the following features:

· Cisco UCS B200 M5 Blade Servers with 2nd Generation Intel® Xeon™ Scalable Processors

· Validation of Oracle RAC 19c Container and Non-Container Database deployments

· Support for NVMe/RoCE on Cisco UCS and Pure Storage

· Support for the Cisco UCS Infrastructure and UCS Manager Software Release 4.1

· 100Gbps NVMe/RoCE Storage to Nexus Switch Connectivity

Solution Summary

NVMe is a host controller interface and storage protocol created by an industry body, NVM Express, Inc., as a replacement for SCSI/SAS and SATA. It enables fast transfer of data over a computer's high-speed Peripheral Component Interconnect Express (PCIe) bus. It was designed from the ground up for low-latency solid-state media, eliminating many of the bottlenecks that legacy protocols impose on enterprise applications.

NVMe devices connect to the PCIe bus inside a server. NVMe over Fabrics (NVMe-oF) extends the high-performance, low-latency benefits of NVMe across the network fabrics that connect servers and storage. NVMe-oF keeps the lightweight, streamlined NVMe command set and its more efficient queueing model, but replaces the PCIe transport with alternate transports such as Fibre Channel, RDMA over Converged Ethernet (RoCE v2), and TCP.
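To make the "more efficient queueing model" concrete, the following Python arithmetic compares the commonly quoted protocol limits of AHCI (SATA) and NVMe. It is a sketch only: the constants are assumed specification maximums, not values measured on the FlashArray//X90 R2 or on the hosts in this design, and real devices and drivers typically expose far fewer queues.

```python
"""Worked arithmetic: queueing capacity of AHCI (SATA) versus NVMe.

The limits below are the commonly quoted protocol maximums (assumptions
for illustration); real devices expose far fewer queues and entries.
"""

# AHCI (SATA): a single command queue with 32 slots.
AHCI_QUEUES = 1
AHCI_QUEUE_DEPTH = 32

# NVMe: up to 65,535 I/O queues, each with up to 65,536 entries.
NVME_IO_QUEUES = 65_535
NVME_QUEUE_DEPTH = 65_536


def max_queue_entries(queues: int, depth: int) -> int:
    """Theoretical upper bound on queued commands: queues x entries per queue."""
    return queues * depth


if __name__ == "__main__":
    ahci = max_queue_entries(AHCI_QUEUES, AHCI_QUEUE_DEPTH)
    nvme = max_queue_entries(NVME_IO_QUEUES, NVME_QUEUE_DEPTH)
    print(f"AHCI/SATA: {ahci:,} queued commands")
    print(f"NVMe:      {nvme:,} queued commands")
    print(f"Ratio:     {nvme // ahci:,}x")
```

The practical benefit is less the raw total than the fact that NVMe lets each CPU core own its own queue pair, so cores do not contend for a single shared queue.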

Remote Direct Memory Access (RDMA) is the ability to read and write memory on a remote machine without interrupting the CPUs of that system. RDMA moves data directly from the memory of one host (storage or compute) to the memory of another without involving the remote operating system and CPU, which boosts network and host performance through lower latency, lower CPU load, and higher bandwidth. RoCE (RDMA over Converged Ethernet, pronounced "Rocky") provides a seamless, low-overhead, scalable way to solve the TCP/IP I/O bottleneck with minimal extra infrastructure.

NVMe-oF provides better performance for the following reasons:

· Lower latency

· Higher IOPS

· Higher bandwidth

· Improved protocol efficiency by reducing the I/O stack

· Lower CPU utilization on the host by offloading some processing from the kernel to the HBA.

RDMA over Converged Ethernet (RoCE) is a standard protocol, defined by the InfiniBand Trade Association (IBTA), that brings RDMA's efficient data transfer to Ethernet networks, allowing transport offload to a hardware RDMA engine and delivering superior performance. RoCE v2 encapsulates RDMA traffic in UDP/IP, which allows it to be routed across Layer 3 networks.
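Because RoCE v2 rides in UDP/IP, every RDMA packet must fit inside the IP MTU of the interface it leaves. The Python sketch below estimates the largest RDMA path MTU (RDMA restricts this to 256 through 4096 bytes) that fits a given IP MTU. The header sizes are assumptions for an IPv4 packet carrying an RDMA WRITE with an RDMA Extended Transport Header; drivers may reserve slightly different headroom, so treat the result as an estimate rather than the exact active MTU the RDMA stack reports.

```python
"""Sketch: estimate the RDMA path MTU that fits inside a RoCE v2 interface MTU.

Header sizes are assumptions for an IPv4 RoCE v2 packet carrying an
RDMA WRITE (BTH + RETH). The Ethernet header, VLAN tag and FCS are not
counted because they sit outside the IP MTU.
"""

ROCE_V2_UDP_PORT = 4791                   # IANA-registered UDP destination port for RoCE v2
RDMA_MTUS = (256, 512, 1024, 2048, 4096)  # path MTU values defined for InfiniBand/RDMA

IPV4_HEADER = 20
UDP_HEADER = 8
IB_BTH = 12    # InfiniBand Base Transport Header
IB_RETH = 16   # RDMA Extended Transport Header
IB_ICRC = 4    # Invariant CRC trailer


def is_roce_v2(udp_dst_port: int) -> bool:
    """RoCE v2 packets are identified by their UDP destination port."""
    return udp_dst_port == ROCE_V2_UDP_PORT


def roce_v2_overhead() -> int:
    """Bytes that count against the IP MTU in addition to the RDMA payload."""
    return IPV4_HEADER + UDP_HEADER + IB_BTH + IB_RETH + IB_ICRC


def rdma_path_mtu(ip_mtu: int) -> int:
    """Largest RDMA path MTU whose complete RoCE v2 packet fits within ip_mtu."""
    fitting = [m for m in RDMA_MTUS if m + roce_v2_overhead() <= ip_mtu]
    if not fitting:
        raise ValueError(f"IP MTU {ip_mtu} is too small for RoCE v2")
    return max(fitting)


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"IP MTU {mtu}: RDMA path MTU {rdma_path_mtu(mtu)}")
```

With the 9000-byte MTU used on the storage vNICs in this design, the sketch yields a 4096-byte RDMA path MTU; at a standard 1500-byte MTU it drops to 1024 bytes, which is one reason jumbo frames are used for RoCE traffic.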

In this FlashStack solution, we showcase Cisco UCS with the Pure Storage FlashArray//X90 R2 running NVMe-oF, which combines the efficiency and performance of NVMe with the benefits of shared, accelerated storage and advanced data services such as redundancy, thin provisioning, snapshots, and replication.

The FlashStack platform, developed by Cisco and Pure Storage, is a flexible, integrated infrastructure solution that delivers pre-validated storage, networking, and server technologies. Composed of a defined set of hardware and software, this FlashStack solution is designed to increase IT responsiveness to organizational needs and reduce the cost of computing with maximum uptime and minimal risk. Cisco and Pure Storage have carefully validated and verified the FlashStack solution architecture and its many use cases while creating a portfolio of detailed documentation, information, and references to assist customers in transforming their data centers to this shared infrastructure model.

This portfolio includes, but is not limited to, the following items:

· Best practice architectural design

· Implementation and deployment instructions, and application sizing based on results

As shown in Figure 1, these components are connected and configured according to the best practices of both Cisco and Pure Storage, and they provide an ideal platform for running a variety of enterprise database workloads with confidence. FlashStack can scale up for greater performance and capacity (adding compute, network, or storage resources individually as needed), or it can scale out for environments that require multiple consistent deployments.

The reference architecture covered in this document leverages the Pure Storage FlashArray//X90 R2 controller with NVMe-based DirectFlash™ Fabric for storage, Cisco UCS B200 M5 Blade Servers for compute, Cisco Nexus 9000 Series switches for networking, and Cisco UCS 6300 Series Fabric Interconnects for system management. As shown in Figure 1, the FlashStack architecture can maintain consistency at scale. Each of the component families shown (Cisco UCS, Cisco Nexus, Cisco Fabric Interconnects, and Pure Storage) offers platform and resource options to scale the infrastructure up or down while supporting the features and functionality required by the FlashStack configuration and connectivity best practices.

FlashStack is a solution jointly supported by Cisco and Pure Storage, bringing a carefully validated architecture built on superior compute, world-class networking, and leading innovations in all-flash storage. The benefits of the validated FlashStack offerings include, but are not limited to, the following:

· Consistent Performance and Scalability

· Operational Simplicity

· Mission Critical and Enterprise Grade Resiliency

Cisco and Pure Storage have also built a robust and experienced support team focused on FlashStack solutions, from customer account and technical sales representatives to professional services and technical support engineers. The support alliance between Pure Storage and Cisco gives customers and channel services partners direct access to technical experts who collaborate across vendors and have access to shared lab resources to resolve potential issues.

Deployment Hardware and Software

This FlashStack solution provides an end-to-end architecture with Cisco UCS, Oracle, and Pure Storage technologies and demonstrates the benefits of running Oracle Multitenant RAC 19c database workloads with high availability and redundancy. The reference FlashStack architecture covered in this document is built on the Pure Storage FlashArray//X90 R2 for storage, Cisco UCS B200 M5 Blade Servers for compute, Cisco Nexus 9336C-FX2 switches for networking, and Cisco UCS 6332-16UP Fabric Interconnects for system management, in a single package. The design is flexible enough that the networking, computing, and storage can fit in one data center rack or be deployed according to a customer's data center design. The reference architecture reinforces the "wire-once" strategy: as additional storage is added to the architecture, no re-cabling is required from the hosts to the Cisco UCS fabric interconnects.

Physical Topology

This solution combines the following hardware into a single stack:

· Compute: Cisco UCS B200 M5 Blade Servers with Cisco Virtual Interface Cards (VIC) 1440

· Network: Cisco Nexus 9336C-FX2 and Cisco UCS Fabric Interconnect 6332-16UP for network and management connectivity

· Storage: Pure Storage FlashArray //X90 R2

In this solution design, two Cisco UCS blade server chassis hold eight identical Intel Xeon based Cisco UCS B200 M5 Blade Servers that host the 8-node Oracle RAC databases. Each Cisco UCS B200 M5 server has a VIC 1440 with a port expander. Four ports from each Cisco UCS 2304 Fabric Extender in each chassis connect to the Cisco Fabric Interconnects, which in turn connect to the Cisco Nexus switches for upstream connectivity to the Pure Storage array.

Figure 2 shows the architecture diagram of the components and the network connections used to deploy an eight-node Oracle RAC 19c database solution. This reference design is a typical network configuration that can be deployed in a customer's environment. The best practices and setup recommendations are described later in this document.

As shown in Figure 2, a pair of Cisco UCS 6332-16UP Fabric Interconnects (FIs) carries both storage and network traffic from the Cisco UCS B200 M5 server blades with the help of Cisco Nexus 9336C-FX2 switches. Both the Fabric Interconnects and the Cisco Nexus switches are clustered, with a peer link between the members of each pair, to provide high availability. Three virtual Port Channels (vPCs) are configured to provide public network, private network, and storage network paths from the server blades to the northbound switches and storage, providing aggregated bandwidth and redundancy. Each vPC carries VLANs for the application network data, storage data, and management data paths.

As illustrated in the architecture, eight links (4 x 40G per chassis) from the blade server chassis go to Fabric Interconnect – A. Similarly, eight links (4 x 40G per chassis) from the blade server chassis go to Fabric Interconnect – B. Fabric Interconnect – A links carry Oracle public network traffic (VLAN 135) and storage network traffic (VLAN 11), shown as green lines. Fabric Interconnect – B links carry Oracle private interconnect traffic (VLAN 10) and storage network traffic (VLAN 12), shown as red lines.

From Fabric Interconnect – A, two 40G links go to Nexus Switch – A and two 40G links go to Nexus Switch – B, configured as a port channel (vPC 51). Similarly, from Fabric Interconnect – B, two 40G links go to Nexus Switch – A and two 40G links go to Nexus Switch – B, configured as a port channel (vPC 52). From Nexus Switch – A, one 100G link goes to Pure Storage controller CT0 and one 100G link goes to controller CT1; likewise, from Nexus Switch – B, one 100G link goes to CT0 and one 100G link goes to CT1. These storage access links are shown as blue lines. This wiring and configuration provide high availability and redundancy, keeping the database system running with no single point of failure.
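The cabling just described can be checked mechanically. The Python sketch below models it as a graph, computes the bandwidth of each fabric's downlinks and uplinks, and confirms that losing any single fabric interconnect, Nexus switch, or storage controller still leaves a path from the blades to storage. It is a simplified model under stated assumptions: both chassis are collapsed into one hypothetical "chassis" node, and the device names are illustrative labels rather than configuration identifiers.

```python
"""Sketch: model the cabling described above and check it for single points of failure.

Assumptions: both blade chassis are collapsed into one "chassis" node,
link counts and speeds come from the text, and a device failure removes
every link attached to it.
"""
from collections import defaultdict, deque

# (endpoint A, endpoint B, number of links, Gbps per link)
LINKS = [
    ("chassis", "FI-A", 8, 40), ("chassis", "FI-B", 8, 40),
    ("FI-A", "NX-A", 2, 40), ("FI-A", "NX-B", 2, 40),   # vPC 51
    ("FI-B", "NX-A", 2, 40), ("FI-B", "NX-B", 2, 40),   # vPC 52
    ("NX-A", "CT0", 1, 100), ("NX-A", "CT1", 1, 100),
    ("NX-B", "CT0", 1, 100), ("NX-B", "CT1", 1, 100),
]
DEVICES = {"FI-A", "FI-B", "NX-A", "NX-B", "CT0", "CT1"}
CONTROLLERS = {"CT0", "CT1"}


def bandwidth(src: str, dst_prefix: str) -> int:
    """Aggregate Gbps of all links from device src to devices named dst_prefix*."""
    return sum(n * gbps for a, b, n, gbps in LINKS
               if a == src and b.startswith(dst_prefix))


def reaches_storage(failed: set) -> bool:
    """Breadth-first search from the chassis to any surviving storage controller."""
    graph = defaultdict(set)
    for a, b, _, _ in LINKS:
        if a not in failed and b not in failed:
            graph[a].add(b)
            graph[b].add(a)
    seen, queue = {"chassis"}, deque(["chassis"])
    while queue:
        node = queue.popleft()
        if node in CONTROLLERS:
            return True
        for nxt in graph[node] - seen:
            seen.add(nxt)
            queue.append(nxt)
    return False


def single_points_of_failure() -> list:
    """Devices whose individual failure would cut the chassis off from storage."""
    return sorted(d for d in DEVICES if not reaches_storage({d}))


if __name__ == "__main__":
    for fi in ("FI-A", "FI-B"):
        down, up = bandwidth("chassis", fi), bandwidth(fi, "NX")
        print(f"{fi}: {down} Gbps from chassis, {up} Gbps to Nexus ({down / up:.0f}:1)")
    print("Nexus to storage:", sum(bandwidth(nx, "CT") for nx in ("NX-A", "NX-B")), "Gbps")
    print("Single points of failure:", single_points_of_failure() or "none")
```

The model checks only device-level reachability; it does not model VLAN placement (storage VLAN 11 on one fabric, VLAN 12 on the other), which in practice relies on host multipathing across both fabrics.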


For Oracle RAC configuration on Cisco UCS, we recommend keeping all private interconnect network traffic local on a single fabric interconnect. In that case, the private traffic stays local to that fabric interconnect and is not routed through the northbound network switch. All inter-blade (RAC node private) communication is then resolved locally at the fabric interconnect, which significantly reduces latency for Oracle Cache Fusion traffic.

Additional 1Gb management connections are needed for an out-of-band network switch that sits apart from this physical infrastructure. Each Cisco UCS fabric interconnect and each Cisco Nexus switch connects to the out-of-band network switch, and each Pure Storage controller also has two connections to it.

Although this is the base design, each of the components can be scaled easily to support specific business requirements. For example, more servers or even blade chassis can be deployed to increase compute capacity, additional disk shelves can be deployed to improve I/O capability and throughput, and special hardware or software features can be added to introduce new features. This document guides you through the low-level steps for deploying the base architecture, as shown in Figure 2. These procedures explain everything from physical cabling to network, compute and storage device configurations.

Design Topology

This section describes the hardware and software components used to deploy the Oracle RAC Database Solution on FlashStack.

Table 1 Hardware Inventory and Bill of Materials

| Name | Model/Product ID | Description | Quantity |
|---|---|---|---|
| Cisco UCS 5108 Blade Server Chassis | UCSB-5108-AC2 | Cisco UCS AC Blade Server Chassis, 6U with Eight Blade Server Slots | 2 |
| Cisco UCS Fabric Extender | UCS-IOM-2304 | Cisco UCS 2304 4x40G Port IO Module | 4 |
| Cisco UCS B200 M5 Blade Server | UCSB-B200-M5 | Cisco UCS B200 M5 2 Socket Blade Server | 8 |
| Cisco UCS VIC 1440 | UCSB-MLOM-40G-04 | Cisco UCS VIC 1440 Blade MLOM | 8 |
| Cisco UCS Port Expander Card | UCSB-MLOM-PT-01 | Port Expander Card for Cisco UCS MLOM | 8 |
| Cisco UCS 6332-16UP Fabric Interconnect | UCS-FI-6332-16UP | Cisco UCS 24X40G 16X10G Port 1RU Fabric Interconnect | 2 |
| Cisco Nexus Switch | N9K-9336C-FX2 | Cisco Nexus 9336C-FX2 Switch | 2 |
| Pure Storage FlashArray | FA-X90 R2 | Pure Storage FlashArray//X90 R2 | 1 |
| Pure Storage NVMe/RoCE NIC | FA-XR2-100Gb NVMe/RoCE 2 Port UPG | NVMe/RoCE Network Card per FlashArray//X Controller | 4 |

In this solution design, we used eight identical Cisco UCS B200 M5 Blade Servers to host the 8-node Oracle RAC databases. The Cisco UCS B200 M5 server configuration is listed in Table 2.

Table 2 Cisco UCS B200 M5 Blade Server

| Component | Cisco UCS B200 M5 Server Configuration |
|---|---|
| Processor | 2 x Intel(R) Xeon(R) Gold 6248 2.50 GHz 150W 20C 27.50MB Cache DDR4 2933MHz (PID - UCS-CPU-I6248) |
| Memory | 12 x Samsung 64GB DDR4-2933-MHz LRDIMM/4Rx4/1.2v (PID - UCS-ML-X64G4RT-H) |
| Cisco UCS VIC 1440 | Cisco UCS VIC 1440 Blade MLOM (PID - UCSB-MLOM-40G-04) |
| Cisco UCS Port Expander Card | Port Expander Card for Cisco UCS MLOM (PID - UCSB-MLOM-PT-01) |
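As a quick sizing aid, the Python arithmetic below totals the physical cores and memory per blade and across the eight RAC nodes, using only the values in Table 2. It is a sketch that counts physical cores; it does not account for Hyper-Threading or for memory reserved by the operating system.

```python
"""Sketch: per-node and cluster totals for the B200 M5 configuration in Table 2."""

NODES = 8                            # 8-node Oracle RAC cluster
SOCKETS, CORES_PER_SOCKET = 2, 20    # 2 x Intel Xeon Gold 6248 (20 cores each)
DIMMS, GB_PER_DIMM = 12, 64          # 12 x 64 GB DDR4-2933 LRDIMM


def node_totals() -> tuple:
    """Return (physical cores, memory in GB) for one blade."""
    return SOCKETS * CORES_PER_SOCKET, DIMMS * GB_PER_DIMM


def cluster_totals(nodes: int = NODES) -> tuple:
    """Return (physical cores, memory in GB) across all RAC nodes."""
    cores, mem_gb = node_totals()
    return nodes * cores, nodes * mem_gb


if __name__ == "__main__":
    print("Per node (cores, GB):", node_totals())     # (40, 768)
    print("Cluster  (cores, GB):", cluster_totals())  # (320, 6144)
```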

In this solution, we configured four vNICs (virtual network interface cards) on each host to carry all the network traffic.

Table 3 vNICs Configured on Each Host

| vNIC | Details |
|---|---|
| vNIC (eth0) | Management and Public Network Traffic Interface for Oracle RAC. MTU = 1500 |
| vNIC (eth1) | Private Server-to-Server Network (Cache Fusion) Traffic Interface for Oracle RAC. MTU = 9000 |
| vNIC (eth2) | RoCE v2 Database IO Traffic to Pure Storage. MTU = 9000 |
| vNIC (eth3) | RoCE v2 Database IO Traffic to Pure Storage. MTU = 9000 |

For this solution, we configured four VLANs to carry public, private, and storage network traffic, as listed in Table 4.

Table 4 VLAN Details

| Name | ID | Description |
|---|---|---|
| Default VLAN | 1 | Native VLAN |
| Public VLAN | 135 | VLAN for Public Network Traffic |
| Private VLAN | 10 | VLAN for Private Network Traffic |
| Storage VLAN - A | 11 | VLAN for RoCE Storage Traffic |
| Storage VLAN - B | 12 | VLAN for RoCE Storage Traffic |

Table 5 Pure Storage FlashArray//X90 R2

| Item | Pure Storage FlashArray//X90 R2 Details |
|---|---|
| FlashArray//X90 R2 | Pure Storage FlashArray//X90 R2 |
| Capacity | 28 TB DirectFlash Storage |
| Connectivity | 4 x 100Gb NVMe/RoCE (2 host I/O cards per storage controller); 2 x 1Gb management ports |
| Physical | 3 RU |

Table 6 Software and Firmware

| Software and Firmware | Version/Release |
|---|---|
| Cisco UCS Manager System | 4.1(1a) |
| Cisco UCS Infrastructure Software Bundle | 4.1(1a) |
| Cisco UCS Infrastructure Software Bundle for the Cisco UCS 6332 Fabric Interconnects | ucs-6300-k9-bundle-infra.4.1.1a.A.bin |
| Cisco UCS B-Series Blade Server Software Bundle | ucs-k9-bundle-b-series.4.1.1a.B.bin |
| Cisco UCS Adapter VIC 1440 | 5.1(1e) |
| Cisco eNIC (modinfo enic) (Cisco VIC Ethernet NIC Driver) | 4.0.0.6-802.21 (kmod-enic-4.0.0.6-802.21.rhel7u6.x86_64.rpm) |
| Cisco eNIC_rdma (modinfo enic_rdma) (Cisco VIC Ethernet NIC RDMA Driver) | 1.0.0.6-802.21 (kmod-enic_rdma-1.0.0.6-802.21.rhel7u6.x86_64.rpm) |
| Red Hat Enterprise Linux Server release 7.6 | Linux 3.10.0-957.27.2.el7.x86_64 |
| Pure Storage Purity | Purity//FA 5.3.2 |
| Cisco Nexus 9336C-FX2 NX-OS Version | 9.3(2) |
| Oracle Database 19c (19.3) for Linux x86-64 Enterprise Edition | 19.3.0.0.0 |
| Oracle Database 19c Grid Infrastructure (19.3) for Linux x86-64 | 19.3.0.0.0 |
| SLOB | 2.4.2 |
| SwingBench | 2.6.1124 |

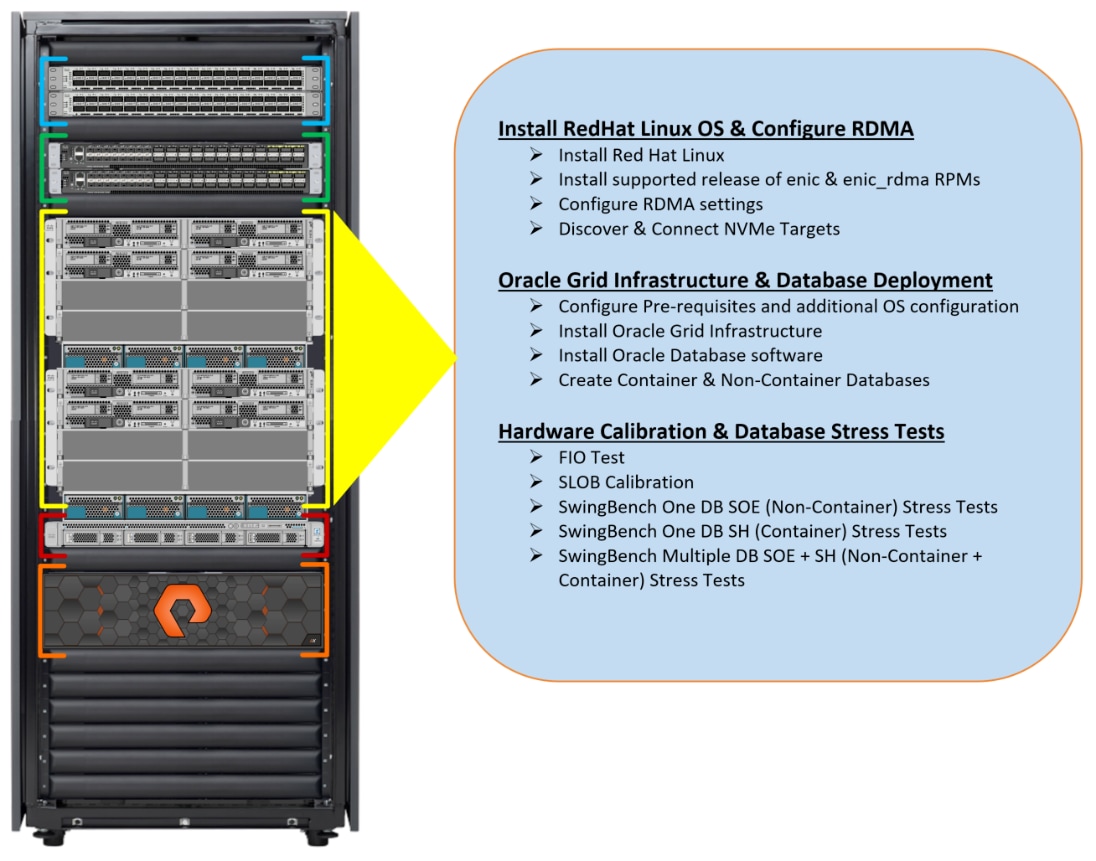

Figure 3 shows a high-level overview of the steps for configuring the Nexus switches, Fabric Interconnects, Pure Storage FlashArray, and blade servers to deploy and test Oracle RAC Database 19c on the FlashStack reference architecture. The following sections detail the configuration of this solution.

Cisco Nexus Switch Configuration

This section details the high-level steps to configure Cisco Nexus Switches as shown in Figure 4.

Initial Setup


On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning.

To set up the initial configuration for the Cisco Nexus A switch on <nexus-A-hostname>, follow these steps:

```
Abort Power on Auto Provisioning and continue with normal setup? (yes/no) [n]: yes
Do you want to enforce secure password standard (yes/no) [y]: Enter
Enter the password for "admin": <password>
Confirm the password for "admin": <password>
Would you like to enter the basic configuration dialog (yes/no): yes
Create another login account (yes/no) [n]: Enter
Configure read-only SNMP community string (yes/no) [n]: Enter
Configure read-write SNMP community string (yes/no) [n]: Enter
Enter the switch name: <nexus-A-hostname>
Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter
Mgmt0 IPv4 address: <nexus-A-mgmt0-ip>
Mgmt0 IPv4 netmask: <nexus-A-mgmt0-netmask>
Configure the default gateway? (yes/no) [y]: Enter
IPv4 address of the default gateway: <nexus-A-mgmt0-gw>
Configure advanced IP options? (yes/no) [n]: Enter
Enable the telnet service? (yes/no) [n]: Enter
Enable the ssh service? (yes/no) [y]: Enter
Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter
Number of rsa key bits <1024-2048> [1024]: Enter
Configure the ntp server? (yes/no) [n]: y
NTP server IPv4 address: <global-ntp-server-ip>
Configure default interface layer (L3/L2) [L3]: L2
Configure default switchport interface state (shut/noshut) [noshut]: Enter
Configure CoPP system profile (strict/moderate/lenient/dense/skip) [strict]: Enter
Would you like to edit the configuration? (yes/no) [n]: Enter
```

Nexus B Switch

Similarly, follow the steps above for the Nexus A switch to set up the initial configuration for Cisco Nexus B, changing the switch hostname and management address as appropriate.

Global Settings

To set global configuration, follow these steps on both Nexus switches.

1. Log in to Nexus Switch A as the admin user and run the following commands to set the global configuration on Switch A.

```
configure terminal
feature interface-vlan
feature hsrp
feature lacp
feature vpc
feature lldp
feature udld
spanning-tree port type edge bpduguard default
spanning-tree port type network default
port-channel load-balance src-dst l4port
policy-map type network-qos jumbo
  class type network-qos class-default
    mtu 9216
policy-map type network-qos RoCE-UCS-NQ-Policy
  class type network-qos c-8q-nq3
    pause pfc-cos 3
    mtu 9216
  class type network-qos c-8q-nq5
    pause pfc-cos 5
    mtu 9216
vrf context management
  ip route 0.0.0.0/0 10.29.135.1
system qos
  service-policy type network-qos RoCE-UCS-NQ-Policy
class-map type qos match-all class-pure
  match dscp 46
class-map type qos match-all class-platinum
  match cos 5
class-map type qos match-all class-best-effort
  match cos 0
policy-map type qos policy-pure
  description qos policy for pure ports
  class class-pure
    set qos-group 5
    set cos 5
    set dscp 46
policy-map type qos system_qos_policy
  description qos policy for FI to Nexus ports
  class class-platinum
    set qos-group 5
    set dlb-disable
    set dscp 46
    set cos 5
  class class-best-effort
    set qos-group 0
copy running-config startup-config
```

2. Log in to Nexus Switch B as the admin user and run the same commands (above) to set the global configuration on Switch B.


Make sure to run copy run start to save the configuration on each switch after the configuration is completed.
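The QoS portion of the global configuration above is easier to reason about as a decision table. The Python sketch below is a simplified model of its intent, not of NX-OS internals. It assumes that policy-pure is applied to Pure-facing ports and system_qos_policy to FI-facing ports (as their descriptions suggest), that unmatched traffic falls into class-default (qos-group 0), and that the c-8q-nq3 and c-8q-nq5 network-qos classes correspond to qos-groups 3 and 5.

```python
"""Simplified model of the QoS classification configured above.

Assumptions: "pure" ports carry policy-pure and "fi" ports carry
system_qos_policy; unmatched traffic lands in class-default (qos-group 0);
the c-8q-nq3/c-8q-nq5 network-qos classes correspond to qos-groups 3 and 5.
"""
from dataclasses import dataclass

NO_DROP_QOS_GROUPS = {3, 5}   # classes with 'pause pfc-cos' in RoCE-UCS-NQ-Policy


@dataclass(frozen=True)
class Marking:
    qos_group: int
    cos: int
    dscp: int


def classify(port_role: str, cos: int, dscp: int) -> Marking:
    """Return the marking a frame receives on ingress to the Nexus switch."""
    if port_role == "pure" and dscp == 46:   # class-pure: match dscp 46
        return Marking(qos_group=5, cos=5, dscp=46)
    if port_role == "fi" and cos == 5:       # class-platinum: match cos 5
        return Marking(qos_group=5, cos=5, dscp=46)
    # class-best-effort (cos 0 on FI ports) and unmatched traffic: qos-group 0
    return Marking(qos_group=0, cos=cos, dscp=dscp)


def is_lossless(marking: Marking) -> bool:
    """True if the frame lands in a PFC (no-drop) class."""
    return marking.qos_group in NO_DROP_QOS_GROUPS


if __name__ == "__main__":
    samples = {
        "RoCE from array": classify("pure", cos=0, dscp=46),
        "RoCE from UCS":   classify("fi", cos=5, dscp=0),
        "Public from UCS": classify("fi", cos=0, dscp=0),
    }
    for label, marking in samples.items():
        print(f"{label}: {marking} lossless={is_lossless(marking)}")
```

The point the model makes is that RoCE traffic entering from either side ends up with the same marking (qos-group 5, CoS 5, DSCP 46), so it receives PFC protection end to end, while ordinary traffic stays in the default, drop-eligible class.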

VLANs Configuration

To create the necessary virtual local area networks (VLANs), follow these steps on both the Nexus switches.

1. Log into Nexus Switch A as admin user.

2. Create VLAN 135 for public network traffic, VLAN 10 for private network traffic, and VLANs 11 and 12 for storage network traffic. The same commands also configure Ethernet1/31 as the uplink access port in VLAN 135.

```
configure terminal
vlan 10
  name Oracle_RAC_Private_Network
  no shutdown
vlan 11
  name RoCE_Traffic_FI_A
  no shutdown
vlan 12
  name RoCE_Traffic_FI_B
  no shutdown
vlan 135
  name Oracle_RAC_Public_Network
  no shutdown
interface Ethernet1/31
  description connect to uplink switch
  switchport access vlan 135
  speed 1000
copy running-config startup-config
```

3. Log in to Nexus Switch B as the admin user and create VLAN 135 for public network traffic, VLAN 10 for private network traffic, and VLANs 11 and 12 for storage network traffic.


Make sure to run copy run start to save the configuration on each switch after the configuration is completed.

Virtual Port Channel (vPC) Summary for Network Traffic

A port channel bundles individual links into a channel group to create a single logical link that provides the aggregate bandwidth of up to eight physical links. If a member port within a port channel fails, the traffic previously carried over the failed link switches to the remaining member ports within the port channel. Port channeling also load balances traffic across these physical interfaces. The port channel stays operational as long as at least one physical interface within the port channel is operational. Using port channels, Cisco NX-OS provides wider bandwidth, redundancy, and load balancing across the channels.
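The following sketch illustrates member selection and failover by hashing each flow onto one active member link and recomputing the mapping after a link fails. It is a simplified, hypothetical model. pick_member() and the example flows are illustrative, and CRC32 stands in for the platform-specific hash that NX-OS actually uses.

```python
import zlib
from typing import List, Tuple

# (source IP, destination IP, source L4 port, destination L4 port)
Flow = Tuple[str, str, int, int]

def pick_member(flow: Flow, members: List[str]) -> str:
    """Map a flow to one operational member link of a port channel.

    Illustrative only: NX-OS uses its own platform hash (tunable with the
    "port-channel load-balance" command); CRC32 is a stand-in here.
    """
    if not members:
        raise RuntimeError("port channel is down: no operational members")
    key = "|".join(str(field) for field in flow).encode()
    return members[zlib.crc32(key) % len(members)]

if __name__ == "__main__":
    peer_link = ["Eth1/1", "Eth1/2", "Eth1/3", "Eth1/4"]
    # Hypothetical flows: same endpoints, different source ports
    flows = [("10.29.135.61", "10.29.135.62", 50000 + i, 1521) for i in range(8)]

    before = {f: pick_member(f, peer_link) for f in flows}
    # Simulate Eth1/2 failing: its flows move to the remaining members
    survivors = [link for link in peer_link if link != "Eth1/2"]
    after = {f: pick_member(f, survivors) for f in flows}

    for f in flows:
        print(f[2], before[f], "->", after[f])
```

Because a given flow always hashes to the same link, packets within a flow stay in order, but a single flow never exceeds the bandwidth of one member link. This simple modulo scheme also remaps some flows that were not on the failed link.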

In this Cisco Nexus switch topology, the vPC feature is enabled with a single vPC domain to provide high availability (HA), faster convergence in the event of a failure, and greater throughput. The vPC domain and the corresponding vPC names and IDs for the Oracle Database servers are shown in Table 7.

| vPC Domain | vPC Name | vPC ID |
|---|---|---|
| 1 | Peer-Link | 1 |
| 1 | vPC FI-A | 51 |
| 1 | vPC FI-B | 52 |

As listed in Table 7, a single vPC domain with Domain ID 1 is created across the two Nexus switches to define the vPC members that carry specific VLAN network traffic. In this topology, we defined a total of three vPCs.

vPC ID 1 is defined as the peer link between the two Nexus switches. vPC IDs 51 and 52 are configured for the two Cisco UCS fabric interconnects. Follow these steps to create this configuration.

A port channel bundles up to eight individual interfaces into a group to provide increased bandwidth and redundancy.

Create vPC Peer-Link

For vPC 1 (the peer link), we used interfaces Ethernet1/1 through Ethernet1/4. You may choose an appropriate number of ports based on your needs. To create the necessary port channels between the devices, follow these steps on both Nexus switches:

1. Log into Nexus Switch A as admin user and run the following commands:

configure terminal
vpc domain 1
  peer-keepalive destination 10.29.135.104 source 10.29.135.103
  auto-recovery
interface port-channel1
  description vPC peer-link
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type network
  service-policy type qos input system_qos_policy
  vpc peer-link
interface Ethernet1/1
  description Peer link connected to N9K-B-Eth1/1
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  channel-group 1 mode active
interface Ethernet1/2
  description Peer link connected to N9K-B-Eth1/2
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  channel-group 1 mode active
interface Ethernet1/3
  description Peer link connected to N9K-B-Eth1/3
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  channel-group 1 mode active
interface Ethernet1/4
  description Peer link connected to N9K-B-Eth1/4
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  channel-group 1 mode active
copy running-config startup-config

2. Log into Nexus Switch B as admin user and repeat the above steps to configure the second Nexus switch. Make sure to change the interface descriptions accordingly.

Make sure to change peer-keepalive destination and source IP address appropriately for Nexus Switch A and B.
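The same allowed-VLAN list (1,10-12,135) is typed on the peer link and on every fabric interconnect-facing port of both switches. A quick consistency check can catch typos before they cause a vPC inconsistency. The following Python sketch uses a hypothetical expand_vlans() helper (not a Cisco tool) to expand the NX-OS range syntax and confirm that VLANs 10, 11, 12, and 135 are all allowed.

```python
def expand_vlans(spec: str) -> set[int]:
    """Expand an NX-OS style VLAN list such as '1,10-12,135' into a set of IDs."""
    vlans: set[int] = set()
    for part in spec.replace(" ", "").split(","):
        if not part:
            continue
        if "-" in part:
            low, high = (int(x) for x in part.split("-", 1))
            if low > high:
                raise ValueError(f"descending range: {part}")
            vlans.update(range(low, high + 1))
        else:
            vlans.add(int(part))
    bad = sorted(v for v in vlans if not 1 <= v <= 4094)
    if bad:
        raise ValueError(f"VLAN IDs outside 1-4094: {bad}")
    return vlans

# VLANs created in the VLANs Configuration section
REQUIRED = {10, 11, 12, 135}

if __name__ == "__main__":
    allowed = expand_vlans("1,10-12,135")
    missing = REQUIRED - allowed
    print("missing VLANs:", sorted(missing) or "none")
```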

Create vPC between Nexus Switches and Fabric Interconnects

This section describes how to create and configure port channels 51 and 52 for storage and network traffic between the Nexus switches and the fabric interconnects.

Table 8 lists the port channels configured on the fabric interconnects and Nexus switches for this solution.

Table 8 Nexus Switch and Fabric Interconnect Switch Connectivity

| vPC Description | vPC ID | Fabric Interconnect Ports | Nexus Switch Ports | Allowed VLANs |
|---|---|---|---|---|
| Port Channel FI-A | 51 | FI-A Port 1/31 | N9K-A Port 1/9 | 135, 10, 11, 12 (Note: VLANs 10 and 12 are needed for failover) |
| | | FI-A Port 1/32 | N9K-A Port 1/10 | |
| | | FI-A Port 1/33 | N9K-B Port 1/9 | |
| | | FI-A Port 1/34 | N9K-B Port 1/10 | |
| Port Channel FI-B | 52 | FI-B Port 1/31 | N9K-A Port 1/11 | 135, 10, 11, 12 (Note: VLANs 11 and 135 are needed for failover) |
| | | FI-B Port 1/32 | N9K-A Port 1/12 | |
| | | FI-B Port 1/33 | N9K-B Port 1/11 | |
| | | FI-B Port 1/34 | N9K-B Port 1/12 | |
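Table 8 spreads each fabric interconnect's four uplinks evenly across both Nexus switches. The following Python sketch counts how many member links each vPC keeps when one Nexus switch fails. surviving_members() is a hypothetical helper, and the link data is taken from Table 8.

```python
from collections import Counter

# Member links from Table 8: (port channel ID, FI port, Nexus switch, Nexus port)
LINKS = [
    (51, "FI-A 1/31", "N9K-A", "1/9"),
    (51, "FI-A 1/32", "N9K-A", "1/10"),
    (51, "FI-A 1/33", "N9K-B", "1/9"),
    (51, "FI-A 1/34", "N9K-B", "1/10"),
    (52, "FI-B 1/31", "N9K-A", "1/11"),
    (52, "FI-B 1/32", "N9K-A", "1/12"),
    (52, "FI-B 1/33", "N9K-B", "1/11"),
    (52, "FI-B 1/34", "N9K-B", "1/12"),
]

def surviving_members(failed_switch: str) -> Counter:
    """Count the member links per port channel that stay up if one switch fails."""
    return Counter(pc for pc, _fi_port, switch, _nx_port in LINKS
                   if switch != failed_switch)

if __name__ == "__main__":
    for switch in ("N9K-A", "N9K-B"):
        print(f"{switch} down:", dict(surviving_members(switch)))
        # prints {51: 2, 52: 2} for either switch
```

Each port channel retains two of its four links in either failure case, so both fabric interconnects keep an active uplink path while one Nexus switch is down.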

To configure port channels on Nexus Switches, follow these steps:

1. Log into Nexus Switch A as admin user and run the following commands:

configure terminal
interface port-channel51
  description Port-Channel FI-A
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  service-policy type qos input system_qos_policy
  vpc 51
interface port-channel52
  description Port-Channel FI-B
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  service-policy type qos input system_qos_policy
  vpc 52
interface Ethernet1/9
  description Connected to Fabric-Interconnect-A-31
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 51 mode active
interface Ethernet1/10
  description Connected to Fabric-Interconnect-A-32
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 51 mode active
interface Ethernet1/11
  description Connected to Fabric-Interconnect-B-31
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 52 mode active
interface Ethernet1/12
  description Connected to Fabric-Interconnect-B-32
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 52 mode active
copy running-config startup-config

2. Log into Nexus Switch B as admin user and run the following commands to configure the second Nexus switch:

configure terminal
interface port-channel51
  description Port-Channel FI-A
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  service-policy type qos input system_qos_policy
  vpc 51
interface port-channel52
  description Port-Channel FI-B
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  service-policy type qos input system_qos_policy
  vpc 52
interface Ethernet1/9
  description Connected to Fabric-Interconnect-A-33
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 51 mode active
interface Ethernet1/10
  description Connected to Fabric-Interconnect-A-34
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 51 mode active
interface Ethernet1/11
  description Connected to Fabric-Interconnect-B-33
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 52 mode active
interface Ethernet1/12
  description Connected to Fabric-Interconnect-B-34
  switchport mode trunk
  switchport trunk allowed vlan 1,10-12,135
  spanning-tree port type edge trunk
  mtu 9216
  channel-group 52 mode active
copy running-config startup-config

Verify all vPC Status

To verify the status of all vPCs, follow these steps:

1. Log into each Nexus switch as admin user and verify the vPC status and the port channel summary (for example, with the show vpc brief and show port-channel summary commands).

Configure Nexus Switch to Storage Ports

This section describes how to configure the Nexus switch ports that connect to the storage array.

Table 9 lists the port connectivity between the Nexus Switches and Pure Storage FlashArray//X90 R2.

Table 9 Storage and Nexus Switch Connectivity

| Nexus Ports | Pure Storage Ports | Allowed VLANs | Pure Storage Interface IP Configured |
|---|---|---|---|
| N9K-A Port 1/23 | Storage Controller CT0.Eth14 | 11 | 200.200.11.3 |
| N9K-A Port 1/24 | Storage Controller CT1.Eth14 | 12 | 200.200.12.4 |
| N9K-B Port 1/23 | Storage Controller CT0.Eth15 | 12 | 200.200.12.3 |
| N9K-B Port 1/24 | Storage Controller CT1.Eth15 | 11 | 200.200.11.4 |
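The storage port layout in Table 9 follows a pattern. Each storage VLAN reaches both controllers (CT0 and CT1) and both Nexus switches, and each array IP address sits in the subnet that matches its VLAN. A minimal Python sketch of that check follows. check_storage_ports() is a hypothetical helper, and the /24 subnet per VLAN is an assumption because Table 9 does not list the netmask.

```python
import ipaddress

# Rows from Table 9: (Nexus switch port, array port, VLAN, array IP address)
STORAGE_PORTS = [
    ("N9K-A 1/23", "CT0.Eth14", 11, "200.200.11.3"),
    ("N9K-A 1/24", "CT1.Eth14", 12, "200.200.12.4"),
    ("N9K-B 1/23", "CT0.Eth15", 12, "200.200.12.3"),
    ("N9K-B 1/24", "CT1.Eth15", 11, "200.200.11.4"),
]

# Assumption: one /24 subnet per storage VLAN (netmask not listed in Table 9)
SUBNETS = {
    11: ipaddress.ip_network("200.200.11.0/24"),
    12: ipaddress.ip_network("200.200.12.0/24"),
}

def check_storage_ports(rows):
    """Return a list of layout problems; an empty list means the layout is consistent."""
    problems = []
    for _nexus_port, array_port, vlan, ip in rows:
        if ipaddress.ip_address(ip) not in SUBNETS[vlan]:
            problems.append(f"{array_port}: {ip} is not in {SUBNETS[vlan]}")
    for vlan in SUBNETS:
        members = [(n, a) for n, a, v, _ip in rows if v == vlan]
        controllers = {a.split(".")[0] for _n, a in members}
        switches = {n.split()[0] for n, _a in members}
        if controllers != {"CT0", "CT1"}:
            problems.append(f"VLAN {vlan} does not reach both controllers")
        if switches != {"N9K-A", "N9K-B"}:
            problems.append(f"VLAN {vlan} is not present on both Nexus switches")
    return problems

if __name__ == "__main__":
    print(check_storage_ports(STORAGE_PORTS) or "storage port layout is consistent")
```

Crossing the VLANs in this way (CT0.Eth14 and CT1.Eth15 on VLAN 11, CT1.Eth14 and CT0.Eth15 on VLAN 12) means that the loss of one switch or one controller still leaves a path on each storage VLAN.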

To configure storage ports on Nexus Switches, follow these steps:

1. Log into Nexus Switch A as admin user and run the following commands:

configure terminal
interface Ethernet1/23
  description Connected to Pure-Storage-CT0.Eth14
  switchport access vlan 11
  priority-flow-control mode on
  spanning-tree port type edge
  mtu 9216
  service-policy type qos input policy-pure
interface Ethernet1/24
  description Connected to Pure-Storage-CT1.Eth14
  switchport access vlan 12
  priority-flow-control mode on
  spanning-tree port type edge
  mtu 9216
  service-policy type qos input policy-pure
copy running-config startup-config

2. Log into Nexus Switch B as admin user and run the following commands to configure the second Nexus switch:

configure terminal
interface Ethernet1/23
  description Connected to Pure-Storage-CT0.Eth15
  switchport access vlan 12
  priority-flow-control mode on
  spanning-tree port type edge
  mtu 9216
  service-policy type qos input policy-pure
interface Ethernet1/24
  description Connected to Pure-Storage-CT1.Eth15
  switchport access vlan 11
  priority-flow-control mode on
  spanning-tree port type edge
  mtu 9216
  service-policy type qos input policy-pure
copy running-config startup-config

3. Verify the connectivity of all Nexus switch ports as shown below:

Configure Pure Storage FlashArray//X90 R2

This section describes the high-level steps to configure Pure Storage FlashArray//X90 R2 used in this solution.

For this solution, the Pure Storage FlashArray was loaded with Purity//FA version 5.3.2, which supports NVMe/RoCE. Alternatively, you can use the newer Purity//FA version 5.3.5, which Pure Storage recommends.

Pure Storage support must configure the NVMe/RoCE services on the FlashArray. The hosts were redundantly connected to the storage controllers through 4 x 100Gb connections (2 x 100Gb per storage controller module) from the redundant Cisco Nexus 9K switches.

The FlashArray network settings were configured with three subnets across three VLANs. Storage interfaces CT0.Eth0 and CT1.Eth0 were configured for storage management access on VLAN 135. Storage interfaces CT0.Eth14, CT0.Eth15, CT1.Eth14, and CT1.Eth15 were configured to carry RoCE storage network traffic on VLAN 11 and VLAN 12 so that all the Oracle RAC nodes can access the database storage.

To configure network settings into Pure Storage FlashArray, follow these steps:

1. Open a web browser and navigate to the Pure Storage FlashArray//X90 R2 Cluster address.

2. Enter the Username and Password for the storage.

3. From the Pure Storage Dashboard, go to Settings > Network. Click Edit Interface.

4. Enter Address, Netmask, Gateway and MTU as shown above and click Save to configure the interface.

The configured network interfaces are shown below:

For this solution, you will use these interface addresses and configure RoCE network traffic to run Oracle RAC databases. You will also configure Volumes, Hosts and Host Groups as discussed in the following sections when you are ready to deploy Oracle RAC Database software.

Cisco UCS Configuration

This section details the Cisco UCS configuration that was done as part of the infrastructure buildout. The racking, power, and installation of the chassis are described in the installation guide (see https://www.cisco.com/c/en/us/support/servers-unified-computing/ucs-manager/products-installation-guides-list.html). It is beyond the scope of this document to explain the Cisco UCS infrastructure setup and connectivity. The documentation guides and examples are available here: https://www.cisco.com/c/en/us/support/servers-unified-computing/ucs-manager/products-installation-and-configuration-guides-list.html.

This document details all the tasks to configure Cisco UCS but only some screenshots are included.

Using logical servers that are disassociated from the physical hardware removes many limiting constraints around how servers are provisioned. Cisco UCS Service Profiles contain values for a server's property settings, including virtual network interface cards (vNICs), MAC addresses, boot policies, firmware policies, fabric connectivity, external management, and HA information. The service profiles represent all the attributes of a logical server in the Cisco UCS model. Because these settings are abstracted from the physical server into a Cisco Service Profile, the Service Profile can be deployed to any physical compute hardware within the Cisco UCS domain, and it can be migrated from one physical server to another at any time. In addition, Cisco is the only hardware provider to offer a truly unified management platform, with Cisco UCS Service Profiles and hardware abstraction capabilities extending to both blade and rack servers.

The following are the high-level steps involved for a Cisco UCS configuration:

1. Perform Initial Setup of Fabric Interconnects for a Cluster Setup.

2. Upgrade UCS Manager Software to Version 4.1.1a

3. Synchronize Cisco UCS to NTP.

4. Configure Fabric Interconnects for Chassis and Blade Discovery:

a. Configure Global Policies

b. Configure Server Ports

5. Configure LAN:

a. Configure Ethernet LAN Uplink Ports

b. Create Uplink Port Channels to Nexus Switches

c. Configure VLANs

6. Configure IP, UUID, Server and MAC Pools:

a. IP Pool Creation

b. UUID Suffix Pool Creation

c. Server Pool Creation

d. MAC Pool Creation

7. Set Jumbo Frames in Both Fabric Interconnects.

8. Create QoS Policy for RoCE.

9. Configure Server BIOS Policy.

10. Create Adapter Policy:

a. Create Adapter Policy for Public and Private Network Interfaces

b. Create Adapter Policy for Storage Network RoCE Interfaces

11. Configure Update Default Maintenance Policy.

12. Configure vNIC Template:

a. Create Public vNIC Template

b. Create Private vNIC Template

c. Create Storage vNIC Template

13. Create Server Boot Policy for Local Boot.

The details for each step are provided in the following sections.

Perform Initial Setup of Fabric Interconnects for a Cluster Setup

This section provides detailed procedures for configuring the Cisco Unified Computing System (Cisco UCS) for use in a FlashStack environment. The steps are necessary to provision the Cisco UCS B-Series and C-Series servers and should be followed precisely to avoid improper configuration.

Configure Fabric Interconnect A and Fabric Interconnect B

To configure the UCS Fabric Interconnects, follow these steps.

1. Verify the following physical connections on the fabric interconnect:

a. The management Ethernet port (mgmt0) is connected to an external hub, switch, or router

b. The L1 ports on both fabric interconnects are directly connected to each other

c. The L2 ports on both fabric interconnects are directly connected to each other

2. Connect to the console port on the first Fabric Interconnect.

3. Review the settings printed to the console. Answer yes to apply and save the configuration.

4. Wait for the login prompt to make sure the configuration has been saved to Fabric Interconnect A.

5. Connect to the console port on the second Fabric Interconnect.

6. Review the settings printed to the console. Answer yes to apply and save the configuration.

7. Wait for the login prompt to make sure the configuration has been saved to Fabric Interconnect B.

Log into Cisco UCS Manager

To log into the Cisco Unified Computing System (Cisco UCS) environment, follow these steps:

1. Open a web browser and navigate to the Cisco UCS fabric interconnect cluster address.

You may need to wait at least 5 minutes after configuring the second fabric interconnect for Cisco UCS Manager to come up.

2. Click the Launch UCS Manager link under HTML to launch Cisco UCS Manager.

3. If prompted to accept security certificates, accept as necessary.

4. When prompted, enter admin as the username and enter the administrative password.

5. Click Login to log into Cisco UCS Manager.

Configure Cisco UCS Call Home

Cisco highly recommends configuring Call Home in Cisco UCS Manager, because it accelerates the resolution of support cases. To configure Call Home, follow these steps:

1. In Cisco UCS Manager, click Admin.

2. Select All > Communication Management > Call Home.

3. Change the State to On.

4. Fill in all the fields according to your management preferences, click Save Changes, and then click OK to complete the Call Home configuration.

Upgrade Cisco UCS Manager Software to Version 4.1

This solution was configured on Cisco UCS 4.1 software release. To upgrade the Cisco UCS Manager software and the Cisco UCS Fabric Interconnect software to version 4.1, go to https://www.cisco.com/c/en/us/support/servers-unified-computing/ucs-manager/products-installation-guides-list.html

Synchronize Cisco UCS Manager to NTP

To synchronize the Cisco UCS Manager environment to the NTP server, follow these steps:

1. In Cisco UCS Manager, in the navigation pane, click the Admin tab.

2. Select All > Time zone Management.

3. In the Properties pane, select the appropriate time zone in the Time zone menu.

4. Click Save Changes and then click OK.

5. Click Add NTP Server.

6. Enter the NTP server IP address and click OK.

7. Click OK to finish

Configure Fabric Interconnect for Chassis and Blade Discovery

The Cisco UCS 6332-16UP Fabric Interconnects are configured as a redundant pair to provide resiliency in case of failures. The first step is to establish connectivity between the blade servers and the Fabric Interconnects.

Configure Global Policies

The chassis discovery policy determines how the system reacts when you add a new chassis. We recommend using the platform max value as shown. Using platform max helps ensure that Cisco UCS Manager uses the maximum number of IOM uplinks available.

To configure global policies, follow these steps:

1. Go to Equipment > Policies (right pane) > Global Policies > Chassis/FEX Discovery Policies. As shown in the screenshot below, select Action as Platform Max from the drop-down list and set Link Grouping to Port Channel.

2. Click Save Changes.

3. Click OK.

The difference between Discrete mode and Port Channel mode is shown below:

Configure Server Ports

To configure the server ports that initiate chassis and blade discovery, follow these steps:

1. Go to Equipment > Fabric Interconnects > Fabric Interconnect A > Fixed Module > Ethernet Ports.

2. Select the ports (for this solution ports are 17-24) which are connected to the Cisco IO Modules of the two Cisco UCS B-Series 5108 Chassis.

3. Right-click and select Configure as Server Port.

4. Click Yes to confirm and click OK.

5. Repeat steps 1-4 for Fabric Interconnect B.

6. After configuring Server Ports, acknowledge both the Chassis. Go to Equipment > Chassis > Chassis 1 > General > Actions > select Acknowledge Chassis. Similarly, acknowledge the chassis 2.

7. After acknowledging both the chassis, Re-acknowledge all the servers placed in the chassis. Go to Equipment > Chassis 1 > Servers > Server 1 > General > Actions > select Server Maintenance > select option Re-acknowledge and click OK. Similarly, repeat the process to Re-acknowledge all the eight Servers.

8. Once the acknowledgement of the servers is complete, verify the internal LAN port channel. Go to LAN > Internal LAN > Internal Fabric A > Port Channels as shown below.

9. Verify the same for Internal Fabric B.

The last 6 ports of the Cisco UCS 6332 and Cisco UCS 6332-16UP FIs will only work with optical based QSFP transceivers and AOC cables, so they can be better utilized as uplinks to upstream resources that might be optical only.

Configure LAN

Configure Ethernet Uplink ports as explained in the following sections.

Configure Ethernet LAN Uplinks Ports

To configure network ports used to uplink the Fabric Interconnects to the Nexus switches, follow these steps:

1. In Cisco UCS Manager, in the navigation pane, click the Equipment tab.

2. Select Equipment > Fabric Interconnects > Fabric Interconnect A > Fixed Module.

3. Expand Ethernet Ports.

4. Select ports (for this solution ports are 31-34) that are connected to the Nexus switches, right-click them, and select Configure as Network Port.

5. Click Yes to confirm ports and click OK.

6. Verify the Ports connected to Nexus upstream switches are now configured as network ports.

7. Repeat steps 1-6 for Fabric Interconnect B. The screenshot shows the network uplink ports for Fabric A.

Now you have created four uplink ports on each Fabric Interconnect as shown above. These ports will be used to create Virtual Port Channel in the next section.

The last 6 ports of the Cisco UCS 6332 and UCS 6332-16UP FIs will only work with optical based QSFP transceivers and AOC cables, so they can be better utilized as uplinks to upstream resources that might be optical only.

Create Uplink Port Channels to Nexus Switches

In this procedure, two port channels were created: one from Fabric A to both Nexus switches and one from Fabric B to both Nexus switches. To configure the necessary port channels in the Cisco UCS environment, follow these steps:

1. In Cisco UCS Manager, click the LAN tab in the navigation pane.

2. Under LAN > LAN Cloud, expand node Fabric A tree:

a. Right-click Port Channels.

b. Select Create Port Channel.

c. Enter 51 as the unique ID of the port channel.

d. Enter FI-A as the name of the port channel.

e. Click Next.

f. Select Ethernet ports 31-34 for the port channel.

g. Click >> to add the ports to the port channel

3. Click Finish to create the port channel and then click OK.

4. Repeat steps 1-3 for Fabric Interconnect B, substituting 52 for the port channel number and FI-B for the name. The resulting configuration should look like the screenshot shown below.

Configure VLANs

In this solution, four VLANs were created: one for private network (VLAN 10) traffic, one for public network (VLAN 135) traffic and two storage network (VLAN 11 and VLAN 12) traffic. These four VLANs will be used in the vNIC templates that are discussed later.

To configure the necessary virtual local area networks (VLANs) for the Cisco UCS environment, follow these steps:

It is very important to create all the VLANs as global across both fabric interconnects. This way, VLAN identity is maintained across the fabric interconnects in case of NIC failover.

1. In Cisco UCS Manager, click the LAN tab in the navigation pane.

2. Select LAN > LAN Cloud.

3. Right-click VLANs.

4. Select Create VLANs.

5. Enter Public_Traffic as the name of the VLAN to be used for Public Network Traffic.

6. Keep the Common/Global option selected for the scope of the VLAN.

7. Enter 135 as the VLAN ID.

8. Keep the Sharing Type as None.

9. Click OK and then click OK again.

10. Similarly, create the private network VLAN (VLAN 10) and the two storage network VLANs (VLAN 11 and VLAN 12), as shown below:

These four VLANs will be used in the vNIC templates that are discussed later.

Configure IP, UUID, Server and MAC Pools

IP Pool Creation

An IP address pool on the out-of-band management network must be created to facilitate KVM access to each compute node in the UCS domain. To create a block of IP addresses for server KVM access in the Cisco UCS environment, follow these steps:

1. In Cisco UCS Manager, in the navigation pane, click the LAN tab.

2. Select Pools > root > IP Pools and click Create IP Pool.

3. For this solution, the IP pool was named ORA19C-KVMPoo.

4. Select Sequential as the assignment order and click Next.

5. Click Add IPv4 Block.

6. Enter the starting IP address of the block and the number of IP addresses required, and the subnet and gateway information as shown below.

7. Click Next and Finish to create the IP block.
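Before creating the block, you can confirm that the sequential range fits inside the management subnet and avoids the gateway. The following Python sketch uses the standard ipaddress module. kvm_block() is a hypothetical helper. The starting address 10.29.135.150, the /24 prefix, and the block size of eight (one address per server) are example values. The gateway 10.29.135.1 is assumed from the management default route configured on the Nexus switches.

```python
import ipaddress

def kvm_block(start: str, size: int, prefix: str, gateway: str) -> list:
    """Return a sequential block of KVM addresses after basic sanity checks."""
    net = ipaddress.ip_network(prefix)
    gw = ipaddress.ip_address(gateway)
    first = ipaddress.ip_address(start)
    block = [first + offset for offset in range(size)]
    for addr in block:
        if addr not in net or addr in (net.network_address, net.broadcast_address):
            raise ValueError(f"{addr} is not a usable host address in {net}")
        if addr == gw:
            raise ValueError(f"block overlaps the default gateway {gw}")
    return block

if __name__ == "__main__":
    # Hypothetical values: 8 servers, /24 management subnet
    for addr in kvm_block("10.29.135.150", 8, "10.29.135.0/24", "10.29.135.1"):
        print(addr)
```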

UUID Suffix Pool Creation

To configure the necessary universally unique identifier (UUID) suffix pool for the Cisco UCS environment, follow these steps:

1. In Cisco UCS Manager, click the Servers tab in the navigation pane.

2. Select Pools > root.

3. Right-click UUID Suffix Pools and then select Create UUID Suffix Pool.

4. Enter ORA19C-UUID-Pool as the name of the UUID suffix pool.

5. Optional: Enter a description for the UUID pool.

6. Keep the prefix set to the Derived option, select Sequential as the Assignment Order, and click Next.

7. Click Add to add a block of UUIDs.

8. Create a starting point UUID as per your environment.

9. Specify a size for the UUID block that is sufficient to support the available blade or server resources.
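UUID suffixes are assigned sequentially from the starting value. The Python sketch below expands a block in the XXXX-XXXXXXXXXXXX form that Cisco UCS Manager is assumed to display for suffixes. uuid_suffixes() is a hypothetical helper. The start value and the block size (eight, matching the eight servers in this solution) are example values.

```python
def uuid_suffixes(start: str, size: int) -> list[str]:
    """Expand a sequential UUID suffix block such as '0000-000000000001'."""
    head, sep, tail = start.partition("-")
    if not sep or len(head) != 4 or len(tail) != 12:
        raise ValueError("expected a suffix of the form XXXX-XXXXXXXXXXXX")
    first = int(head + tail, 16)
    # The last suffix (first + size - 1) must still fit in 16 hex digits
    if size < 1 or first + size > 16 ** 16:
        raise ValueError("block size is invalid or runs past the suffix space")
    suffixes = []
    for value in range(first, first + size):
        digits = f"{value:016X}"
        suffixes.append(f"{digits[:4]}-{digits[4:]}")
    return suffixes

if __name__ == "__main__":
    servers = 8  # eight Oracle RAC servers in this solution
    block = uuid_suffixes("0000-000000000001", servers)
    print(block[0], "...", block[-1])
```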

Server Pool Creation

To configure the necessary server pool for the Cisco UCS environment, follow these steps:

Consider creating unique server pools to achieve the granularity that is required in your environment.

1. In Cisco UCS Manager, click the Servers tab in the navigation pane.

2. Select Pools > root > Right-click Server Pools > Select Create Server Pool.

3. Enter ORA19C-SERVER-POOL as the name of the server pool.

4. Optional: Enter a description for the server pool then click Next.

5. Select all eight servers to be used for Oracle RAC and click > to add them to the server pool.

6. Click Finish and click OK.

MAC Pool Creation

To configure the necessary MAC address pools for the Cisco UCS environment, follow these steps:

1. In Cisco UCS Manager, click the LAN tab in the navigation pane.

2. Select Pools > root > right-click MAC Pools under the root organization.

3. Select Create MAC Pool to create the MAC address pool.

4. Enter ORA-MAC-A as the name for MAC pool.

5. Enter the seed MAC address and provide the number of MAC addresses to be provisioned.

6. Click OK and then click Finish.

7. In the confirmation message, click OK.

8. Create the remaining MAC pools and assign unique MAC address blocks as shown below:

When multiple Cisco UCS domains are adjacent, make sure that their MAC address blocks do not overlap.
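To check for overlap before you create pools in a second domain, treat each block as an integer range and test for intersection. The following Python sketch does that. The pool names, the 00:25:B5 starting addresses, and the block sizes are hypothetical examples. find_overlaps() and last_mac() are illustrative helpers, not part of Cisco UCS Manager.

```python
def mac_to_int(mac: str) -> int:
    return int(mac.replace(":", ""), 16)

def int_to_mac(value: int) -> str:
    raw = f"{value:012X}"
    return ":".join(raw[i:i + 2] for i in range(0, 12, 2))

def last_mac(start: str, size: int) -> str:
    """Last address in a block of `size` MACs beginning at `start`."""
    return int_to_mac(mac_to_int(start) + size - 1)

def find_overlaps(pools: dict) -> list:
    """Return pairs of pool names whose [start, start + size) ranges intersect."""
    ranges = {name: (mac_to_int(start), mac_to_int(start) + size)
              for name, (start, size) in pools.items()}
    names = sorted(ranges)
    clashes = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            a_lo, a_hi = ranges[a]
            b_lo, b_hi = ranges[b]
            if a_lo < b_hi and b_lo < a_hi:
                clashes.append((a, b))
    return clashes

if __name__ == "__main__":
    # Hypothetical pools in two adjacent UCS domains
    pools = {
        "domain1/ORA-MAC-A": ("00:25:B5:6A:00:00", 64),
        "domain1/ORA-MAC-B": ("00:25:B5:6B:00:00", 64),
        "domain2/ORA-MAC-A": ("00:25:B5:6A:00:20", 64),  # overlaps domain1/ORA-MAC-A
    }
    for name, (start, size) in sorted(pools.items()):
        print(name, start, "-", last_mac(start, size))
    print("overlaps:", find_overlaps(pools))
```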

Set Jumbo Frames in Both Fabric Interconnects

To configure jumbo frames and enable quality of service in the Cisco UCS fabric, follow these steps:

1. In Cisco UCS Manager, click the LAN tab in the navigation pane.

2. Select LAN > LAN Cloud > QoS System Class.

3. In the right pane, click the General tab.

4. On the Best Effort row, enter 9216 in the box under the MTU column.

5. Enable the Platinum priority class and configure it as shown below.

6. Click Save Changes in the bottom of the window.

7. Click OK.

The Platinum QoS system class is enabled in this FlashStack implementation. The Cisco UCS and Nexus switches are intentionally configured this way so that all IP traffic within the FlashStack will be treated as Platinum (CoS 5). Enabling the other QoS system classes without a comprehensive, end-to-end QoS setup in place can cause issues that are difficult to troubleshoot.

For example, by default Pure Storage controllers mark NVMe/RoCE protocol packets on all interfaces with a CoS value of 5. With the configuration on the Nexus switches in this implementation, storage packets pass through the switches and into the UCS Fabric Interconnects with CoS 5 set in the packet header.

Create QoS Policy for RoCE

To configure QoS Policy for RoCE Network traffic, follow these steps:

1. Go to LAN > Policies > root > QoS Policies, right-click, and select Create QoS Policy.

2. Name the policy ROCE and set the priority to Platinum, as shown below:

Configure Server BIOS Policy

To create a server BIOS policy for the Cisco UCS environment, follow these steps:

1. In Cisco UCS Manager, click Servers.

2. Select Policies > root.

3. Right-click BIOS Policies.

4. Select Create BIOS Policy.

5. Enter ORA19C_BIOS as the BIOS policy name

6. Select and click the newly created BIOS Policy.

7. Click the Advanced tab, leaving the Processor tab selected within the Advanced tab.

8. Set the following within the Processor tab:

- CPU Hardware Power Management: HWPM Native Mode

- CPU Performance: Enterprise

- Energy Efficient Turbo: Disabled

- IMC Interleave: Auto

- Sub NUMA Clustering: Disabled

- Package C State Limit: C0 C1 State

- Processor C State: Disabled

- Processor C1E: Disabled

- Processor C3 Report: Disabled

- Processor C6 Report: Disabled

- Processor C7 Report: Disabled

- LLC Prefetch: Disabled

- Demand Scrub: Disabled

- Patrol Scrub: Disabled

- Workload Configuration: IO Sensitive

9. Set the following within the RAS Memory tab:

- Memory RAS configuration: ADDDC Sparing

10. Click Save Changes and then click OK.

Not all of these BIOS settings might be required in your setup. Follow the steps according to your environment and requirements. The settings above were applied on the test bed where Oracle RAC was installed; validate them and change them as needed.

For more details on BIOS settings, refer to: https://www.cisco.com/c/dam/en/us/products/collateral/servers-unified-computing/ucs-b-series-blade-servers/whitepaper_c11-740098.pdf

We recommend disabling C-states in the BIOS. In addition, Oracle recommends disabling them at the OS level by modifying the GRUB entries. The OS-level settings are explained in the section Operating System Configuration.

Create Adapter Policy

In this solution, we created two adapter policies: one for the public and private network interface traffic, and one for the storage network interface RoCE traffic, as explained in the following sections.

Create Adapter Policy for Public and Private Network Interfaces

To create an Adapter Policy for the UCS environment, follow these steps:

1. In Cisco UCS Manager, click the Servers tab in the navigation pane.

2. Select Policies > root > right-click Adapter Policies.

3. Select Create Ethernet Adapter Policy.

4. Provide a name for the Ethernet adapter policy as ORA_Linux_Tuning. Change the following fields and click Save Changes:

a. Resources

i. Transmit Queues: 8

ii. Ring Size: 4096

iii. Receive Queues: 8

iv. Ring Size: 4096

v. Completion Queues: 16

vi. Interrupts: 32

b. Options

i. Receive Side Scaling (RSS): Enabled

5. Configure the adapter policy as shown below:


RSS distributes network receive processing across multiple CPUs in multiprocessor systems. It can be set to one of the following:

Disabled—Network receive processing is always handled by a single processor even if additional processors are available.

Enabled—Network receive processing is shared across processors whenever possible.
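The queue, completion-queue, and interrupt values entered above have to be consistent with one another. The following Python sketch records the ORA_Linux_Tuning values as data and flags inconsistencies. It is not a UCS Manager client, and the class and function names are hypothetical. The two sizing rules it applies (completion queues equal to transmit plus receive queues, and at least two more interrupts than completion queues) are commonly cited Cisco VIC guidelines that are treated here as assumptions; verify them against the Cisco documentation for your VIC and UCSM release.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EthAdapterPolicy:
    """Values entered in the Create Ethernet Adapter Policy dialog (hypothetical model)."""
    name: str
    tx_queues: int
    tx_ring_size: int
    rx_queues: int
    rx_ring_size: int
    completion_queues: int
    interrupts: int
    rss: bool


def sizing_warnings(p: EthAdapterPolicy) -> list[str]:
    """Return a warning for every value that breaks the assumed sizing rules."""
    warnings = []
    # Assumed rule: one completion queue per transmit queue and per receive queue.
    if p.completion_queues != p.tx_queues + p.rx_queues:
        warnings.append(
            f"{p.name}: completion queues ({p.completion_queues}) "
            f"!= TX + RX queues ({p.tx_queues + p.rx_queues})"
        )
    # Assumed rule: at least two interrupts beyond the completion queues.
    if p.interrupts < p.completion_queues + 2:
        warnings.append(f"{p.name}: interrupts ({p.interrupts}) < completion queues + 2")
    # With RSS disabled, receive processing stays on a single processor,
    # so extra receive queues bring little benefit.
    if p.rx_queues > 1 and not p.rss:
        warnings.append(f"{p.name}: multiple RX queues configured with RSS disabled")
    return warnings


ORA_LINUX_TUNING = EthAdapterPolicy(
    name="ORA_Linux_Tuning",
    tx_queues=8, tx_ring_size=4096,
    rx_queues=8, rx_ring_size=4096,
    completion_queues=16, interrupts=32, rss=True,
)

if __name__ == "__main__":
    print(sizing_warnings(ORA_LINUX_TUNING) or "no warnings")
```

With the values used in this solution (8 + 8 queues, 16 completion queues, 32 interrupts, RSS enabled), the sketch reports no warnings.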

Create Adapter Policy for Storage Network RoCE Interfaces

To create an adapter policy for the storage network RoCE traffic, follow these steps:

1. In Cisco UCS Manager, click the Servers tab in the navigation pane.

2. Select Policies > root > right-click Adapter Policies.

3. Select Create Ethernet Adapter Policy.

4. Enter ROCE_Adapter as the name of the Ethernet adapter policy. Change the following fields and click Save Changes when you are finished:

a. Resources

i. Transmit Queues: 8

ii. Ring Size: 4096

iii. Receive Queues: 8

iv. Ring Size: 4096

v. Completion Queues: 16

vi. Interrupts: 256

b. Options

i. Receive Side Scaling (RSS): Enabled

ii. RoCE: Enabled

iii. RoCE Properties:

- Version 2: Enabled

- Queue Pairs: 1024

- Memory Regions: 131072

- Resource Groups: 8

- Priority: Platinum

5. Configure the adapter policy as shown below:
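The RoCE adapter policy reuses the queue and ring sizes of ORA_Linux_Tuning and differs only in the interrupt count and the RoCE properties. The Python sketch below, with hypothetical names and plain dictionaries standing in for the two policies, lists those differences and checks the RoCE-specific values configured in this section. It does not read anything from UCS Manager; the dictionary keys are illustrative labels, not UCSM object properties.

```python
# Settings from the two adapter policies in this section, as plain dicts.
ORA_LINUX_TUNING = {
    "tx_queues": 8, "tx_ring_size": 4096,
    "rx_queues": 8, "rx_ring_size": 4096,
    "completion_queues": 16, "interrupts": 32,
    "rss": True, "roce": False,
}

# ROCE_Adapter starts from the same queue settings and overrides the rest.
ROCE_ADAPTER = {
    **ORA_LINUX_TUNING,
    "interrupts": 256,
    "roce": True,
    "roce_version_2": True,
    "queue_pairs": 1024,
    "memory_regions": 131072,
    "resource_groups": 8,
    "priority": "Platinum",
}


def policy_diff(base: dict, other: dict) -> dict:
    """Return {key: (base_value, other_value)} for every key whose value differs.

    A key missing from one policy is reported with None on that side.
    """
    keys = sorted(base.keys() | other.keys())
    return {k: (base.get(k), other.get(k)) for k in keys if base.get(k) != other.get(k)}


def roce_warnings(policy: dict) -> list:
    """Check the RoCE-specific values against what this section configures."""
    warnings = []
    if not policy.get("roce"):
        warnings.append("RoCE is not enabled")
    if not policy.get("roce_version_2"):
        warnings.append("RoCE Version 2 is not enabled (NVMe over RoCE here uses RoCEv2)")
    if policy.get("priority") != "Platinum":
        warnings.append("RoCE priority is not Platinum")
    return warnings


if __name__ == "__main__":
    for key, (base, roce) in policy_diff(ORA_LINUX_TUNING, ROCE_ADAPTER).items():
        print(f"{key:18} {base!s:>8} -> {roce}")
    print(roce_warnings(ROCE_ADAPTER) or "RoCE settings OK")
```

Building ROCE_ADAPTER from ORA_LINUX_TUNING with dictionary unpacking keeps the shared queue settings in one place, so the diff shows only what the RoCE policy actually changes.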

Update Default Maintenance Policy

To update the default Maintenance Policy, follow these steps:

1. In Cisco UCS Manager, click the Servers tab in the navigation pane.

2. Select Policies > root > Maintenance Policies > Default.

3. Change the Reboot Policy to User Ack.

4. Click Save Changes.

5. Click OK to accept the changes.

Configure vNIC Template

In this section, you create four vNIC templates, for public network, private network, and RoCE storage network traffic. You will use these vNIC templates when creating the service profile template later in this section.

To create the vNIC (virtual network interface card) templates for the Cisco UCS environment, follow these steps:

1. In Cisco UCS Manager, click the LAN tab in the navigation pane.

2. Select Policies > root > vNIC Templates, right-click vNIC Templates, and select Create vNIC Template.

3. Enter ORA-vNIC-A as the vNIC template name and keep Fabric A selected.

4. Select the Enable Failover checkbox for high availability of the vNIC.


Hardware-level failover is strongly recommended for the Oracle private interconnect network interfaces.

5. Select Template Type as Updating Template.

6. Under VLANs, select the checkboxes default and Public_Traffic and set Native-VLAN as the Public_Traffic.

7. Keep the MTU value at 1500 for public network traffic.

8. In the MAC Pool list, select ORA-MAC-A.

9. Click OK to create the vNIC template as shown below.

10. Click OK to finish.

11. Similarly, create another vNIC template for private network traffic with a few changes.

12. Enter ORA-vNIC-B as the vNIC template name for Private Network Traffic.

13. For the Fabric ID options, select Fabric B and Enable Failover.

14. Select Template Type as Updating Template.

15. Under VLANs, select the checkboxes default and Private_Traffic and set Native-VLAN as the Private_Traffic.

16. Set MTU value to 9000 and MAC Pool as ORA-MAC-B.

17. Click OK to create the vNIC template as shown below:

18. Create a third vNIC template for storage network traffic through Fabric Interconnect A.

19. Enter ORA-vNIC-C as the vNIC template name for Storage Network Traffic.

20. For the Fabric ID options, select Fabric A and leave Enable Failover unselected.

21. Select Template Type as Updating Template.

22. Under VLANs, select the Storage_VLAN11 checkbox and set Native-VLAN as VLAN 11.

23. Set MTU value to 9000 and MAC Pool as ORA-MAC-C.

24. Set QoS Policy as ROCE.

25. Click OK to create the vNIC template as shown below.


Fabric failover is not supported on RoCE-based vNICs in this release of UCSM. Use OS-level multipathing to reroute and balance the storage network traffic instead.

26. Create a fourth vNIC template for storage network traffic through Fabric Interconnect B.

27. Enter ORA-vNIC-D as the vNIC template name for Storage Network Traffic.

28. For the Fabric ID options, select Fabric B and leave Enable Failover unselected.

29. Select Template Type as Updating Template.

30. Under VLANs, select the Storage_VLAN12 checkbox and set Native-VLAN as VLAN 12.

31. Set MTU value to 9000 and MAC Pool as ORA-MAC-D.

32. Set QoS Policy as ROCE.

33. Click OK to create the vNIC template as shown below.


Fabric failover is not supported on RoCE-based vNICs in this release of UCSM. Use OS-level multipathing to reroute and balance the storage network traffic instead.

All the vNIC templates are configured as shown below:
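The four templates follow a few rules stated in this section: failover is enabled on the public and private templates, fabric failover is left off on the RoCE storage templates, and the two storage templates sit on different fabrics so that OS-level multipathing can balance traffic across them. The Python sketch below writes the templates down as data and checks those rules. The class and function names are hypothetical, and it assumes that the VLAN selected as native (for example VLAN 11) is the same VLAN as the Storage_VLAN11 checkbox.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VnicTemplate:
    """One vNIC template as entered in UCS Manager (hypothetical model)."""
    name: str
    fabric: str               # "A" or "B"
    failover: bool            # Enable Failover checkbox
    vlans: tuple              # VLAN checkboxes selected
    native_vlan: str
    mtu: int
    mac_pool: str
    qos_policy: Optional[str] = None


TEMPLATES = [
    VnicTemplate("ORA-vNIC-A", "A", True, ("default", "Public_Traffic"),
                 "Public_Traffic", 1500, "ORA-MAC-A"),
    VnicTemplate("ORA-vNIC-B", "B", True, ("default", "Private_Traffic"),
                 "Private_Traffic", 9000, "ORA-MAC-B"),
    # Assumption: native VLAN 11/12 is the VLAN named Storage_VLAN11/12.
    VnicTemplate("ORA-vNIC-C", "A", False, ("Storage_VLAN11",),
                 "Storage_VLAN11", 9000, "ORA-MAC-C", "ROCE"),
    VnicTemplate("ORA-vNIC-D", "B", False, ("Storage_VLAN12",),
                 "Storage_VLAN12", 9000, "ORA-MAC-D", "ROCE"),
]


def check_templates(templates):
    """Return a list of rule violations; an empty list means all checks passed."""
    problems = []
    for t in templates:
        if t.native_vlan not in t.vlans:
            problems.append(f"{t.name}: native VLAN {t.native_vlan} is not selected")
        if t.qos_policy == "ROCE" and t.failover:
            problems.append(f"{t.name}: fabric failover is not supported on RoCE vNICs")
        if t.qos_policy == "ROCE" and t.mtu != 9000:
            problems.append(f"{t.name}: storage MTU differs from this design's 9000")
    # OS-level multipathing needs one RoCE path through each fabric interconnect.
    roce_fabrics = sorted(t.fabric for t in templates if t.qos_policy == "ROCE")
    if roce_fabrics != ["A", "B"]:
        problems.append(f"expected one RoCE vNIC per fabric, got {roce_fabrics}")
    return problems


if __name__ == "__main__":
    print(check_templates(TEMPLATES) or "all vNIC templates pass")
```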

Create Server Boot Policy for Local Boot

For this Cisco Validated Design, all Oracle nodes were set to boot from local disk through the service profile template. A local disk configuration for Cisco UCS is necessary if the servers in the environment have a local disk.

To create Boot Policies for the Cisco UCS environments, follow these steps:

1. In Cisco UCS Manager, go to Servers > Policies > root > Boot Policies.

2. Right-click and select Create Boot Policy. Enter Local_Disk for the name of the boot policy.

3. Expand the Local Devices drop-down menu and choose Add CD/DVD and then Local Disk to set the boot order, as shown below:

4. Click OK to create the boot policy.

Create and Configure Service Profile Template

Service profile templates enable policy-based server management that helps ensure consistent server resource provisioning suitable to meet predefined workload needs.

The Cisco UCS service profile provides the following benefits:

· Scalability - Rapid deployment of new servers to the environment in very few steps.

· Manageability - Enables seamless hardware maintenance and upgrades without any restrictions.

· Flexibility - Easy to repurpose physical servers for different applications and services as needed.

· Availability - Hardware failures are less disruptive. In the rare case of a server failure, the logical service profile can easily be associated with another healthy physical server to reduce the impact.

You will create one service profile template named ORA19C, as explained in the following section.

Create Service Profile Template

To create a service profile template, follow these steps:

1. In the Cisco UCS Manager, go to Servers > Service Profile Templates > root and right-click to Create Service Profile Template as shown below:

2. Enter the Service Profile Template name, select the UUID Pool that was created earlier, and click Next.

3. Set the Local Disk Configuration Policy to default (Any Configuration mode).

4. In the networking window, select Expert and click Add to create vNICs. Add one or more vNICs that the server should use to connect to the LAN.

Create four vNICs in the Create vNIC menu and name them eth0, eth1, eth2, and eth3.

5. For vNIC eth0, select vNIC Template as ORA-vNIC-A and Adapter Policy as ORA_Linux_Tuning, which was created earlier, as shown below.

6. For vNIC eth1, select vNIC Template as ORA-vNIC-B and Adapter Policy as ORA_Linux_Tuning.

7. For vNIC eth2, select vNIC Template as ORA-vNIC-C and Adapter Policy as ROCE_Adapter as shown below.

8. For vNIC eth3, select vNIC Template as ORA-vNIC-D and Adapter Policy as ROCE_Adapter as shown below.

Four vNICs are configured for each Linux host as shown below:


With this release of UCSM, only two RDMA vNICs are supported.

9. When the vNICs are created, click Next.

10. In the SAN Connectivity menu, select No vHBAs. Click Next.

11. Skip zoning; no SAN zoning was used for this Oracle RAC configuration.

12. In the vNIC/vHBA Placement Menu, select option Specify Manually. For this solution, we configured the vNIC placement manually as shown below:


To configure vNIC placement, select Specify Manually. Select vCon1 under Name and eth0 under vNICs, and then click Assign to place eth0 under vCon1.

13. Keep default value in the vMedia Policy menu then click Next.

14. For the Server Boot Policy, select Local Disk as Boot Policy which you created earlier.

15. The remaining maintenance, pool assignment, firmware management and server assignment policies were left as default in the configuration. However, they may vary from site-to-site depending on workloads, best practices, and policies.

16. Click Next and Select BIOS Policy as ORA19C_BIOS in the BIOS Configuration.

17. Click Finish to create the service profile template ORA19C. This service profile template is used to create all eight service profiles for Oracle RAC nodes 1 to 8 (orarac1 to orarac8).

The service profile template ORA19C is now created with four vNICs, as shown below:
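Each service profile created from ORA19C inherits the same vNIC layout: two vNICs that use ORA_Linux_Tuning and two RDMA vNICs that use ROCE_Adapter, which is exactly the two-RDMA-vNIC limit noted for this UCSM release. The Python sketch below records that mapping and checks both the limit and the pairing of the storage templates with the RoCE adapter policy. The names are hypothetical, and the sketch only checks data you type in; it does not query UCS Manager.

```python
# vNIC name -> (vNIC template, adapter policy), as selected in steps 5 to 8.
VNICS = {
    "eth0": ("ORA-vNIC-A", "ORA_Linux_Tuning"),
    "eth1": ("ORA-vNIC-B", "ORA_Linux_Tuning"),
    "eth2": ("ORA-vNIC-C", "ROCE_Adapter"),
    "eth3": ("ORA-vNIC-D", "ROCE_Adapter"),
}

ROCE_POLICIES = {"ROCE_Adapter"}
STORAGE_TEMPLATES = {"ORA-vNIC-C", "ORA-vNIC-D"}  # templates with QoS Policy ROCE
MAX_RDMA_VNICS = 2  # limit stated for this release of UCSM


def rdma_vnics(vnics):
    """Return the sorted names of vNICs that use a RoCE adapter policy."""
    return sorted(name for name, (_, policy) in vnics.items() if policy in ROCE_POLICIES)


def check_profile_vnics(vnics):
    """Return a list of problems; an empty list means the layout passes."""
    problems = []
    rdma = rdma_vnics(vnics)
    if len(rdma) > MAX_RDMA_VNICS:
        problems.append(f"{len(rdma)} RDMA vNICs {rdma}, limit is {MAX_RDMA_VNICS}")
    for name, (template, policy) in vnics.items():
        # A storage template paired with a non-RoCE policy would not carry RDMA traffic.
        if template in STORAGE_TEMPLATES and policy not in ROCE_POLICIES:
            problems.append(f"{name}: {template} should use a RoCE adapter policy")
    return problems


if __name__ == "__main__":
    print("RDMA vNICs:", rdma_vnics(VNICS))
    print(check_profile_vnics(VNICS) or "vNIC layout OK")
```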

Create Service Profiles from Template and Associate to Servers

Create Service Profiles from Template

To create eight service profiles as ORARAC1 to ORARAC8 from template ORA19C, follow these steps:

1. Go to Servers > Service Profiles > root, right-click, and select Create Service Profiles from Template.

2. Select the previously created service profile template ORA19C and enter ORARAC as the service profile name prefix.

3. To create eight service profiles, enter 8 for Number of Instances as shown below. This process creates the service profiles ORARAC1, ORARAC2, ORARAC3, ORARAC4, ORARAC5, ORARAC6, ORARAC7, and ORARAC8.

4. Once the service profiles are created, associate them to the servers as described in the following section.
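The instance count and prefix together determine the profile names, and this design pairs each profile with a lowercase RAC host name (orarac1 to orarac8). The Python sketch below reproduces that naming pattern so that a planning sheet or inventory list can be generated consistently. The function names are hypothetical, the sequential numbering starting at 1 mirrors the names listed in step 3, and the optional start parameter is an assumption for sites that number from another value.

```python
def service_profile_names(prefix: str, instances: int, start: int = 1) -> list:
    """Return prefix + sequential number for each instance, e.g. ORARAC1..ORARAC8."""
    if instances < 1:
        raise ValueError("instances must be at least 1")
    return [f"{prefix}{i}" for i in range(start, start + instances)]


def host_map(profile_names) -> dict:
    """Map each service profile to its RAC host name (lowercase, as in orarac1)."""
    return {name: name.lower() for name in profile_names}


if __name__ == "__main__":
    names = service_profile_names("ORARAC", 8)
    for profile, host in host_map(names).items():
        print(f"{profile} -> {host}")
```

Running it prints ORARAC1 -> orarac1 through ORARAC8 -> orarac8, one pair per line.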

Associate Service Profiles to the Servers

To associate service profiles to the servers, follow these steps:

1. Under the Servers tab, right-click the name of the service profile you want to associate with the server and select Change Service Profile Association.

2. In the Change Service Profile Association page, from the Server Assignment drop-down, select the existing server that you would like to assign, and click OK.