Cisco HyperFlex 2.6 for Virtual Server Infrastructure

Available Languages

Cisco HyperFlex 2.6 for Virtual Server Infrastructure

Deployment Guide for Cisco HyperFlex 2.6 for Virtual Server Infrastructure

Cisco HyperFlex 2.6 for Virtual Server Infrastructure PDF

Last Updated: December 18, 2018

About the Cisco Validated Design (CVD) Program

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information visit:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2018 Cisco Systems, Inc. All rights reserved.

Table of Contents

Cisco Unified Computing System

Cisco UCS 6248UP Fabric Interconnect

Cisco UCS 6296UP Fabric Interconnect

Cisco UCS 6332 Fabric Interconnect

Cisco UCS 6332-16UP Fabric Interconnect

Cisco HyperFlex HX-Series Nodes

Cisco HyperFlex HXAF220c-M5SX All-Flash Node

Cisco HyperFlex HXAF240c-M5SX All-Flash Node

Cisco HyperFlex HX220c-M5SX Hybrid Node

Cisco HyperFlex HX240c-M5SX Hybrid Node

Cisco HyperFlex HXAF220c-M4S All-Flash Node

Cisco HyperFlex HXAF240c-M4SX All-Flash Node

Cisco HyperFlex HX220c-M4S Hybrid Node

Cisco HyperFlex HX240c-M4SX Hybrid Node

Cisco VIC 1227 and 1387 MLOM Interface Cards

Cisco HyperFlex Compute-Only Nodes

Cisco HyperFlex Data Platform Software

Cisco HyperFlex Connect HTML5 Management Web Page

Cisco Intersight Cloud Based Management

Cisco HyperFlex HX Data Platform Administration Plug-in

Cisco HyperFlex HX Data Platform Controller

Data Operations and Distribution

Cisco UCS B-Series Blade Servers

Cisco UCS C-Series Rack-Mount Servers

Cisco UCS Service Profile Templates

Storage Platform Controller VMs

Cisco UCS Fabric Interconnect A

Cisco UCS Fabric Interconnect B

HyperFlex Installer Deployment

Post Installation Script to Complete Your HX Configuration

Auto-Support and Notifications

Adding vHBAs or iSCSI vNICs During HX Cluster Creation

Adding vHBAs or iSCSI vNICs to an Existing HX Cluster

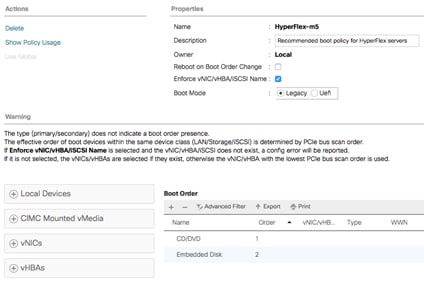

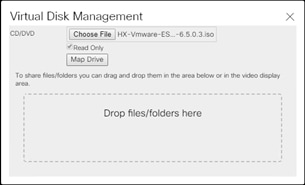

Cisco UCS vMedia and Boot Policies

Undo vMedia and Boot Policy Changes

Expansion with Compute-Only Nodes

Expansion with Converged Nodes

Expansion with M5 Generation Servers for Mixed Clusters

Cisco Intersight Cloud-Based Management

Connecting Cisco HyperFlex Clusters

Virtual Machine Recovery Operations

Virtual Machine Recovery Testing

Virtual Machine Disaster Recovery

Disaster Recover Post Operations

A: Cluster Capacity Calculations

C: HyperFlex Workload Profiler

D: Example Cisco Nexus 9372 Switch Configurations

E: Example Connecting to External Storage Systems

Connecting to Fibre Channel Storage

F: Adding HX to an Existing Cisco UCS Domain

With the proliferation of virtualized environments across most IT landscapes, other technology stacks which have traditionally not offered the same levels of simplicity, flexibility, and rapid deployment as virtualized compute platforms have come under increasing scrutiny. In particular, networking devices and storage systems have lacked the agility of hypervisors and virtual servers. With the introduction of Cisco HyperFlex, Cisco has brought the dramatic enhancements of hyperconvergence to the modern datacenter. Cisco HyperFlex systems are based on the Cisco UCS platform, combining Cisco HX-Series x86 servers and integrated networking technologies through the Cisco UCS Fabric Interconnects, into a single management domain, along with industry leading virtualization hypervisor software from VMware, and next-generation software defined storage technology. The combination creates a complete virtualization platform, which provides the network connectivity for the guest virtual machine (VM) connections, and the distributed storage to house the VMs, spread across all of the Cisco UCS x86 servers, versus using specialized storage or networking components. The unique storage features of the HyperFlex log based filesystem enable rapid cloning of VMs, snapshots without the traditional performance penalties, and data deduplication and compression. All configuration, deployment, management, and monitoring of the solution can be done with existing tools for Cisco UCS and VMware, such as Cisco UCS Manager and VMware vCenter. This powerful linking of advanced technology stacks into a single, simple, rapidly deployed solution makes Cisco HyperFlex a true second generation hyperconverged platform.

Cisco HyperFlex HXDP 2.5 introduced new enterprise class data protection features, including snapshot-based virtual machine replication, and data-at-rest encryption. Virtual machine replication allows for easy configuration of a secondary HyperFlex site for disaster recovery. Data-at-rest encryption keeps all data on the disks encrypted to protect against accidental data loss or theft. Customers can choose to deploy SSD-only All-Flash HyperFlex clusters for improved performance, increased density, and reduced latency, or use HyperFlex hybrid clusters which combine high-performance SSDs and low-cost, high-capacity HDDs to optimize the cost of storing data. Further enhancements include an all-new HTML5 based native HyperFlex Connect management tool with role-based access control, support for 40 GbE connectivity, larger scale 16-node clusters, and support for NVMe based SSDs in place of SAS-based SSDs for the caching disks. Cisco HyperFlex HXDP 2.6 now introduces support for the latest M5 generation servers, which can be used as converged storage nodes, or as compute-only nodes. Cisco M5 generation servers can be deployed as a standalone HyperFlex cluster, or customers can choose to deploy mixed clusters using both M4 and M5 generation servers.

Introduction

The Cisco HyperFlex System provides an all-purpose virtualized server platform, with hypervisor hosts, networking connectivity, and virtual server storage across a set of Cisco UCS HX-Series x86 rack-mount servers. Legacy datacenter deployments have relied on a disparate set of technologies, each performing a distinct and specialized function, such as network switches connecting endpoints and transferring Ethernet network traffic, and Fibre Channel (FC) storage arrays providing block based storage via a dedicated storage array network (SAN). Each of these systems had unique requirements for hardware, connectivity, management tools, operational knowledge, monitoring, and ongoing support. A legacy virtual server environment was often divided up into areas commonly referred to as silos, within which only a single technology operated, along with their correlated software tools and support staff. Silos could often be divided between the x86 computing hardware, the networking connectivity of those x86 servers, SAN connectivity and storage device presentation, the hypervisors and virtual platform management, and finally the guest VM themselves along with their OS and applications. This model proves to be inflexible, difficult to navigate, and is susceptible to numerous operational inefficiencies.

A more modern datacenter model was developed called a converged infrastructure. Converged infrastructures attempt to collapse the traditional silos by combining these technologies into a more singular environment, which has been designed to operate together in pre-defined, tested, and validated designs. A key component of the converged infrastructure was the revolutionary combination of x86 rack and blade servers, along with converged Ethernet and Fibre Channel networking offered by the Cisco UCS platform. Converged infrastructures leverage Cisco UCS, plus new deployment tools, management software suites, automation processes, and orchestration tools to overcome the difficulties deploying traditional environments, and do so in a much more rapid fashion. These new tools place the ongoing management and operation of the system into the hands of fewer staff, with more rapid deployment of workloads based on business needs, while still remaining at the forefront of flexibility to adapt to workload needs, and offering the highest possible performance. Cisco has had incredible success in these areas with our various partners, developing leading solutions such as Cisco FlexPod, FlashStack, VersaStack, and VxBlock architectures. Despite these advances, because these converged infrastructures contained some legacy technology stacks, particularly in the storage subsystems, there often remained a division of responsibility amongst multiple teams of administrators. Alongside, there is also a recognition that these converged infrastructures can still be a somewhat complex combination of components, where a simpler system would suffice to serve the workloads being requested.

Significant changes in the storage marketplace have given rise to the software defined storage (SDS) system. Legacy FC storage arrays often contained a specialized subset of hardware, such as Fibre Channel Arbitrated Loop (FC-AL) based controllers and disk shelves along with optimized Application Specific Integrated Circuits (ASIC), read/write data caching modules and cards, plus highly customized software to operate the arrays. With the rise of Serial Attached SCSI (SAS) bus technology and its inherent benefits, storage array vendors began to transition their internal hardware architectures to SAS, and with dramatic increases in processing power from recent x86 processor architectures, they also used fewer or no custom ASICs at all. As disk physical sizes shrank, x86 servers began to have the same density of storage per rack unit (RU) as the arrays themselves, and with the proliferation of NAND based flash memory solid state disks (SSD), they also now had access to input/output (IO) devices whose speed rivaled that of dedicated caching devices. If servers themselves now contained storage devices and technology to rival many dedicated arrays on the market, then the major differentiator between them was the software providing allocation, presentation and management of the storage, plus the advanced features many vendors offered. This has led to the rise of software defined storage, where the x86 servers with the storage devices ran software to effectively turn one or more of them, working cooperatively, into a storage array much the same as the traditional arrays were. In a somewhat unexpected turn of events, some of the major storage array vendors themselves were pioneers in this field, recognizing the technological shifts in the market, and attempting to profit from the software features they offered versus their specialized hardware, as had been done in the past.

Some early uses of SDS systems simply replaced the traditional storage array in the converged architectures as described earlier. That configuration still had a separate storage system from the virtual server hypervisor platform, and depending on the solution provider, still remained separate from the network devices. If the servers that hosted the VMs, and also provided the SDS environment were in fact the same model of server, could they simply do both things at once and collapse the two functions into one? This ultimate combination of resources becomes what the industry has given the moniker of a hyperconverged infrastructure. Hyperconverged infrastructures coalesce the computing, memory, hypervisor, and storage devices of servers into a single platform for virtual servers. There is no longer a separate storage system, as the servers running the hypervisors also provide the software defined storage resources to store the virtual servers, effectively storing the virtual machines on themselves. Now nearly all the silos are gone, and a hyperconverged infrastructure becomes something almost completely self-contained, simpler to use, faster to deploy, easier to consume, yet still flexible and with very high performance. Many hyperconverged systems still rely on standard networking components, such as on-board network cards in the x86 servers, and top-of-rack switches. The Cisco HyperFlex system combines the convergence of computing and networking provided by Cisco UCS, along with next-generation hyperconverged storage software, to uniquely provide the compute resources, network connectivity, storage, and hypervisor platform to run an entire virtual environment, all contained in a single uniform system.

Some key advantages of hyperconverged infrastructures are the simplification of deployment, day to day management operations, as well as increased agility, thereby reducing the amount operational costs. Since hyperconverged storage can be easily managed by an IT generalist, this can also reduce technical debt going forward that is often accrued by implementing complex systems that need dedicated management teams and skillsets.

Audience

The intended audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineering, and customers deploying the Cisco HyperFlex System. External references are provided wherever applicable, but readers are expected to be familiar with VMware specific technologies, infrastructure concepts, networking connectivity, and security policies of the customer installation.

Purpose of this Document

This document describes the steps required to deploy, configure, and manage a Cisco HyperFlex system. The document is based on all known best practices using the software, hardware and firmware revisions specified in the document. As such, recommendations and best practices can be amended with later versions. This document showcases the installation, configuration and expansion of Cisco HyperFlex standard and also extended clusters, including both converged nodes and compute-only nodes, in a typical customer datacenter environment. While readers of this document are expected to have sufficient knowledge to install and configure the products used, configuration details that are important to the deployment of this solution are provided in this CVD.

Enhancements for Version 2.5

The Cisco HyperFlex system has several new capabilities and enhancements in version 2.5:

· Native replication of virtual machine snapshots between two Cisco HyperFlex clusters, enabling recovery of a single VM, multiple VMs, or an entire site as a disaster recovery.

· Data-at-rest encryption using hardware based self-encrypting disks (SED) to protect data written on the disks, preventing accidental data loss or data theft.

· Support for larger scale clusters; up to 16 converged nodes and up to 16 compute-only nodes per all-flash cluster, and support for managing up to 100 Cisco HyperFlex clusters per VMware vCenter instance.

· Support for using NVMe based SSDs as the caching disk in the Cisco HyperFlex all-flash converged nodes.

· The new HyperFlex Connect native HTML5 management GUI, with role-based access control.

· A fully supported release of the HyperFlex REST API, allowing programmatic management and monitoring of the cluster, and its features.

· HXAF240c-M4SX nodes now can scale to a full allotment of 23 capacity SSDs per node.

· Expanded Cisco UCS blade and rack-mount server model options for compute-only nodes.

· Smart Licensing

· FIPS/CC Certification

· VMware vSphere 6.5 support

Enhancements for Version 2.6

The Cisco HyperFlex system brings new capabilities and enhancements in version 2.6:

· Support for the new Cisco M5 generation HyperFlex server models; HX220c-M5SX, HX240c-M5SX, HXAF220c-M5SX, and HXAF240c-M5SX.

· Support for mixed clusters using a combination of Cisco M4 generation and M5 generation servers running in the same HyperFlex cluster.

· Support for standard Cisco M5 generation rack mount and blade servers as compute-only nodes.

· Support for the initial release of Cisco Intersight cloud-based remote monitoring and management.

· Additional VM management features in the HyperFlex Connect HTML5 management GUI.

· Native replication between an all-flash HyperFlex cluster and a hybrid HyperFlex cluster.

Documentation Roadmap

For the comprehensive documentation suite, refer to the following for the Cisco UCS HX-Series Documentation Roadmap: https://www.cisco.com/c/en/us/td/docs/hyperconverged_systems/HyperFlex_HX_DataPlatformSoftware/HX_Documentation_Roadmap/HX_Series_Doc_Roadmap.html

![]() Note: A login is required for the Documentation Roadmap.

Note: A login is required for the Documentation Roadmap.

Hyperconverged Infrastructure web link: http://hyperflex.io

Solution Summary

The Cisco HyperFlex system provides a fully contained virtual server platform, with compute and memory resources, integrated networking connectivity, a distributed high-performance log based filesystem for VM storage, and the hypervisor software for running the virtualized servers, all within a single Cisco UCS management domain.

Figure 1 HyperFlex System Overview

The following are the components of a Cisco HyperFlex system:

· Cisco UCS Fabric Interconnects, choose from models:

- Cisco UCS 6248UP Fabric Interconnect

- Cisco UCS 6296UP Fabric Interconnect

- Cisco UCS 6332 Fabric Interconnect

- Cisco UCS 6332-16UP Fabric Interconnect

· Cisco HyperFlex HX-Series Rack-Mount Servers, choose from models:

- Cisco HyperFlex HX220c-M5SX Rack-Mount Servers

- Cisco HyperFlex HX240c-M5SX Rack-Mount Servers

- Cisco HyperFlex HXAF220c-M5SX All-Flash Rack-Mount Servers

- Cisco HyperFlex HXAF240c-M5SX All-Flash Rack-Mount Servers

- Cisco HyperFlex HX220c-M4S Rack-Mount Servers

- Cisco HyperFlex HX240c-M4SX Rack-Mount Servers

- Cisco HyperFlex HXAF220c-M4S All-Flash Rack-Mount Servers

- Cisco HyperFlex HXAF240c-M4SX All-Flash Rack-Mount Servers

· Cisco HyperFlex Data Platform Software

· VMware vSphere ESXi Hypervisor

· VMware vCenter Server (end-user supplied)

Optional components for additional compute-only resources are:

· Cisco UCS 5108 Chassis

· Cisco UCS 2204XP, 2208XP or 2304 model Fabric Extenders

· Cisco UCS B200-M3, B200-M4, B200-M5, B260-M4, B420-M4, B460-M4 or B480-M5 blade servers

· Cisco UCS C220-M3, C220-M4, C220-M5, C240-M3, C240-M4, C240-M5, C460-M4 or C480-M5 rack-mount servers

All-Flash Versus Hybrid

The initial HyperFlex product release featured hybrid converged nodes, which use a combination of solid-state disks (SSDs) for the short-term storage caching layer, and hard disk drives (HDDs) for the long-term storage capacity layer. The hybrid HyperFlex system is an excellent choice for entry-level or midrange storage solutions, and hybrid solutions have been successfully deployed in many non-performance sensitive virtual environments. Meanwhile, there is significant growth in deployment of highly performance sensitive and mission critical applications. The primary challenge to the hybrid HyperFlex system from these highly performance sensitive applications, is their increased sensitivity to high storage latency. Due to the characteristics of the spinning hard disks, it is unavoidable that their higher latency becomes the bottleneck in the hybrid system. Ideally, if all of the storage operations were to occur in the caching SSD layer, the hybrid system’s performance will be excellent. But in several scenarios, the amount of data being written and read exceeds the caching layer capacity, placing larger loads on the HDD capacity layer, and the subsequent increases in latency will naturally result in reduced performance.

Cisco All-Flash HyperFlex systems are an excellent option for customers with a requirement to support high performance, latency sensitive workloads. With a purpose built, flash-optimized and high-performance log based filesystem, the Cisco All-Flash HyperFlex system provides:

· Predictable high performance across all the virtual machines on HyperFlex All-Flash and compute-only nodes in the cluster.

· Highly consistent and low latency, which benefits data-intensive applications and databases such as Microsoft SQL and Oracle.

· Support for NVMe caching SSDs, offering an even higher level of performance.

· Future ready architecture that is well suited for flash-memory configuration:

- Cluster-wide SSD pooling maximizes performance and balances SSD usage so as to spread the wear.

- A fully distributed log-structured filesystem optimizes the data path to help reduce write amplification.

- Large sequential writing reduces flash wear and increases component longevity.

- Inline space optimization, for example; deduplication and compression, minimizes data operations and reduces wear.

· Lower operating cost with the higher density drives for increased capacity of the system.

· Cloud scale solution with easy scale-out and distributed infrastructure and the flexibility of scaling out independent resources separately.

Cisco HyperFlex support for hybrid and all-flash models now allows customers to choose the right platform configuration based on their capacity, applications, performance, and budget requirements. All-flash configurations offer repeatable and sustainable high performance, especially for scenarios with a larger working set of data, in other words, a large amount of data in motion. Hybrid configurations are a good option for customers who want the simplicity of the Cisco HyperFlex solution, but their needs focus on capacity-sensitive solutions, lower budgets, and fewer performance-sensitive applications.

Cisco Unified Computing System

The Cisco Unified Computing System (Cisco UCS) is a next-generation data center platform that unites compute, network, and storage access. The platform, optimized for virtual environments, is designed using open industry-standard technologies and aims to reduce total cost of ownership (TCO) and increase business agility. The system integrates a low-latency, lossless 10 Gigabit Ethernet or 40 Gigabit Ethernet unified network fabric with enterprise-class, x86-architecture servers. It is an integrated, scalable, multi chassis platform in which all resources participate in a unified management domain.

The main components of Cisco Unified Computing System are:

· Computing: The system is based on an entirely new class of computing system that incorporates rack-mount and blade servers based on Intel Xeon Processors.

· Network: The system is integrated onto a low-latency, lossless, 10-Gbps or 40-Gbps unified network fabric. This network foundation consolidates LANs, SANs, and high-performance computing networks which are often separate networks today. The unified fabric lowers costs by reducing the number of network adapters, switches, and cables, and by decreasing the power and cooling requirements.

· Virtualization: The system unleashes the full potential of virtualization by enhancing the scalability, performance, and operational control of virtual environments. Cisco security, policy enforcement, and diagnostic features are now extended into virtualized environments to better support changing business and IT requirements.

· Storage access: The system provides consolidated access to both SAN storage and Network Attached Storage (NAS) over the unified fabric. By unifying storage access, the Cisco Unified Computing System can access storage over Ethernet, Fibre Channel, Fibre Channel over Ethernet (FCoE), and iSCSI. This provides customers with their choice of storage protocol and physical architecture, and enhanced investment protection. In addition, the server administrators can pre-assign storage-access policies for system connectivity to storage resources, simplifying storage connectivity, and management for increased productivity.

· Management: The system uniquely integrates all system components which enable the entire solution to be managed as a single entity by the Cisco UCS Manager (UCSM). The Cisco UCS Manager has an intuitive graphical user interface (GUI), a command-line interface (CLI), and a robust application programming interface (API) to manage all system configuration and operations.

The Cisco Unified Computing System is designed to deliver:

· A reduced Total Cost of Ownership and increased business agility.

· Increased IT staff productivity through just-in-time provisioning and mobility support.

· A cohesive, integrated system which unifies the technology in the data center. The system is managed, serviced and tested as a whole.

· Scalability through a design for hundreds of discrete servers and thousands of virtual machines and the capability to scale I/O bandwidth to match demand.

· Industry standards supported by a partner ecosystem of industry leaders.

Cisco UCS Fabric Interconnect

The Cisco UCS Fabric Interconnect (FI) is a core part of the Cisco Unified Computing System, providing both network connectivity and management capabilities for the system. Depending on the model chosen, the Cisco UCS Fabric Interconnect offers line-rate, low-latency, lossless 10 Gigabit or 40 Gigabit Ethernet, Fibre Channel over Ethernet (FCoE) and Fibre Channel connectivity. Cisco UCS Fabric Interconnects provide the management and communication backbone for the Cisco UCS C-Series, S-Series and HX-Series Rack-Mount Servers, Cisco UCS B-Series Blade Servers and Cisco UCS 5100 Series Blade Server Chassis. All servers and chassis, and therefore all blades, attached to the Cisco UCS Fabric Interconnects become part of a single, highly available management domain. In addition, by supporting unified fabrics, the Cisco UCS Fabric Interconnects provide both the LAN and SAN connectivity for all servers within its domain.

From a networking perspective, the Cisco UCS 6200 Series uses a cut-through architecture, supporting deterministic, low latency, line rate 10 Gigabit Ethernet on all ports, up to 1.92 Tbps switching capacity and 160 Gbps bandwidth per chassis, independent of packet size and enabled services. The product family supports Cisco low-latency, lossless 10 Gigabit Ethernet unified network fabric capabilities, which increase the reliability, efficiency, and scalability of Ethernet networks. The Fabric Interconnect supports multiple traffic classes over the Ethernet fabric from the servers to the uplinks. Significant TCO savings come from an FCoE-optimized server design in which network interface cards (NICs), host bus adapters (HBAs), cables, and switches can be consolidated.

The Cisco UCS 6300 Series offers the same features while supporting even higher performance, low latency, lossless, line rate 40 Gigabit Ethernet, with up to 2.56 Tbps of switching capacity. Backward compatibility and scalability are assured with the ability to configure 40 Gbps quad SFP (QSFP) ports as breakout ports using 4x10GbE breakout cables. Existing Cisco UCS servers with 10GbE interfaces can be connected in this manner, although Cisco HyperFlex nodes must use a 40GbE VIC adapter in order to connect to a Cisco UCS 6300 Series Fabric Interconnect.

Cisco UCS 6248UP Fabric Interconnect

The Cisco UCS 6248UP Fabric Interconnect is a one-rack-unit (1RU) 10 Gigabit Ethernet, FCoE and Fiber Channel switch offering up to 960 Gbps throughput and up to 48 ports. The switch has 32 1/10-Gbps fixed Ethernet, FCoE, or 1/2/4/8 Gbps FC ports, plus one expansion slot.

Figure 2 Cisco UCS 6248UP Fabric Interconnect

Cisco UCS 6296UP Fabric Interconnect

The Cisco UCS 6296UP Fabric Interconnect is a two-rack-unit (2RU) 10 Gigabit Ethernet, FCoE, and native Fibre Channel switch offering up to 1920 Gbps of throughput and up to 96 ports. The switch has 48 1/10-Gbps fixed Ethernet, FCoE, or 1/2/4/8 Gbps FC ports, plus three expansion slots.

Figure 3 Cisco UCS 6296UP Fabric Interconnect

Cisco UCS 6332 Fabric Interconnect

The Cisco UCS 6332 Fabric Interconnect is a one-rack-unit (1RU) 40 Gigabit Ethernet and FCoE switch offering up to 2560 Gbps of throughput. The switch has 32 40-Gbps fixed Ethernet and FCoE ports. Up to 24 of the ports can be reconfigured as 4x10Gbps breakout ports, providing up to 96 10-Gbps ports.

Figure 4 Cisco UCS 6332 Fabric Interconnect

Cisco UCS 6332-16UP Fabric Interconnect

The Cisco UCS 6332-16UP Fabric Interconnect is a one-rack-unit (1RU) 10/40 Gigabit Ethernet, FCoE, and native Fibre Channel switch offering up to 2430 Gbps of throughput. The switch has 24 40-Gbps fixed Ethernet and FCoE ports, plus 16 1/10-Gbps fixed Ethernet, FCoE, or 4/8/16 Gbps FC ports. Up to 18 of the 40-Gbps ports can be reconfigured as 4x10Gbps breakout ports, providing up to 88 total 10-Gbps ports.

Figure 5 Cisco UCS 6332-16UP Fabric Interconnect

![]() Note: When used for a Cisco HyperFlex deployment, due to mandatory QoS settings in the configuration, the 6332 and 6332-16UP will be limited to a maximum of four 4x10Gbps breakout ports.

Note: When used for a Cisco HyperFlex deployment, due to mandatory QoS settings in the configuration, the 6332 and 6332-16UP will be limited to a maximum of four 4x10Gbps breakout ports.

Cisco HyperFlex HX-Series Nodes

A HyperFlex cluster requires a minimum of three HX-Series “converged” nodes (with disk storage). Data is replicated across at least two of these nodes, and a third node is required for continuous operation in the event of a single-node failure. Each node that has disk storage is equipped with at least one high-performance SSD drive for data caching and rapid acknowledgment of write requests. Each node also is equipped with additional disks, up to the platform’s physical limit, for long term storage and capacity.

Cisco HyperFlex HXAF220c-M5SX All-Flash Node

This small footprint Cisco HyperFlex all-flash model contains a 240 GB M.2 form factor solid-state disk (SSD) that acts as the boot drive, a 240 GB housekeeping SSD drive, either a single 1.6 TB NVMe SSD or 400GB SAS SSD write-log drive, and six to eight 960 GB or 3.8 TB SATA SSD drives for storage capacity. For configurations requiring self-encrypting drives, the caching SSD is replaced with an 800 GB SAS SED SSD, and the capacity disks are also replaced with either 800 GB, 960 GB or 3.8 TB SED SSDs. A minimum of three nodes and a maximum of sixteen nodes can be configured in one HX all-flash cluster.

Figure 6 HXAF220c-M5SX All-Flash Node

Cisco HyperFlex HXAF240c-M5SX All-Flash Node

This capacity optimized Cisco HyperFlex all-flash model contains a 240 GB M.2 form factor solid-state disk (SSD) that acts as the boot drive, a 240 GB housekeeping SSD drive, either a single 1.6 TB NVMe SSD or 400GB SAS SSD write-log drive installed in a rear hot swappable slot, and six to twenty-three 960 GB or 3.8 TB SATA SSD drives for storage capacity. For configurations requiring self-encrypting drives, the caching SSD is replaced with an 800 GB SAS SED SSD, and the capacity disks are also replaced with either 800 GB, 960 GB or 3.8 TB SED SSDs. A minimum of three nodes and a maximum of sixteen nodes can be configured in one HX all-flash cluster.

Figure 7 HXAF240c-M5SX Node

![]() Note: Either a 400 GB SAS SSD or 1.6 TB NVMe SSD caching drive may be chosen. While the NVMe option can provide a higher level of performance, the partitioning of the two disks is the same, therefore the amount of cache available on the system is the same regardless of the model chosen.

Note: Either a 400 GB SAS SSD or 1.6 TB NVMe SSD caching drive may be chosen. While the NVMe option can provide a higher level of performance, the partitioning of the two disks is the same, therefore the amount of cache available on the system is the same regardless of the model chosen.

Cisco HyperFlex HX220c-M5SX Hybrid Node

This small footprint Cisco HyperFlex hybrid model contains a minimum of six, and up to eight 1.8 terabyte (TB) or 1.2 TB SAS hard disk drives (HDD) that contribute to cluster storage capacity, a 240 GB SSD housekeeping drive, a 480 GB or 800 GB SSD caching drive, and a 240 GB M.2 form factor SSD that acts as the boot drive. For configurations requiring self-encrypting drives, the caching SSD is replaced with an 800 GB SAS SED SSD, and the capacity disks are replaced with 1.2TB SAS SED HDDs. A minimum of three nodes and a maximum of eight nodes can be configured in one HX hybrid cluster.

Figure 8 HX220c-M5SX Node

![]() Note: Either a 480 GB or 800 GB caching SAS SSD may be chosen. This option is provided to allow flexibility in ordering based on product availability, pricing and lead times. There is no performance, capacity, or scalability benefit in choosing the larger disk.

Note: Either a 480 GB or 800 GB caching SAS SSD may be chosen. This option is provided to allow flexibility in ordering based on product availability, pricing and lead times. There is no performance, capacity, or scalability benefit in choosing the larger disk.

Cisco HyperFlex HX240c-M5SX Hybrid Node

This capacity optimized Cisco HyperFlex hybrid model contains a minimum of six and up to twenty-three 1.8 TB or 1.2 TB SAS hard disk drives (HDD) that contribute to cluster storage, a 240 GB SSD housekeeping drive, a single 1.6 TB SSD caching drive installed in a rear hot swappable slot, and a 240 GB M.2 form factor SSD that acts as the boot drive. For configurations requiring self-encrypting drives, the caching SSD is replaced with a 1.6 TB SAS SED SSD, and the capacity disks are replaced with 1.2TB SAS SED HDDs. A minimum of three nodes and a maximum of eight nodes can be configured in one HX hybrid cluster.

Figure 9 HX240c-M5SX Node

Cisco HyperFlex HXAF220c-M4S All-Flash Node

This small footprint Cisco HyperFlex all-flash model contains two Cisco Flexible Flash (FlexFlash) Secure Digital (SD) cards that act as the boot drives, a single 120 GB or 240 GB solid-state disk (SSD) data-logging drive, a single 400 GB NVMe or a 400GB or 800 GB SAS SSD write-log drive, and six 960 GB or 3.8 terabyte (TB) SATA SSD drives for storage capacity. For configurations requiring self-encrypting drives, the caching SSD is replaced with an 800 GB SAS SED SSD, and the capacity disks are also replaced with either 800 GB, 960 GB or 3.8 TB SED SSDs. A minimum of three nodes and a maximum of sixteen nodes can be configured in one HX all-flash cluster.

Figure 10 HXAF220c-M4S All-Flash Node

Cisco HyperFlex HXAF240c-M4SX All-Flash Node

This capacity optimized Cisco HyperFlex all-flash model contains two FlexFlash SD cards that act as boot drives, a single 120 GB or 240 GB solid-state disk (SSD) data-logging drive, a single 400 GB NVMe or a 400GB or 800 GB SAS SSD write-log drive, and six to twenty-three 960 GB or 3.8 terabyte (TB) SATA SSD drives for storage capacity. For configurations requiring self-encrypting drives, the caching SSD is replaced with an 800 GB SAS SED SSD, and the capacity disks are also replaced with either 800 GB, 960 GB or 3.8 TB SED SSDs. A minimum of three nodes and a maximum of sixteen nodes can be configured in one HX all-flash cluster.

![]() Note: In M4 generation server all-flash configurations, either a 400 GB or 800 GB caching SAS SSD may be chosen. This option is provided to allow flexibility in ordering based on product availability, pricing and lead times. There is no performance, capacity, or scalability benefit in choosing the larger disk.

Note: In M4 generation server all-flash configurations, either a 400 GB or 800 GB caching SAS SSD may be chosen. This option is provided to allow flexibility in ordering based on product availability, pricing and lead times. There is no performance, capacity, or scalability benefit in choosing the larger disk.

Cisco HyperFlex HX220c-M4S Hybrid Node

This small footprint Cisco HyperFlex hybrid model contains six 1.8 terabyte (TB) or 1.2 TB SAS HDD drives that contribute to cluster storage capacity, a 120 GB or 240 GB SSD housekeeping drive, a 480 GB SAS SSD caching drive, and two Cisco Flexible Flash (FlexFlash) Secure Digital (SD) cards that act as boot drives. For configurations requiring self-encrypting drives, the caching SSD is replaced with an 800 GB SAS SED SSD, and the capacity disks are replaced with 1.2TB SAS SED HDDs. A minimum of three nodes and a maximum of eight nodes can be configured in one HX hybrid cluster.

Figure 12 HX220c-M4S Node

Cisco HyperFlex HX240c-M4SX Hybrid Node

This capacity optimized Cisco HyperFlex hybrid model contains a minimum of six and up to twenty-three 1.8 TB or 1.2 TB SAS HDD drives that contribute to cluster storage, a single 120 GB or 240 GB SSD housekeeping drive, a single 1.6 TB SAS SSD caching drive, and two FlexFlash SD cards that act as the boot drives. For configurations requiring self-encrypting drives, the caching SSD is replaced with a 1.6 TB SAS SED SSD, and the capacity disks are replaced with 1.2TB SAS SED HDDs. A minimum of three nodes and a maximum of eight nodes can be configured in one HX hybrid cluster.

Figure 13 HX240c-M4SX Node

![]() Note: In all M4 generation server configurations, either a 120 GB or 240 GB housekeeping disk may be chosen. This option is provided to allow flexibility in ordering based on product availability, pricing and lead times. There is no performance, capacity or scalability benefit in choosing the larger disk.

Note: In all M4 generation server configurations, either a 120 GB or 240 GB housekeeping disk may be chosen. This option is provided to allow flexibility in ordering based on product availability, pricing and lead times. There is no performance, capacity or scalability benefit in choosing the larger disk.

Cisco VIC 1227 and 1387 MLOM Interface Cards

The Cisco UCS Virtual Interface Card (VIC) 1227 is a dual-port Enhanced Small Form-Factor Pluggable (SFP+) 10-Gbps Ethernet and Fibre Channel over Ethernet (FCoE)-capable PCI Express (PCIe) modular LAN-on-motherboard (mLOM) adapter installed in the Cisco UCS HX-Series Rack Servers. The VIC 1227 is used in conjunction with the Cisco UCS 6248UP or 6296UP model Fabric Interconnects.

The Cisco UCS VIC 1387 Card is a dual-port Enhanced Quad Small Form-Factor Pluggable (QSFP+) 40-Gbps Ethernet and Fibre Channel over Ethernet (FCoE)-capable PCI Express (PCIe) modular LAN-on-motherboard (mLOM) adapter installed in the Cisco UCS HX-Series Rack Servers. The VIC 1387 is used in conjunction with the Cisco UCS 6332 or 6332-16UP model Fabric Interconnects.

The mLOM slot can be used to install a Cisco VIC without consuming a PCIe slot, which provides greater I/O expandability. It incorporates next-generation converged network adapter (CNA) technology from Cisco, providing investment protection for future feature releases. The card enables a policy-based, stateless, agile server infrastructure that can present up to 256 PCIe standards-compliant interfaces to the host, each dynamically configured as either a network interface card (NICs) or host bus adapter (HBA). The personality of the interfaces is set programmatically using the service profile associated with the server. The number, type (NIC or HBA), identity (MAC address and World Wide Name [WWN]), failover policy, adapter settings, bandwidth, and quality-of-service (QoS) policies of the PCIe interfaces are all specified using the service profile.

Figure 14 Cisco VIC 1227 mLOM Card

Figure 15 Cisco VIC 1387 mLOM Card

![]() Note: Hardware revision V03 or later of the Cisco VIC 1387 card is required for the Cisco HyperFlex HX-series servers.

Note: Hardware revision V03 or later of the Cisco VIC 1387 card is required for the Cisco HyperFlex HX-series servers.

Cisco HyperFlex Compute-Only Nodes

HyperFlex 2.6 expands the number of options available for using standard model Cisco UCS Rack-Mount and Blade Servers as compute-only nodes. All current model Cisco UCS M4 and M5 generation servers, except the C880 M4, may be used as compute-only nodes connected to a Cisco HyperFlex cluster, along with a limited number of previous M3 generation servers. Any valid CPU and memory configuration is allowed in the compute-only nodes, and the servers can be configured to boot from SAN, local disks, or internal SD cards. The following servers may be used as compute-only nodes:

· Cisco UCS B200 M3 Blade Server

· Cisco UCS B200 M4 Blade Server

· Cisco UCS B200 M5 Blade Server

· Cisco UCS B260 M4 Blade Server

· Cisco UCS B420 M4 Blade Server

· Cisco UCS B460 M4 Blade Server

· Cisco UCS B480 M5 Blade Server

· Cisco UCS C220 M3 Rack-Mount Servers

· Cisco UCS C220 M4 Rack-Mount Servers

· Cisco UCS C220 M5 Rack-Mount Servers

· Cisco UCS C240 M3 Rack-Mount Servers

· Cisco UCS C240 M4 Rack-Mount Servers

· Cisco UCS C240 M5 Rack-Mount Servers

· Cisco UCS C460 M4 Rack-Mount Servers

· Cisco UCS C480 M5 Rack-Mount Servers

Cisco HyperFlex Data Platform Software

The Cisco HyperFlex HX Data Platform is a purpose-built, high-performance, distributed file system with a wide array of enterprise-class data management services. The data platform’s innovations redefine distributed storage technology, exceeding the boundaries of first-generation hyperconverged infrastructures. The data platform has all the features expected in an enterprise shared storage system, eliminating the need to configure and maintain complex Fibre Channel storage networks and devices. The platform simplifies operations and helps ensure data availability. Enterprise-class storage features include the following:

· Data protection creates multiple copies of the data across the cluster so that data availability is not affected if a single or multiple components fail (depending on the replication factor configured).

· Deduplication is always on, helping reduce storage requirements in virtualization clusters in which multiple operating system instances in guest virtual machines result in large amounts of replicated data.

· Compression further reduces storage requirements, reducing costs, and the log-structured file system is designed to store variable-sized blocks, reducing internal fragmentation.

· Replication copies virtual machine level snapshots from one Cisco HyperFlex cluster to another, to facilitate recovery from a cluster or site failure, via a failover to the secondary site of all VMs.

· Encryption stores all data on the caching and capacity disks in an encrypted format, to prevent accidental data loss or data theft. Key management can be done using local Cisco UCS Manager managed keys, or third-party Key Management Systems (KMS) via the Key Management Interoperability Protocol (KMIP).

· Thin provisioning allows large volumes to be created without requiring storage to support them until the need arises, simplifying data volume growth and making storage a “pay as you grow” proposition.

· Fast, space-efficient clones rapidly duplicate virtual storage volumes so that virtual machines can be cloned simply through metadata operations, with actual data copied only for write operations.

· Snapshots help facilitate backup and remote-replication operations, which are needed in enterprises that require always-on data availability.

Cisco HyperFlex Connect HTML5 Management Web Page

An all-new HTML 5 based Web UI is available for use as the primary management tool for Cisco HyperFlex. Through this centralized point of control for the cluster, administrators can create volumes, monitor the data platform health, and manage resource use. Administrators can also use this data to predict when the cluster will need to be scaled. To use the HyperFlex Connect UI, connect using a web browser to the HyperFlex cluster IP address: http://<hx controller cluster ip>.

Figure 16 HyperFlex Connect GUI

Cisco Intersight Cloud Based Management

Cisco Intersight (https://intersight.com), previously known as Starship, is the latest visionary cloud-based management tool, designed to provide a centralized off-site management, monitoring and reporting tool for all of your Cisco UCS based solutions. In the initial release of Cisco Intersight, monitoring and reporting is enabled against Cisco HyperFlex clusters. The Cisco Intersight website and framework can be upgraded with new and enhanced features independently of the products that are managed, meaning that many new features and capabilities can come with no downtime or upgrades required by the end users. Future releases of Cisco HyperFlex will enable further functionality along with these upgrades to the Cisco Intersight framework. This unique combination of embedded and online technologies will result in a complete cloud-based management solution that can care for Cisco HyperFlex throughout the entire lifecycle, from deployment through retirement.

Figure 17 Cisco Intersight

Cisco HyperFlex HX Data Platform Administration Plug-in

The Cisco HyperFlex HX Data Platform is also administered secondarily through a VMware vSphere web client plug-in.

Figure 18 HyperFlex Web Client Plugin

Cisco HyperFlex HX Data Platform Controller

A Cisco HyperFlex HX Data Platform controller resides on each node and implements the distributed file system. The controller runs as software in user space within a virtual machine, and intercepts and handles all I/O from the guest virtual machines. The Storage Controller Virtual Machine (SCVM) uses the VMDirectPath I/O feature to provide PCI passthrough control of the physical server’s SAS disk controller. This method gives the controller VM full control of the physical disk resources, utilizing the SSD drives as a read/write caching layer, and the HDDs or SDDs as a capacity layer for distributed storage. The controller integrates the data platform into the VMware vSphere cluster through the use of three preinstalled VMware ESXi vSphere Installation Bundles (VIBs) on each node:

· IO Visor: This VIB provides a network file system (NFS) mount point so that the ESXi hypervisor can access the virtual disks that are attached to individual virtual machines. From the hypervisor’s perspective, it is simply attached to a network file system. The IO Visor intercepts guest VM IO traffic, and intelligently redirects it to the HyperFlex SCVMs.

· VMware API for Array Integration (VAAI): This storage offload API allows vSphere to request advanced file system operations such as snapshots and cloning. The controller implements these operations via manipulation of the filesystem metadata rather than actual data copying, providing rapid response, and thus rapid deployment of new environments.

· stHypervisorSvc: This VIB adds enhancements and features needed for HyperFlex data protection and VM replication.

Data Operations and Distribution

The Cisco HyperFlex HX Data Platform controllers handle all read and write operation requests from the guest VMs to their virtual disks (VMDK) stored in the distributed datastores in the cluster. The data platform distributes the data across multiple nodes of the cluster, and also across multiple capacity disks of each node, according to the replication level policy selected during the cluster setup. This method avoids storage hotspots on specific nodes, and on specific disks of the nodes, and thereby also avoids networking hotspots or congestion from accessing more data on some nodes versus others.

Replication Factor

The policy for the number of duplicate copies of each storage block is chosen during cluster setup, and is referred to as the replication factor (RF).

· Replication Factor 3: For every I/O write committed to the storage layer, 2 additional copies of the blocks written will be created and stored in separate locations, for a total of 3 copies of the blocks. Blocks are distributed in such a way as to ensure multiple copies of the blocks are not stored on the same disks, nor on the same nodes of the cluster. This setting can tolerate simultaneous failures of 2 entire nodes in a cluster of 5 nodes or greater, without losing data and resorting to restore from backup or other recovery processes. RF3 is recommended for all production systems.

· Replication Factor 2: For every I/O write committed to the storage layer, 1 additional copy of the blocks written will be created and stored in separate locations, for a total of 2 copies of the blocks. Blocks are distributed in such a way as to ensure multiple copies of the blocks are not stored on the same disks, nor on the same nodes of the cluster. This setting can tolerate a failure of 1 entire node without losing data and resorting to restore from backup or other recovery processes. RF2 is suitable for non-production systems, or environments where the extra data protection is not needed.

Data Write and Compression Operations

Internally, the contents of each virtual disk are subdivided and spread across multiple servers by the HXDP software. For each write operation, the data is intercepted by the IO Visor module on the node where the VM is running, a primary node is determined for that particular operation via a hashing algorithm, and then sent to the primary node via the network. The primary node compresses the data in real time, writes the compressed data to its caching SSD, and replica copies of that compressed data are written to the caching SSD of the remote nodes in the cluster, according to the replication factor setting. For example, at RF=3 a write will be written to the primary node for that virtual disk address, and two additional writes will be committed in parallel on two other nodes. Because the virtual disk contents have been divided and spread out via the hashing algorithm, this method results in all writes being spread across all nodes, avoiding the problems with data locality and “noisy” VMs consuming all the IO capacity of a single node. The write operation will not be acknowledged until all three copies are written to the caching layer SSDs. Written data is also cached in a write log area resident in memory in the controller VM, along with the write log on the caching SSDs. This process speeds up read requests when reads are requested of data that has recently been written.

Data Destaging and Deduplication

The Cisco HyperFlex HX Data Platform constructs multiple write caching segments on the caching SSDs of each node in the distributed cluster. As write cache segments become full, and based on policies accounting for I/O load and access patterns, those write cache segments are locked and new writes roll over to a new write cache segment. The data in the now locked cache segment is destaged to the HDD capacity layer of the nodes for the Hybrid system or to the SDD capacity layer of the nodes for the All-Flash system. During the destaging process, data is deduplicated before being written to the capacity storage layer, and the resulting data can now be written to the HDDs or SDDs of the server. On hybrid systems, the now deduplicated and compressed data is also written to the dedicated read cache area of the caching SSD, which speeds up read requests of data that has recently been written. When the data is destaged to a HDD, it is written in a single sequential operation, avoiding disk head seek thrashing on the spinning disks and accomplishing the task in the minimal amount of time. Since the data is already deduplicated and compressed before being written, the platform avoids additional I/O overhead often seen on competing systems, which must later do a read/dedupe/compress/write cycle. Deduplication, compression and destaging take place with no delays or I/O penalties to the guest VMs making requests to read or write data, which benefits both the HDD and SDD configurations.

Figure 19 HyperFlex HX Data Platform Data Movement

Data Read Operations

For data read operations, data may be read from multiple locations. For data that was very recently written, the data is likely to still exist in the write log of the local platform controller memory, or the write log of the local caching layer disk. If local write logs do not contain the data, the distributed filesystem metadata will be queried to see if the data is cached elsewhere, either in write logs of remote nodes, or in the dedicated read cache area of the local and remote caching SSDs of hybrid nodes. Finally, if the data has not been accessed in a significant amount of time, the filesystem will retrieve the requested data from the distributed capacity layer. As requests for reads are made to the distributed filesystem and the data is retrieved from the capacity layer, the caching SSDs of hybrid nodes populate their dedicated read cache area to speed up subsequent requests for the same data. This multi-tiered distributed system with several layers of caching techniques, ensures that data is served at the highest possible speed, leveraging the caching SSDs of the nodes fully and equally. All-flash configurations, however, do not employ a dedicated read cache because such caching does not provide any performance benefit; the persistent data copy already resides on high-performance SSDs.

In summary, the Cisco HyperFlex HX Data Platform implements a distributed, log-structured file system that performs data operations via two configurations:

· In a Hybrid configuration, the data platform provides a caching layer using SSDs to accelerate read requests and write responses, and it implements a storage capacity layer using HDDs.

· In an All-Flash configuration, the data platform provides a dedicated caching layer using high endurance SSDs to accelerate write responses, and it implements a storage capacity layer also using SSDs. Read requests are fulfilled directly from the capacity SSDs, as a dedicated read cache is not needed to accelerate read operations.

Requirements

The following sections detail the physical hardware, software revisions, and firmware versions required to install the Cisco HyperFlex system. Maximum cluster size of 32 nodes can be obtained by combining 16 converged nodes (storage nodes), and 16 compute-only nodes (all-flash only, hybrid cluster maximum size is 8 converged nodes, plus 8 compute-only nodes).

Physical Components

Table 1 HyperFlex System Components

| Component |

Hardware Required |

| Fabric Interconnects |

Two Cisco UCS 6248UP Fabric Interconnects, or Two Cisco UCS 6296UP Fabric Interconnects, or Two Cisco UCS 6332 Fabric Interconnects, or Two Cisco UCS 6332-16UP Fabric Interconnects |

| Servers |

Three to Sixteen Cisco HyperFlex HXAF220c-M5SX All-Flash rack servers, or Three to Sixteen Cisco HyperFlex HXAF240c-M5SX All-Flash rack servers, or Three to Eight Cisco HyperFlex HX220c-M5SX Hybrid rack servers, or Three to Eight Cisco HyperFlex HX240c-M5SX Hybrid rack servers, or Three to Sixteen Cisco HyperFlex HXAF220c-M4S All-Flash rack servers, or Three to Sixteen Cisco HyperFlex HXAF240c-M4SX All-Flash rack servers, or Three to Eight Cisco HyperFlex HX220c-M4S Hybrid rack servers, or Three to Eight Cisco HyperFlex HX240c-M4SX Hybrid rack servers |

For complete server specifications and more information, please refer to the links below:

Compare models:

HXAF220c-M5SX Spec Sheet:

HXAF240c-M5SX Spec Sheet:

HX220c-M5SX Spec Sheet:

HX240c-M5SX Spec Sheet:

HXAF220c-M4S Spec Sheet:

HXAF240c-M4S Spec Sheet:

HX220c-M4S Spec Sheet:

HX240c-M4SX Spec Sheet:

Table 2 lists the hardware component options for the HXAF220c-M5SX server model:

Table 2 HXAF220c-M5SX Server Options

| HXAF220c-M5SX options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel Xeon Processor Scalable Family CPUs |

|

| Memory |

192 GB to 3 TB of total memory using 16 GB, 32 GB, 64 GB, or 128 GB DDR4 2666 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSDs |

Standard |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 400 GB 2.5 Inch Enterprise Performance 12G SAS SSD, or One 1.6 TB 2.5 Inch Enterprise Performance NVMe SSD · Six to eight 3.8 TB 2.5 Inch Enterprise Value 6G SATA SSDs, or Six to eight 960 GB 2.5 Inch Enterprise Value 6G SATA SSDs |

| SED |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSD · Six to eight 3.8 TB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or Six to eight 960 GB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or six to eight 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs |

|

| Network |

Cisco UCS VIC1387 VIC MLOM |

|

| Boot Device |

One 240 GB M.2 form factor SATA SSD |

|

| microSD Card |

One 32GB microSD card for local host utilities storage |

|

| Optional |

Cisco QSA module to convert 40 GbE QSFP+ to 10 GbE SFP+ |

|

Table 3 lists the hardware component options for the HXAF240c-M5SX server model:

Table 3 HXAF240c-M5SX Server Options

| HXAF240c-M5SX Options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel Xeon Processor Scalable Family CPUs |

|

| Memory |

192 GB to 3 TB of total memory using 16 GB, 32 GB, 64 GB, or 128 GB DDR4 2666 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSD |

Standard |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 400 GB 2.5 Inch Enterprise Performance 12G SAS SSD, or One 1.6 TB 2.5 Inch Enterprise Performance NVMe SSD · Six to twenty-three 3.8 TB 2.5 Inch Enterprise Value 6G SATA SSDs, or Six to twenty-three 960 GB 2.5 Inch Enterprise Value 6G SATA SSDs |

| SED |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSD · Six to twenty-three 3.8 TB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or Six to twenty-three 960 GB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or six to twenty-three 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs |

|

| Network |

Cisco UCS VIC1387 VIC MLOM |

|

| Boot Device |

One 240 GB M.2 form factor SATA SSD |

|

| microSD Card |

One 32GB microSD card for local host utilities storage |

|

| Optional |

Cisco QSA module to convert 40 GbE QSFP+ to 10 GbE SFP+ |

|

Table 4 lists the hardware component options for the HX220c-M5SX server model:

Table 4 HX220c-M5SX Server Options

| HX220c-M5SX Options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel Xeon Processor Scalable Family CPUs |

|

| Memory |

192 GB to 3 TB of total memory using 16 GB, 32 GB, 64 GB, or 128 GB DDR4 2666 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSDs |

Standard |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 480 GB 2.5 Inch Enterprise Performance 6G SATA SSD, or One 800 GB 2.5 Inch Enterprise Performance 12G SAS SSD |

| SED |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSD |

|

| HDDs |

Standard |

Six to eight 1.8 TB or 1.2 TB SAS 12Gbps 10K rpm SFF HDD |

| SED |

Six to eight 1.2 TB SAS 12Gbps 10K rpm SFF SED HDD |

|

| Network |

Cisco UCS VIC1387 VIC MLOM |

|

| Boot Device |

One 240 GB M.2 form factor SATA SSD |

|

| microSD Card |

One 32GB microSD card for local host utilities storage |

|

| Optional |

Cisco QSA module to convert 40 GbE QSFP+ to 10 GbE SFP+ |

|

Table 5 lists the hardware component options for the HX240c-M5SX server model:

Table 5 HX240c-M5SX Server Options

| HX240c-M5SX Options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel Xeon Processor Scalable Family CPUs |

|

| Memory |

192 GB to 3 TB of total memory using 16 GB, 32 GB, 64 GB, or 128 GB DDR4 2666 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSDs |

Standard |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 1.6 TB 2.5 Inch Enterprise Performance 12G SAS SSD |

| SED |

· One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 1.6 TB 2.5 Inch Enterprise Performance 12G SAS SED SSD |

|

| HDDs |

Standard |

Six to twenty-three 1.8 TB or 1.2 TB SAS 12Gbps 10K rpm SFF HDD |

| SED |

Six to twenty-three 1.2 TB SAS 12Gbps 10K rpm SFF SED HDD |

|

| Network |

Cisco UCS VIC1387 VIC MLOM |

|

| Boot Device |

One 240 GB M.2 form factor SATA SSD |

|

| microSD Card |

One 32GB microSD card for local host utilities storage |

|

| Optional |

Cisco QSA module to convert 40 GbE QSFP+ to 10 GbE SFP+ |

|

Table 6 lists the hardware component options for the HXAF220c-M4S server model:

Table 6 HXAF220c-M4S Server Options

| HXAF220c-M4S options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel E5-2600 v4 CPUs |

|

| Memory |

128 GB to 1.5 TB of total memory using 16 GB, 32 GB, or 64 GB DDR4 2400 MHz 1.2v modules, or 64 GB DDR4 2133 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSDs |

Standard |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 400 GB 2.5 Inch Enterprise Performance 12G SAS SSD, or One 800 GB 2.5 Inch Enterprise Performance 12G SAS SSD, or One 400 GB 2.5 Inch Enterprise Performance NVMe SSD · Six 3.8 TB 2.5 Inch Enterprise Value 6G SATA SSDs, or Six 960 GB 2.5 Inch Enterprise Value 6G SATA SSDs |

| SED |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs · Six 3.8 TB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or Six 960 GB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or Six 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs |

|

| Network |

Cisco UCS VIC1227 VIC MLOM, or Cisco UCS VIC1387 VIC MLOM |

|

| Boot Devices |

Two 64GB Cisco FlexFlash SD Cards for UCS Servers |

|

Table 7 lists the hardware component options for the HXAF240c-M4SX server model:

Table 7 HXAF240c-M4S Server Options

| HXAF240c-M4SX Options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel E5-2600 v4 CPUs |

|

| Memory |

128 GB to 1.5 TB of total memory using 16 GB, 32 GB, or 64 GB DDR4 2400 MHz 1.2v modules, or 64 GB DDR4 2133 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSD |

Standard |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD (in the rear disk enclosure) · One 400 GB 2.5 Inch Enterprise Performance 12G SAS SSD, or One 800 GB 2.5 Inch Enterprise Performance 12G SAS SSD, or One 400 GB 2.5 Inch Enterprise Performance NVMe SSD · Six to Twenty-three 3.8 TB 2.5 Inch Enterprise Value 6G SATA SSDs, or Six to Twenty-three 960 GB 2.5 Inch Enterprise Value 6G SATA SSDs |

| SED |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD (in a front disk bay) · One 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs · Six to Twenty-two 3.8 TB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or Six to Twenty-two 960 GB 2.5 Inch Enterprise Value 6G SATA SED SSDs, or Six to Twenty-two 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs |

|

| Network |

Cisco UCS VIC1227 VIC MLOM, or Cisco UCS VIC1387 VIC MLOM |

|

| Boot Devices |

Two 64GB Cisco FlexFlash SD Cards for UCS Servers |

|

Table 8 lists the hardware component options for the HX220c-M4S server model:

Table 8 HX220c-M4S Server Options

| HX220c-M4S Options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel E5-2600 v4 CPUs |

|

| Memory |

128 GB to 1.5 TB of total memory using 16 GB, 32 GB, or 64 GB DDR4 2400 MHz 1.2v modules, or 64 GB DDR4 2133 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSDs |

Standard |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 480 GB 2.5 Inch Enterprise Performance 6G SATA SSD |

| SED |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD · One 800 GB 2.5 Inch Enterprise Performance 12G SAS SED SSDs |

|

| HDDs |

Standard |

Six 1.8 TB or 1.2 TB SAS 12Gbps 10K rpm SFF HDD |

| SED |

Six 1.2 TB SAS 12Gbps 10K rpm SFF SED HDD |

|

| Network |

Cisco UCS VIC1227 VIC MLOM, or Cisco UCS VIC1387 VIC MLOM |

|

| Boot Devices |

Two 64GB Cisco FlexFlash SD Cards for Cisco UCS Servers |

|

Table 9 lists the hardware component options for the HX240c-M4SX server model:

Table 9 HX240c-M4SX Server Options

| HX240c-M4SX Options |

Hardware Required |

|

| Processors |

Chose a matching pair of Intel E5-2600 v4 CPUs |

|

| Memory |

128 GB to 1.5 TB of total memory using 16 GB, 32 GB, or 64 GB DDR4 2400 MHz 1.2v modules, or 64 GB DDR4 2133 MHz 1.2v modules |

|

| Disk Controller |

Cisco 12Gbps Modular SAS HBA |

|

| SSDs |

Standard |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD (in the rear disk enclosure) · One 1.6 TB 2.5 Inch Enterprise Performance 6G SATA SSD |

| SED |

· One 120 GB 2.5 Inch Enterprise Value 6G SATA SSD, or One 240 GB 2.5 Inch Enterprise Value 6G SATA SSD (in a front disk bay) · One 1.6 TB 2.5 Inch Enterprise Performance 12G SAS SED SSD |

|

| HDDs |

Standard |

Six to twenty-three 1.8 TB or 1.2 TB SAS 12Gbps 10K rpm SFF HDD |

| SED |

Six to twenty-two 1.2 TB SAS 12Gbps 10K rpm SFF SED HDD |

|

| Network |

Cisco UCS VIC1227 VIC MLOM, or Cisco UCS VIC1387 VIC MLOM |

|

| Boot Devices |

Two 64GB Cisco FlexFlash SD Cards for Cisco UCS Servers |

|

Software Components

Table 10 lists the software components and the versions required for the Cisco HyperFlex system:

| Component |

Software Required |

| Hypervisor |

VMware ESXi 6.0 U1b, 6.0 U2, 6.0 U2 Patch 3, 6.0 U2 Patch 4, 6.0 U3 or VMware ESXi 6.5 Patch 1a, VMware 6.5 U1 ESXi 6.5 U1 is recommended (CISCO Custom Image for ESXi 6.5 Update 1: HX-Vmware-ESXi-6.5U1-5969303-Cisco-Custom-6.5.1.1.iso)

|

| Management Server |

VMware vCenter Server for Windows or vCenter Server Appliance 6.0 U1 or later. Refer to http://www.vmware.com/resources/compatibility/sim/interop_matrix.php for interoperability of your ESXi version and vCenter Server.

|

| Cisco HyperFlex HX Data Platform |

Cisco HyperFlex HX Data Platform Software 2.6.1b |

| Cisco UCS Firmware |

Cisco UCS Infrastructure software, B-Series and C-Series bundles, revision 3.2(2d) or later.

|

Considerations

Version Control

The software revisions listed in Table 10 are the only valid and supported configuration at the time of the publishing of this validated design. Special care must be taken not to alter the revision of the hypervisor, vCenter server, Cisco HX platform software, or the Cisco UCS firmware without first consulting the appropriate release notes and compatibility matrixes to ensure that the system is not being modified into an unsupported configuration.

vCenter Server

The vCenter Server must be installed and operational prior to the installation of the Cisco HyperFlex HX Data Platform software. The following best practice guidance applies to installations of HyperFlex 2.5:

· Do not modify the default TCP port settings of the vCenter installation. Using non-standard ports can lead to failures during the installation.

· It is recommended to build the vCenter server on a physical server or in a virtual environment outside of the HyperFlex cluster. Building the vCenter server as a virtual machine inside the HyperFlex cluster environment is highly discouraged. There is a tech note for multiple methods of deployment if no external vCenter server is already available: http://www.cisco.com/c/en/us/td/docs/hyperconverged_systems/HyperFlex_HX_DataPlatformSoftware/TechNotes/Nested_vcenter_on_hyperflex.html

![]() Note: This document does not cover the installation and configuration of VMware vCenter Server for Windows, or the vCenter Server Appliance.

Note: This document does not cover the installation and configuration of VMware vCenter Server for Windows, or the vCenter Server Appliance.

Scale

Cisco HyperFlex clusters currently scale up from a minimum of 3 to a maximum of 16 converged nodes per all-flash cluster, i.e. 16 nodes providing storage resources to the HX Distributed Filesystem. For clusters with HX hybrid nodes, the limit is 8 converged nodes. For the compute intensive “extended” cluster design, a configuration with 3-16 Cisco HX-series all-flash converged nodes can be combined with up to 16 compute nodes. Since the quantity of compute-only nodes cannot exceed the quantity of converged nodes, in clusters with hybrid HX converged servers, the maximum number of compute-only nodes is 8. Cisco blade servers and rack-mount servers can be used for the compute only nodes. It is required that the number of compute-only nodes should always be less than or equal to number of converged nodes.

Once the maximum size of a cluster has been reached, the environment can be “scaled out” by adding additional HX model servers to the Cisco UCS domain, installing an additional HyperFlex cluster on them, and controlling them via the same vCenter server. A maximum of 8 clusters can be created in a single UCS domain, and up to 100 HyperFlex clusters can be managed by a single vCenter server.

Capacity

Overall usable cluster capacity is based on a number of factors. The number of nodes in the cluster must be considered, plus the number and size of the capacity layer disks. Caching disk sizes are not calculated as part of the cluster capacity. The replication factor of the HyperFlex HX Data Platform also affects the cluster capacity as it defines the number of copies of each block of data written.

Disk drive manufacturers have adopted a size reporting methodology using calculation by powers of 10, also known as decimal prefix. As an example, a 120 GB disk is listed with a minimum of 120 x 10^9 bytes of usable addressable capacity, or 120 billion bytes. However, many operating systems and filesystems report their space based on standard computer binary exponentiation, or calculation by powers of 2, also called binary prefix. In this example, 2^10 or 1024 bytes make up a kilobyte, 2^10 kilobytes make up a megabyte, 2^10 megabytes make up a gigabyte, and 2^10 gigabytes make up a terabyte. As the values increase, the disparity between the two systems of measurement and notation get worse, at the terabyte level, the deviation between a decimal prefix value and a binary prefix value is nearly 10 percent.

The International System of Units (SI) defines values and decimal prefix by powers of 10 as follows:

Table 11 SI Unit Values (Decimal Prefix)

| Value |

Symbol |

Name |

| 1000 bytes |

kB |

Kilobyte |

| 1000 kB |

MB |

Megabyte |

| 1000 MB |

GB |

Gigabyte |

| 1000 GB |

TB |

Terabyte |

The International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) defines values and binary prefix by powers of 2 in ISO/IEC 80000-13:2008 Clause 4 as follows:

Table 12 IEC unit values (binary prefix)

| Value |

Symbol |

Name |

| 1024 bytes |

KiB |

Kibibyte |

| 1024 KiB |

MiB |

Mebibyte |

| 1024 MiB |

GiB |

Gibibyte |

| 1024 GiB |

TiB |

Tebibyte |

For the purpose of this document, the decimal prefix numbers are used only for raw disk capacity as listed by the respective manufacturers. For all calculations where raw or usable capacities are shown from the perspective of the HyperFlex software, filesystems or operating systems, the binary prefix numbers are used. This is done primarily to show a consistent set of values as seen by the end user from within the HyperFlex vCenter Web Plugin and HyperFlex Connect GUI when viewing cluster capacity, allocation and consumption, and also within most operating systems.

Table 13 lists a set of HyperFlex HX Data Platform cluster usable capacity values, using binary prefix, for an array of cluster configurations. These values are useful for determining the appropriate size of HX cluster to initially purchase, and how much capacity can be gained by adding capacity disks. The calculations for these values are listed in Appendix A: Cluster Capacity Calculations. The HyperFlex tool to help with sizing is listed in Appendix B: HyperFlex Sizer.

Table 13 Cluster Usable Capacities

| HX-Series Server Model |

Node Quantity |

Capacity Disk Size (each) |

Capacity Disk Quantity (per node) |

Cluster Usable Capacity at RF=2 |

Cluster Usable Capacity at RF=3 |

| HXAF220c-M4S |

8 |

3.8 TB |

6 |

77.1 TiB |

51.4 TiB |

| 960 GB |

6 |

19.3 TiB |

12.9 TiB |

||

| 800 GB |

6 |

16.1 TiB |

10.7 TiB |

||

| HXAF240c-M4SX |

8 |

3.8 TB |

6 |

77.1 TiB |

51.4 TiB |

| 15 |

192.8 TiB |

128.5 TiB |

|||

| 23 |

295.7 TiB |

197.1 TiB |

|||

| 960 GB |

6 |

19.3 TiB |

12.9 TiB |

||

| 15 |

48.2 TiB |

32.1 TiB |

|||

| 23 |

73.9 TiB |

49.3 TiB |

|||

| 800 GB |

6 |

16.1 TiB |

10.7 TiB |

||

| 15 |

40.2 TiB |

26.8 TiB |

|||

| 22 |

58.9 TiB |

39.3 TiB |

|||

| HX220c-M4S |

8 |

1.2 TB |

6 |

24.1 TiB |

16.1 TiB |

| 1.8 TB |

6 |

36.2 TiB |

24.1 TiB |

||

| HX240c-M4SX |

8 |

1.2 TB |

6 |

24.1 TiB |

16.1 TiB |

| 15 |

60.2 TiB |

40.2 TiB |

|||

| 23 |

92.4 TiB |

61.6 TiB |

|||

| 1.8 TB |

6 |

36.2 TiB |

24.1 TiB |

||

| 15 |

90.4 TiB |

60.2 TiB |

|||

| 23 |

138.6 TiB |

92.4 TiB |

Physical Topology

Topology Overview

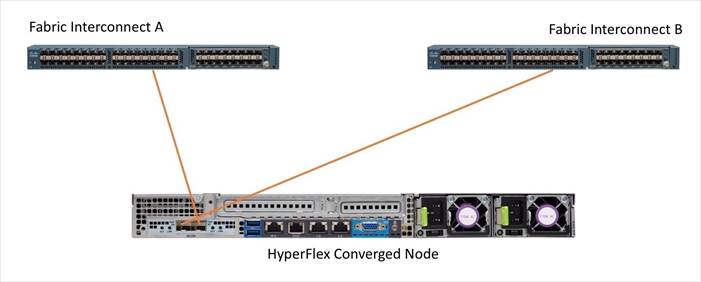

The Cisco HyperFlex system is composed of a pair of Cisco UCS Fabric Interconnects along with up to sixteen HX-Series rack-mount servers per cluster. Up to sixteen compute-only servers can also be added per HyperFlex cluster. Adding Cisco UCS rack-mount servers and/or Cisco UCS 5108 Blade chassis, which house Cisco UCS blade servers, allows for additional compute resources in an extended cluster design. Up to eight separate HX clusters can be installed under a single pair of Fabric Interconnects. The two Fabric Interconnects both connect to every HX-Series rack-mount server, and both connect to every Cisco UCS 5108 blade chassis, and Cisco UCS rack-mount server. Upstream network connections, also referred to as “northbound” network connections are made from the Fabric Interconnects to the customer datacenter network at the time of installation.

Figure 20 HyperFlex Standard Cluster Topology

Figure 21 HyperFlex Extended Cluster Topology