更换控制器服务器UCS C240 M4 - vEPC

下载选项

非歧视性语言

此产品的文档集力求使用非歧视性语言。在本文档集中,非歧视性语言是指不隐含针对年龄、残障、性别、种族身份、族群身份、性取向、社会经济地位和交叉性的歧视的语言。由于产品软件的用户界面中使用的硬编码语言、基于 RFP 文档使用的语言或引用的第三方产品使用的语言,文档中可能无法确保完全使用非歧视性语言。 深入了解思科如何使用包容性语言。

关于此翻译

思科采用人工翻译与机器翻译相结合的方式将此文档翻译成不同语言,希望全球的用户都能通过各自的语言得到支持性的内容。 请注意:即使是最好的机器翻译,其准确度也不及专业翻译人员的水平。 Cisco Systems, Inc. 对于翻译的准确性不承担任何责任,并建议您总是参考英文原始文档(已提供链接)。

Contents

Introduction

本文描述要求的步骤替换在设置的Ultra-M的有故障的控制器服务器主机StarOS虚拟网络作用(VNFs)。

背景信息

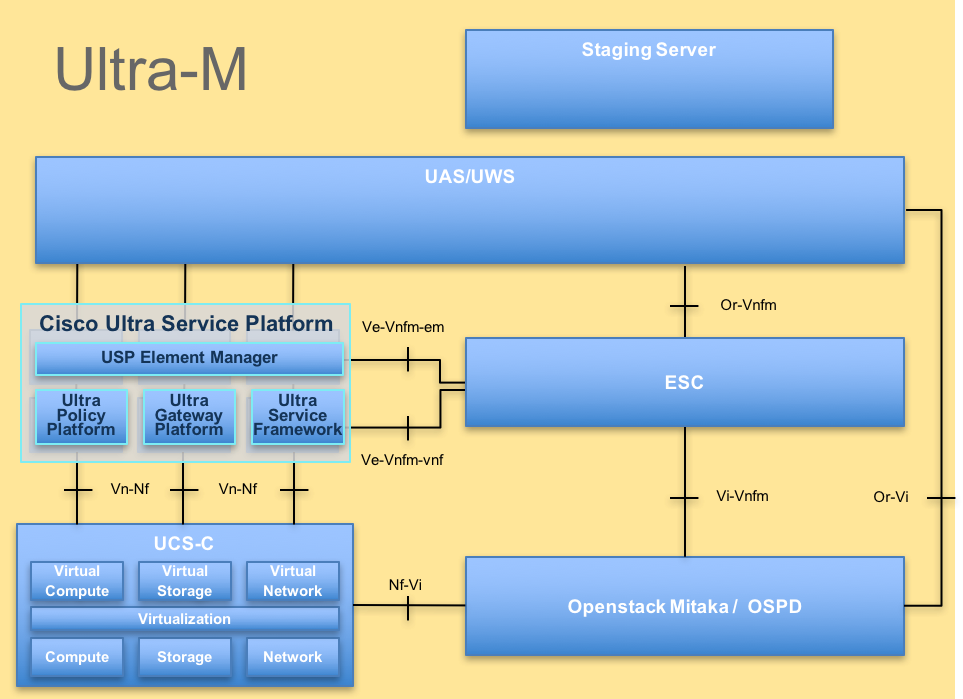

Ultra-M是设计为了简化VNFs的配置的一个被预先包装的和被验证的虚拟化的便携信息包核心解决方案。OpenStack是虚拟化的基础设施管理器(精力) Ultra-M的并且包括这些节点类型:

- 估计

- 对象存储磁盘-估计(OSD -估计)

- 控制器

- OpenStack平台-导向器(OSPD)

Ultra-M高级体系结构和介入的组件在此镜像表示:

UltraM体系结构

UltraM体系结构

本文供熟悉Cisco Ultra-M平台的Cisco人员使用,并且选派要求被执行在OpenStack和StarOS VNF级别在控制器服务器更换时的步骤。

Note:超M 5.1.x版本考虑为了定义在本文的程序。

简称

| VNF | 虚拟网络功能 |

| CF | 控制功能 |

| SF | 服务功能 |

| ESC | 有弹性服务控制器 |

| MOP | 程序方法 |

| OSD | 对象存储磁盘 |

| HDD | 硬盘驱动器 |

| SSD | 固体驱动 |

| 精力 | 虚拟基础设施管理器 |

| VM | 虚拟机 |

| EM | 网元管理 |

| UAS | 超自动化服务 |

| UUID | 通用唯一标识符 |

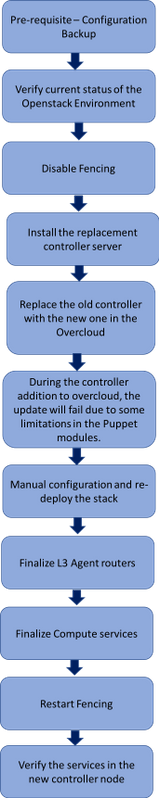

Mop的工作流

替换程序的高级工作流

替换程序的高级工作流

Prerequisites

备份

在恢复的情况下, Cisco推荐使用OSPD数据库(DB)的备份与使用这些步骤:

[root@director ~]# mysqldump --opt --all-databases > /root/undercloud-all-databases.sql

[root@director ~]# tar --xattrs -czf undercloud-backup-`date +%F`.tar.gz /root/undercloud-all-databases.sql

/etc/my.cnf.d/server.cnf /var/lib/glance/images /srv/node /home/stack

tar: Removing leading `/' from member names

初步的状态检查

请注意检查OpenStack环境和服务的当前状态和它是健康的,在您继续进行替换程序前。它可帮助在控制器替换过程时避免复杂化。

- 检查OpenStack和节点列表的状况:

[stack@director ~]$ source stackrc

[stack@director ~]$ openstack stack list --nested

[stack@director ~]$ ironic node-list

[stack@director ~]$ nova list

- 检查在控制器的心脏起搏器状态:

登陆到其中一个激活控制器并且检查心脏起搏器状态。所有服务在发生故障的控制器应该运作在可用的控制器和终止。

[stack@pod1-controller-0 ~]# pcs status

<snip>

Online: [ pod1-controller-0 pod1-controller-1 ]

OFFLINE: [ pod1-controller-2 ]

Full list of resources:

ip-11.120.0.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-172.25.22.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.107 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

ip-11.120.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-11.119.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-0 ]

Slaves: [ pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

ip-11.118.0.104 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-0

my-ipmilan-for-controller-6 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-controller-4 (stonith:fence_ipmilan): Started pod1-controller-0

my-ipmilan-for-controller-7 (stonith:fence_ipmilan): Started pod1-controller-0

Failed Actions:

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

在本例中, Controller-2脱机。它,因此,将被替换。Controller-0和Controller-1是可操作的和管理簇服务。

- 检查在激活控制器的MariaDB状态:

[stack@director] nova list | grep control

| 4361358a-922f-49b5-89d4-247a50722f6d | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.102 |

| d0f57f27-93a8-414f-b4d8-957de0d785fc | pod1-controller-1 | ACTIVE | - | Running | ctlplane=192.200.0.110 |

[stack@director ~]$ for i in 192.200.0.102 192.200.0.110 ; do echo "*** $i ***" ; ssh heat-admin@$i "sudo mysql --exec=\"SHOW STATUS LIKE 'wsrep_local_state_comment'\" ; sudo mysql --exec=\"SHOW STATUS LIKE 'wsrep_cluster_size'\""; done

*** 192.200.0.152 ***

Variable_name Value

wsrep_local_state_comment Synced

Variable_name Value

wsrep_cluster_size 2

*** 192.200.0.154 ***

Variable_name Value

wsrep_local_state_comment Synced

Variable_name Value

wsrep_cluster_size 2

验证这些线路为每个激活控制器是存在:

wsrep_local_state_comment :同步的

wsrep_cluster_size :2

- 检查在激活控制器的Rabbitmq状态。发生故障的控制器不应该出现于运行的节点列表。

[heat-admin@pod1-controller-0 ~] sudo rabbitmqctl cluster_status

Cluster status of node 'rabbit@pod1-controller-0' ...

[{nodes,[{disc,['rabbit@pod1-controller-0','rabbit@pod1-controller-1',

'rabbit@pod1-controller-2']}]},

{running_nodes,['rabbit@pod1-controller-1',

'rabbit@pod1-controller-0']},

{cluster_name,<<"rabbit@pod1-controller-2.localdomain">>},

{partitions,[]},

{alarms,[{'rabbit@pod1-controller-1',[]},

{'rabbit@pod1-controller-0',[]}]}]

[heat-admin@pod1-controller-1 ~] sudo rabbitmqctl cluster_status

Cluster status of node 'rabbit@pod1-controller-1' ...

[{nodes,[{disc,['rabbit@pod1-controller-0','rabbit@pod1-controller-1',

'rabbit@pod1-controller-2']}]},

{running_nodes,['rabbit@pod1-controller-0',

'rabbit@pod1-controller-1']},

{cluster_name,<<"rabbit@pod1-controller-2.localdomain">>},

{partitions,[]},

{alarms,[{'rabbit@pod1-controller-0',[]},

{'rabbit@pod1-controller-1',[]}]}]

- 是否请检查所有undercloud服务在装载,活动和运行状态从OSP-D节点。

[stack@director ~]$ systemctl list-units "openstack*" "neutron*" "openvswitch*"

UNIT LOAD ACTIVE SUB DESCRIPTION

neutron-dhcp-agent.service loaded active running OpenStack Neutron DHCP Agent

neutron-openvswitch-agent.service loaded active running OpenStack Neutron Open vSwitch Agent

neutron-ovs-cleanup.service loaded active exited OpenStack Neutron Open vSwitch Cleanup Utility

neutron-server.service loaded active running OpenStack Neutron Server

openstack-aodh-evaluator.service loaded active running OpenStack Alarm evaluator service

openstack-aodh-listener.service loaded active running OpenStack Alarm listener service

openstack-aodh-notifier.service loaded active running OpenStack Alarm notifier service

openstack-ceilometer-central.service loaded active running OpenStack ceilometer central agent

openstack-ceilometer-collector.service loaded active running OpenStack ceilometer collection service

openstack-ceilometer-notification.service loaded active running OpenStack ceilometer notification agent

openstack-glance-api.service loaded active running OpenStack Image Service (code-named Glance) API server

openstack-glance-registry.service loaded active running OpenStack Image Service (code-named Glance) Registry server

openstack-heat-api-cfn.service loaded active running Openstack Heat CFN-compatible API Service

openstack-heat-api.service loaded active running OpenStack Heat API Service

openstack-heat-engine.service loaded active running Openstack Heat Engine Service

openstack-ironic-api.service loaded active running OpenStack Ironic API service

openstack-ironic-conductor.service loaded active running OpenStack Ironic Conductor service

openstack-ironic-inspector-dnsmasq.service loaded active running PXE boot dnsmasq service for Ironic Inspector

openstack-ironic-inspector.service loaded active running Hardware introspection service for OpenStack Ironic

openstack-mistral-api.service loaded active running Mistral API Server

openstack-mistral-engine.service loaded active running Mistral Engine Server

openstack-mistral-executor.service loaded active running Mistral Executor Server

openstack-nova-api.service loaded active running OpenStack Nova API Server

openstack-nova-cert.service loaded active running OpenStack Nova Cert Server

openstack-nova-compute.service loaded active running OpenStack Nova Compute Server

openstack-nova-conductor.service loaded active running OpenStack Nova Conductor Server

openstack-nova-scheduler.service loaded active running OpenStack Nova Scheduler Server

openstack-swift-account-reaper.service loaded active running OpenStack Object Storage (swift) - Account Reaper

openstack-swift-account.service loaded active running OpenStack Object Storage (swift) - Account Server

openstack-swift-container-updater.service loaded active running OpenStack Object Storage (swift) - Container Updater

openstack-swift-container.service loaded active running OpenStack Object Storage (swift) - Container Server

openstack-swift-object-updater.service loaded active running OpenStack Object Storage (swift) - Object Updater

openstack-swift-object.service loaded active running OpenStack Object Storage (swift) - Object Server

openstack-swift-proxy.service loaded active running OpenStack Object Storage (swift) - Proxy Server

openstack-zaqar.service loaded active running OpenStack Message Queuing Service (code-named Zaqar) Server

openstack-zaqar@1.service loaded active running OpenStack Message Queuing Service (code-named Zaqar) Server Instance 1

openvswitch.service loaded active exited Open vSwitch

LOAD = Reflects whether the unit definition was properly loaded.

ACTIVE = The high-level unit activation state, i.e. generalization of SUB.

SUB = The low-level unit activation state, values depend on unit type.

37 loaded units listed. Pass --all to see loaded but inactive units, too.

To show all installed unit files use 'systemctl list-unit-files'.

禁用操刀在控制器簇

[root@pod1-controller-0 ~]# sudo pcs property set stonith-enabled=false

[root@pod1-controller-0 ~]# pcs property show

Cluster Properties:

cluster-infrastructure: corosync

cluster-name: tripleo_cluster

dc-version: 1.1.15-11.el7_3.4-e174ec8

have-watchdog: false

last-lrm-refresh: 1510809585

maintenance-mode: false

redis_REPL_INFO: pod1-controller-0

stonith-enabled: false

Node Attributes:

pod1-controller-0: rmq-node-attr-last-known-rabbitmq=rabbit@pod1-controller-0

pod1-controller-1: rmq-node-attr-last-known-rabbitmq=rabbit@pod1-controller-1

pod1-controller-2: rmq-node-attr-last-known-rabbitmq=rabbit@pod1-controller-2

安装新的控制器节点

- 步骤为了安装一个新的UCS C240 M4服务器和初始建立步骤可以参考从:

- 服务器的洛金有使用的CIMC IP

- 如果固件不是根据以前,使用的推荐的版本请执行BIOS升级。测量得BIOS升级的步骤这里:

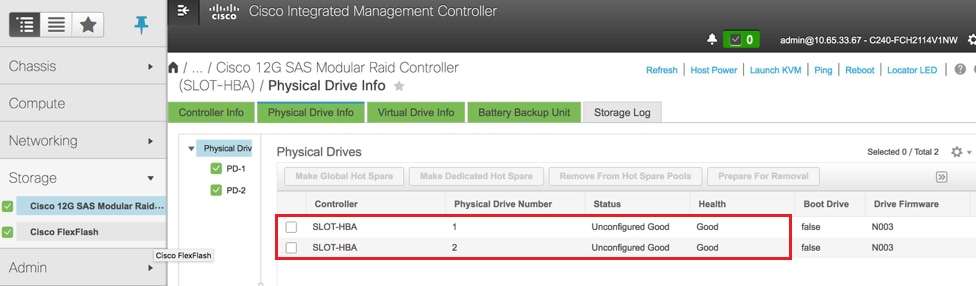

- 验证物理驱动的状况。这一定是“没有配置好” :

存贮> Cisco 12G SAS模块化袭击控制器(SLOT-HBA) >物理驱动信息

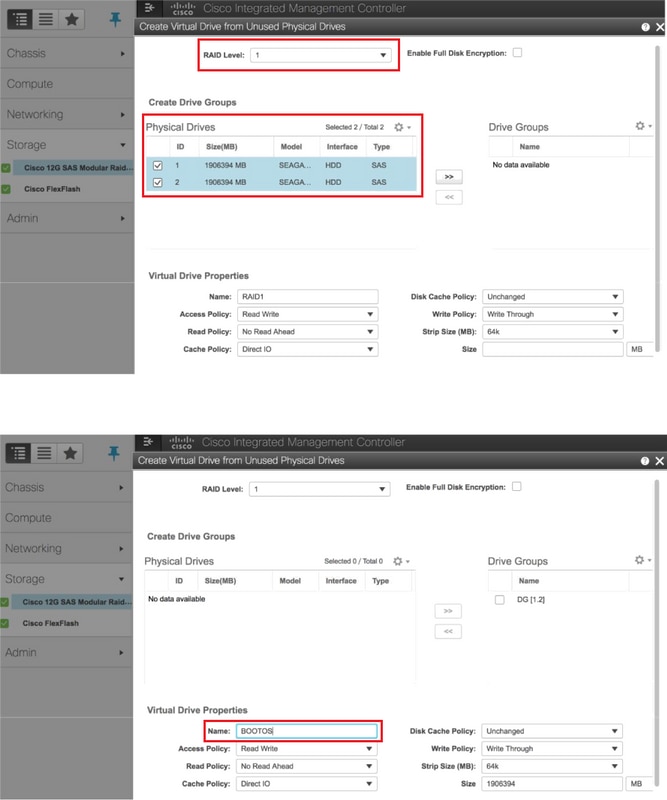

- 用RAID第1级创建从物理驱动的一个虚拟驱动器:

存贮> Cisco 12G SAS模块化袭击控制器(SLOT-HBA) >控制器信息>创建从未使用的物理驱动的虚拟驱动器

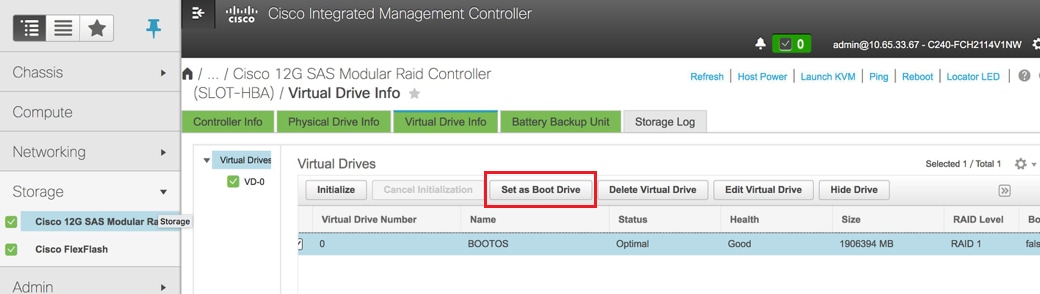

- 选择VD并且配置集作为引导驱动器:

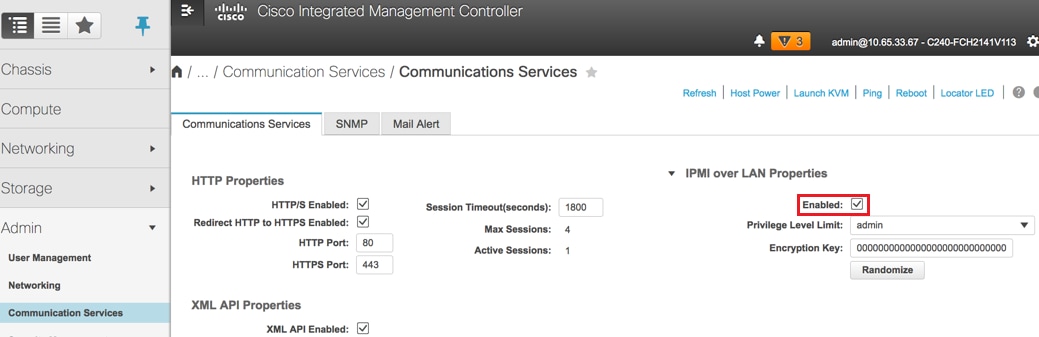

- 在LAN的Enable (event) IPMI :

Admin >通信服务>通信服务

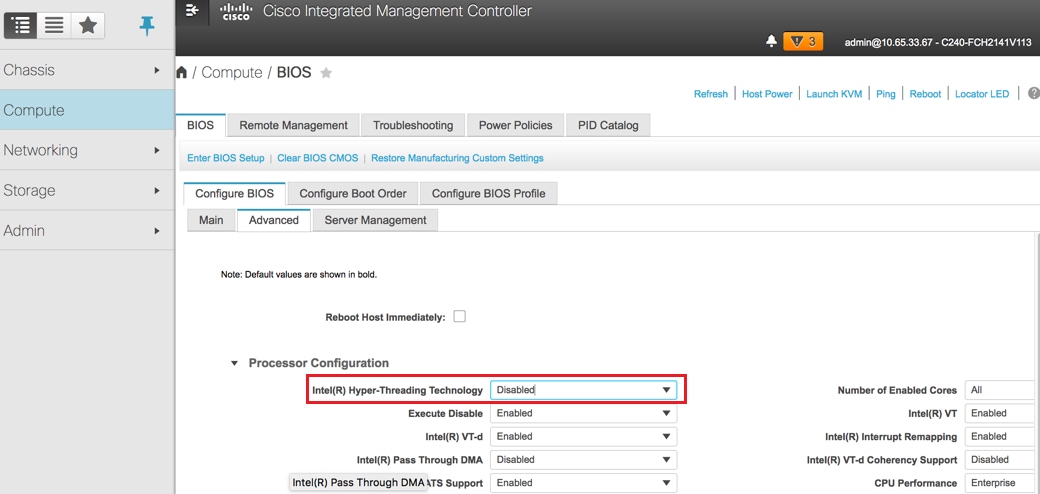

- hyperthreading的功能失效:

估计> BIOS >配置BIOS >Advanced >处理器配置

Note:此处显示的图像和在此部分提及的这里配置步骤是关于固件版本3.0(3e),并且也许有轻微的变化,如果研究其他版本。

控制器节点更换乌云密布

此部分包括要求为了用在乌云密布的新的替换有故障的控制器的步骤。对于此,使用提出堆栈将重新使用的deploy.sh脚本。 在配置时,在ControllerNodesPostDeployment阶段,更新失效由于一些限制木偶模块。人工干预,在您重新启动配置脚本前,需要。

准备删除发生故障的控制器节点

- 识别发生故障的控制器的索引。索引是在控制器名字的数字后缀在OpenStack服务器列表输出中。在本例中,索引是2 :

[stack@director ~]$ nova list | grep controller

| 5813a47e-af27-4fb9-8560-75decd3347b4 | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.152 |

| 457f023f-d077-45c9-bbea-dd32017d9708 | pod1-controller-1 | ACTIVE | - | Running | ctlplane=192.200.0.154 |

| d13bb207-473a-4e42-a1e7-05316935ed65 | pod1-controller-2 | ACTIVE | - | Running | ctlplane=192.200.0.151 |

- 创建将定义节点删除的一个Yaml文件~templates/remove-controller.yaml。请使用被找到的索引在上一步在资源列表的条目:

[stack@director ~]$ cat templates/remove-controller.yaml

parameters:

ControllerRemovalPolicies:

[{'resource_list': [‘2’]}]

parameter_defaults:

CorosyncSettleTries: 5

- 做使用为了安装乌云密布和插入线路为了包含以前被创建的去除controller.yaml文件的复制配置脚本:

[stack@director ~]$ cp deploy.sh deploy-removeController.sh

[stack@director ~]$ cat deploy-removeController.sh

time openstack overcloud deploy --templates \

-r ~/custom-templates/custom-roles.yaml \

-e /home/stack/templates/remove-controller.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml \

-e ~/custom-templates/network.yaml \

-e ~/custom-templates/ceph.yaml \

-e ~/custom-templates/compute.yaml \

-e ~/custom-templates/layout-removeController.yaml \

-e ~/custom-templates/rabbitmq.yaml \

--stack pod1 \

--debug \

--log-file overcloudDeploy_$(date +%m_%d_%y__%H_%M_%S).log \

--neutron-flat-networks phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1 \

--neutron-network-vlan-ranges datacentre:101:200 \

--neutron-disable-tunneling \

--verbose --timeout 180

- 识别用被提及的使用将替换的,控制器的ID命令这里并且移动它向维护模式:

[stack@director ~]$ nova list | grep controller

| 5813a47e-af27-4fb9-8560-75decd3347b4 | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.152 |

| 457f023f-d077-45c9-bbea-dd32017d9708 | pod1-controller-1 | ACTIVE | - | Running | ctlplane=192.200.0.154 |

| d13bb207-473a-4e42-a1e7-05316935ed65 | pod1-controller-2 | ACTIVE | - | Running | ctlplane=192.200.0.151 |

[stack@director ~]$ openstack baremetal node list | grep d13bb207-473a-4e42-a1e7-05316935ed65

| e7c32170-c7d1-4023-b356-e98564a9b85b | None | d13bb207-473a-4e42-a1e7-05316935ed65 | power off | active | False |

[stack@b10-ospd ~]$ openstack baremetal node maintenance set e7c32170-c7d1-4023-b356-e98564a9b85b

[stack@director~]$ openstack baremetal node list | grep True

| e7c32170-c7d1-4023-b356-e98564a9b85b | None | d13bb207-473a-4e42-a1e7-05316935ed65 | power off | active | True |

- 为了保证DB在替换程序时运行,从心脏起搏器控制请去除加莱拉角并且运行激活控制器的此on命令一:

[root@pod1-controller-0 ~]# sudo pcs resource unmanage galera

[root@pod1-controller-0 ~]# sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-0 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Thu Nov 16 16:51:18 2017 Last change: Thu Nov 16 16:51:12 2017 by root via crm_resource on pod1-controller-0

3 nodes and 22 resources configured

Online: [ pod1-controller-0 pod1-controller-1 ]

OFFLINE: [ pod1-controller-2 ]

Full list of resources:

ip-11.120.0.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-172.25.22.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.107 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

Master/Slave Set: galera-master [galera] (unmanaged)

galera (ocf::heartbeat:galera): Master pod1-controller-0 (unmanaged)

galera (ocf::heartbeat:galera): Master pod1-controller-1 (unmanaged)

Stopped: [ pod1-controller-2 ]

ip-11.120.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-11.119.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

<snip>

准备添加新的控制器节点

- 用仅新的控制器详细资料创建一个controllerRMA.json文件。保证以前未使用在新的控制器的索引编号。 一般,对下个高控制器编号的增量。

示例:最高预先是Controller-2,因此请创建Controller-3。

Note:是记住json格式。

[stack@director ~]$ cat controllerRMA.json

{

"nodes": [

{

"mac": [

<MAC_ADDRESS>

],

"capabilities": "node:controller-3,boot_option:local",

"cpu": "24",

"memory": "256000",

"disk": "3000",

"arch": "x86_64",

"pm_type": "pxe_ipmitool",

"pm_user": "admin",

"pm_password": "<PASSWORD>",

"pm_addr": "<CIMC_IP>"

}

]

}

- 导入与在上一步创建的使用的新节点json文件:

[stack@director ~]$ openstack baremetal import --json controllerRMA.json

Started Mistral Workflow. Execution ID: 67989c8b-1225-48fe-ba52-3a45f366e7a0

Successfully registered node UUID 048ccb59-89df-4f40-82f5-3d90d37ac7dd

Started Mistral Workflow. Execution ID: c6711b5f-fa97-4c86-8de5-b6bc7013b398

Successfully set all nodes to available.

[stack@director ~]$ openstack baremetal node list | grep available

| 048ccb59-89df-4f40-82f5-3d90d37ac7dd | None | None | power off | available | False

- 设置节点管理状态:

[stack@director ~]$ openstack baremetal node manage 048ccb59-89df-4f40-82f5-3d90d37ac7dd

[stack@director ~]$ openstack baremetal node list | grep off

| 048ccb59-89df-4f40-82f5-3d90d37ac7dd | None | None | power off | manageable | False |

- 运行内省:

[stack@director ~]$ openstack overcloud node introspect 048ccb59-89df-4f40-82f5-3d90d37ac7dd --provide

Started Mistral Workflow. Execution ID: f73fb275-c90e-45cc-952b-bfc25b9b5727

Waiting for introspection to finish...

Successfully introspected all nodes.

Introspection completed.

Started Mistral Workflow. Execution ID: a892b456-eb15-4c06-b37e-5bc3f6c37c65

Successfully set all nodes to available

[stack@director ~]$ openstack baremetal node list | grep available

| 048ccb59-89df-4f40-82f5-3d90d37ac7dd | None | None | power off | available | False |

- 标记可用的节点用新的控制器属性。保证使用如用于controllerRMA.json文件被选定的新的控制器,控制器ID :

[stack@director ~]$ openstack baremetal node set --property capabilities='node:controller-3,profile:control,boot_option:local' 048ccb59-89df-4f40-82f5-3d90d37ac7dd

- 在配置脚本,有称为尤其,指定的layout.yaml的自定义模板什么IP地址分配到控制器为多种接口。在一个新的堆栈,有为Controller-0、Controller-1和Controller-2定义的3个地址。当您添加一个新的控制器时,请保证您为每个子网依顺序添加一个下个IP地址:

ControllerIPs:

internal_api:

- 11.120.0.10

- 11.120.0.11

- 11.120.0.12

- 11.120.0.13

tenant:

- 11.117.0.10

- 11.117.0.11

- 11.117.0.12

- 11.117.0.13

storage:

- 11.118.0.10

- 11.118.0.11

- 11.118.0.12

- 11.118.0.13

storage_mgmt:

- 11.119.0.10

- 11.119.0.11

- 11.119.0.12

- 11.119.0.13

- 现在请运行以前被创建的deploy-removecontroller.sh,为了删除老节点和添加新节点。

Note:此步骤预计失效ControllerNodesDeployment_Step1。那时,需要人工干预。

[stack@b10-ospd ~]$ ./deploy-addController.sh

START with options: [u'overcloud', u'deploy', u'--templates', u'-r', u'/home/stack/custom-templates/custom-roles.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml', u'-e', u'/home/stack/custom-templates/network.yaml', u'-e', u'/home/stack/custom-templates/ceph.yaml', u'-e', u'/home/stack/custom-templates/compute.yaml', u'-e', u'/home/stack/custom-templates/layout-removeController.yaml', u'-e', u'/home/stack/custom-templates/rabbitmq.yaml', u'--stack', u'newtonoc', u'--debug', u'--log-file', u'overcloudDeploy_11_15_17__07_46_35.log', u'--neutron-flat-networks', u'phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1', u'--neutron-network-vlan-ranges', u'datacentre:101:200', u'--neutron-disable-tunneling', u'--verbose', u'--timeout', u'180']

:

DeploymentError: Heat Stack update failed

END return value: 1

real 42m1.525s

user 0m3.043s

sys 0m0.614s

配置的进展/状况可以用这些命令监控:

[stack@director~]$ openstack stack list --nested | grep -iv complete

+--------------------------------------+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------+----------------------+----------------------+--------------------------------------+

| ID | Stack Name | Stack Status | Creation Time | Updated Time | Parent |

+--------------------------------------+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------+----------------------+----------------------+--------------------------------------+

| c1e338f2-877e-4817-93b4-9a3f0c0b3d37 | pod1-AllNodesDeploySteps-5psegydpwxij-ComputeDeployment_Step1-swnuzjixac43 | UPDATE_FAILED | 2017-10-08T14:06:07Z | 2017-11-16T18:09:43Z | e90f00ef-2499-4ec3-90b4-d7def6e97c47 |

| 1db4fef4-45d3-4125-bd96-2cc3297a69ff | pod1-AllNodesDeploySteps-5psegydpwxij-ControllerDeployment_Step1-hmn3hpruubcn | UPDATE_FAILED | 2017-10-08T14:03:05Z | 2017-11-16T18:12:12Z | e90f00ef-2499-4ec3-90b4-d7def6e97c47 |

| e90f00ef-2499-4ec3-90b4-d7def6e97c47 | pod1-AllNodesDeploySteps-5psegydpwxij | UPDATE_FAILED | 2017-10-08T13:59:25Z | 2017-11-16T18:09:25Z | 6c4b604a-55a4-4a19-9141-28c844816c0d |

| 6c4b604a-55a4-4a19-9141-28c844816c0d | pod1 | UPDATE_FAILED | 2017-10-08T12:37:11Z | 2017-11-16T17:35:35Z | None |

+--------------------------------------+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------+----------------------+----------------------+--------------------------------------+

人工干预

- 在OSP-D服务器上,请运行list命令OpenStack的服务器为了列出可用的控制器。新加的控制器应该出现于列表:

[stack@director ~]$ openstack server list | grep controller

| 3e6c3db8-ba24-48d9-b0e8-1e8a2eb8b5ff | pod1-controller-3 | ACTIVE | ctlplane=192.200.0.103 | overcloud-full |

| 457f023f-d077-45c9-bbea-dd32017d9708 | pod1-controller-1 | ACTIVE | ctlplane=192.200.0.154 | overcloud-full |

| 5813a47e-af27-4fb9-8560-75decd3347b4 | pod1-controller-0 | ACTIVE | ctlplane=192.200.0.152 | overcloud-full |

- 连接到其中一个激活控制器(不是新加的控制器)并且查看文件/etc/corosync/corosycn.conf。查找分配nodeid到每个控制器的nodelist。查找发生故障的节点的条目并且注释其nodeid :

[root@pod1-controller-0 ~]# cat /etc/corosync/corosync.conf

totem {

version: 2

secauth: off

cluster_name: tripleo_cluster

transport: udpu

token: 10000

}

nodelist {

node {

ring0_addr: pod1-controller-0

nodeid: 5

}

node {

ring0_addr: pod1-controller-1

nodeid: 7

}

node {

ring0_addr: pod1-controller-2

nodeid: 8

}

}

- 登陆对其中每一个激活控制器。删除发生故障的节点并且重新启动服务。在这种情况下,请去除pod1-controller-2。请勿进行对新加的控制器的此动作:

[root@pod1-controller-0 ~]# sudo pcs cluster localnode remove pod1-controller-2

pod1-controller-2: successfully removed!

[root@pod1-controller-0 ~]# sudo pcs cluster reload corosync

Corosync reloaded

[root@pod1-controller-1 ~]# sudo pcs cluster localnode remove pod1-controller-2

pod1-controller-2: successfully removed!

[root@pod1-controller-1 ~]# sudo pcs cluster reload corosync

Corosync reloaded

- 从其中一个激活控制器运行此命令为了从簇删除发生故障的节点:

[root@pod1-controller-0 ~]# sudo crm_node -R pod1-controller-2 --force

- 从其中一个激活控制器运行此命令为了从rabbitmq簇删除发生故障的节点:

[root@pod1-controller-0 ~]# sudo rabbitmqctl forget_cluster_node rabbit@pod1-controller-2

Removing node 'rabbit@newtonoc-controller-2' from cluster ...

- 从MongoDB删除发生故障的节点。为了执行此,您需要查找活动蒙戈币节点。请使用netstat查找主机的IP地址:

[root@pod1-controller-0 ~]# sudo netstat -tulnp | grep 27017

tcp 0 0 11.120.0.10:27017 0.0.0.0:* LISTEN 219577/mongod

- 登陆对节点并且检查为了发现它是否是与使用的主设备IP地址和端口号从前面的命令:

[heat-admin@pod1-controller-0 ~]$ echo "db.isMaster()" | mongo --host 11.120.0.10:27017

MongoDB shell version: 2.6.11

connecting to: 11.120.0.10:27017/test

{

"setName" : "tripleo",

"setVersion" : 9,

"ismaster" : true,

"secondary" : false,

"hosts" : [

"11.120.0.10:27017",

"11.120.0.12:27017",

"11.120.0.11:27017"

],

"primary" : "11.120.0.10:27017",

"me" : "11.120.0.10:27017",

"electionId" : ObjectId("5a0d2661218cb0238b582fb1"),

"maxBsonObjectSize" : 16777216,

"maxMessageSizeBytes" : 48000000,

"maxWriteBatchSize" : 1000,

"localTime" : ISODate("2017-11-16T18:36:34.473Z"),

"maxWireVersion" : 2,

"minWireVersion" : 0,

"ok" : 1

}

如果节点不是主设备,请登陆到另一个激活控制器并且执行同一个步骤。

- 从主设备,请列出与使用的可用的节点rs.status ()命令。查找老/无答复的节点并且识别蒙戈币节点名。

[root@pod1-controller-0 ~]# mongo --host 11.120.0.10

MongoDB shell version: 2.6.11

connecting to: 11.120.0.10:27017/test

<snip>

tripleo:PRIMARY> rs.status()

{

"set" : "tripleo",

"date" : ISODate("2017-11-14T13:27:14Z"),

"myState" : 1,

"members" : [

{

"_id" : 0,

"name" : "11.120.0.10:27017",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 418347,

"optime" : Timestamp(1510666033, 1),

"optimeDate" : ISODate("2017-11-14T13:27:13Z"),

"electionTime" : Timestamp(1510247693, 1),

"electionDate" : ISODate("2017-11-09T17:14:53Z"),

"self" : true

},

{

"_id" : 2,

"name" : "11.120.0.12:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 418347,

"optime" : Timestamp(1510666033, 1),

"optimeDate" : ISODate("2017-11-14T13:27:13Z"),

"lastHeartbeat" : ISODate("2017-11-14T13:27:13Z"),

"lastHeartbeatRecv" : ISODate("2017-11-14T13:27:13Z"),

"pingMs" : 0,

"syncingTo" : "11.120.0.10:27017"

},

{

"_id" : 3,

"name" : "11.120.0.11:27017

"health" : 0,

"state" : 8,

"stateStr" : "(not reachable/healthy)",

"uptime" : 0,

"optime" : Timestamp(1510610580, 1),

"optimeDate" : ISODate("2017-11-13T22:03:00Z"),

"lastHeartbeat" : ISODate("2017-11-14T13:27:10Z"),

"lastHeartbeatRecv" : ISODate("2017-11-13T22:03:01Z"),

"pingMs" : 0,

"syncingTo" : "11.120.0.10:27017"

}

],

"ok" : 1

}

- 从主设备,请删除与使用的发生故障的节点rs.remove命令。一些错误将被看到,当您执行此命令,但是更加检查状态发现删除了节点:

[root@pod1-controller-0 ~]$ mongo --host 11.120.0.10

<snip>

tripleo:PRIMARY> rs.remove('11.120.0.12:27017')

2017-11-16T18:41:04.999+0000 DBClientCursor::init call() failed

2017-11-16T18:41:05.000+0000 Error: error doing query: failed at src/mongo/shell/query.js:81

2017-11-16T18:41:05.001+0000 trying reconnect to 11.120.0.10:27017 (11.120.0.10) failed

2017-11-16T18:41:05.003+0000 reconnect 11.120.0.10:27017 (11.120.0.10) ok

tripleo:PRIMARY> rs.status()

{

"set" : "tripleo",

"date" : ISODate("2017-11-16T18:44:11Z"),

"myState" : 1,

"members" : [

{

"_id" : 3,

"name" : "11.120.0.11:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 187,

"optime" : Timestamp(1510857848, 3),

"optimeDate" : ISODate("2017-11-16T18:44:08Z"),

"lastHeartbeat" : ISODate("2017-11-16T18:44:11Z"),

"lastHeartbeatRecv" : ISODate("2017-11-16T18:44:09Z"),

"pingMs" : 0,

"syncingTo" : "11.120.0.10:27017"

},

{

"_id" : 4,

"name" : "11.120.0.10:27017",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 89820,

"optime" : Timestamp(1510857848, 3),

"optimeDate" : ISODate("2017-11-16T18:44:08Z"),

"electionTime" : Timestamp(1510811232, 1),

"electionDate" : ISODate("2017-11-16T05:47:12Z"),

"self" : true

}

],

"ok" : 1

}

tripleo:PRIMARY> exit

bye

- 运行此命令为了更新激活控制器节点列表。包括新的控制器节点在此列表:

[root@pod1-controller-0 ~]# sudo pcs resource update galera wsrep_cluster_address=gcomm://pod1-controller-0,pod1-controller-1,pod1-controller-2

- 从已经存在到新的控制器的控制器复制这些文件:

/etc/sysconfig/clustercheck

/root/.my.cnf

On existing controller:

[root@pod1-controller-0 ~]# scp /etc/sysconfig/clustercheck stack@192.200.0.1:/tmp/.

[root@pod1-controller-0 ~]# scp /root/.my.cnf stack@192.200.0.1:/tmp/my.cnf

On new controller:

[root@pod1-controller-3 ~]# cd /etc/sysconfig

[root@pod1-controller-3 sysconfig]# scp stack@192.200.0.1:/tmp/clustercheck .

[root@pod1-controller-3 sysconfig]# cd /root

[root@pod1-controller-3 ~]# scp stack@192.200.0.1:/tmp/my.cnf .my.cnf

- 从已经存在的其中一个控制器运行add命令的簇结:

[root@pod1-controller-1 ~]# sudo pcs cluster node add pod1-controller-3

Disabling SBD service...

pod1-controller-3: sbd disabled

pod1-controller-0: Corosync updated

pod1-controller-1: Corosync updated

Setting up corosync...

pod1-controller-3: Succeeded

Synchronizing pcsd certificates on nodes pod1-controller-3...

pod1-controller-3: Success

Restarting pcsd on the nodes in order to reload the certificates...

pod1-controller-3: Success

- 登陆到每个控制器并且查看文件/etc/corosync/corosync.conf。 保证新的控制器是列出的,并且节点ID分配到该控制器是在以前未使用的顺序的下个编号。保证此更改在所有3个控制器完成:

[root@pod1-controller-1 ~]# cat /etc/corosync/corosync.conf

totem {

version: 2

secauth: off

cluster_name: tripleo_cluster

transport: udpu

token: 10000

}

nodelist {

node {

ring0_addr: pod1-controller-0

nodeid: 5

}

node {

ring0_addr: pod1-controller-1

nodeid: 7

}

node {

ring0_addr: pod1-controller-3

nodeid: 6

}

}

quorum {

provider: corosync_votequorum

}

logging {

to_logfile: yes

logfile: /var/log/cluster/corosync.log

to_syslog: yes

}

例如/etc/corosync/corosync.conf在修改以后:

totem {

version: 2

secauth: off

cluster_name: tripleo_cluster

transport: udpu

token: 10000

}

nodelist {

node {

ring0_addr: pod1-controller-0

nodeid: 5

}

node {

ring0_addr: pod1-controller-1

nodeid: 7

}

node {

ring0_addr: pod1-controller-3

nodeid: 9

}

}

quorum {

provider: corosync_votequorum

}

logging {

to_logfile: yes

logfile: /var/log/cluster/corosync.log

to_syslog: yes

}

- 在激活控制器的重新启动corosync。请勿开始在新的控制器的corosync :

[root@pod1-controller-0 ~]# sudo pcs cluster reload corosync

[root@pod1-controller-1 ~]# sudo pcs cluster reload corosync

- 启动新的控制器节点从其中一个代理控制器:

[root@pod1-controller-1 ~]# sudo pcs cluster start pod1-controller-3

- 重新启动从其中一个的加莱拉角代理控制器:

[root@pod1-controller-1 ~]# sudo pcs cluster start pod1-controller-3

pod1-controller-0: Starting Cluster...

[root@pod1-controller-1 ~]# sudo pcs resource cleanup galera

Cleaning up galera:0 on pod1-controller-0, removing fail-count-galera

Cleaning up galera:0 on pod1-controller-1, removing fail-count-galera

Cleaning up galera:0 on pod1-controller-3, removing fail-count-galera

* The configuration prevents the cluster from stopping or starting 'galera-master' (unmanaged)

Waiting for 3 replies from the CRMd... OK

[root@pod1-controller-1 ~]#

[root@pod1-controller-1 ~]# sudo pcs resource manage galera

- 簇在维护模式下。禁用维护模式为了使服务开始:

[root@pod1-controller-2 ~]# sudo pcs property set maintenance-mode=false --wait

- 请检查个人计算机状态加莱拉角,直到全部3个控制器列出作为主设备在加莱拉角:

Note:对于大设置,它能采取一些时间同步Dbs。

[root@pod1-controller-1 ~]# sudo pcs status | grep galera -A1

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 pod1-controller-3 ]

- 换成簇维护模式:

[root@pod1-controller-1~]# sudo pcs property set maintenance-mode=true --wait

[root@pod1-controller-1 ~]# pcs cluster status

Cluster Status:

Stack: corosync

Current DC: pod1-controller-0 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Thu Nov 16 19:17:01 2017 Last change: Thu Nov 16 19:16:48 2017 by root via cibadmin on pod1-controller-1

*** Resource management is DISABLED ***

The cluster will not attempt to start, stop or recover services

PCSD Status:

pod1-controller-3: Online

pod1-controller-0: Online

pod1-controller-1: Online

- 重新运行您以前运行的配置脚本。这次它应该成功。

[stack@director ~]$ ./deploy-addController.sh

START with options: [u'overcloud', u'deploy', u'--templates', u'-r', u'/home/stack/custom-templates/custom-roles.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml', u'-e', u'/home/stack/custom-templates/network.yaml', u'-e', u'/home/stack/custom-templates/ceph.yaml', u'-e', u'/home/stack/custom-templates/compute.yaml', u'-e', u'/home/stack/custom-templates/layout-removeController.yaml', u'--stack', u'newtonoc', u'--debug', u'--log-file', u'overcloudDeploy_11_14_17__13_53_12.log', u'--neutron-flat-networks', u'phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1', u'--neutron-network-vlan-ranges', u'datacentre:101:200', u'--neutron-disable-tunneling', u'--verbose', u'--timeout', u'180']

options: Namespace(access_key='', access_secret='***', access_token='***', access_token_endpoint='', access_token_type='', aodh_endpoint='', auth_type='', auth_url='https://192.200.0.2:13000/v2.0', authorization_code='', cacert=None, cert='', client_id='', client_secret='***', cloud='', consumer_key='', consumer_secret='***', debug=True, default_domain='default', default_domain_id='', default_domain_name='', deferred_help=False, discovery_endpoint='', domain_id='', domain_name='', endpoint='', identity_provider='', identity_provider_url='', insecure=None, inspector_api_version='1', inspector_url=None, interface='', key='', log_file=u'overcloudDeploy_11_14_17__13_53_12.log', murano_url='', old_profile=None, openid_scope='', os_alarming_api_version='2', os_application_catalog_api_version='1', os_baremetal_api_version='1.15', os_beta_command=False, os_compute_api_version='', os_container_infra_api_version='1', os_data_processing_api_version='1.1', os_data_processing_url='', os_dns_api_version='2', os_identity_api_version='', os_image_api_version='1', os_key_manager_api_version='1', os_metrics_api_version='1', os_network_api_version='', os_object_api_version='', os_orchestration_api_version='1', os_project_id=None, os_project_name=None, os_queues_api_version='2', os_tripleoclient_api_version='1', os_volume_api_version='', os_workflow_api_version='2', passcode='', password='***', profile=None, project_domain_id='', project_domain_name='', project_id='', project_name='admin', protocol='', redirect_uri='', region_name='', roles='', timing=False, token='***', trust_id='', url='', user='', user_domain_id='', user_domain_name='', user_id='', username='admin', verbose_level=3, verify=None)

Auth plugin password selected

Starting new HTTPS connection (1): 192.200.0.2

"POST /v2/action_executions HTTP/1.1" 201 1696

HTTP POST https://192.200.0.2:13989/v2/action_executions 201

Overcloud Endpoint: http://172.25.22.109:5000/v2.0

Overcloud Deployed

clean_up DeployOvercloud:

END return value: 0

real 54m17.197s

user 0m3.421s

sys 0m0.670s

验证乌云密布在控制器的服务

- 保证所有可管理的服务在控制器节点适当地运作。

[heat-admin@pod1-controller-2 ~]$ sudo pcs status

确定L3代理路由器

检查路由器为了保证L3代理程序适当地主机。当您执行此检查时,请保证来源overcloudrc文件。

- 查找路由器名字:

[stack@director~]$ source corerc

[stack@director ~]$ neutron router-list

+--------------------------------------+------+-------------------------------------------------------------------+-------------+------+

| id | name | external_gateway_info | distributed | ha |

+--------------------------------------+------+-------------------------------------------------------------------+-------------+------+

| d814dc9d-2b2f-496f-8c25-24911e464d02 | main | {"network_id": "18c4250c-e402-428c-87d6-a955157d50b5", | False | True |

在本例中,路由器的名字是主要的。

- 列出所有L3代理程序为了查找发生故障的节点和新节点UUID :

[stack@director ~]$ neutron agent-list | grep "neutron-l3-agent"

| 70242f5c-43ab-4355-abd6-9277f92e4ce6 | L3 agent | pod1-controller-0.localdomain | nova | :-) | True | neutron-l3-agent |

| 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40 | L3 agent | pod1-controller-2.localdomain | nova | xxx | True | neutron-l3-agent |

| a410a491-e271-4938-8a43-458084ffe15d | L3 agent | pod1-controller-3.localdomain | nova | :-) | True | neutron-l3-agent |

| cb4bc1ad-ac50-42e9-ae69-8a256d375136 | L3 agent | pod1-controller-1.localdomain | nova | :-) | True | neutron-l3-agent |

- 在本例中, L3对应于pod1-controller-2.localdomain的代理程序应该从路由器和对应于pod1-controller-3.localdomain的那个删除应该添加到路由器:

[stack@director ~]$ neutron l3-agent-router-remove 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40 main

Removed router main from L3 agent

[stack@director ~]$ neutron l3-agent-router-add a410a491-e271-4938-8a43-458084ffe15d main

Added router main to L3 agent

- 检查L3-agents更新的列表:

[stack@director ~]$ neutron l3-agent-list-hosting-router main

+--------------------------------------+-----------------------------------+----------------+-------+----------+

| id | host | admin_state_up | alive | ha_state |

+--------------------------------------+-----------------------------------+----------------+-------+----------+

| 70242f5c-43ab-4355-abd6-9277f92e4ce6 | pod1-controller-0.localdomain | True | :-) | standby |

| a410a491-e271-4938-8a43-458084ffe15d | pod1-controller-3.localdomain | True | :-) | standby |

| cb4bc1ad-ac50-42e9-ae69-8a256d375136 | pod1-controller-1.localdomain | True | :-) | active |

+--------------------------------------+-----------------------------------+----------------+-------+----------+

- 列出从被删除的控制器节点运作并且取消他们的所有服务:

[stack@director ~]$ neutron agent-list | grep controller-2

| 877314c2-3c8d-4666-a6ec-69513e83042d | Metadata agent | pod1-controller-2.localdomain | | xxx | True | neutron-metadata-agent |

| 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40 | L3 agent | pod1-controller-2.localdomain | nova | xxx | True | neutron-l3-agent |

| 911c43a5-df3a-49ec-99ed-1d722821ec20 | DHCP agent | pod1-controller-2.localdomain | nova | xxx | True | neutron-dhcp-agent |

| a58a3dd3-4cdc-48d4-ab34-612a6cd72768 | Open vSwitch agent | pod1-controller-2.localdomain | | xxx | True | neutron-openvswitch-agent |

[stack@director ~]$ neutron agent-delete 877314c2-3c8d-4666-a6ec-69513e83042d

Deleted agent(s): 877314c2-3c8d-4666-a6ec-69513e83042d

[stack@director ~]$ neutron agent-delete 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40

Deleted agent(s): 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40

[stack@director ~]$ neutron agent-delete 911c43a5-df3a-49ec-99ed-1d722821ec20

Deleted agent(s): 911c43a5-df3a-49ec-99ed-1d722821ec20

[stack@director ~]$ neutron agent-delete a58a3dd3-4cdc-48d4-ab34-612a6cd72768

Deleted agent(s): a58a3dd3-4cdc-48d4-ab34-612a6cd72768

[stack@director ~]$ neutron agent-list | grep controller-2

[stack@director ~]$

确定估计服务

- 检查新星从去除的节点留下的Service列表项目并且删除他们:

[stack@director ~]$ nova service-list | grep controller-2

| 615 | nova-consoleauth | pod1-controller-2.localdomain | internal | enabled | down | 2017-11-16T16:08:14.000000 | - |

| 618 | nova-scheduler | pod1-controller-2.localdomain | internal | enabled | down | 2017-11-16T16:08:13.000000 | - |

| 621 | nova-conductor | pod1-controller-2.localdomain | internal | enabled | down | 2017-11-16T16:08:14.000000 | -

[stack@director ~]$ nova service-delete 615

[stack@director ~]$ nova service-delete 618

[stack@director ~]$ nova service-delete 621

stack@director ~]$ nova service-list | grep controller-2

- 保证consoleauth进程在所有控制器运行或重新启动它与使用此命令:个人计算机资源重新启动openstack新星consoleauth :

[stack@director ~]$ nova service-list | grep consoleauth

| 601 | nova-consoleauth | pod1-controller-0.localdomain | internal | enabled | up | 2017-11-16T21:00:10.000000 | - |

| 608 | nova-consoleauth | pod1-controller-1.localdomain | internal | enabled | up | 2017-11-16T21:00:13.000000 | - |

| 622 | nova-consoleauth | pod1-controller-3.localdomain | internal | enabled | up | 2017-11-16T21:00:13.000000 | -

操刀在控制器节点的重新启动

- 检查所有控制器IP路由对undercloud 192.0.0.0/8 :

[root@pod1-controller-3 ~]# ip route

default via 172.25.22.1 dev vlan101

11.117.0.0/24 dev vlan17 proto kernel scope link src 11.117.0.12

11.118.0.0/24 dev vlan18 proto kernel scope link src 11.118.0.12

11.119.0.0/24 dev vlan19 proto kernel scope link src 11.119.0.12

11.120.0.0/24 dev vlan20 proto kernel scope link src 11.120.0.12

169.254.169.254 via 192.200.0.1 dev eno1

172.25.22.0/24 dev vlan101 proto kernel scope link src 172.25.22.102

192.0.0.0/8 dev eno1 proto kernel scope link src 192.200.0.103

- 检查当前stonith配置。取消在老控制器节点的所有参考:

[root@pod1-controller-3 ~]# sudo pcs stonith show --full

Resource: my-ipmilan-for-controller-6 (class=stonith type=fence_ipmilan)

Attributes: pcmk_host_list=pod1-controller-1 ipaddr=192.100.0.1 login=admin passwd=Csco@123Starent lanplus=1

Operations: monitor interval=60s (my-ipmilan-for-controller-6-monitor-interval-60s)

Resource: my-ipmilan-for-controller-4 (class=stonith type=fence_ipmilan)

Attributes: pcmk_host_list=pod1-controller-0 ipaddr=192.100.0.14 login=admin passwd=Csco@123Starent lanplus=1

Operations: monitor interval=60s (my-ipmilan-for-controller-4-monitor-interval-60s)

Resource: my-ipmilan-for-controller-7 (class=stonith type=fence_ipmilan)

Attributes: pcmk_host_list=pod1-controller-2 ipaddr=192.100.0.15 login=admin passwd=Csco@123Starent lanplus=1

Operations: monitor interval=60s (my-ipmilan-for-controller-7-monitor-interval-60s)

[root@pod1-controller-3 ~]# pcs stonith delete my-ipmilan-for-controller-7

Attempting to stop: my-ipmilan-for-controller-7...Stopped

- 添加新的控制器的stonith配置:

[root@pod1-controller-3 ~]sudo pcs stonith create my-ipmilan-for-controller-8 fence_ipmilan pcmk_host_list=pod1-controller-3 ipaddr=<CIMC_IP> login=admin passwd=<PASSWORD> lanplus=1 op monitor interval=60s

- 重新启动操刀从所有控制器并且验证状态:

[root@pod1-controller-1 ~]# sudo pcs property set stonith-enabled=true

[root@pod1-controller-3 ~]# pcs status

<snip>

my-ipmilan-for-controller-1 (stonith:fence_ipmilan): Started pod1-controller-3

my-ipmilan-for-controller-0 (stonith:fence_ipmilan): Started pod1-controller-3

my-ipmilan-for-controller-3 (stonith:fence_ipmilan): Started pod1-controller-3

张贴服务器替换设置

请参见下面链路应用以前是存在老服务器的设置:

由思科工程师提供

- Padmaraj RamanoudjamCisco Advanced Services

- Partheeban RajagopalCisco Advanced Services

反馈

反馈