OSD-Compute UCS 240M4 Replacement - vEPC


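Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

About This Translation

Cisco has translated this document using a combination of machine and human technology to provide support content to users around the world in their own language. Please note that even the best machine translation is not as accurate as one done by a professional translator. Cisco Systems, Inc. accepts no responsibility for the accuracy of these translations and recommends always referring to the original English document (link provided).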


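Introduction

This document describes the steps required to replace a faulty Object Storage Disk (OSD)-Compute server in an Ultra-M setup that hosts StarOS Virtual Network Functions (VNFs).

Background Information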

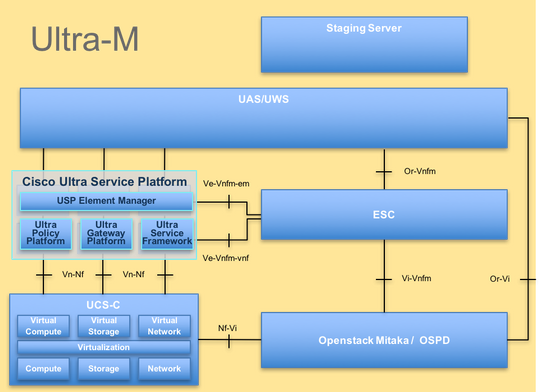

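Ultra-M is a pre-packaged and validated virtualized mobile packet core solution designed to simplify the deployment of VNFs. OpenStack is the Virtualized Infrastructure Manager (VIM) for Ultra-M and consists of these node types: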

- Compute

- OSD-Compute

- Controller

- OpenStack Platform - Director (OSPD)

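The high-level architecture of Ultra-M and the components involved are depicted in this image:

This document is intended for Cisco personnel who are familiar with the Cisco Ultra-M platform. It details the steps that must be carried out at the OpenStack and StarOS VNF levels when the OSD-Compute server is replaced.

Note: Ultra M 5.1.x release is used to define the procedures in this document.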

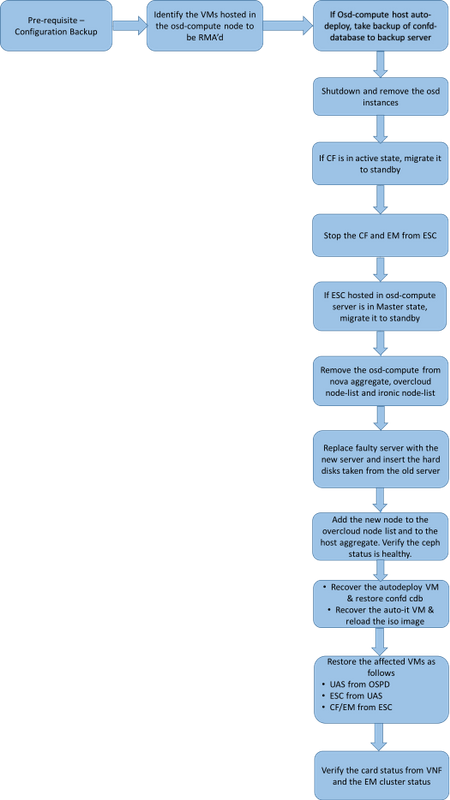

Workflow of the MoP

Abbreviations

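| Abbreviation | Meaning |
| --- | --- |
| VNF | Virtual Network Function |
| CF | Control Function |
| SF | Service Function |
| ESC | Elastic Service Controller |
| MOP | Method of Procedure |
| OSD | Object Storage Disks |
| HDD | Hard Disk Drive |
| SSD | Solid State Drive |
| VIM | Virtual Infrastructure Manager |
| VM | Virtual Machine |
| EM | Element Manager |
| UAS | Ultra Automation Services |
| UUID | Universally Unique Identifier |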

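Prerequisites

Back Up the OSPD

Before you replace an OSD-Compute node, check the current state of your Red Hat OpenStack Platform environment. Checking first helps you avoid complications once the compute replacement process is under way, and the replacement flow in this document is built around that check.

In case of recovery, Cisco recommends that you back up the OSPD database (DB) with these steps: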

[root@director ~]# mysqldump --opt --all-databases > /root/undercloud-all-databases.sql

[root@director ~]# tar --xattrs -czf undercloud-backup-`date +%F`.tar.gz /root/undercloud-all-databases.sql /etc/my.cnf.d/server.cnf /var/lib/glance/images /srv/node /home/stack

tar: Removing leading `/' from member names

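This process ensures that a node can be replaced without affecting the availability of any instances. It is also recommended that you back up the StarOS configuration, especially if the compute node to be replaced hosts the CF VM.

Identify the VMs Hosted in the OSD-Compute Node

Identify the VMs that are hosted on the compute server. There are two possibilities:

The OSD-Compute server contains the EM/UAS/Auto-Deploy/Auto-IT combination of VMs: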

[stack@director ~]$ nova list --field name,host | grep osd-compute-0

| c6144778-9afd-4946-8453-78c817368f18 | AUTO-DEPLOY-VNF2-uas-0 | pod1-osd-compute-0.localdomain |

| 2d051522-bce2-4809-8d63-0c0e17f251dc | AUTO-IT-VNF2-uas-0 | pod1-osd-compute-0.localdomain |

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-osd-compute-0.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-osd-compute-0.localdomain |

The compute server contains the CF/ESC/EM/UAS combination of VMs:

[stack@director ~]$ nova list --field name,host | grep osd-compute-1

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-compute-8.localdomain |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | pod1-compute-8.localdomain |

| 75528898-ef4b-4d68-b05d-882014708694 | VNF2-ESC-ESC-0 | pod1-compute-8.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-compute-8.localdomain |

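Note: In the output shown here, the first column corresponds to the UUID, the second column is the VM name, and the third column is the hostname where the VM is present. The parameters from this output are used in subsequent sections.

Verify that Ceph has enough available capacity to allow a single OSD server to be removed: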
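Because the host is always the third column, the VMs on a given server can be picked out of that table with a short filter. The sketch below assumes the `| UUID | name | host |` layout shown above; `vms_on_host` is a hypothetical helper, not a Cisco or OpenStack command. Unlike `grep osd-compute-0`, it compares only the host column, so a VM whose name merely contains the server name is not reported.

```shell
#!/bin/sh
# vms_on_host HOST (hypothetical helper)
# Reads `nova list --field name,host` output on stdin and prints
# "UUID NAME" for each VM whose host column equals HOST or HOST.<domain>.
vms_on_host() {
    awk -F'|' -v h="$1" '
        NF >= 4 {
            id = $2; name = $3; host = $4
            # strip the padding around each table cell
            gsub(/^[ \t]+|[ \t]+$/, "", id)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", host)
            if (host == h || index(host, h ".") == 1) print id, name
        }'
}
```

Usage: `nova list --field name,host | vms_on_host pod1-osd-compute-0`.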

[root@pod1-osd-compute-1 ~]# sudo ceph df

GLOBAL:

SIZE AVAIL RAW USED %RAW USED

13393G 11804G 1589G 11.87

POOLS:

NAME ID USED %USED MAX AVAIL OBJECTS

rbd 0 0 0 3876G 0

metrics 1 4157M 0.10 3876G 215385

images 2 6731M 0.17 3876G 897

backups 3 0 0 3876G 0

volumes 4 399G 9.34 3876G 102373

vms 5 122G 3.06 3876G 31863
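The procedure does not define a threshold for "available capacity". One rough way to reason about it, offered only as an assumption: with N equally weighted OSD hosts, removing one leaves about (N-1)/N of the raw SIZE, and RAW USED should stay comfortably below that, for example under Ceph's default nearfull ratio of 85%. With the figures above, 13393G * 2/3 leaves about 8929G, and 1589G is well under 85% of that. The hypothetical `can_remove_host` helper encodes this arithmetic. It ignores pool replica counts and CRUSH failure domains, so treat a pass as a sanity check, not a guarantee.

```shell
#!/bin/sh
# can_remove_host TOTAL_GB RAW_USED_GB OSD_HOSTS [MAX_PERCENT] (hypothetical helper)
# Succeeds (exit 0) if RAW_USED_GB stays below MAX_PERCENT (default 85) of the
# raw capacity left after one of OSD_HOSTS equally weighted hosts is removed.
can_remove_host() {
    awk -v total="$1" -v used="$2" -v hosts="$3" -v pct="${4:-85}" 'BEGIN {
        total += 0; used += 0; hosts += 0; pct += 0   # force numeric values
        if (hosts < 2) exit 1                         # nothing would be left
        remaining = total * (hosts - 1) / hosts
        exit !(used < remaining * pct / 100)
    }'
}
```

Usage, with the `ceph df` values above and three OSD-Compute hosts: `can_remove_host 13393 1589 3 && echo "enough raw capacity"`.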

Verify that the ceph osd tree status is up on the OSD-Compute servers:

[heat-admin@pod1-osd-compute-1 ~]$ sudo ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-1 13.07996 root default

-2 4.35999 host pod1-osd-compute-0

0 1.09000 osd.0 up 1.00000 1.00000

3 1.09000 osd.3 up 1.00000 1.00000

6 1.09000 osd.6 up 1.00000 1.00000

9 1.09000 osd.9 up 1.00000 1.00000

-3 4.35999 host pod1-osd-compute-2

1 1.09000 osd.1 up 1.00000 1.00000

4 1.09000 osd.4 up 1.00000 1.00000

7 1.09000 osd.7 up 1.00000 1.00000

10 1.09000 osd.10 up 1.00000 1.00000

-4 4.35999 host pod1-osd-compute-1

2 1.09000 osd.2 up 1.00000 1.00000

5 1.09000 osd.5 up 1.00000 1.00000

8 1.09000 osd.8 up 1.00000 1.00000

11 1.09000 osd.11 up 1.00000 1.00000
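The removal steps that follow must be repeated for every OSD on the server being replaced, and those IDs can be read from the tree output. This sketch assumes the Jewel-era column layout shown above (ID, WEIGHT, then the bucket type or the `osd.N` name). Newer Ceph releases add a CLASS column that shifts the fields. `osd_ids_for_host` is a hypothetical helper name.

```shell
#!/bin/sh
# osd_ids_for_host HOST (hypothetical helper)
# Reads `ceph osd tree` output on stdin and prints, one per line, the numeric
# IDs of the OSDs listed under "host HOST". Column positions follow the layout
# shown above: ID WEIGHT TYPE-or-osd.N NAME ...
osd_ids_for_host() {
    awk -v h="$1" '
        $3 == "root" { inhost = 0; next }           # new CRUSH root
        $3 == "host" { inhost = ($4 == h); next }   # entering a host bucket
        inhost && $3 ~ /^osd\./ { print $1 }'
}
```

For the tree above, `sudo ceph osd tree | osd_ids_for_host pod1-osd-compute-1` prints 2, 5, 8 and 11.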

Verify that the Ceph processes are active on the OSD-Compute server:

[root@pod1-osd-compute-1 ~]# systemctl list-units *ceph*

UNIT LOAD ACTIVE SUB DESCRIPTION

var-lib-ceph-osd-ceph\x2d11.mount loaded active mounted /var/lib/ceph/osd/ceph-11

var-lib-ceph-osd-ceph\x2d2.mount loaded active mounted /var/lib/ceph/osd/ceph-2

var-lib-ceph-osd-ceph\x2d5.mount loaded active mounted /var/lib/ceph/osd/ceph-5

var-lib-ceph-osd-ceph\x2d8.mount loaded active mounted /var/lib/ceph/osd/ceph-8

ceph-osd@11.service loaded active running Ceph object storage daemon

ceph-osd@2.service loaded active running Ceph object storage daemon

ceph-osd@5.service loaded active running Ceph object storage daemon

ceph-osd@8.service loaded active running Ceph object storage daemon

system-ceph\x2ddisk.slice loaded active active system-ceph\x2ddisk.slice

system-ceph\x2dosd.slice loaded active active system-ceph\x2dosd.slice

ceph-mon.target loaded active active ceph target allowing to start/stop all ceph-mon@.service instances at once

ceph-osd.target loaded active active ceph target allowing to start/stop all ceph-osd@.service instances at once

ceph-radosgw.target loaded active active ceph target allowing to start/stop all ceph-radosgw@.service instances at once

ceph.target loaded active active ceph target allowing to start/stop all ceph*@.service instances at once

Disable and stop each Ceph instance, remove each instance from the OSD map, and unmount its directory. Repeat these steps for each Ceph instance:

[root@pod1-osd-compute-1 ~]# systemctl disable ceph-osd@11

[root@pod1-osd-compute-1 ~]# systemctl stop ceph-osd@11

[root@pod1-osd-compute-1 ~]# ceph osd out 11

marked out osd.11.

[root@pod1-osd-compute-1 ~]# ceph osd crush remove osd.11

removed item id 11 name 'osd.11' from crush map

[root@pod1-osd-compute-1 ~]# ceph auth del osd.11

updated

[root@pod1-osd-compute-1 ~]# ceph osd rm 11

removed osd.11

[root@pod1-osd-compute-1 ~]# umount /var/lib/ceph/osd/ceph-11

[root@pod1-osd-compute-1 ~]# rm -rf /var/lib/ceph/osd/ceph-11

Alternatively, the clean.sh script can perform this task:

[heat-admin@pod1-osd-compute-0 ~]$ sudo ls /var/lib/ceph/osd

ceph-11 ceph-3 ceph-6 ceph-8

[heat-admin@pod1-osd-compute-0 ~]$ /bin/sh clean.sh

[heat-admin@pod1-osd-compute-0 ~]$ cat clean.sh

#!/bin/sh

set -x

CEPH=`sudo ls /var/lib/ceph/osd`

for c in $CEPH

do

# the OSD id is the number after "ceph-" in the directory name (ceph-11 -> 11)

i=`echo $c |cut -d'-' -f2`

# { ...; } runs in the current shell, so "exit 1" stops the whole script at the first failure; a ( ... ) subshell would only exit itself

sudo systemctl disable ceph-osd@$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo systemctl stop ceph-osd@$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph osd out $i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph osd crush remove osd.$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph auth del osd.$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph osd rm $i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo umount /var/lib/ceph/osd/$c || { echo "error rc:$?"; exit 1; }

sleep 2

sudo rm -rf /var/lib/ceph/osd/$c || { echo "error rc:$?"; exit 1; }

sleep 2

done

sudo ceph osd tree

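After all OSD processes have been migrated or deleted, the node can be removed from the overcloud.

Note: After Ceph is removed, the VNF HD RAID goes into the Degraded state, but the HD disk must still be accessible.

Graceful Power Off

Case 1. OSD-Compute Node Hosts CF/ESC/EM/UAS

Migrate the CF Card to Standby State

Log in to the StarOS VNF and identify the card that corresponds to the CF VM. Use the UUID of the CF VM identified in the section Identify the VMs Hosted in the OSD-Compute Node, and find the card that corresponds to that UUID: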

[local]VNF2# show card hardware

Tuesday May 08 16:49:42 UTC 2018

<snip>

Card 2:

Card Type : Control Function Virtual Card

CPU Packages : 8 [#0, #1, #2, #3, #4, #5, #6, #7]

CPU Nodes : 1

CPU Cores/Threads : 8

Memory : 16384M (qvpc-di-large)

UUID/Serial Number : F9C0763A-4A4F-4BBD-AF51-BC7545774BE2

<snip>

Check the status of the card:

[local]VNF2# show card table

Tuesday May 08 16:52:53 UTC 2018

Slot Card Type Oper State SPOF Attach

----------- -------------------------------------- ------------- ---- ------

1: CFC Control Function Virtual Card Standby -

2: CFC Control Function Virtual Card Active No

3: FC 4-Port Service Function Virtual Card Active No

4: FC 4-Port Service Function Virtual Card Active No

5: FC 4-Port Service Function Virtual Card Active No

6: FC 4-Port Service Function Virtual Card Active No

7: FC 4-Port Service Function Virtual Card Active No

8: FC 4-Port Service Function Virtual Card Active No

9: FC 4-Port Service Function Virtual Card Active No

10: FC 4-Port Service Function Virtual Card Standby -

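If the card is in the active state, move it to the standby state:

[local]VNF2# card migrate from 2 to 1

Shut Down the CF and EM VMs from ESC

Log in to the ESC node that corresponds to the VNF and check the status of the VMs: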

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_ALIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

<vm_id>507d67c2-1d00-4321-b9d1-da879af524f8</vm_id>

<vm_id>dc168a6a-4aeb-4e81-abd9-91d7568b5f7c</vm_id>

<vm_id>9ffec58b-4b9d-4072-b944-5413bf7fcf07</vm_id>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

<state>VM_ALIVE_STATE</state>

<snip>

Stop the CF and EM VMs one by one by their VM names. The VM names are noted in the section Identify the VMs Hosted in the OSD-Compute Node.

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea

After they are stopped, the VMs must enter the SHUTOFF state:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_SHUTOFF_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

<vm_id>507d67c2-1d00-4321-b9d1-da879af524f8</vm_id>

<vm_id>dc168a6a-4aeb-4e81-abd9-91d7568b5f7c</vm_id>

<vm_id>9ffec58b-4b9d-4072-b944-5413bf7fcf07</vm_id>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

<state>VM_SHUTOFF_STATE</state>

<snip>
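Instead of reading the filtered data model by eye, you can extract the state that follows a given `<vm_name>` element. This minimal sketch relies on the element order of the egrep output above, where each `<vm_name>` line is followed by that VM's `<state>` line. That order is an assumption and may differ on other ESC releases. `vm_state` is a hypothetical helper.

```shell
#!/bin/sh
# vm_state VM_NAME (hypothetical helper)
# Reads the filtered `esc_nc_cli get esc_datamodel` output on stdin and prints
# the first <state> value that appears after <vm_name>VM_NAME</vm_name>.
vm_state() {
    awk -v vm="<vm_name>$1</vm_name>" '
        index($0, vm) { found = 1; next }
        found && /<state>/ {
            s = $0
            sub(/.*<state>/, "", s)      # drop everything up to the tag
            sub(/<\/state>.*/, "", s)    # drop the closing tag
            print s
            exit
        }'
}
```

For example, `./esc_nc_cli get esc_datamodel | vm_state VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229` should print VM_SHUTOFF_STATE once the stop has completed.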

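Migrate the ESC to Standby Mode

Log in to the ESC hosted in the compute node and check whether it is in the master state. If it is, switch the ESC to standby mode: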

[admin@VNF2-esc-esc-0 esc-cli]$ escadm status

0 ESC status=0 ESC Master Healthy

[admin@VNF2-esc-esc-0 ~]$ sudo service keepalived stop

Stopping keepalived: [ OK ]

[admin@VNF2-esc-esc-0 ~]$ escadm status

1 ESC status=0 In SWITCHING_TO_STOP state. Please check status after a while.

[admin@VNF2-esc-esc-0 ~]$ sudo reboot

Broadcast message from admin@vnf1-esc-esc-0.novalocal

(/dev/pts/0) at 13:32 ...

The system is going down for reboot NOW!
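The master/backup check above can be scripted by reading the `escadm status` line. This minimal sketch assumes the wording shown in this document (`ESC Master Healthy`, `ESC Backup Healthy`). `esc_role` is a hypothetical helper, and it prints nothing for transitional states such as SWITCHING_TO_STOP.

```shell
#!/bin/sh
# esc_role (hypothetical helper)
# Reads `escadm status` output on stdin and prints "master" or "backup";
# prints nothing for any other state.
esc_role() {
    awk '/ESC Master/ { print "master"; exit }
         /ESC Backup/ { print "backup"; exit }'
}

# Example (run on the ESC VM, not executed here):
#   if [ "$(escadm status | esc_role)" = master ]; then
#       sudo service keepalived stop
#   fi
```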

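Remove the OSD-Compute Node from the Nova Aggregate List

List the nova aggregates and identify the aggregates that correspond to the compute server, based on the VNF it hosts. Usually, they are in the format <VNFNAME>-EM-MGMT<X> and <VNFNAME>-CF-MGMT<X>: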

[stack@director ~]$ nova aggregate-list

+----+-------------------+-------------------+

| Id | Name | Availability Zone |

+----+-------------------+-------------------+

| 29 | POD1-AUTOIT | mgmt |

| 57 | VNF1-SERVICE1 | - |

| 60 | VNF1-EM-MGMT1 | - |

| 63 | VNF1-CF-MGMT1 | - |

| 66 | VNF2-CF-MGMT2 | - |

| 69 | VNF2-EM-MGMT2 | - |

| 72 | VNF2-SERVICE2 | - |

| 75 | VNF3-CF-MGMT3 | - |

| 78 | VNF3-EM-MGMT3 | - |

| 81 | VNF3-SERVICE3 | - |

+----+-------------------+-------------------+

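In this example, the OSD-Compute server belongs to VNF2, so the corresponding aggregates are VNF2-CF-MGMT2 and VNF2-EM-MGMT2. The commands that follow also remove the node from the POD1-AUTOIT aggregate.

Remove the OSD-Compute node from the identified aggregates: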

nova aggregate-remove-host

[stack@director ~]$ nova aggregate-remove-host VNF2-CF-MGMT2 pod1-osd-compute-0.localdomain

[stack@director ~]$ nova aggregate-remove-host VNF2-EM-MGMT2 pod1-osd-compute-0.localdomain

[stack@director ~]$ nova aggregate-remove-host POD1-AUTOIT pod1-osd-compute-0.localdomain
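You can use the naming convention described above to find a VNF's CF and EM aggregates without reading the table. This minimal sketch assumes the aggregate names follow `<VNFNAME>-CF-MGMT<X>` / `<VNFNAME>-EM-MGMT<X>` exactly. `vnf_aggregates` is a hypothetical helper. Aggregates outside that convention, such as POD1-AUTOIT, still have to be listed explicitly.

```shell
#!/bin/sh
# vnf_aggregates VNF (hypothetical helper)
# Reads `nova aggregate-list` output on stdin and prints the aggregate names
# that match <VNF>-CF-MGMT<X> or <VNF>-EM-MGMT<X>.
vnf_aggregates() {
    awk -F'|' -v vnf="$1" '
        NF >= 4 {
            name = $3
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            if (name ~ ("^" vnf "-(CF|EM)-MGMT[0-9]+$")) print name
        }'
}

# Example (not executed here); POD1-AUTOIT is added by hand:
#   for agg in $(nova aggregate-list | vnf_aggregates VNF2) POD1-AUTOIT; do
#       nova aggregate-remove-host "$agg" pod1-osd-compute-0.localdomain
#   done
```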

Verify that the OSD-Compute node has been removed from the aggregates. The host must no longer be listed under any of them:

nova aggregate-show

[stack@director ~]$ nova aggregate-show VNF2-CF-MGMT2

[stack@director ~]$ nova aggregate-show VNF2-EM-MGMT2

[stack@director ~]$ nova aggregate-show POD1-AUTOIT

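Case 2. OSD-Compute Node Hosts Auto-Deploy/Auto-IT/EM/UAS

Back Up the CDB of Auto-Deploy

Back up the autodeploy confd cdb data periodically or after every activation/deactivation, and save the file to a backup server. Auto-Deploy is not redundant, and if this data is lost, it is difficult to gracefully deactivate the deployment.

Log in to the Auto-Deploy VM and back up the confd cdb directory: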

ubuntu@auto-deploy-iso-2007-uas-0:~$sudo -i

root@auto-deploy-iso-2007-uas-0:~#service uas-confd stop

uas-confd stop/waiting

root@auto-deploy-iso-2007-uas-0:~# cd /opt/cisco/usp/uas/confd-6.3.1/var/confd

root@auto-deploy-iso-2007-uas-0:/opt/cisco/usp/uas/confd-6.3.1/var/confd#tar cvf autodeploy_cdb_backup.tar cdb/

cdb/

cdb/O.cdb

cdb/C.cdb

cdb/aaa_init.xml

cdb/A.cdb

root@auto-deploy-iso-2007-uas-0:~# service uas-confd start

uas-confd start/running, process 13852

Note: Copy autodeploy_cdb_backup.tar to the backup server.

Back Up system.cfg from Auto-IT

Back up the system.cfg file to the backup server:
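Before you rely on the archive, it can be worth confirming that it actually contains the CDB files listed in the tar output above. `check_cdb_backup` is a hypothetical helper. The expected member names (`cdb/A.cdb`, `cdb/C.cdb`, `cdb/O.cdb`) are taken from the sample run and are assumed to be the ones that matter.

```shell
#!/bin/sh
# check_cdb_backup ARCHIVE (hypothetical helper)
# Succeeds only if ARCHIVE lists cdb/A.cdb, cdb/C.cdb and cdb/O.cdb.
check_cdb_backup() {
    for member in cdb/A.cdb cdb/C.cdb cdb/O.cdb; do
        if ! tar -tf "$1" | grep -qx "$member"; then
            echo "missing $member in $1" >&2
            return 1
        fi
    done
    return 0
}
```

Usage: `check_cdb_backup autodeploy_cdb_backup.tar && echo "backup looks complete"`, run either before the copy or on the backup server afterwards.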

Auto-it = 10.1.1.2

Backup server = 10.2.2.2

[stack@director ~]$ ssh ubuntu@10.1.1.2

ubuntu@10.1.1.2's password:

Welcome to Ubuntu 14.04.3 LTS (GNU/Linux 3.13.0-76-generic x86_64)

* Documentation: https://help.ubuntu.com/

System information as of Wed Jun 13 16:21:34 UTC 2018

System load: 0.02 Processes: 87

Usage of /: 15.1% of 78.71GB Users logged in: 0

Memory usage: 13% IP address for eth0: 172.16.182.4

Swap usage: 0%

Graph this data and manage this system at:

https://landscape.canonical.com/

Get cloud support with Ubuntu Advantage Cloud Guest:

http://www.ubuntu.com/business/services/cloud

Cisco Ultra Services Platform (USP)

Build Date: Wed Feb 14 12:58:22 EST 2018

Description: UAS build assemble-uas#1891

sha1: bf02ced

ubuntu@auto-it-vnf-uas-0:~$ scp -r /opt/cisco/usp/uploads/system.cfg root@10.2.2.2:/home/stack

root@10.2.2.2's password:

system.cfg 100% 565 0.6KB/s 00:00

ubuntu@auto-it-vnf-uas-0:~$

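Note: The steps to gracefully shut down the EM/UAS hosted on OSD-Compute-0 are the same in both cases. Refer to Case 1.

OSD-Compute Node Deletion

The steps in this section are common, irrespective of the VMs hosted in the compute node.

Delete the OSD-Compute Node from the Service List

Delete the compute service from the service list: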

[stack@director ~]$ source corerc

[stack@director ~]$ openstack compute service list | grep osd-compute-0

| 404 | nova-compute | pod1-osd-compute-0.localdomain | nova | enabled | up | 2018-05-08T18:40:56.000000 |

openstack compute service delete

[stack@director ~]$ openstack compute service delete 404

Delete the Neutron Agents

Delete the old associated neutron agents, including the Open vSwitch agent, of the compute server:

[stack@director ~]$ openstack network agent list | grep osd-compute-0

| c3ee92ba-aa23-480c-ac81-d3d8d01dcc03 | Open vSwitch agent | pod1-osd-compute-0.localdomain | None | False | UP | neutron-openvswitch-agent |

| ec19cb01-abbb-4773-8397-8739d9b0a349 | NIC Switch agent | pod1-osd-compute-0.localdomain | None | False | UP | neutron-sriov-nic-agent |

openstack network agent delete

[stack@director ~]$ openstack network agent delete c3ee92ba-aa23-480c-ac81-d3d8d01dcc03

[stack@director ~]$ openstack network agent delete ec19cb01-abbb-4773-8397-8739d9b0a349
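The compute service list and the network agent list above both print the ID in the first column and the host in the third, so one filter can return the IDs to delete from either table. This minimal sketch assumes that table layout. `ids_for_host` is a hypothetical helper.

```shell
#!/bin/sh
# ids_for_host HOST (hypothetical helper)
# Reads `openstack compute service list` or `openstack network agent list`
# output on stdin and prints the ID of every row whose Host column equals
# HOST or HOST.<domain>.
ids_for_host() {
    awk -F'|' -v h="$1" '
        NF >= 4 {
            id = $2; host = $4
            gsub(/^[ \t]+|[ \t]+$/, "", id)
            gsub(/^[ \t]+|[ \t]+$/, "", host)
            if (host == h || index(host, h ".") == 1) print id
        }'
}

# Example (not executed here): print the delete commands for review first.
#   openstack network agent list | ids_for_host pod1-osd-compute-0 |
#       while read -r id; do echo openstack network agent delete "$id"; done
```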

Delete from the Nova and Ironic Databases

Delete the node from the nova list and from the ironic database, and then verify the deletion:

[stack@director ~]$ source stackrc

[stack@al01-pod1-ospd ~]$ nova list | grep osd-compute-0

| c2cfa4d6-9c88-4ba0-9970-857d1a18d02c | pod1-osd-compute-0 | ACTIVE | - | Running | ctlplane=192.200.0.114 |

[stack@al01-pod1-ospd ~]$ nova delete c2cfa4d6-9c88-4ba0-9970-857d1a18d02c

nova show| grep hypervisor

[stack@director ~]$ nova show pod1-osd-compute-0 | grep hypervisor

| OS-EXT-SRV-ATTR:hypervisor_hostname | 4ab21917-32fa-43a6-9260-02538b5c7a5a

ironic node-delete

[stack@director ~]$ ironic node-delete 4ab21917-32fa-43a6-9260-02538b5c7a5a

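[stack@director ~]$ ironic node-list   (the deleted node must no longer be listed)

Delete from the Overcloud

Create a script file named delete_node.sh with the content shown. Ensure that the templates mentioned are the same as the ones used in the deploy.sh script for the stack deployment. The --stack option takes the stack name followed by the node to delete, as in the sample run (pod1 49ac5f22-469e-4b84-badc-031083db0533):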
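The Ironic node UUID passed to `ironic node-delete` is the value of `OS-EXT-SRV-ATTR:hypervisor_hostname` in `nova show`. This minimal sketch extracts it, assuming the two-column `| Property | Value |` table shown above. `hypervisor_node` is a hypothetical helper. The lookup depends on the instance still being visible to `nova show`, so capturing the value before `nova delete` is probably the safer order.

```shell
#!/bin/sh
# hypervisor_node (hypothetical helper)
# Reads `nova show <server>` output on stdin and prints the value of
# OS-EXT-SRV-ATTR:hypervisor_hostname.
hypervisor_node() {
    awk -F'|' '
        {
            key = $2
            gsub(/^[ \t]+|[ \t]+$/, "", key)
        }
        key == "OS-EXT-SRV-ATTR:hypervisor_hostname" {
            val = $3
            gsub(/^[ \t]+|[ \t]+$/, "", val)
            print val
            exit
        }'
}

# Example (not executed here):
#   node=$(nova show pod1-osd-compute-0 | hypervisor_node)
#   ironic node-delete "$node"
```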

delete_node.sh

openstack overcloud node delete --templates -e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml -e /home/stack/custom-templates/network.yaml -e /home/stack/custom-templates/ceph.yaml -e /home/stack/custom-templates/compute.yaml -e /home/stack/custom-templates/layout.yaml -e /home/stack/custom-templates/layout.yaml --stack

[stack@director ~]$ source stackrc

[stack@director ~]$ /bin/sh delete_node.sh

+ openstack overcloud node delete --templates -e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml -e /home/stack/custom-templates/network.yaml -e /home/stack/custom-templates/ceph.yaml -e /home/stack/custom-templates/compute.yaml -e /home/stack/custom-templates/layout.yaml -e /home/stack/custom-templates/layout.yaml --stack pod1 49ac5f22-469e-4b84-badc-031083db0533

Deleting the following nodes from stack pod1:

- 49ac5f22-469e-4b84-badc-031083db0533

Started Mistral Workflow. Execution ID: 4ab4508a-c1d5-4e48-9b95-ad9a5baa20ae

real 0m52.078s

user 0m0.383s

sys 0m0.086s

Wait for the OpenStack stack operation to reach the COMPLETE state:

[stack@director ~]$ openstack stack list

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| ID | Stack Name | Stack Status | Creation Time | Updated Time |

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| 5df68458-095d-43bd-a8c4-033e68ba79a0 | pod1 | UPDATE_COMPLETE | 2018-05-08T21:30:06Z | 2018-05-08T20:42:48Z |

+--------------------------------------+------------+-----------------+----------------------+----------------------+
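You can automate the wait for the stack by classifying the status string. `stack_state` is a hypothetical helper. The polling example assumes that `openstack stack show` exposes a `stack_status` field that can be selected with the standard `-f value -c` output options.

```shell
#!/bin/sh
# stack_state STATUS (hypothetical helper)
# Classifies a Heat stack status: prints "done" for *_COMPLETE,
# "failed" for *_FAILED and "wait" for anything else (e.g. *_IN_PROGRESS).
stack_state() {
    case "$1" in
        *_COMPLETE) echo done ;;
        *_FAILED) echo failed ;;
        *) echo wait ;;
    esac
}

# Example (not executed here), polling every 60 seconds:
#   while :; do
#       s=$(stack_state "$(openstack stack show pod1 -f value -c stack_status)")
#       [ "$s" = wait ] || break
#       sleep 60
#   done
#   echo "stack finished: $s"
```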

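Install the New OSD-Compute Node

- The steps to install a new UCS C240 M4 server and the initial setup steps can be referred from:

- After the server is installed, insert the hard disks into the same slots they occupied in the old server.

- Log in to the server with the CIMC IP.

- Perform a BIOS upgrade if the firmware is not the recommended version used previously. The steps for the BIOS upgrade are given here: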

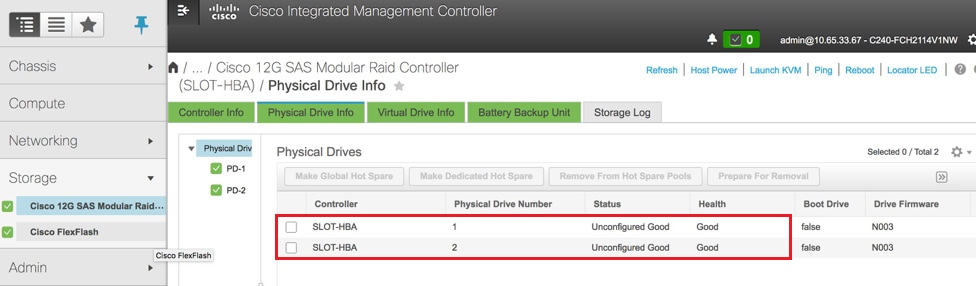

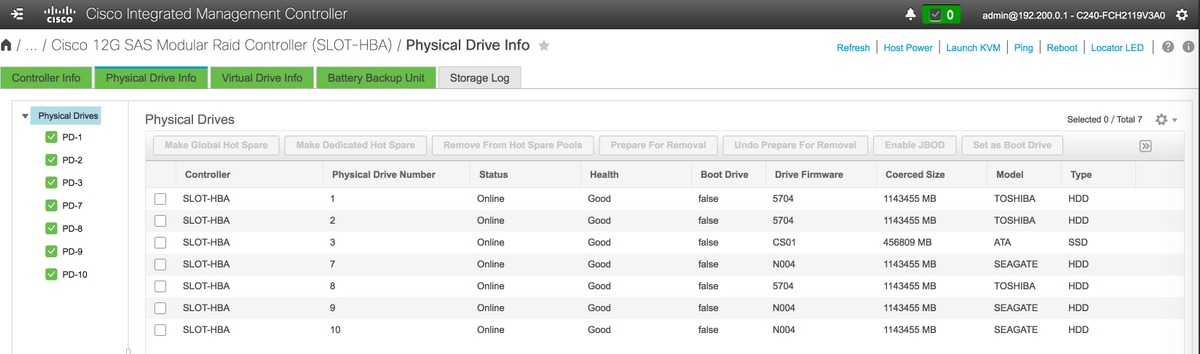

- Verify the status of the physical drives. It must be Unconfigured Good.

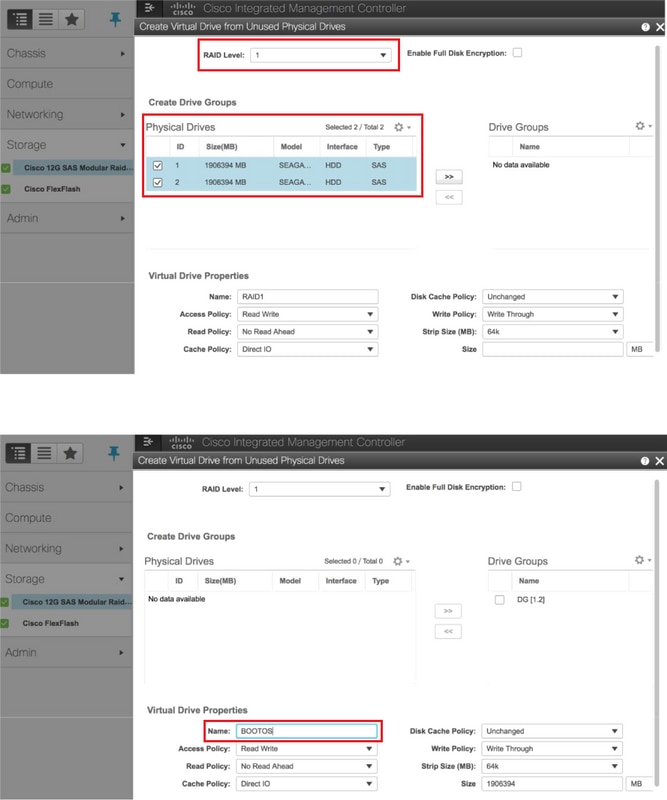

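- Create a virtual drive from the physical drives with RAID Level 1:

Storage > Cisco 12G SAS Modular Raid Controller (SLOT-HBA) > Physical Drive Info

Note: This image is for illustration purposes only. In the actual OSD-Compute CIMC, you see seven physical drives, in slots 1, 2, 3, 7, 8, 9 and 10, in the Unconfigured Good state, because no virtual drives have been created from them yet.

Storage > Cisco 12G SAS Modular Raid Controller (SLOT-HBA) > Controller Info > Create Virtual Drive from Unused Physical Drives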

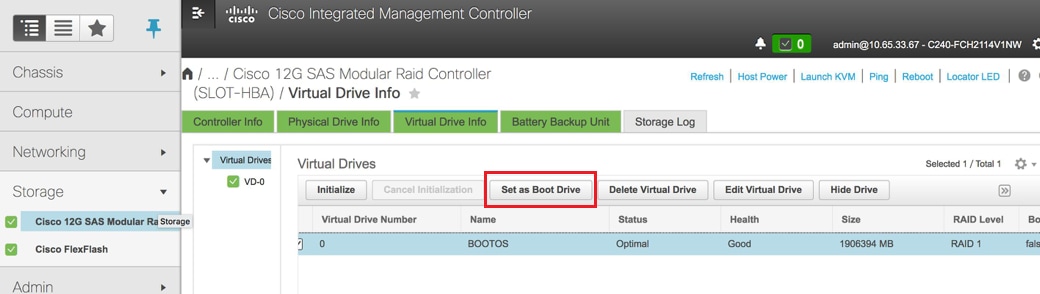

Select the VD and configure Set as Boot Drive

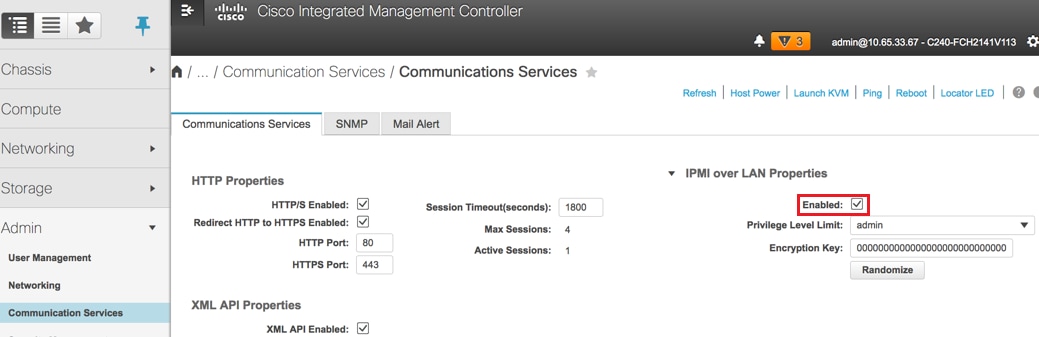

Enable IPMI over LAN: Admin > Communication Services > Communication Services

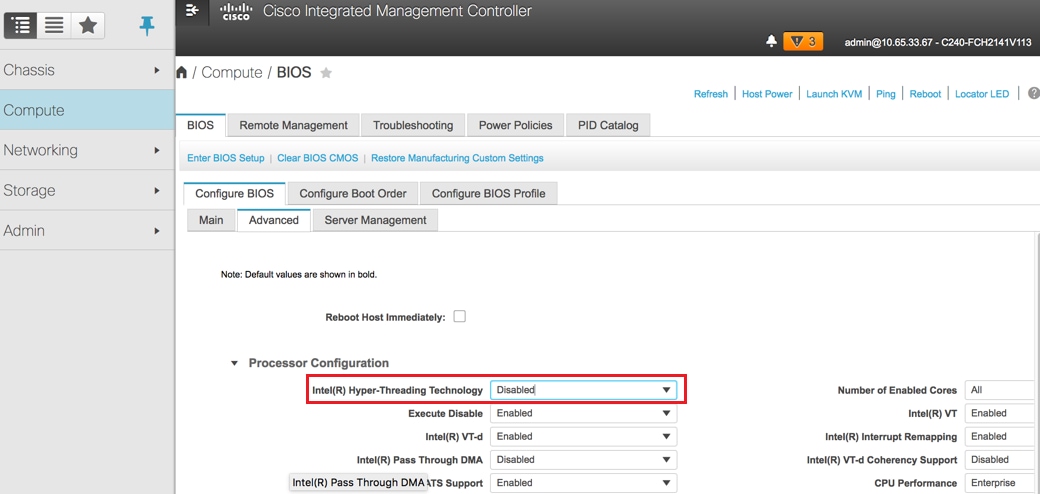

Disable hyperthreading: Compute > BIOS > Configure BIOS > Advanced > Processor Configuration

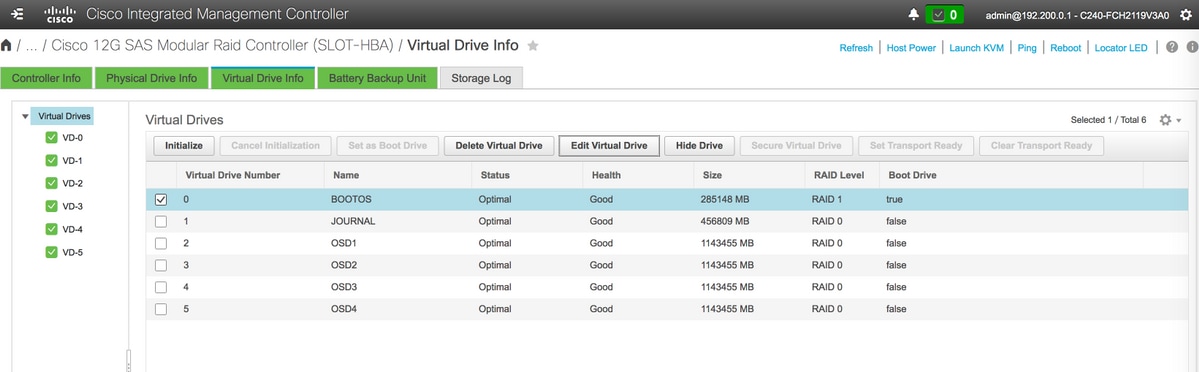

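- Similar to the BOOTOS VD created with physical drives 1 and 2, create five more virtual drives:

JOURNAL > From physical drive number 3

OSD1 > From physical drive number 7

OSD2 > From physical drive number 8

OSD3 > From physical drive number 9

OSD4 > From physical drive number 10

- Finally, the physical drives and virtual drives must look similar to these:

Virtual Drives

Physical Drives

Note: The images shown here and the configuration steps in this section refer to firmware version 3.0(3e). There might be slight variations if you work on other versions.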

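Add the New OSD-Compute Node to the Overcloud

The steps in this section are common, irrespective of the VMs hosted by the compute node.

Add the compute server with a different index.

Create an add_node.json file that contains only the details of the new compute server to be added. Ensure that the index number for the new OSD-Compute server has not been used before. Typically, use the next number after the highest existing OSD-Compute index.

Example: In this 2-VNF system, OSD-Compute-0 through OSD-Compute-2 already exist, so the replacement for OSD-Compute-0 is created as OSD-Compute-3.

Note: Make sure that the file is valid JSON.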

[stack@director ~]$ cat add_node.json

{

"nodes":[

{

"mac":[

"<MAC_ADDRESS>"

],

"capabilities": "node:osd-compute-3,boot_option:local",

"cpu":"24",

"memory":"256000",

"disk":"3000",

"arch":"x86_64",

"pm_type":"pxe_ipmitool",

"pm_user":"admin",

"pm_password":"<PASSWORD>",

"pm_addr":"192.100.0.5"

}

]

}
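A malformed add_node.json causes the import to fail, so a quick syntax check before you import can save a round trip. This minimal sketch relies on Python's standard `json.tool` module. `check_json` is a hypothetical helper. It only validates syntax, not the Ironic field names or values.

```shell
#!/bin/sh
# check_json FILE (hypothetical helper)
# Succeeds if FILE is syntactically valid JSON, using python3 (or python if
# python3 is not on PATH) and the standard json.tool module.
check_json() {
    if command -v python3 >/dev/null 2>&1; then py=python3; else py=python; fi
    "$py" -m json.tool "$1" >/dev/null 2>&1
}

# Example (not executed here):
#   check_json add_node.json || echo "add_node.json is not valid JSON" >&2
```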

Import the JSON file:

[stack@director ~]$ openstack baremetal import --json add_node.json

Started Mistral Workflow. Execution ID: 78f3b22c-5c11-4d08-a00f-8553b09f497d

Successfully registered node UUID 7eddfa87-6ae6-4308-b1d2-78c98689a56e

Started Mistral Workflow. Execution ID: 33a68c16-c6fd-4f2a-9df9-926545f2127e

Successfully set all nodes to available.

Run node introspection with the UUID reported in the previous step:

[stack@director ~]$ openstack baremetal node manage 7eddfa87-6ae6-4308-b1d2-78c98689a56e

[stack@director ~]$ ironic node-list |grep 7eddfa87

| 7eddfa87-6ae6-4308-b1d2-78c98689a56e | None | None | power off | manageable | False |

[stack@director ~]$ openstack overcloud node introspect 7eddfa87-6ae6-4308-b1d2-78c98689a56e --provide

Started Mistral Workflow. Execution ID: e320298a-6562-42e3-8ba6-5ce6d8524e5c

Waiting for introspection to finish...

Successfully introspected all nodes.

Introspection completed.

Started Mistral Workflow. Execution ID: c4a90d7b-ebf2-4fcb-96bf-e3168aa69dc9

Successfully set all nodes to available.

[stack@director ~]$ ironic node-list |grep available

| 7eddfa87-6ae6-4308-b1d2-78c98689a56e | None | None | power off | available | False |
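Before the deployment runs, the node must reach the `available` provisioning state, as in the output above. This minimal sketch reads that column, assuming the six-column `ironic node-list` layout shown here (UUID, Name, Instance UUID, Power State, Provisioning State, Maintenance). `provision_state` is a hypothetical helper.

```shell
#!/bin/sh
# provision_state UUID (hypothetical helper)
# Reads `ironic node-list` output on stdin and prints the Provisioning State
# of the node with the given UUID (fifth table column).
provision_state() {
    awk -F'|' -v u="$1" '
        {
            id = $2
            gsub(/^[ \t]+|[ \t]+$/, "", id)
        }
        id == u {
            st = $6
            gsub(/^[ \t]+|[ \t]+$/, "", st)
            print st
            exit
        }'
}

# Example (not executed here):
#   ironic node-list | provision_state 7eddfa87-6ae6-4308-b1d2-78c98689a56e
```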

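Add the IP addresses to custom-templates/layout.yaml under OsdComputeIPs. Because OSD-Compute-0 is being replaced in this case, its addresses are added to the end of the list for each network type: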

OsdComputeIPs:

internal_api:

- 11.120.0.43

- 11.120.0.44

- 11.120.0.45

- 11.120.0.43 <<< take osd-compute-0 .43 and add here

tenant:

- 11.117.0.43

- 11.117.0.44

- 11.117.0.45

- 11.117.0.43 << and here

storage:

- 11.118.0.43

- 11.118.0.44

- 11.118.0.45

- 11.118.0.43 << and here

storage_mgmt:

- 11.119.0.43

- 11.119.0.44

- 11.119.0.45

- 11.119.0.43 << and here

Run the deploy.sh script that was previously used to deploy the stack, in order to add the new compute node to the overcloud stack:

[stack@director ~]$ ./deploy.sh

++ openstack overcloud deploy --templates -r /home/stack/custom-templates/custom-roles.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml -e /home/stack/custom-templates/network.yaml -e /home/stack/custom-templates/ceph.yaml -e /home/stack/custom-templates/compute.yaml -e /home/stack/custom-templates/layout.yaml --stack ADN-ultram --debug --log-file overcloudDeploy_11_06_17__16_39_26.log --ntp-server 172.24.167.109 --neutron-flat-networks phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1 --neutron-network-vlan-ranges datacentre:1001:1050 --neutron-disable-tunneling --verbose --timeout 180

…

Starting new HTTP connection (1): 192.200.0.1

"POST /v2/action_executions HTTP/1.1" 201 1695

HTTP POST http://192.200.0.1:8989/v2/action_executions 201

Overcloud Endpoint: http://10.1.2.5:5000/v2.0

Overcloud Deployed

clean_up DeployOvercloud:

END return value: 0

real 38m38.971s

user 0m3.605s

sys 0m0.466s

Wait for the OpenStack stack status to become COMPLETE:

[stack@director ~]$ openstack stack list

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| ID | Stack Name | Stack Status | Creation Time | Updated Time |

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| 5df68458-095d-43bd-a8c4-033e68ba79a0 | pod1 | UPDATE_COMPLETE | 2017-11-02T21:30:06Z | 2017-11-06T21:40:58Z |

+--------------------------------------+------------+-----------------+----------------------+----------------------+

Check that the new OSD-Compute node is in the Active state:

[stack@director ~]$ source stackrc

[stack@director ~]$ nova list |grep osd-compute-3

| 0f2d88cd-d2b9-4f28-b2ca-13e305ad49ea | pod1-osd-compute-3 | ACTIVE | - | Running | ctlplane=192.200.0.117 |

[stack@director ~]$ source corerc

[stack@director ~]$ openstack hypervisor list |grep osd-compute-3

| 63 | pod1-osd-compute-3.localdomain |

Log in to the new OSD-Compute server and check the Ceph processes. Initially, the status is HEALTH_WARN while Ceph recovers:

[heat-admin@pod1-osd-compute-3 ~]$ sudo ceph -s

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_WARN

223 pgs backfill_wait

4 pgs backfilling

41 pgs degraded

227 pgs stuck unclean

41 pgs undersized

recovery 45229/1300136 objects degraded (3.479%)

recovery 525016/1300136 objects misplaced (40.382%)

monmap e1: 3 mons at {Pod1-controller-0=11.118.0.40:6789/0,Pod1-controller-1=11.118.0.41:6789/0,Pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 Pod1-controller-0,Pod1-controller-1,Pod1-controller-2

osdmap e986: 12 osds: 12 up, 12 in; 225 remapped pgs

flags sortbitwise,require_jewel_osds

pgmap v781746: 704 pgs, 6 pools, 533 GB data, 344 kobjects

1553 GB used, 11840 GB / 13393 GB avail

45229/1300136 objects degraded (3.479%)

525016/1300136 objects misplaced (40.382%)

477 active+clean

186 active+remapped+wait_backfill

37 active+undersized+degraded+remapped+wait_backfill

4 active+undersized+degraded+remapped+backfilling

However, after a short period (20 minutes in this case), Ceph returns to the HEALTH_OK state:

[heat-admin@pod1-osd-compute-3 ~]$ sudo ceph -s

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_OK

monmap e1: 3 mons at {Pod1-controller-0=11.118.0.40:6789/0,Pod1-controller-1=11.118.0.41:6789/0,Pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 Pod1-controller-0,Pod1-controller-1,Pod1-controller-2

osdmap e1398: 12 osds: 12 up, 12 in

flags sortbitwise,require_jewel_osds

pgmap v784311: 704 pgs, 6 pools, 533 GB data, 344 kobjects

1599 GB used, 11793 GB / 13393 GB avail

704 active+clean

client io 8168 kB/s wr, 0 op/s rd, 32 op/s wr

[heat-admin@pod1-osd-compute-3 ~]$ sudo ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-1 13.07996 root default

-2 0 host pod1-osd-compute-0

-3 4.35999 host pod1-osd-compute-2

1 1.09000 osd.1 up 1.00000 1.00000

4 1.09000 osd.4 up 1.00000 1.00000

7 1.09000 osd.7 up 1.00000 1.00000

10 1.09000 osd.10 up 1.00000 1.00000

-4 4.35999 host pod1-osd-compute-1

2 1.09000 osd.2 up 1.00000 1.00000

5 1.09000 osd.5 up 1.00000 1.00000

8 1.09000 osd.8 up 1.00000 1.00000

11 1.09000 osd.11 up 1.00000 1.00000

-5 4.35999 host pod1-osd-compute-3

0 1.09000 osd.0 up 1.00000 1.00000

3 1.09000 osd.3 up 1.00000 1.00000

6 1.09000 osd.6 up 1.00000 1.00000

9 1.09000 osd.9 up 1.00000 1.00000
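Rather than re-running `ceph -s` by hand until recovery finishes, you can poll the health value. `ceph_health` is a hypothetical helper. It accepts both the `health HEALTH_OK` form shown above and a `health: HEALTH_OK` form that later Ceph releases are assumed to print.

```shell
#!/bin/sh
# ceph_health (hypothetical helper)
# Reads `ceph -s` output on stdin and prints the health value,
# e.g. HEALTH_WARN or HEALTH_OK.
ceph_health() {
    awk '$1 == "health" || $1 == "health:" { print $2; exit }'
}

# Example (not executed here), checking once a minute:
#   until [ "$(sudo ceph -s | ceph_health)" = HEALTH_OK ]; do sleep 60; done
```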

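Post-Replacement Server Settings

After the server is added to the overcloud, refer to this link to apply the settings that were present on the old server:

Restore the VMs

Case 1. OSD-Compute Node Hosts CF, ESC, EM and UAS

Add the Node to the Nova Aggregate List

Add the OSD-Compute node to the aggregate hosts and verify that the host has been added. In this case, the OSD-Compute node must be added to both the CF and EM host aggregates.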

nova aggregate-add-host

[stack@director ~]$ nova aggregate-add-host VNF2-CF-MGMT2 pod1-osd-compute-3.localdomain

[stack@director ~]$ nova aggregate-add-host VNF2-EM-MGMT2 pod1-osd-compute-3.localdomain

[stack@director ~]$ nova aggregate-add-host POD1-AUTOIT pod1-osd-compute-3.localdomain

nova aggregate-show

[stack@director ~]$ nova aggregate-show VNF2-CF-MGMT2

[stack@director ~]$ nova aggregate-show VNF2-EM-MGMT2

[stack@director ~]$ nova aggregate-show POD1-AUTOIT

Recover the UAS VM

Check the status of the UAS VM in the nova list and delete it:

[stack@director ~]$ nova list | grep VNF2-UAS-uas-0

| 307a704c-a17c-4cdc-8e7a-3d6e7e4332fa | VNF2-UAS-uas-0 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.10; VNF2-UAS-uas-management=172.168.10.3

[stack@director ~]$ nova delete VNF2-UAS-uas-0

Request to delete server VNF2-UAS-uas-0 has been accepted.

To recover the autovnf-uas VM, run the uas-check script to check its state. It must report an error. Then run it again with the --fix option to recreate the missing UAS VM:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts/

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS

2017-12-08 12:38:05,446 - INFO: Check of AutoVNF cluster started

2017-12-08 12:38:07,925 - INFO: Instance 'vnf1-UAS-uas-0' status is 'ERROR'

2017-12-08 12:38:07,925 - INFO: Check completed, AutoVNF cluster has recoverable errors

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS --fix

2017-11-22 14:01:07,215 - INFO: Check of AutoVNF cluster started

2017-11-22 14:01:09,575 - INFO: Instance 'VNF2-UAS-uas-0' status is 'ERROR'

2017-11-22 14:01:09,575 - INFO: Check completed, AutoVNF cluster has recoverable errors

2017-11-22 14:01:09,778 - INFO: Removing instance 'VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Removed instance 'VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Creating instance 'VNF2-UAS-uas-0' and attaching volume 'VNF2-UAS-uas-vol-0'

2017-11-22 14:01:49,525 - INFO: Created instance 'VNF2-UAS-uas-0'

Log in to autovnf-uas. Wait a few minutes; the UAS must return to a good state:

VNF2-autovnf-uas-0#show uas

uas version 1.0.1-1

uas state ha-active

uas ha-vip 172.17.181.101

INSTANCE IP STATE ROLE

-----------------------------------

172.17.180.6 alive CONFD-SLAVE

172.17.180.7 alive CONFD-MASTER

172.17.180.9 alive NA

Note: If uas-check.py --fix fails, you might need to copy this file and run the script again:

[stack@director ~]$ mkdir -p /opt/cisco/usp/apps/auto-it/common/uas-deploy/

[stack@director ~]$ cp /opt/cisco/usp/uas-installer/common/uas-deploy/userdata-uas.txt /opt/cisco/usp/apps/auto-it/common/uas-deploy/

Recover the ESC VM

Check the status of the ESC VM in the nova list and delete it:

[stack@director scripts]$ nova list |grep ESC-1

| c566efbf-1274-4588-a2d8-0682e17b0d41 | VNF2-ESC-ESC-1 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.14; VNF2-UAS-uas-management=172.168.10.4 |

[stack@director scripts]$ nova delete VNF2-ESC-ESC-1

Request to delete server VNF2-ESC-ESC-1 has been accepted.

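From AutoVNF-UAS, find the ESC deployment transaction. Then, in the log for that transaction, find the bootvm.py command line that was used to create the ESC instance: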

ubuntu@VNF2-uas-uas-0:~$ sudo -i

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show transaction

TX ID TX TYPE DEPLOYMENT ID TIMESTAMP STATUS

-----------------------------------------------------------------------------------------------------------------------------

35eefc4a-d4a9-11e7-bb72-fa163ef8df2b vnf-deployment VNF2-DEPLOYMENT 2017-11-29T02:01:27.750692-00:00 deployment-success

73d9c540-d4a8-11e7-bb72-fa163ef8df2b vnfm-deployment VNF2-ESC 2017-11-29T01:56:02.133663-00:00 deployment-success

VNF2-uas-uas-0#show logs 73d9c540-d4a8-11e7-bb72-fa163ef8df2b | display xml

<config xmlns="http://tail-f.com/ns/config/1.0">

<logs xmlns="http://www.cisco.com/usp/nfv/usp-autovnf-oper">

<tx-id>73d9c540-d4a8-11e7-bb72-fa163ef8df2b</tx-id>

<log>2017-11-29 01:56:02,142 - VNFM Deployment RPC triggered for deployment: VNF2-ESC, deactivate: 0

2017-11-29 01:56:02,179 - Notify deployment

..

2017-11-29 01:57:30,385 - Creating VNFM 'VNF2-ESC-ESC-1' with [python //opt/cisco/vnf-staging/bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689 --net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username ****** --os_password ****** --bs_os_auth_url http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username ****** --bs_os_password ****** --esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --encrypt_key ****** --user_pass ****** --user_confd_pass ****** --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3 172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh --file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py --file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

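Save the bootvm.py command line to a shell script file (esc.sh), and replace every masked username ****** and password ****** value with the correct information (typically core/<PASSWORD>). You also need to remove the --encrypt_key option. For --user_pass and --user_confd_pass, use the format username:password (for example, admin:<PASSWORD>).

Find the URL of bootvm.py in the running-config and download the bootvm.py file to the autovnf-uas VM. In this case, 10.1.2.3 is the IP of the Auto-IT VM: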
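You can apply the edits described above with sed instead of by hand. This minimal sketch assumes that every masked value in the copied line is exactly `******`, as in the log above. `fill_bootvm_creds` is a hypothetical helper. The values passed to it must not contain `/`, `&` or `\`, because sed would interpret them.

```shell
#!/bin/sh
# fill_bootvm_creds OS_USER OS_PASS UI_USER UI_PASS (hypothetical helper)
# Reads the masked bootvm.py command line on stdin and writes it to stdout with
# --encrypt_key removed, --os_/--bs_os_ credentials filled in, and
# --user_pass / --user_confd_pass set to UI_USER:UI_PASS.
fill_bootvm_creds() {
    sed -e 's/--encrypt_key \*\*\*\*\*\* //' \
        -e "s/\(--\(bs_\)\{0,1\}os_username\) \*\*\*\*\*\*/\1 $1/g" \
        -e "s/\(--\(bs_\)\{0,1\}os_password\) \*\*\*\*\*\*/\1 $2/g" \
        -e "s/\(--user_\(confd_\)\{0,1\}pass\) \*\*\*\*\*\*/\1 $3:$4/g"
}

# Example (not executed here); bootvm_line.txt is a hypothetical file holding
# the command line copied from the transaction log:
#   fill_bootvm_creds core '<PASSWORD>' admin '<PASSWORD>' < bootvm_line.txt > esc.sh
```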

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show running-config autovnf-vnfm:vnfm

…

configs bootvm

value http://10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

!

root@VNF2-uas-uas-0:~# wget http://10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

--2017-12-01 20:25:52-- http://10.1.2.3/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

Connecting to 10.1.2.3:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 127771 (125K) [text/x-python]

Saving to: ‘bootvm-2_3_2_155.py’

100%[=====================================================================================>] 127,771 --.-K/s in 0.001s

2017-12-01 20:25:52 (173 MB/s) - ‘bootvm-2_3_2_155.py’ saved [127771/127771]

Create the /tmp/esc_params.cfg file:

root@VNF2-uas-uas-0:~# echo "openstack.endpoint=publicURL" > /tmp/esc_params.cfg

Run the shell script to deploy the ESC from the UAS node:

root@VNF2-uas-uas-0:~# /bin/sh esc.sh

+ python ./bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689 --net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username core --os_password <PASSWORD> --bs_os_auth_url http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username core --bs_os_password <PASSWORD> --esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --user_pass admin:<PASSWORD> --user_confd_pass admin:<PASSWORD> --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3 172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh --file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py --file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

Log in to the new ESC and verify that it is in the BACKUP state:

ubuntu@VNF2-uas-uas-0:~$ ssh admin@172.168.11.14

…

####################################################################

# ESC on VNF2-esc-esc-1.novalocal is in BACKUP state.

####################################################################

[admin@VNF2-esc-esc-1 ~]$ escadm status

0 ESC status=0 ESC Backup Healthy

[admin@VNF2-esc-esc-1 ~]$ health.sh

============== ESC HA (BACKUP) ===================================================

ESC HEALTH PASSED
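To check these results from saved output instead of by eye, you can parse both outputs. The regular expression below is based only on the single escadm status line shown above, so treat it as an assumption about the format. The helper names are hypothetical.

```python
import re
from typing import Tuple

# Pattern inferred from the sample line "0 ESC status=0 ESC Backup Healthy".
_ESCADM_RE = re.compile(r"ESC status=(\d+)\s+ESC\s+(\w+)\s+(\w+)")


def esc_state(escadm_output: str) -> Tuple[str, bool]:
    """Return (role, healthy) parsed from `escadm status` output."""
    match = _ESCADM_RE.search(escadm_output)
    if match is None:
        raise ValueError("no 'ESC status=' line found")
    code, role, health = match.groups()
    return role.lower(), code == "0" and health == "Healthy"


def health_passed(health_sh_output: str) -> bool:
    """True if health.sh printed its success banner."""
    return "ESC HEALTH PASSED" in health_sh_output
```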

Recover the CF and EM VMs from ESC

Check the status of the CF and EM VMs in the nova list. They must be in the ERROR state:

[stack@director ~]$ source corerc

[stack@director ~]$ nova list --field name,host,status |grep -i err

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | None | ERROR |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | None | ERROR |
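When several VMs are involved, you can filter the nova list output programmatically. This sketch assumes the column order produced by --field name,host,status (ID, Name, Host, Status). It tolerates rows with a missing closing pipe, like one row in the original capture. The function name is hypothetical.

```python
from typing import List, Tuple


def find_error_vms(nova_output: str) -> List[Tuple[str, str]]:
    """Return (id, name) for every table row whose last column is ERROR."""
    vms = []
    for line in nova_output.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip "+----+" rulers and blank lines
        cells = [c.strip() for c in line.strip("|").split("|")]
        if len(cells) >= 2 and cells[-1].upper() == "ERROR":
            vms.append((cells[0], cells[1]))
    return vms
```

The names this returns are the ones you pass to recovery-vm-action on the ESC master in the next step.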

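Log in to the ESC master and run recovery-vm-action for each affected EM and CF VM. Be patient: ESC schedules the recovery action, and it might not run for several minutes. Monitor yangesc.log: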

sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO <VM_NAME>

[admin@VNF2-esc-esc-0 ~]$ sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8

[sudo] password for admin:

Recovery VM Action

/opt/cisco/esc/confd/bin/netconf-console --port=830 --host=127.0.0.1 --user=admin --privKeyFile=/root/.ssh/confd_id_dsa --privKeyType=dsa --rpc=/tmp/esc_nc_cli.ZpRCGiieuW

<?xml version="1.0" encoding="UTF-8"?>

<rpc-reply xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="1">

<ok/>

</rpc-reply>

[admin@VNF2-esc-esc-0 ~]$ tail -f /var/log/esc/yangesc.log

…

14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE

14:59:50,112 07-Nov-2017 WARN Status: SUCCESS

14:59:50,112 07-Nov-2017 WARN Status Code: 200

14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8]
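If you issued recovery-vm-action for several VMs, a scan of yangesc.log can show which ones are still outstanding. This sketch keys only on the "Successfully recovered VM [...]" message shown above. It assumes that message format is stable, and the helper names are hypothetical.

```python
import re
from typing import Iterable, Set

_RECOVERED_RE = re.compile(r"Successfully recovered VM \[([^\]]+)\]")


def recovered_vms(log_text: str) -> Set[str]:
    """Names of VMs that yangesc.log reports as successfully recovered."""
    return set(_RECOVERED_RE.findall(log_text))


def still_pending(expected: Iterable[str], log_text: str) -> Set[str]:
    """VMs for which recovery was requested but no success has been logged yet."""
    return set(expected) - recovered_vms(log_text)
```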

Log in to the new EM and verify that the EM state is up:

ubuntu@VNF2vnfddeploymentem-1:~$ /opt/cisco/ncs/current/bin/ncs_cli -u admin -C

admin connected from 172.17.180.6 using ssh on VNF2vnfddeploymentem-1

admin@scm# show ems

EM VNFM

ID SLA SCM PROXY

---------------------

2 up up up

3 up up up
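You can run the same check on captured output with a small parser. This sketch assumes each data row is "<ID> <SLA> <SCM> <PROXY>", as in the table above. Header and ruler lines are skipped, and the function names are hypothetical.

```python
from typing import Dict, Tuple


def ems_states(show_ems_output: str) -> Dict[str, Tuple[str, ...]]:
    """Map EM ID -> (SLA, SCM, PROXY) states from `show ems` output."""
    states = {}
    for line in show_ems_output.splitlines():
        fields = line.split()
        # Data rows have four fields and start with a numeric EM ID.
        if len(fields) == 4 and fields[0].isdigit():
            states[fields[0]] = tuple(fields[1:])
    return states


def all_ems_up(show_ems_output: str) -> bool:
    """True only if at least one EM is listed and every state is "up"."""
    states = ems_states(show_ems_output)
    return bool(states) and all(s == "up" for row in states.values() for s in row)
```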

Log in to the StarOS VNF and verify that the CF card is in the standby state.

Case 2. OSD-Compute Node Hosts Auto-IT, Auto-Deploy, EM, and UAS

Recovery of Auto-Deploy VM

From OSPD, if the Auto-Deploy VM is affected but still shows ACTIVE/Running, you must first delete it as shown below. If Auto-Deploy is not affected, skip to "Recovery of Auto-IT VM".

[stack@director ~]$ nova list |grep auto-deploy

| 9b55270a-2dcd-4ac1-aba3-bf041733a0c9 | auto-deploy-ISO-2007-uas-0 | ACTIVE | - | Running | mgmt=172.16.181.12, 10.1.2.7 |

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-deploy-booting.sh --floating-ip 10.1.2.7 --delete
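The --floating-ip value must match the address the VM had before deletion. You can read it from the nova list row. This sketch assumes the Networks column has the form "mgmt=<fixed>, <floating>" and that the floating IP comes last, as it does in this example. The function name is hypothetical.

```python
def floating_ip_from_nova_row(row: str) -> str:
    """Return the last address in the Networks column (assumed to be the floating IP)."""
    cells = [c.strip() for c in row.strip().strip("|").split("|")]
    networks = cells[-1]                    # e.g. "mgmt=172.16.181.12, 10.1.2.7"
    addresses = networks.split("=", 1)[-1]  # drop the "mgmt=" network label
    return addresses.split(",")[-1].strip()
```

The same helper applies to the Auto-IT row later in this procedure.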

After Auto-Deploy is deleted, re-create it with the same floating IP address:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-deploy-booting.sh --floating-ip 10.1.2.7

2017-11-17 07:05:03,038 - INFO: Creating AutoDeploy deployment (1 instance(s)) on 'http://10.84.123.4:5000/v2.0' tenant 'core' user 'core', ISO 'default'

2017-11-17 07:05:03,039 - INFO: Loading image 'auto-deploy-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2' from '/opt/cisco/usp/uas-installer/images/usp-uas-1.0.1-1504.qcow2'

2017-11-17 07:05:14,603 - INFO: Loaded image 'auto-deploy-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2'

2017-11-17 07:05:15,787 - INFO: Assigned floating IP '10.1.2.7' to IP '172.16.181.7'

2017-11-17 07:05:15,788 - INFO: Creating instance 'auto-deploy-ISO-5-1-7-2007-uas-0'

2017-11-17 07:05:42,759 - INFO: Created instance 'auto-deploy-ISO-5-1-7-2007-uas-0'

2017-11-17 07:05:42,759 - INFO: Request completed, floating IP: 10.1.2.7

Copy the Autodeploy.cfg file, the ISO, and the confd_backup tar file from the backup server to the Auto-Deploy VM, then restore the confd cdb files from the backup tar file:

ubuntu@auto-deploy-iso-2007-uas-0:~$ sudo -i

root@auto-deploy-iso-2007-uas-0:~# service uas-confd stop

uas-confd stop/waiting

root@auto-deploy-iso-2007-uas-0:# cd /opt/cisco/usp/uas/confd-6.3.1/var/confd

root@auto-deploy-iso-2007-uas-0:/opt/cisco/usp/uas/confd-6.3.1/var/confd# tar xvf /home/ubuntu/ad_cdb_backup.tar

cdb/

cdb/O.cdb

cdb/C.cdb

cdb/aaa_init.xml

cdb/A.cdb

root@auto-deploy-iso-2007-uas-0:~# service uas-confd start

uas-confd start/running, process 2036
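Before you stop uas-confd, you can confirm that the backup tar actually contains the CDB files. This minimal sketch uses Python's tarfile module. The expected member set is copied from the listing in this example restore. It is an assumption, not a documented ConfD requirement, and the helper names are hypothetical.

```python
import tarfile
from typing import Iterable, Set

# Files listed by the example restore above (assumed to be the required set).
EXPECTED_CDB_MEMBERS = {"cdb/A.cdb", "cdb/C.cdb", "cdb/O.cdb", "cdb/aaa_init.xml"}


def missing_cdb_files(member_names: Iterable[str]) -> Set[str]:
    """Return expected CDB members that are absent from the archive listing."""
    return EXPECTED_CDB_MEMBERS - set(member_names)


def check_backup(tar_path: str) -> Set[str]:
    """Open the backup tar (compressed or not) and report missing members."""
    with tarfile.open(tar_path) as tar:
        return missing_cdb_files(tar.getnames())
```

For example, check_backup("/home/ubuntu/ad_cdb_backup.tar") should return an empty set before you run tar xvf.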

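Verify that confd loaded correctly by checking the earlier transactions. Update autodeploy.cfg with the new OSD-Compute name, as described in the final step, "Update Auto-Deploy Configuration":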

root@auto-deploy-iso-2007-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on auto-deploy-iso-2007-uas-0

auto-deploy-iso-2007-uas-0#show transaction

SERVICE SITE

DEPLOYMENT SITE TX AUTOVNF VNF AUTOVNF

TX ID TX TYPE ID DATE AND TIME STATUS ID ID ID ID TX ID

-------------------------------------------------------------------------------------------------------------------------------------

1512571978613 service-deployment tb5bxb 2017-12-06T14:52:59.412+00:00 deployment-success

auto-deploy-iso-2007-uas-0# exit
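You can also run the transaction check on saved CLI output. This sketch assumes the layout shown above, with the TX ID first and the TX TYPE second. It only reports what the restored CDB already records, and the helper names are hypothetical.

```python
from typing import Dict


def deployment_transactions(show_transaction_output: str) -> Dict[str, bool]:
    """Map TX ID -> whether that service-deployment row reports deployment-success."""
    result = {}
    for line in show_transaction_output.splitlines():
        fields = line.split()
        if len(fields) >= 2 and fields[0].isdigit() and fields[1] == "service-deployment":
            result[fields[0]] = "deployment-success" in fields
    return result


def has_successful_deployment(show_transaction_output: str) -> bool:
    """True if the restored CDB contains at least one successful deployment."""
    return any(deployment_transactions(show_transaction_output).values())
```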

Recovery of Auto-IT VM

From OSPD, if the Auto-IT VM is affected but still shows ACTIVE/Running, you must first delete it as shown below. If Auto-IT is not affected, skip to the next VM.

[stack@director ~]$ nova list |grep auto-it

| 580faf80-1d8c-463b-9354-781ea0c0b352 | auto-it-vnf-ISO-2007-uas-0 | ACTIVE | - | Running | mgmt=172.16.181.3, 10.1.2.8 |

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-it-vnf-staging.sh --floating-ip 10.1.2.8 --delete

Run the auto-it-vnf staging script to re-create Auto-IT:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-it-vnf-staging.sh --floating-ip 10.1.2.8

2017-11-16 12:54:31,381 - INFO: Creating StagingServer deployment (1 instance(s)) on 'http://10.84.123.4:5000/v2.0' tenant 'core' user 'core', ISO 'default'

2017-11-16 12:54:31,382 - INFO: Loading image 'auto-it-vnf-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2' from '/opt/cisco/usp/uas-installer/images/usp-uas-1.0.1-1504.qcow2'

2017-11-16 12:54:51,961 - INFO: Loaded image 'auto-it-vnf-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2'

2017-11-16 12:54:53,217 - INFO: Assigned floating IP '10.1.2.8' to IP '172.16.181.9'

2017-11-16 12:54:53,217 - INFO: Creating instance 'auto-it-vnf-ISO-5-1-7-2007-uas-0'

2017-11-16 12:55:20,929 - INFO: Created instance 'auto-it-vnf-ISO-5-1-7-2007-uas-0'

2017-11-16 12:55:20,930 - INFO: Request completed, floating IP: 10.1.2.8
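In this document, auto-deploy-booting.sh and auto-it-vnf-staging.sh follow the same "--floating-ip <ip> [--delete]" pattern. The delete-and-recreate pair can therefore be generated for either script. This sketch only builds the argument lists. The helper names are hypothetical, and run() defaults to a dry run that prints the commands instead of executing them.

```python
import shlex
import subprocess
from typing import List

SCRIPTS_DIR = "/opt/cisco/usp/uas-installer/scripts"


def staging_commands(script: str, floating_ip: str) -> List[List[str]]:
    """Return [delete_argv, recreate_argv] for a UAS staging script."""
    base = [f"{SCRIPTS_DIR}/{script}", "--floating-ip", floating_ip]
    return [base + ["--delete"], base]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print the commands, or execute them in order and stop on the first failure."""
    for argv in commands:
        if dry_run:
            print(shlex.join(argv))
        else:
            subprocess.run(argv, check=True)
```

Keeping the delete step first matches the order used above. The re-created VM must reuse the same floating IP so that later steps, such as the ISO upload to 10.1.2.8, still reach it.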

Reload the ISO image. In this example, the Auto-IT IP address is 10.1.2.8. The upload takes a few minutes:

[stack@director ~]$ cd images/5_1_7-2007/isos

[stack@director isos]$ curl -F file=@usp-5_1_7-2007.iso http://10.1.2.8:5001/isos

{

"iso-id": "5.1.7-2007"

}

To verify the ISO image:

[stack@director isos]$ curl http://10.1.2.8:5001/isos

{

"isos": [

{

"iso-id": "5.1.7-2007"

}

]

}
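You can also query the /isos endpoint used with curl above from Python with only the standard library. The sketch assumes the JSON shape shown in this example ({"isos": [{"iso-id": ...}]}). Port 5001 comes from the curl commands, and the helper names are hypothetical.

```python
import json
import urllib.request
from typing import List


def iso_ids(isos_json: str) -> List[str]:
    """Extract the iso-id values from a GET /isos response body."""
    data = json.loads(isos_json)
    return [entry["iso-id"] for entry in data.get("isos", [])]


def fetch_isos(auto_it_ip: str, port: int = 5001, timeout: float = 30.0) -> str:
    """Return the raw JSON body of GET http://<auto_it_ip>:<port>/isos."""
    url = f"http://{auto_it_ip}:{port}/isos"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

For example, "5.1.7-2007" in iso_ids(fetch_isos("10.1.2.8")) should be true once the upload has finished.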

Copy the VNF system.cfg files from the OSPD autodeploy directory to the Auto-IT VM:

[stack@director autodeploy]$ scp system-vnf* ubuntu@10.1.2.8:.

ubuntu@10.1.2.8's password:

system-vnf1.cfg 100% 1197 1.2KB/s 00:00

system-vnf2.cfg 100% 1197 1.2KB/s 00:00

ubuntu@auto-it-vnf-iso-2007-uas-0:~$ pwd

/home/ubuntu

ubuntu@auto-it-vnf-iso-2007-uas-0:~$ ls

system-vnf1.cfg system-vnf2.cfg

Note: The recovery procedure for the EM and UAS VMs is the same in both cases. Refer to the Case 1 section.

Handle ESC Recovery Failures

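If ESC fails to start a VM because of an unexpected state, Cisco recommends that you perform an ESC switchover by rebooting the master ESC. The switchover takes about one minute. Run health.sh on the new master ESC to check that its status is up. The new master ESC then starts the VM and fixes the VM state. This recovery task can take up to five minutes to complete.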

You can monitor /var/log/esc/yangesc.log and /var/log/esc/escmanager.log. If the VM is still not recovered after 5 to 7 minutes, you need to recover the affected VM manually.
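The 5 to 7 minute window above can be turned into a bounded poll. In this minimal sketch, you supply your own is_recovered callable, for example one that rereads yangesc.log or nova list. The function name and the 30-second interval are hypothetical choices. The 420-second default is simply the upper end of that window.

```python
import time
from typing import Callable


def wait_for_recovery(is_recovered: Callable[[], bool],
                      timeout_s: int = 420,
                      interval_s: int = 30,
                      sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll is_recovered() until it returns True or the attempt budget runs out."""
    attempts = max(1, timeout_s // interval_s)
    for attempt in range(attempts):
        if is_recovered():
            return True
        if attempt < attempts - 1:
            sleep(interval_s)  # no sleep after the final check
    return False
```

A False result means the procedure above calls for manual recovery of the affected VM. The sleep parameter exists only so the loop can be exercised without actually waiting.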

Update Auto-Deploy Configuration

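On the Auto-Deploy VM, edit autodeploy.cfg and replace the old OSD-Compute server with the new OSD-Compute server. Then load the replacement in confd_cli. This step is required for a successful deactivation of the deployment later.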

root@auto-deploy-iso-2007-uas-0:/home/ubuntu# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on auto-deploy-iso-2007-uas-0

auto-deploy-iso-2007-uas-0#config

Entering configuration mode terminal

auto-deploy-iso-2007-uas-0(config)#load replace autodeploy.cfg

Loading. 14.63 KiB parsed in 0.42 sec (34.16 KiB/sec)

auto-deploy-iso-2007-uas-0(config)#commit

Commit complete.

auto-deploy-iso-2007-uas-0(config)#end
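You could script the hostname swap in autodeploy.cfg before you run load replace. This sketch assumes the file refers to the OSD-Compute node by its plain hostname. The node names in the test, pod1-osd-compute-3 in particular, are hypothetical. Matching on whole hostnames avoids rewriting a longer name such as pod1-osd-compute-01 by accident.

```python
import re
from typing import Tuple


def replace_host(cfg_text: str, old: str, new: str) -> Tuple[str, int]:
    """Replace whole-hostname occurrences of old with new; return (text, count)."""
    # Refuse matches glued to other hostname characters on either side.
    pattern = re.compile(rf"(?<![\w-]){re.escape(old)}(?![\w-])")
    return pattern.subn(lambda _m: new, cfg_text)
```

A count of 0 usually means the old name is spelled differently in the file, so check the file before you commit in confd_cli.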

After you change the configuration, restart the uas-confd and autodeploy services:

root@auto-deploy-iso-2007-uas-0:~# service uas-confd restart

uas-confd stop/waiting

uas-confd start/running, process 14078

root@auto-deploy-iso-2007-uas-0:~# service uas-confd status

uas-confd start/running, process 14078

root@auto-deploy-iso-2007-uas-0:~# service autodeploy restart

autodeploy stop/waiting

autodeploy start/running, process 14017

root@auto-deploy-iso-2007-uas-0:~# service autodeploy status

autodeploy start/running, process 14017
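To script these status checks, you can parse the Upstart-style status line ("job goal/state, process PID") as in this sketch. The format is inferred from the output shown here, and the helper names are hypothetical.

```python
import re
from typing import Optional, Tuple

_STATUS_RE = re.compile(r"^(\S+) (\w+)/(\w+)(?:, process (\d+))?$")


def parse_status(line: str) -> Tuple[str, str, str, Optional[int]]:
    """Split a status line into (job, goal, state, pid or None)."""
    match = _STATUS_RE.match(line.strip())
    if match is None:
        raise ValueError(f"unrecognized status line: {line!r}")
    name, goal, state, pid = match.groups()
    return name, goal, state, (int(pid) if pid else None)


def is_running(line: str) -> bool:
    """True when the job's goal is start and its state is running."""
    _, goal, state, _ = parse_status(line)
    return goal == "start" and state == "running"
```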

Enable Syslog

To enable syslog for the UCS servers, the OpenStack components, and the recovered VMs, follow the sections "Re-Enable syslog for UCS and Openstack components" and "Enable syslog for the VNFs" in the link below:

Revision History

| Version | Publish Date | Comments |
|---|---|---|
| 1.0 | 10-Jul-2018 | Initial Release |

Contributed by Cisco Engineers

- Partheeban Rajagopal, Cisco Advanced Services

- Padmaraj Ramanujam, Cisco Advanced Services
