HX Disaster Recovery Overview

HyperFlex DR enables the protection of virtual machines from disaster by configuring the replication of running VMs between a pair of network connected clusters. Protected virtual machines running on one cluster replicate to the other cluster in the pair, and vice versa. The two paired clusters typically are located at a distance from each other, with each cluster serving as the disaster recovery site for virtual machines running on the other cluster.

Once protection is configured on a VM, the HX Data Platform periodically takes a Data Protection (DP) Snapshot of the running VM on the local cluster and replicates (copies) the DP snapshot to the paired remote cluster. In the event of a disaster at the local cluster, the most recently replicated snapshot of each protected VM can be recovered on the remote cluster. Each cluster that serves as a disaster recovery site for another cluster must be sized with adequate spare resources so that upon a disaster, it can run the newly recovered VMs in addition to its normal workload.

Note |

Only the most recently replicated DP snapshot is retained on the destination cluster. Retaining additional DP snapshots is not supported. |

Each VM is individually protected by assigning it protection attributes, including the replication interval (schedule). The shorter the replication interval, the fresher the replicated snapshot data is likely to be. DP snapshot intervals can range from once every 5 minutes to once every 24 hours.

A protection group is a group of VMs that have a common DP snapshot schedule , quiescence parameter value, and a common start time.

Setting up DP snapshots requires two existing clusters running a currently supported HX Data Platform Software Release. Both clusters must be on the same HX Data Platform version. Use HyperFlex Connect to complete the setup.

First, set up a local replication network for each cluster. Use HX Connect to provide a set of IP addresses to be used by local cluster nodes to replicate to the remote cluster. HX Connect creates VLANs through UCS Manager, for dedicated local replication network use.

Note |

When this option is chosen in HX Connect, UCSM is configured only when both UCS Manager and fabric interconnect are associated with the HyperFlex cluster. When UCSM and FI are not present, you must enter the VLAN ID, and not select UCSM configuration in HX Connect. |

The two clusters, and their corresponding existing relevant datastores must be explicitly paired. The pairing setup can be completed using HX Connect from one of the two clusters. This requires administrative credentials of the other cluster.

Virtual machines can be protected (or have their existing protection attributes modified) by using HX Connect at the cluster where they are currently active.

HX Connect can monitor the status of both incoming and outgoing replication activity on a cluster.

After a disaster, a protected VM can be recovered and run on the cluster that serves as the DP snapshot recovery site for that VM.

Replication and Disaster Recovery Requirements and Considerations

Note |

Documentation for the N:1 DR for HyperFlex feature is located in the Intersight Help Center. The URL is https://www.intersight.com/help/saas/resources/replication_for_cisco_hyperFlex_clusters. |

The following is a list of requirements and considerations when configuring virtual machine replication and performing disaster recovery of virtual machines:

-

Datastore Unmapped Behavior:

HXDP Release 5.5(2a) and HXDP Release 6.0(x) and later: Unmap Other DRO datastore pair is supported when VMs are in an Active (protected) state on either of the site. VMs in a state other than Active (for example Recovered, Recovery_Failed, and Migrate_Failed) need to be moved to the Active (Protected) state for unmap other DRO datastore pair support.

-

Other DRO Datastore Delete Operations:

The following guidelines apply to HXDP Release 5.5(2a) and HXDP Release 6.0(x) and later:

-

Delete operations are supported after upgrading to HXDP Release 6.0(1a).

-

Adding and editing schedules is not supported.

-

Datastore map and unmap is not supported if any DRO datastore unmap happens in parallel.

-

-

All Protected VMs Tab Usage: Do not to perform any DR actions available from All Protected VMs tab for SRM (OtherDRO) VMs.

-

Post HXDP Release 5.5(2a) and HXDP Release 6.0(x) and later Upgrade Action:

When HyperFlex creates a SRA/SRM pair and protects VMs, SRM creates placeholder VMs on opposite site with same name to reserve the resources for the VM during migration and recovery. Cisco recommends that after upgrading to HXDP 6.0(1a) you delete the placeholder VMs. Failing to complete this action will result in DR workflows failing due to the VM with same name exists on the opposite site.

Caution |

Operations via SRM: Even though VMs in SRM the environment are available for performing Planned Migration or Disaster Recovery. Cisco does not recommend users perform any kind of operations via SRM. |

Admin Role Requirements

You can perform all replication and recovery tasks with administrator privileges on the local cluster. For tasks involving a remote cluster, both the local and remote user must have administrative privileges. You can configure administrative privileges with vCenter SSO on the respective clusters.

Networking Requirements

The replication network should be reliable and have a sustained minimum symmetric bandwidth that is the same as the bandwidth configured in the HyperFlex replication network. Do not share the network with any other applications or traffic on an uplink or downlink. Other requirements are as follows:

|

Requirement |

Description |

||

|---|---|---|---|

|

Minimum and Recommended Bandwidth |

The minimum supported bandwidth is 10 Mbps. Recommended bandwidth is half of the network link bandwidth available for replication. For example, if the network link bandwidth available is 100 Mbps, you should configure the replication bandwidth to be 50 Mbps. |

||

|

Adaptive Bandwidth Control |

Replication network variability may cause network bandwidth to vary and may include the introduction of network errors. Adaptive Bandwidth Control for replication will dynamically adjust the replication speed to scale down if errors are detected and scale up to the configured replication bandwidth limit when the errors are cleared.

|

||

|

Measuring Available Replication Network Bandwidth |

You can measure the bandwidth of a HyperFlex replication network between two sites by using the iperf utility. In preparation for using the iperf utility, configure the local replication networks on both HyperFlex clusters. After you have configured the local replication networks, you can then pair the HyperFlex clusters. Once you have paired the HyperFlex clusters, map one of the local datastores to a datastore on the remote HyperFlex cluster.

|

||

|

|||

|

Measuring Available Replication Network Bandwidth (continued) |

|

||

|

Maximum Latency |

The maximum replication network latency supported is 75 ms between two paired clusters. There are conditions where it is possible that some replication jobs will encounter error conditions and fail. For example, this may occur when multiple replication jobs execute simultaneously with low network bandwidth and high latency. If this situation occurs, increase the replication network bandwidth, or reduce job concurrency by staggering the number of concurrent replication jobs. If this situation persists, VM unprotect operations may take longer than expected. Measuring Replication Network Latency You can measure the average replication network latency by running a ping command on any of the storage controller VMs on site A and site B. From site A, execute ping command as performed in the following example:

The average latency should be 75 ms or less.

|

||

|

Network Ports |

The comprehensive list of ports required for HyperFlex component communication is located in appendix A of the HX Data Platform Security Hardening Guide. The port/protocl requirements (as of Version 4.5.2a rev 3 dated September 2021) for HyperFlex replication are: ICMP, TCP: 9338, 9339, 3049, 4049, 4059, 9098, 8889, and 9350. Testing Network Ports Internal to the HyperFlex cluster, firewall entries are made on the source and destination storage controller VMs during the site pairing operation to allow the HX data platform access to the systems bi-directionally. You need to allow this traffic on WAN routers for each HX node replication IP address and management CIP address. When you configure the local replication network on a HyperFlex cluster, you can manually perform a Test Local Replication Network action to test connectivity across the replication IP addresses of each storage controller VM on the local cluster. This test includes port connectivity and firewall checks. When the two clusters have been paired, you can manually perform a Test Remote Replication Network action to test connectivity between each local storage controller VM and each remote storage controller VM. Port connectivity and firewall checks are performed. You can also use the Linux “netcat” utility as an additional option to check port connectivity. |

||

|

Network Loss |

Reliable transmission of data enables replication between two paired clusters to function optimally. Packet loss in data transmission between two paired clusters may degrade replication performance. Diagnosing Dropped Packets There are two cases where packet loss may occur - network congestion and transient network device errors. If dropped packets occur on a replication network due to network congestion the HyperFlex cluster replication engine automatically throttles back replication bandwidth. Throttling replication network bandwidth reduces network congestion and results in the reduction of dropped packets. In extreme cases, replication bandwidth throttling may result in replication jobs taking longer to complete than anticipated. Dropped packets that occur on a replication network due to transient network device errors may cause replication failures that occur randomly or at specific times of the day. Dropped packets are not reported in the HX Connect user interface. Occurrences of packet drop are logged in the HyperFlex storage controller logs. Users that experience noticeable replication job elongation or other failures can contact support for further assistance. |

Cluster Requirements

When configuring virtual machine replication and performing disaster recovery of virtual machines, please ensure that the following cluster requirements are met:

Storage Space Requirements

Ensure that there is sufficient space on both clusters to accommodate the retention and processing of replicated DP snapshots. Each protected VM will result in the creation and subsequent replication of a DP snapshot based on the configured schedule interval. The most recent successfully replicated DP snapshot is retained on the destination HyperFlex cluster. Note that for every protected VM, there is a maximum of two DP snapshots present on the source cluster and two DP snapshots present on the destination cluster. This approach facilitates efficient difference-based replication and also assures that the most recent successfully replicated DP snapshot is available for recovery in the event that a newer DP snapshot fails to successfully complete the replication process. Although storage capacity reduction methods are applied, including deduplication and compression, each replicated virtual machine consumes some storage space.

-

Space Consumed by Protected VMs with Redolog Snapshots—When protecting a VM that also has VMware redolog snapshots, the entire content of the VM is replicated. The entire content includes the VM as well as any retained VMware redolog snapshot(s). This results in increased storage space utilization on both of the paired HyperFlex clusters. When a greater number of redolog snapshots are retained, storage space consumption will also increase.

-

Space Consumed by Protected VMs with HX Native Snapshots—When protecting a VM that also has HX native snapshots, only the latest VM data is replicated. Retained HX native snapshot data is not replicated. Typically, there is no need to account for space consumed by HX native snapshots on a replication destination HyperFlex cluster.

-

Space Consumed by Deleted VMs—Deleting a protected VM will not cause space to be reclaimed on the paired HyperFlex cluster datastore. The most recent successfully replicated DP snapshot will be retained to protect the VM from accidental deletion. In order to reclaim space consumed by protected VMs, the VMs must first be unprotected. When a VM is unprotected, the associated DP snapshots are deleted on both paired HyperFlex clusters.

-

Space Consumption Calculations—The amount of predicted space consumption in addition to the size of a protected VM can be expressed as:

VM change rate times the number of DP snapshots retained

The number of DP snapshots retained equals two (2). When a protected VM has VMware redolog snapshots the calculation will be skewed based on the number of retained snapshots.

Space calculations should also consider that when a protected VM fails over or is migrated to the paired site, the calculations for the source and target can be reversed.

-

Difference Based Replication and Full Copy Replication—In a typical replication data protection lifecycle, a full copy of a protected VM is replicated in the form of a DP snapshot only once. This full copy replication job occurs when a VM is initially protected. After the initial replication job completes, subsequent replication jobs take advantage of efficient differencing-based technology to replicate only new and changed data.

You cannot use difference-based technology in the following known corner cases:

-

A protected VM also has HX native snapshots. If the VM is reverted back to a retained HX native snapshot, the next scheduled protection job will perform a full copy replication job instead of a difference-based replication job. An additional full copy worth of space needs to be budgeted on both of the paired clusters.

-

A protected VM undergoes storage vMotion and is migrated to a different datastore. If the destination datastore is mapped to a datastore on the paired cluster, the next scheduled protection job will perform a full copy replication job instead of a difference-based replication job. An additional full copy worth of space needs to be budgeted on both of the paired clusters.

-

A protected VM has a DP snapshot that was taken in conjunction with a replication job. Subsequent to this, an initial HX native snapshot is created that also creates an HX Sentinel snapshot. The next scheduled protection job will perform a full copy replication job instead of a difference-based replication job. An additional full copy worth of space needs to be budgeted on both of the paired clusters.

-

When a protected VM DP snapshot is taken during an HX native snapshot workflow that created intermediate delta disks, the next scheduled protection job will perform a full copy replication job instead of a difference-based replication job. An additional full copy worth of space needs to be budgeted on both of the paired clusters.

-

When a new VMDK is added to an already protected VM, that specific VMDK will be full-copied once.

Not having sufficient storage space on the remote cluster can cause the remote cluster to reach capacity usage maximums. If you note any Out of Space errors, refer to Handling Out of Space Errors for more information. Pause all replication schedules until space available on the cluster has been properly adjusted. Always ensure that cluster capacity consumption is below the space utilization warning threshold.

-

Supported Configurations

Supported configurations for native replication (NRDR 1:1) are: 2N/3N/4N Edge, FI, and DC-no-FI based clusters to 2N/3N/4N Edge, FI, and DC-no-FI based clusters, including stretched clusters, all managed through HX Connect.

HyperFlex hardware acceleration cards (PID: HX-PCIE-OFFLOAD-1) are supported with native replication beginning with HX 4.5(1a). You must enable HX Hardware Acceleration on both of the paired HyperFlex clusters.

Rebooting Nodes

Do not reboot any nodes in an HX cluster during a restore, replication, or recovery operation. Note that node reboot operation may occur as part of an upgrade process. You should pause the replication scheduler prior to an upgrade, and then resume it after the upgrade has completed.

Replication Network and Pairing Requirements

You must establish a replication network between HyperFlex clusters that uses replication for Data Protection (DP) snapshots. The replication network is created to isolate inter-cluster replication traffic from other traffic within each cluster and site. Please also consider the following:

|

Component |

Description |

|---|---|

|

HX Data Platform Version |

Ensure that the HyperFlex clusters that are going to be paired for replication are running the same HX data platform software version. Note that the use of different HX data platform versions is only supported during HX data platform upgrades. In this scenario, one of the paired HyperFlex clusters may be running a different version of HX data platform software for the period of time until both of the paired clusters have been upgraded. Ensure that you upgrade both of the paired clusters to the same HX data platform version within the shortest possible time frame based on site specific constraints. Also note that a maximum of one major HX data platform release version difference is permitted when upgrading paired clusters. Additionally, the changing of any replication configuration parameter is not supported when the paired clusters are not both running the same HX data platform version during an upgrade. |

|

Node Status |

Ensure that all HyperFlex cluster nodes are online and fully operational prior to the creation of the local replication networks and performing the site pairing process. |

|

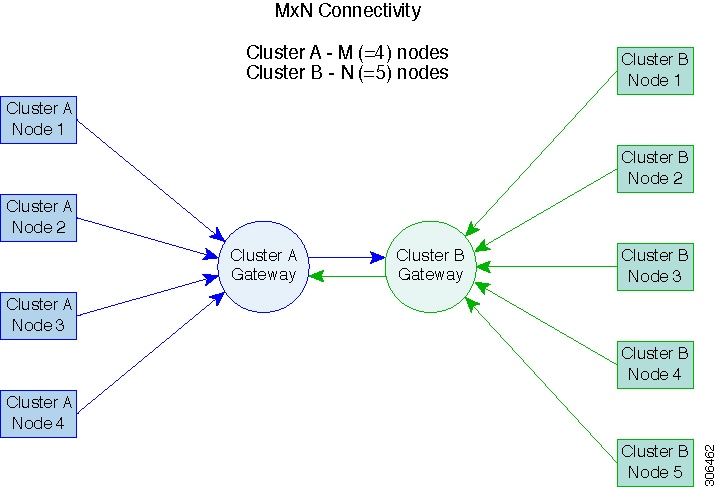

Node Communication Requirements |

Requirements are as follows:

For more information, see the graphic below. |

|

M*N Connectivity Between Clusters

|

|

|

Node Failure |

In the highly unlikely and rare event of a node failure, there may be an impact to replication. As an example, replication jobs in progress will stop if the node which has the replication CIP address enters an inoperative state. At the point in time when the replication CIP address is claimed by another node in the cluster, the replication job will automatically resume. Similarly, if a recovery job was running on the node with replication CIP address and the node failed, the job would fail. The replication CIP address would subsequently be claimed by another node in the cluster. Retry the operation upon noting the failure. |

|

vCenter Recommendations |

Ensure that each of the two paired HyperFlex clusters is managed by a unique vCenter instance. Also ensure that vCenter is deployed in a different fault domain for availability during disaster recovery scenarios. |

Replication and Disaster Recovery Virtual Machine Considerations

The following are considerations for VMs:

|

Consideration |

Description |

||

|---|---|---|---|

|

Thin Provisioning |

Protected VMs are recovered with thin provisioned disks irrespective of how disks were specified in the originally protected VM. |

||

|

VM Device Limitations |

Do not protect VMs with connected ISO images or floppies as individually protected VMs, or within a protection group. You can set any configured CD or DVD drive to “Client Device” with the “Connected” state disabled. There is no need to delete the device from the VM configuration. If there is a need to temporarily mount an ISO image, you can unprotect the VM and then protect it again once you have set the CD or DVD drive to “Client Device” and then disconnected. |

||

|

Protected Virtual Machine Scalability |

Beginning with HX Release 4.5(1a):

|

||

|

Non-HX Datastores |

Periodical replication fails on a protected a VM with storage on a non-HX datastore. To avoid the failure, unprotect the VM or remove non-HX storage from the VM. Do not move protected VMs from HX datastores to non-HX datastores. If a VM is moved to a non-HX datastore through storage vMotion, unprotect the VM before using vMotion. |

||

|

Templates |

Templates are not supported with disaster recovery. Do not attempt to protect a template. |

||

|

Recovery of Virtual Machines with Snapshots |

When recovering a protected VM that has VMware redolog snapshots, the VM is recovered and all previous snapshots redolog snapshots are preserved. | ||

|

Data Protection Snapshots |

Replicated DP snapshots are stored on the mapped datastore on the paired cluster. You should not perform a manual deletion of DP snapshots as this is not supported. Deleting snapshot directories or individual files will compromise HX data protection and disaster recovery.

|

||

|

VMware SRM – Purposeful VM Deletion |

Supported through HXDP Release 5.5(1a). Not supported in HXDP Release 5.5(2x). If a VM is deleted from VMware vCenter and the VM is located in an “Other DRO” datastore pair, the SRM recovery plan for this datastore pair will fail during recovery. To avoid this scenario, first unprotect the VM using the following command on one of the HyperFlex storage controller VMs: stcli dp vm delete --vmid <VM_ID> |

||

|

VMware SRM – Purposeful VM Deletion |

Not supported in HXDP Release 5.5(2x) and later. |

||

|

VM Naming |

If a protected VM is renamed within vCenter, HyperFlex recovers at the previous name folder but registers the VM with the new name on the recovery side cluster. Following are some of the limitations to this situation:

|

||

|

HyperFlex Software Encryption |

Software encryption must be enabled on clusters in both paired datastores to be able to protect VMs on encrypted datastores. |

Storage Replication Adapter Overview

Note |

The Storage Replication Adapter Feature is not supported in HXDP 5.5(2a) and later. |

Storage Replication Adapter (SRA) for VMware vCenter Site Recovery Manager (SRM) is a storage vendor-specific plug-in for VMware vCenter server. The adapter enables communication between SRM and a storage controller at the Storage Virtual Machine (SVM) level as well as at the cluster level configuration. The adapter interacts with the SVM to discover replicated datastores.

For more information on installation and configuration of SRM, refer the following links as per the SRM release version:

-

SRM 8.1 installation—https://docs.vmware.com/en/Site-Recovery-Manager/8.1/srm-install-config-8-1.pdf

-

SRM 6.5 installation—https://docs.vmware.com/en/Site-Recovery-Manager/6.5/srm-install-config-6-5.pdf

-

SRM 6.0 installation—https://docs.vmware.com/en/Site-Recovery-Manager/6.0/srm-install-config-6-0.pdf

You must install an appropriate SRA on the Site Recovery Manager Server hosts at both the protected and recovery sites. If you use more than one type of storage array, you must install the SRA for each type of array on both of the Site Recovery Manager Server hosts.

Before installing an SRA, ensure that SRM and JDK 8 or above version are installed on Windows machines at the protected and recovery sites.

To install an SRA, do the following:

-

Download SRA from the VMware site.

In the https://my.vmware.com/web/vmware/downloads page, locate VMware Site Recovery Manager and click Download Product. Click Drivers & Tools, expand Storage Replication Adapters, and click Go to Downloads.

-

Copy the Windows installer of SRA to SRM Windows machines at both the protected and recovery sites.

-

Double-click the installer.

-

Click Next on the Welcome page of the installer.

-

Accept the EULA and click Next.

-

Click Finish.

Note |

The SRA is installed within the SRM program folder: C:\Program Files\VMware\VMware vCenter Site Recovery Manager\storage\sra |

After SRA installation, refer the following guide as per the SRM release version and do the SRM environment setup:

-

SRM 8.1 configuration—https://docs.vmware.com/en/Site-Recovery-Manager/8.1/srm-admin-8-1.pdf

-

SRM 6.5 configuration—https://docs.vmware.com/en/Site-Recovery-Manager/6.5/srm-admin-6-5.pdf

-

SRM 6.0 configuration—https://docs.vmware.com/en/Site-Recovery-Manager/6.0/srm-admin-6-0.pdf

After configuration, SRM works with SRA to discover arrays and replicated and exported datastores, and to fail over or test failover datastores.

SRA enables SRM to execute the following workflows:

-

Discovery of replicated storage

-

Non-disruptive failover test recovery using a writable copy of replicated data

-

Emergency or planned failover recovery

-

Reverse replication after failover as part of failback

-

Restore replication after failover as part of a production test

Data Protection Terms

Interval―Part of the replication schedule configuration, used to enforce how often the protected VMs DP snapshot must be taken and copied to the target cluster.

Local cluster―The cluster you are currently logged into through HX Connect, in a VM replication cluster pair. From the local cluster, you can configure replication protection for locally resident VMs. The VMs are then replicated to the paired remote cluster.

Migration―A routine system maintenance and management task where a recent replication DP snapshot copy of the VM becomes the working VM. The replication pair of source and target cluster do not change.

Primary cluster―An alternative name for the source cluster in VM disaster recovery.

Protected virtual machine― A VM that has replication configured. The protected VMs reside in a datastore on the local cluster of a replication pair. Protected VMs have a replication schedule configured either individually or by inclusion in a protection group.

Protection group―A means to apply the same replication configuration to a group of VMs.

Recovery process―The manual process to recover protected VMs in the event the source cluster fails or a disaster occurs.

Recovery test―A maintenance task that ensures the recovery process will be successful in the event of a disaster.

Remote cluster―One of a VM replication cluster pair. The remote cluster receives the replication snapshots from the Protected VMs in the local cluster.

Replication pair―Two clusters that together provide a remote cluster location for storing the replicated DP snapshots of local cluster VMs.

Clusters in a replication pair can be both a remote and local cluster. Both clusters in a replication pair can have resident VMs. Each cluster is local to its resident VMs. Each cluster is remote to the VMs that reside on the paired local cluster.

DP snapshot―Part of the replication protection mechanism. A type of snapshot taken of a protected VM, which is replicated from the local cluster to the remote cluster.

Secondary cluster―An alternative name for the target cluster in VM disaster recovery.

Source cluster―One of a VM replication cluster pair. The source cluster is where the protected VMs reside.

Target cluster―One of a VM replication cluster pair. The target cluster receives the replicated DP snapshots from the VMs of the source cluster. The target cluster is used to recover the VMs in the event of a disaster on the source cluster.

Best Practices for Data Protection and Disaster Recovery

The requirement for an effective data protection and disaster recovery strategy based on the environment being protected cannot be overstated. The solution should be designed and deployed to meet or exceed the business requirements for both Recovery Point Objectives (RPO) and Recovery Time Objectives (RTO) of the production VMs. The following are some of the points that must be considered when designing this strategy:

-

The number of Service Level Agreements (SLA) necessary to comply with various categories of production workloads that may include mission critical, business critical, and important VMs.

-

Detailed constructs of each SLA that may include RPO, RTO, the number or recovery points retained, requirements for off-site copies of data, and any requirements for storing backup copies on different media types. There may be additional requirements that include the ability to recover to a different environment such as a different location, different hypervisor or different private/public cloud.

-

An ongoing testing strategy for each SLA which serves to prove that the solution meets the business requirements it was designed for.

Note that backups and backup copies must be stored external to the HyperFlex cluster being protected. For example, backups performed to protect VMs on a HyperFlex cluster should not be saved to a backup repository or a disk library that is hosted on the same HyperFlex cluster.

The built-in HyperFlex data protection capabilities are generalized into the following categories:

-

Data Replication Factor—Refers to the number of redundant copies of data within a HyperFlex cluster. A data replication factor of 2 or 3 can be configured during data platform installation and cannot be changed. The data replication factor benefit is that it contributes to the number of cluster tolerated failures. See the section titled, HX Data Platform Cluster Tolerated Failures for additional information about the data replication factor.

Note

Data Replication Factor alone may not fulfill requirements for recovery in the highly unlikely event of a cluster failure, or an extended site outage. Also, the data replication factor does not facilitate point-in-time recovery, retention of multiple recovery points, or creation of point-in-time copies of data external to the cluster.

-

HX Native Snapshots—Operates on an individual VM basis and enables saving versions of a VM over time. A maximum of 31 total snapshots, including the HX SENTINEL snapshot, can be retained.

Note

HX native snapshots alone may not fulfill requirements for recovery in the unlikely event of a cluster failure, or an extended site outage. Also, HX native snapshots do not facilitate the ability to create point-in-time copies of data external to the cluster. More importantly, unintentional deletion of a VM also deletes any HX native snapshots associated with the deleted VM.

-

Asynchronous Replication—Also known as The HX Data Platform disaster recovery feature, it enables protection of virtual machines by replicating virtual machine DP snapshots between a pair of network connected HyperFlex clusters. Protected virtual machines running on one cluster replicate to the other cluster in the pair, and vice versa. The two paired clusters typically are located at a distance from each other, with each cluster serving as the disaster recovery site for virtual machines running on the other cluster.

Note

Asynchronous Replication alone may not fulfill requirements for recovery when multiple point-in-time copies need to be retained on the remote cluster. Only the most recent snapshot replica for a given VM is retained on the remote cluster. Also, asynchronous replication does not facilitate the ability to create point-in-time copies of data external to either cluster.

It is recommended to first understand the unique business requirements of the environment and then deploy a comprehensive data protection and disaster recovery solution to meet or exceed those requirements.

Feedback

Feedback