Cisco Data Intelligence Platform on Cisco UCS C240 M5 with Cloudera Data Platform Running Apache Ozone


Deployment Guide for Cisco Data Intelligence Platform on Cisco UCS C240 M5 with Cloudera Data Platform Private Cloud Base 7.1.5 Running Apache Ozone

Published: May 2021

In partnership with:

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries. (LDW_UP).

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2021 Cisco Systems, Inc. All rights reserved.

Deployment Hardware and Software

Cloudera Data Platform (CDP) Private Cloud is built for cloud-native speed, scale, and economics for the connected data lifecycle. CDP is faster than traditional on-premise data management solutions and cloud services, and responds faster to changing business requirements, providing a quicker adoption of innovation.

CDP Private Cloud Base is built on the Hadoop 3.x distribution. Hadoop has gained many capabilities since its inception, and Hadoop 3.0 was an eagerly awaited major release with many new features and optimizations. Upgrading from Hadoop 2.x to 3.0 is a paradigm shift because it enables diverse computing resources, such as CPU, GPU, and FPGA, to work on data and leverage AI/ML methodologies. It supports flexible and elastic containerized workloads managed either by a Hadoop scheduler such as YARN or by Kubernetes, distributed deep learning, GPU-enabled Spark workloads, and so on. Hadoop 3.0 also offers better reliability and availability of metadata through multiple standby name nodes, disk balancing for evenly utilized data nodes, enhanced workload scheduling with YARN, and overall improved operational efficiency.

Apache Ozone 1.0 was released as a part of CDP Private Cloud Base 7.1.4 and it provides an optimized and shared storage compute infrastructure across the entire data lifecycle, increasing efficiency and lowering cost by reducing compute infrastructure requirements for analytics.

Apache Ozone provides the foundation for the next generation of storage architecture for Hadoop, where data blocks are organized in storage containers for higher scale and better handling of small objects. This is a major architectural change from how HDFS manages storage, and it removes limitations of HDFS while supporting features such as:

● Object store for Hadoop that supports both the HDFS API and the S3 API, allowing for several new use cases

● Supports tens of billions of files

● Supports 400 TB per server, with potential support for 1 PB per server in the future

● Supports 16TB drives (HDD/Flash), with potential to support larger drives in the future

● Continues to support data locality and separation of storage and compute, like HDFS

In this reference architecture, Cisco Data Intelligence Platform (CDIP) is designed to support data-intensive workloads with Cloudera Data Platform Private Cloud Base and storage-dense nodes with Apache Ozone.

This CVD is based on Cisco Data Intelligence Platform on Cisco UCS C240 M5 Rack Servers with Cloudera Data Platform Private Cloud Base (CDP PvC) 7.1.5, with Apache Ozone as the distributed file system for CDP on-premises. Cisco UCS C240 M5 Rack Servers deliver highly dense, cost-optimized, on-premises storage with broad infrastructure flexibility for object storage, Hadoop, and Big Data analytics solutions.

This CVD offers customers the ability to consolidate their data lake further with larger storage per data node with Apache Ozone helping with the following savings:

● Infrastructure cost

● Software licensing cost

● Smaller datacenter footprint

● Support for HDFS and S3 and billions of objects supporting both large and small files in a similar fashion.

CDIP with Cloudera Data Platform enables the customer to independently scale storage and computing resources as needed while offering an exabyte scale architecture with low total cost of ownership (TCO) and future-proof architecture with the latest technology offered by Cloudera.

Furthermore, CDIP offers a single pane of glass management with Cisco Intersight.

Big Data and machine learning have progressed to the point where they are being implemented in production systems running 24x7. There exists a very clear need for a proven, dependable, high-performance platform for the ingestion, processing, storage, and analysis of data, as well as the seamless dissemination of the output, results, and insights of the analysis.

This solution implements Cloudera Data Platform Private Cloud Base (CDP PvC Base) with Apache Ozone on Cisco Data Intelligence Platform (CDIP) architecture, a world-class platform specifically designed for demanding workloads that is both easy to scale and easy to manage, even as the requirements grow to thousands of servers and petabytes of storage.

Many companies, recognizing the immense potential of big data and machine learning technology, are gearing up to leverage these new capabilities, building out departments and increasing hiring. However, these efforts have a new set of challenges:

● Making data available to the diverse set of users (data engineers, analysts, data scientists) who need it

● Managing and processing exabyte-scale storage

● Supporting objects (S3) and a variety of data and file types

● Reducing data center footprint through better consolidation of storage

● Enabling access to high-performance computing resources, such as GPUs, that also scale with data growth

● Allowing people to work with the data using the environments with which they are familiar

● Enabling the automated production of those results

● Managing the data for compliance and governance

● Scaling the system as the data grows

● Managing and administering the system in an efficient, cost-effective way

This solution is based on the Cisco Data Intelligence Platform, which includes computing, storage, and connectivity capabilities built on Cisco Unified Computing System (Cisco UCS) infrastructure. It uses Cisco UCS C-Series and S-Series Rack Servers, with unified management through Cisco Intersight to help companies manage the entire infrastructure from a single pane of glass, and Cloudera Data Platform to provide the software for fast data ingest and for managing and processing the exabyte-scale data being collected. This architecture is specifically designed for performance and linear scalability for big data and machine learning workloads.

The intended audience of this document includes sales engineers, field consultants, professional services, IT managers, partner engineering and customers who want to deploy the Cloudera Data Platform Private Cloud base with Apache Ozone on the Cisco Data Intelligence Platform (Cisco UCS M5 Rack-Mount servers).

This document describes the architecture, design choices, and deployment procedures for Cisco Data Intelligence Platform using Cloudera Data Platform Private Cloud Base with Apache Ozone on Cisco UCS C240 M5.

This document also serves as a step-by-step guide on how to deploy Cloudera Data Platform on a 27-node cluster of Cisco UCS C240 M5 Rack Servers.

This solution extends the portfolio of Cisco Data Intelligence Platform (CDIP) architecture with Cloudera Data Platform Private Cloud Base with Apache Ozone, a state-of-the-art distributed storage system that is the successor to the Hadoop Filesystem (HDFS). Apache Ozone overcomes some of the storage limitations of HDFS while providing richer native support for objects and the S3 API, higher consolidation of storage per node, and a better lab footprint. Furthermore, as the enterprise's requirements and needs change over time, the platform can grow to thousands of servers, exabytes of storage, and tens of thousands of cores to process this data.

The following will be implemented in this validated design:

● Cisco Intersight to configure and manage Cisco Infrastructure

● Data lake provided by Cloudera Data Platform Private Cloud Base on Cisco UCS servers

● Next-generation data storage with Apache Ozone providing both HDFS and S3 API support storage for a data lake

● Intel Based 3.8TB SATA SSDs as storage drives for Apache Ozone

This CVD details the process of installing Cloudera Data Platform Private Cloud Base including the installation of Apache Ozone, the prerequisites for Cloudera Data Platform Private Cloud base, and the configuration details of the cluster.

Apache Ozone Brings the Best of HDFS and Object Store

● Overcomes HDFS limitations

◦ Can support billions of files (unlike HDFS which only supports up to 500 million files).

◦ Can currently support 400 TB/node, with the potential to support 1 PB/node at a later point in time, unlike HDFS, which only supports up to 100 TB/node.

◦ Supports 16TB drives unlike HDFS which only supports up to 8TB drives.

◦ Can scale to Exabyte scale.

● Overcomes Object Store limitations

◦ Can support large files with linear performance. Like HDFS, Apache Ozone breaks files into smaller chunks (Object stores fail to do this and don’t perform linearly with large files), solving the large file problems often hit in object stores.

◦ Separates control and data plane enabling high performance. Supports very fast reads out of any of the three replicas.

● Supports full migration of an HDFS cluster to an Apache Ozone cluster.

● Supports data locality similar to HDFS, as well as a disaggregated architecture.

● Applications like Apache Spark, Hive and YARN, work without any modifications when using Ozone. Ozone comes with a Java client library, S3 protocol support, and a command line interface which makes it easy to use and administer.
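The per-node density figures quoted above translate directly into node counts. The following is a minimal sizing sketch, assuming a hypothetical 10 PB raw capacity target and ignoring replication, metadata, and headroom; the function name and the target value are illustrative and not part of this design.

```python
import math

# Per-node raw capacity ceilings quoted in the list above (TB per node).
HDFS_TB_PER_NODE = 100
OZONE_TB_PER_NODE = 400


def nodes_needed(raw_capacity_tb: float, tb_per_node: float) -> int:
    """Smallest number of nodes whose combined raw capacity covers the target."""
    return math.ceil(raw_capacity_tb / tb_per_node)


# Hypothetical target: 10 PB of raw capacity (an assumption for illustration).
target_tb = 10_000
hdfs_nodes = nodes_needed(target_tb, HDFS_TB_PER_NODE)
ozone_nodes = nodes_needed(target_tb, OZONE_TB_PER_NODE)
print(f"HDFS nodes: {hdfs_nodes}, Ozone nodes: {ozone_nodes}")
```

Under these assumptions the same raw capacity fits in a quarter of the nodes, which is the consolidation effect the following section describes.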

Benefits of Apache Ozone to Customers

Apache Ozone brings storage consolidation in a data lake and provides customers with the following:

● Lower Infrastructure cost

● Better TCO and ROI on their investment

● Lower datacenter footprint

Figure 1. Data Lake Storage Consolidation with Apache Ozone

Figure 2. Data Lake Storage Consolidation with Apache Ozone and Intel 3.8TB SATA SSD Drives

The focus of this CVD will be the 3.8TB Intel SATA SSD 1x DWPD EV drives.

Cisco Data Intelligence Platform

Cisco Data Intelligence Platform (CDIP) is a cloud-scale architecture which brings together big data, AI/compute farm, and storage tiers to work together as a single entity while also being able to scale independently to address the IT issues in the modern data center. This architecture provides the following:

● Extremely fast data ingest, and data engineering done at the data lake.

● AI compute farm allowing for different types of AI frameworks and compute types (GPU, CPU, FPGA) to work on this data for further analytics.

GPU and FPGA are not supported in this release of Cloudera Private Cloud Experiences 1.0.2.

● Seamlessly scale the architecture to thousands of nodes with a single pane of glass management using Cisco Intersight and Cisco Application Centric Infrastructure (ACI).

● Cisco Data Intelligence Platform caters to the evolving architecture bringing together a fully scalable infrastructure with centralized management and fully supported software stack (in partnership with industry leaders in the space) to each of these three independently scalable components of the architecture including data lake, AI/ML and Object stores.

Figure 3. Cisco Data Intelligence Platform

Cisco Data Intelligence Platform with Cloudera Data Platform

Cisco has developed numerous industry-leading Cisco Validated Designs (reference architectures) in the areas of Big Data, compute farm with Kubernetes (CVD with Red Hat OpenShift Container Platform), and object store.

A CDIP architecture can be fully enabled by the Cloudera Data Platform with the following components:

● Data lake enabled through CDP PvC Base with Apache Ozone

● Private Cloud with compute on Kubernetes can be enabled through CDP Private Cloud Experiences

● Exabyte storage enabled through Apache Ozone

Figure 4. Cisco Data Intelligence Platform with Apache Ozone

This architecture can start from a single rack and scale to thousands of nodes with a single pane of glass management with Cisco Application Centric Infrastructure (ACI).

Figure 5. Cisco Data Intelligence Platform at Scale

Cisco Data Intelligence Platform reference architectures are carefully designed, optimized, and tested with the leading big data and analytics software distributions to achieve a balance of performance and capacity to address specific application requirements. You can deploy these configurations as is or use them as templates for building custom configurations. You can scale your solution as your workloads demand, including expansion to thousands of servers through the use of Cisco Nexus 9000 Series Switches. The configurations vary in disk capacity, bandwidth, price, and performance characteristics.

Data Lake Reference Architecture

Figure 6. Cisco UCS Integrated Infrastructure for Big Data and Analytics – Modernize Hadoop Infrastructure

Dense Storage Apache Ozone Reference Architecture

Table 1 lists the CDIP Apache Ozone reference architecture.

Table 1. Cisco Data Intelligence Platform Apache Ozone Reference Architecture for Data Lake

| | High Performance | High Capacity | Extreme Capacity |
| --- | --- | --- | --- |
| Server | 16 x Cisco UCS C240 M5 Rack Servers with small-form-factor (SFF) drives | Cisco UCS S3260 with Single Node | Cisco UCS S3260 with Dual Node |
| CPU | 2 x 2nd Gen Intel® Xeon® Scalable Processors 5218R (2 x 20 cores, at 2.1 GHz) | 2 x 2nd Gen Intel Xeon Scalable Processor 6230R (2 x 26 cores, 2.1 GHz) | 2 x 2nd Gen Intel Xeon Scalable Processor 6230R (2 x 26 cores, 2.1 GHz) |
| Memory | 12 x 32GB DDR4 (384 GB) | 12 x 32GB 2666 MHz (384 GB) | 12 x 32GB 2666 MHz (192 GB) per Node |
| Boot | 2 x 960GB M.2 SATA Boot SSDs | 2 x 960GB SATA Boot SSDs | 2 x 960GB SATA Boot SSDs |
| Storage | 24 x 3.8TB Intel SATA SSD (1x DWPD Enterprise Value) + 2 x 3.2TB Intel P4610 NVMe | 48 x 8TB drives + 2 x 3.2TB Intel P4610 NVMe | 24 x 16TB drives + 2 x 3.2TB Intel P4610 NVMe |
| VIC | 25 Gigabit Ethernet (Cisco UCS VIC 1457) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1497 recommended) | 25 Gigabit Ethernet (Cisco UCS VIC 1455) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1495) | 25 Gigabit Ethernet (Cisco UCS VIC 1455) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1495) |
| Storage controller | Cisco 12-Gbps modular SAS host bus adapter (HBA) | Cisco UCS S3260 dual RAID controller | Cisco UCS S3260 dual RAID controller |
| Network connectivity | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect |
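As a quick check on the Storage row of Table 1, the raw data-drive capacity of each option can be computed from the drive counts and sizes as printed. This is a sketch only: it excludes the two NVMe drives, ignores replication and file system overhead, and simply multiplies the listed numbers without assuming whether the S3260 figures are per chassis or per node.

```python
# (drive count, drive size in TB) from the Storage row of Table 1.
STORAGE_OPTIONS = {
    "High Performance": (24, 3.8),
    "High Capacity": (48, 8.0),
    "Extreme Capacity": (24, 16.0),
}


def raw_capacity_tb(drive_count: int, drive_tb: float) -> float:
    """Raw capacity of the data drives only (NVMe drives excluded)."""
    return round(drive_count * drive_tb, 1)


capacities = {
    name: raw_capacity_tb(count, size)
    for name, (count, size) in STORAGE_OPTIONS.items()
}
for name, tb in capacities.items():
    print(f"{name}: {tb} TB raw")
```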

Table 2 lists the data lake, private cloud, and dense storage with HDFS reference architecture for Cisco Data Intelligence Platform.

Table 2. Cisco Data Intelligence Platform Data Lake Configuration with HDFS

| | High Performance | Performance | Capacity | High Capacity |
| --- | --- | --- | --- | --- |
| Servers | 16 x Cisco UCS C220 M5SN Rack Servers with small-form-factor (SFF) drives (UCSC-C220-M5SN) | 16 x Cisco UCS C240 M5 Rack Servers with small-form-factor (SFF) drives | 16 x Cisco UCS C240 M5 Rack Servers with large-form-factor (LFF) drives | 8 x Cisco UCS S3260 Storage Servers each with dual nodes |
| CPU | 2 x 2nd Gen Intel® Xeon® Scalable Processors 6230R (2 x 26 cores, at 2.1 GHz) | 2 x 2nd Gen Intel® Xeon® Scalable Processors 5218R (2 x 20 cores, at 2.1 GHz) | 2 x 2nd Gen Intel Xeon Scalable Processors 5218R (2 x 20 cores, at 2.1 GHz) | 2 x 2nd Gen Intel Xeon Scalable Processors 6230R (2 x 26 cores, 2.1 GHz) |
| Memory | 12 x 32GB DDR4 (384 GB) | 12 x 32GB DDR4 (384 GB) | 12 x 32GB DDR4 (384 GB) | 12 x 32GB DDR4 (384 GB) |
| Boot | 2 x 240GB M.2 SATA Boot SSDs | 2 x 240GB M.2 SATA Boot SSDs | 2 x 240GB M.2 SATA Boot SSDs | 2 x 480GB SATA SSDs |
| Storage | 10 x 8TB 2.5in U.2 Intel P4510 NVMe High Perf. Value Endurance | 26 x 2.4TB 10K rpm SFF SAS HDDs or 26 x 3.8TB Intel SATA SSD (1x DWPD Enterprise Value) | 12 x 8TB 7.2K rpm LFF SAS HDDs | 28 x 4TB 7.2K rpm LFF SAS HDDs per server node |
| Virtual interface card (VIC) | 25 Gigabit Ethernet (Cisco UCS VIC 1457) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1497) | 25 Gigabit Ethernet (Cisco UCS VIC 1457) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1497) | 25 Gigabit Ethernet (Cisco UCS VIC 1457) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1497) | 40 Gigabit Ethernet (Cisco UCS VIC 1387) or 25 Gigabit Ethernet (Cisco UCS VIC 1455) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1495) |
| Storage controller | NVMe Switch included in the optimized server | Cisco 12-Gbps SAS modular RAID controller with 4-GB flash-based write cache (FBWC) or Cisco 12-Gbps modular SAS host bus adapter (HBA) | Cisco 12-Gbps SAS modular RAID controller with 2-GB FBWC or Cisco 12-Gbps modular SAS host bus adapter (HBA) | Cisco 12-Gbps SAS Modular RAID Controller with 4-GB flash-based write cache (FBWC) |
| Network connectivity | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect |
| GPU (optional) | Up to 2 x NVIDIA Tesla T4 with 16 GB memory each | Up to 2 x NVIDIA Tesla V100 with 32 GB memory each or up to 6 x NVIDIA Tesla T4 with 16 GB memory each | 2 x NVIDIA Tesla V100 with 32 GB memory each or up to 6 x NVIDIA Tesla T4 with 16 GB memory each | |

Private Cloud Reference Architecture

Table 3 lists the CDIP private cloud configuration for master and worker nodes.

Table 3. Cisco Data Intelligence Platform Private Cloud configuration (Master and worker nodes)

| | High Core Option |
| --- | --- |
| Servers | 8 x Cisco UCS C240 M5 Rack Servers |
| CPU | 2 x 2nd Gen Intel Xeon Scalable Processor 6230R (2 x 26 cores, 2.1 GHz) |
| Memory | 12 x 32GB DDR4 (384 GB) |
| Boot | M.2 with 2 x 960GB SSDs |
| Storage | 4 x 2.4TB 10K rpm SFF SAS HDDs or 4 x 1.6TB Enterprise Value SATA SSDs or 4 x 3.8TB Intel SATA SSD (1x DWPD Enterprise Value) |
| VIC | 25 Gigabit Ethernet (Cisco UCS VIC 1457) or 40/100 Gigabit Ethernet (Cisco UCS VIC 1497) |
| Storage controller | Cisco 12-Gbps SAS modular RAID controller with 4-GB FBWC or Cisco 12-Gbps modular SAS HBA |
| Network connectivity | Cisco UCS 6332 Fabric Interconnect or Cisco UCS 6454/64108 Fabric Interconnect |
| GPU (optional) | 2 x NVIDIA Tesla V100 with 32-GB memory each or up to 6 x NVIDIA T4 |

As illustrated in Figure 4, this CVD was designed with the following:

● 27 x Cisco UCS C240 M5 running Cloudera Data Platform Private Cloud Base with Apache Ozone

Refer to http://www.cisco.com/go/bigdata_design to build a fully supported CDP Private Cloud Base on CDIP reference architecture with HDFS. This CVD does not provide the details to build a CDP Private Cloud Base with HDFS but only with Apache Ozone. For detailed instruction to deploy CDP Private Cloud Base with HDFS, click the following links:

Cisco Data Intelligence Platform with All NVMe Storage, Cisco Intersight, and Cloudera Data Platform

Cisco Data Intelligence Platform on Cisco UCS S3260 with Cloudera Data Platform

Cisco Data Intelligence Platform with Cloudera Data Platform

To deploy CDP Private Cloud Experiences on Red Hat OpenShift Container Platform, see: Cisco Data Intelligence Platform with Cloudera Data Platform Private Cloud Experiences

The 27-node cluster is split across two racks: Rack #1 hosts 16 Cisco UCS C240 M5 servers and Rack #2 hosts 11 Cisco UCS C240 M5 servers. Each link in Figure 7 represents a 40 Gigabit Ethernet link from a server to a pair of Cisco Fabric Interconnects.

Figure 7. Cisco Data Intelligence Platform with Cloudera Data Platform Private Cloud Base running Apache Ozone

The Cisco UCS VIC 1457 provides 10/25Gbps, and the Cisco UCS VIC 1497 provides 40/100Gbps connectivity for the Cisco UCS C-series rack server. For more information see: Cisco UCS C-Series Servers Managing Network Adapters.

In this solution we configured quad-port mLOM VIC 1457 installed in Cisco UCS C240 M5 server with link 1-2 connected to Fabric A and link 3-4 connected to Fabric B to achieve 50GbE per server.

NVMe for Apache Ozone metadata is configured in RAID1 to provide business continuity if there is a hardware failure. This is not needed on pure compute nodes, which use NVMe only for caching. Master Nodes and Data Nodes use NVMe to store Ozone metadata. Compute Nodes use NVMe for shuffle, and the drives can be in JBOD. Mixed Compute Data Nodes use NVMe for both Apache Ozone metadata and shuffle, which requires mounting the Ozone partitions across both drives as RAID1 (800GB), with the remaining space used for shuffle/cache as independent JBOD partitions.

Scaling the Solution

Figure 5 illustrates how to scale the solution. Each pair of Cisco UCS 6332 Fabric Interconnects has 24 Cisco UCS C240 M5 servers connected to it. This allows for eight uplinks from each Fabric Interconnect to the Cisco Nexus 9332 switch. Six pairs of 6332 FIs can connect to a single switch with four uplink ports each. With 24 servers per FI pair, a total of 144 servers can be supported. Additionally, this solution can scale to thousands of nodes with the Cisco Nexus 9500 series family of switches.

In these reference architectures, each of the components is scaled separately, and for the purposes of this example, scaling is uniform. Two scale scenarios are as follows:

● Scaled architecture with 3:1 oversubscription with Cisco fabric interconnects and Cisco ACI

● Scaled architecture with 2:1 oversubscription with Cisco ACI

In the following scenarios, the goal is to populate up to a maximum of 200 leaf nodes in a Cisco ACI domain. Not all cases reach that number because they use the Cisco Nexus 9508 Switch for this sizing and not the Cisco Nexus 9516 Switch.

Scaled Architecture with 3:1 Oversubscription with Cisco Fabric Interconnects and Cisco ACI

The architecture discussed here and shown in Figure 8 supports 3:1 network oversubscription from every node to every other node across a multidomain cluster (nodes in a single domain within a pair of Cisco fabric interconnects are locally switched and not oversubscribed).

From the viewpoint of the data lake, 24 Cisco UCS C240 M5 Rack Servers are connected to a pair of Cisco UCS 6332 Fabric Interconnects (with 24 x 40-Gbps throughput). From each fabric interconnect, 8 x 40-Gbps links connect to a pair of Cisco Nexus 9336 Switches. Three pairs of fabric interconnects can connect to a single pair of Cisco Nexus 9336 Switches (8 x 40-Gbps links per Fabric Interconnect to a pair of Cisco Nexus switches). Each of these Cisco Nexus 9336 Switches connects to a pair of Cisco Nexus 9508 Cisco ACI switches with 6 x 100-Gbps uplinks (connecting to a Cisco N9K-X9736C-FX line card). Each Cisco Nexus 9508 Switch supports up to 8 Cisco N9K-X9736C-FX line cards, each with up to 36 x 100-Gbps ports.
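The 3:1 figure can be reproduced from the link counts in the paragraph above: each fabric interconnect has 24 x 40-Gbps server-facing links and 8 x 40-Gbps uplinks. The helper below is an illustrative sketch, not a Cisco tool, and its name and parameters are assumptions.

```python
from fractions import Fraction


def oversubscription(down_links: int, down_gbps: int,
                     up_links: int, up_gbps: int) -> Fraction:
    """Ratio of server-facing bandwidth to uplink bandwidth."""
    return Fraction(down_links * down_gbps, up_links * up_gbps)


# Per fabric interconnect: 24 x 40-Gbps down, 8 x 40-Gbps up (from the text).
fi_ratio = oversubscription(24, 40, 8, 40)
print(f"{fi_ratio.numerator}:{fi_ratio.denominator}")
```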

Figure 8. Scaled Architecture with 3:1 Oversubscription with Cisco Fabric Interconnects and Cisco ACI

Scaled Architecture with 2:1 Oversubscription with Cisco ACI

In this scenario, shown in Figure 9, each Cisco Nexus 9508 Switch supports up to 8 Cisco N9K-X9736C-FX line cards, each with up to 36 x 100-Gbps ports, for a total of 288 ports.

For the 2:1 oversubscription, 30 Cisco UCS C240 M5 Rack Servers are connected to a pair of Cisco Nexus 9336 Switches, and each Cisco Nexus 9336 connects to a pair of Cisco Nexus 9508 Switches with three uplinks each. A pair of Cisco Nexus 9336 Switches can support 30 servers and connect to a spine with 6 x 100-Gbps links on each spine. This single pod (a pair of Cisco Nexus 9336 Switches connecting to 30 Cisco UCS C240 M5 servers, with 6 uplinks to each spine) can be repeated 48 times (288/6) for a given Cisco Nexus 9508 Switch and can support up to 1,440 servers.
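The pod and server counts in this paragraph follow from the spine port count. The arithmetic can be sketched as follows, using only the figures stated above; the constant names are illustrative.

```python
# Figures taken from the text above.
PORTS_PER_LINE_CARD = 36        # 100-Gbps ports per N9K-X9736C-FX line card
LINE_CARDS_PER_SPINE = 8        # line cards per Cisco Nexus 9508
UPLINKS_PER_POD_PER_SPINE = 6   # 100-Gbps links from one pod to one spine
SERVERS_PER_POD = 30            # servers under one pair of Nexus 9336 switches

spine_ports = PORTS_PER_LINE_CARD * LINE_CARDS_PER_SPINE
max_pods = spine_ports // UPLINKS_PER_POD_PER_SPINE
max_servers = max_pods * SERVERS_PER_POD

print(f"spine ports: {spine_ports}, pods: {max_pods}, servers: {max_servers}")
```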

To reduce the oversubscription ratio (to get 1:1 network subscription from any node to any node), you can use just 15 servers under a pair of Cisco Nexus 9336 Switches and then move to Cisco Nexus 9516 Switches (the number of leaf nodes would double).

To scale beyond this number, multiple spines can be aggregated.

Figure 9. Scaled Architecture with 2:1 Oversubscription with Cisco ACI

In a 5-rack system, 80 percent of traffic is expected to go upstream.

Cisco Data Intelligence Platform

This section describes the components used to build Cisco Data Intelligence Platform, a highly scalable architecture designed to meet a variety of scale-out application demands with seamless data integration and management integration capabilities.

Cisco Data Intelligence Platform powered by Cloudera Data Platform delivers:

● Latest generation of CPUs from Intel (2nd generation Intel Scalable family, with Cascade Lake CLXR).

● Cloud scale and fully modular architecture where big data, AI/compute farm, and massive storage tiers work together as a single entity and each CDIP component can also scale independently to address the IT issues in the modern data center.

● World record Hadoop performance both for MapReduce and Spark frameworks published at TPCx-HS benchmark.

● AI compute farm offers different types of AI frameworks and compute types (GPU, CPU, FPGA) to work on data for analytics.

● A massive storage tier makes it possible to gradually retire data, and retrieve it quickly when needed, on storage-dense subsystems with a lower $/TB, providing a better TCO.

● Data compression with FPGA: offload compute-heavy compression tasks to FPGA, freeing the CPU to perform other tasks and gaining significant performance.

● Seamlessly scale the architecture to thousands of nodes.

● Single pane of glass management with Cisco Intersight.

● ISV Partner ecosystem – Top notch ISV partner ecosystem, offering best of the breed end-to-end validated architectures.

● Pre-validated and fully supported platform.

● Disaggregated Architecture – Supports separation of storage and compute for a data lake.

● Container Cloud – Kubernetes – Compute farm backed by the industry-leading container orchestration engine, offering the very first container cloud plugged into the data lake and object store.

Cloudera Data Platform – Private Cloud Base (PvC)

With the merger of Cloudera and Hortonworks, new Cloudera software named Cloudera Data Platform (CDP) combined the best of Hortonworks' and Cloudera's technologies to deliver the industry's first enterprise data cloud. CDP Private Cloud Base is the on-premises version of CDP, and CDP Private Cloud Experiences is the on-premises version of Private Cloud that enables compute on Kubernetes with Red Hat OpenShift Container Platform. This unified distribution is a scalable and customizable platform where workloads can be securely provisioned. CDP gives a clear path for extending or refreshing your existing HDP and CDH deployments and sets the stage for a cloud-native architecture.

CDP Private Cloud Base can be deployed either with Hadoop Filesystem (HDFS) or Apache Ozone or both as the underlying distributed storage. However, this CVD primarily focuses on Apache Ozone as the underlying distributed filesystem for Hadoop.

Apache Ozone is a scalable, redundant, and distributed object store for Hadoop. Apart from scaling to billions of objects of varying sizes, Ozone can function effectively in containerized environments such as Kubernetes and YARN. Applications using frameworks like Apache Spark, YARN and Hive work natively without any modifications. Apache Ozone is built on a highly available, replicated block storage layer called Hadoop Distributed Data Store (HDDS).

Ozone consists of volumes, buckets, and keys:

● Volumes are similar to user accounts. Only administrators can create or delete volumes.

● Buckets are similar to directories. A bucket can contain any number of keys, but buckets cannot contain other buckets.

● Keys are similar to files. Each key is part of a bucket, which, in turn, belongs to a volume. Ozone stores data as keys inside these buckets.

● A hierarchical directory tree can be created inside a bucket using directory separators in

Apache Ozone is a distributed key-value store that can manage both small and large files alike. While HDFS provides POSIX-like semantics, Ozone looks and behaves like an Object Store.
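The volume, bucket, and key hierarchy described above can be pictured with a small in-memory model. This is only an illustrative sketch and not the Ozone client API; the class names `Volume` and `Bucket` and the helper `ozone_path` are hypothetical, as are the sample volume, bucket, and key names.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Bucket:
    """A container of keys; a bucket cannot hold other buckets."""
    name: str
    keys: Dict[str, bytes] = field(default_factory=dict)

    def put_key(self, key_name: str, data: bytes) -> None:
        # The key name is stored as a plain string in this toy model.
        self.keys[key_name] = data


@dataclass
class Volume:
    """Top-level namespace, similar to a user account."""
    name: str
    buckets: Dict[str, Bucket] = field(default_factory=dict)

    def create_bucket(self, bucket_name: str) -> Bucket:
        if bucket_name in self.buckets:
            raise ValueError(f"bucket {bucket_name!r} already exists")
        bucket = Bucket(bucket_name)
        self.buckets[bucket_name] = bucket
        return bucket


def ozone_path(volume: Volume, bucket: Bucket, key_name: str) -> str:
    """Build a /volume/bucket/key style path for display purposes."""
    return f"/{volume.name}/{bucket.name}/{key_name}"


vol = Volume("sales")                 # hypothetical volume
bkt = vol.create_bucket("raw")        # hypothetical bucket
bkt.put_key("2021/05/orders.csv", b"id,amount\n1,10\n")
print(ozone_path(vol, bkt, "2021/05/orders.csv"))
```

The model keeps every key directly inside its bucket, which mirrors the rule that buckets hold keys but never other buckets.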

When a key is written to Apache Ozone, the associated data is stored on the DataNodes in chunks called blocks. Therefore, each key is associated with one or more blocks. Within the DataNodes, a series of unrelated blocks is stored in a container, allowing many blocks to be managed as a single entity.
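As a rough sketch of the relationship between keys, blocks, and containers, the arithmetic below estimates how many blocks one key occupies and how many blocks fit in a container. The 256 MiB block size and 5 GiB container size are assumptions chosen for illustration and should be checked against the values configured in a given cluster; the function names are hypothetical.

```python
import math

BLOCK_SIZE = 256 * 1024**2      # assumed block size: 256 MiB
CONTAINER_SIZE = 5 * 1024**3    # assumed container size: 5 GiB


def blocks_for_key(key_size_bytes: int, block_size: int = BLOCK_SIZE) -> int:
    """Number of blocks needed to hold one key (at least one block)."""
    return max(1, math.ceil(key_size_bytes / block_size))


def blocks_per_container(container_size: int = CONTAINER_SIZE,
                         block_size: int = BLOCK_SIZE) -> int:
    """How many full-size blocks fit in a single container."""
    return container_size // block_size


one_gib_key = 1024**3
print(blocks_for_key(one_gib_key))   # 4
print(blocks_per_container())        # 20
```

Grouping blocks this way is what lets the storage layer track a container as one unit instead of tracking each block individually.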

Apache Ozone separates management of namespaces and storage, helping it to scale effectively. Ozone Manager manages the namespaces while Storage Container Manager handles the containers.

Figure 10. Basic Architecture for Ozone

Apache Ozone is a scale-out architecture with minimal operational overhead and long-term maintenance effort. Ozone can be co-located with HDFS under a single set of security and governance policies for easy data exchange or migration, and it offers seamless application portability. Ozone enables separation of compute and storage via the S3 API and, like HDFS, also supports data locality for applications that choose to use it.

The design of Ozone was guided by the key principles listed in Figure 11.

Figure 11. Ozone Design Principles

Cisco Unified Computing System

Cisco Unified Computing System (Cisco UCS) is a next-generation data center platform that unites computing, networking, storage access, and virtualization resources into a cohesive system designed to reduce Total Cost of Ownership (TCO) and increase business agility. The system integrates a low-latency, lossless 10/25/40/100 Gigabit Ethernet unified network fabric with enterprise-class, x86-architecture servers. The system is an integrated, scalable, multi-chassis platform in which all resources participate in a unified management domain (Figure 12).

Figure 12. Cisco UCS Component Hierarchy

Cisco UCS Manager (UCSM) resides within the Cisco UCS Fabric Interconnect. It makes the system self-aware and self-integrating, managing all the system components as a single logical entity. Cisco UCS Manager can be accessed through an intuitive GUI, a CLI, or an XML API. Cisco UCS Manager uses service profiles to define the personality, configuration, and connectivity of all resources within Cisco UCS, radically simplifying provisioning of resources so that the process takes minutes instead of days. This simplification allows IT departments to shift their focus from constant maintenance to strategic business initiatives.

Key Features

The following are some of the key features of Cisco UCS Manager:

● Supports Cisco UCS B-Series Blade and Cisco UCS C-Series Rack Servers, the Cisco UCS C3260 storage server, Cisco UCS Mini, and the Cisco HyperFlex hyperconverged infrastructure.

● Programmatically controls server, network, and storage resources, with a unified, policy-driven management, so they can be efficiently managed at scale through software.

● Offers HTML5 and Java graphical user interfaces as well as a CLI.

● Can automatically detect, inventory, manage, and provision system components that are added or changed.

● Facilitates integration with third-party systems management tools.

● Builds on existing skills and supports collaboration across disciplines through role-based administration.

Cisco UCS 6300 Series Fabric Interconnects

Cisco UCS 6300 Series Fabric Interconnects provide high-bandwidth, low-latency connectivity for servers, with integrated, unified management provided for all connected devices by Cisco UCS Manager. Deployed in redundant pairs, Cisco fabric interconnects offer the full active-active redundancy, performance, and exceptional scalability needed to support the large number of nodes that are typical in clusters serving big data applications. Cisco UCS Manager enables rapid and consistent server configuration using service profiles, automating ongoing system maintenance activities such as firmware updates across the entire cluster as a single operation. Cisco UCS Manager also offers advanced monitoring with options to raise alarms and send notifications about the health of the entire cluster.

The Cisco UCS 6300 Series Fabric Interconnect is a One-Rack-Unit (1RU) switch providing low-latency, lossless 10 and 40 Gigabit Ethernet, Fibre Channel over Ethernet (FCoE), and Fibre Channel functions with management capabilities for the entire system. All servers attached to the fabric interconnects become part of a single, highly available management domain.

Figure 13. Cisco UCS 6332UP 32-Port Fabric Interconnect

For more information, go to: https://www.cisco.com/c/en/us/products/collateral/servers-unified-computing/ucs-6300-series-fabric-interconnects/datasheet-c78-736682.html?cachemode=refresh

Cisco UCS 6400 Series Fabric Interconnect

The Cisco UCS 6400 Series Fabric Interconnects are a core part of the Cisco Unified Computing System, providing both network connectivity and management capabilities for the system. The Cisco UCS 6400 Series offers line-rate, low-latency, lossless 10/25/40/100 Gigabit Ethernet, Fibre Channel over Ethernet (FCoE), and Fibre Channel functions (Figure 14 and Figure 15).

The Cisco UCS 6454 54-Port Fabric Interconnect (Figure 14) is a One-Rack-Unit (1RU) 10/25/40/100 Gigabit Ethernet, FCoE, and Fibre Channel switch offering up to 3.82 Tbps throughput and up to 54 ports. The switch has 28 10/25-Gbps Ethernet ports, 4 1/10/25-Gbps Ethernet ports, 6 40/100-Gbps Ethernet uplink ports, and 16 unified ports that can support 10/25-Gbps Ethernet ports or 8/16/32-Gbps Fibre Channel ports. All Ethernet ports are capable of supporting FCoE.

Figure 14. Cisco UCS 6454 Fabric Interconnect

The Cisco UCS 64108 Fabric Interconnect (Figure 15) is a 2-RU top-of-rack switch that mounts in a standard 19-inch rack such as the Cisco R Series rack. The 64108 is a 10/25/40/100 Gigabit Ethernet, FCoE, and Fibre Channel switch offering up to 7.42 Tbps throughput and up to 108 ports. The switch has 16 unified ports (port numbers 1-16) that can support 10/25-Gbps SFP28 Ethernet ports or 8/16/32-Gbps Fibre Channel ports, 72 10/25-Gbps Ethernet SFP28 ports (port numbers 17-88), 8 1/10/25-Gbps Ethernet SFP28 ports (port numbers 89-96), and 12 40/100-Gbps Ethernet QSFP28 uplink ports (port numbers 97-108). All Ethernet ports are capable of supporting FCoE.

Figure 15. Cisco UCS 64108 Fabric Interconnect
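The port counts quoted for the two 6400 Series models can be cross-checked with a few lines of arithmetic. The sketch below simply tallies the port groups named in the text; the dictionary names and the `total_ports` helper are hypothetical.

```python
from typing import Dict

# Port groups as listed in the text (counts only).
FI_6454_PORTS: Dict[str, int] = {
    "10/25-Gbps Ethernet": 28,
    "1/10/25-Gbps Ethernet": 4,
    "40/100-Gbps Ethernet uplink": 6,
    "unified (Ethernet or Fibre Channel)": 16,
}

FI_64108_PORTS: Dict[str, int] = {
    "unified (Ethernet or Fibre Channel)": 16,
    "10/25-Gbps Ethernet": 72,
    "1/10/25-Gbps Ethernet": 8,
    "40/100-Gbps Ethernet uplink": 12,
}


def total_ports(groups: Dict[str, int]) -> int:
    """Sum the port counts across all port groups of one switch."""
    return sum(groups.values())


print(total_ports(FI_6454_PORTS))    # 54
print(total_ports(FI_64108_PORTS))   # 108
```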

Cisco UCS C-Series Rack-Mount Servers

Designed to operate both in standalone environments and as part of a Cisco UCS managed configuration, Cisco UCS C-Series Rack-Mount Servers enable organizations to deploy systems incrementally, using as many or as few servers as needed, on a schedule that best meets the organization’s timing and budget. Cisco UCS C-Series servers offer investment protection through the capability to deploy them either as standalone servers or as part of Cisco UCS. One compelling reason that many organizations prefer rack-mount servers is the wide range of I/O options available in the form of PCIe adapters. Cisco UCS C-Series servers support a broad range of I/O options, including interfaces supported by Cisco and adapters from third parties.

Cisco UCS C240 M5 Rack-Mount Servers

The Cisco UCS C240 M5 Rack-Mount Server (Figure 16) is a 2-socket, 2-Rack-Unit (2RU) rack server offering industry-leading performance and expandability. It supports a wide range of storage and I/O-intensive infrastructure workloads, from big data and analytics to collaboration. Cisco UCS C-Series Rack Servers can be deployed as standalone servers or as part of a Cisco Unified Computing System managed environment to take advantage of Cisco’s standards-based unified computing innovations that help reduce customers’ Total Cost of Ownership (TCO) and increase their business agility.

The latest update includes support for 2nd Generation Intel Xeon Scalable Processors, 2933-MHz DDR4 memory, and the new 512 GB Intel Optane™ DC Persistent Memory Modules (DCPMMs). With this combination of features, up to 9 TB of memory is possible (using 12 x 256 GB DDR4 DIMMs and 12 x 512 GB DCPMMs).
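The 9 TB figure follows directly from the module counts and sizes given above, as this short worked calculation shows (variable names are illustrative only):

```python
DDR4_DIMMS, DDR4_GB = 12, 256        # 12 x 256 GB DDR4 DIMMs
DCPMM_MODULES, DCPMM_GB = 12, 512    # 12 x 512 GB DCPMMs

ddr4_total_gb = DDR4_DIMMS * DDR4_GB           # 3072 GB
dcpmm_total_gb = DCPMM_MODULES * DCPMM_GB      # 6144 GB
total_tb = (ddr4_total_gb + dcpmm_total_gb) / 1024

print(ddr4_total_gb, dcpmm_total_gb, total_tb)  # 3072 6144 9.0
```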

Optane DCPMMs are not used in this architecture or in this CVD with Apache Ozone; they are mentioned only to highlight the capabilities of the Cisco UCS C240 M5.

The Cisco UCS C240 M5 Rack-Mount Server has the following features:

● Latest 2nd Generation Intel Xeon Scalable CPUs with up to 28 cores per socket

● Up to 24 DDR4 DIMMs for improved performance

● Up to 26 Small-Form-Factor (SFF) 2.5-inch drives (up to 10 NVMe PCIe SSDs on the NVMe-optimized chassis version), or 12 Large-Form-Factor (LFF) 3.5-inch drives

● Support for 12-Gbps SAS modular RAID controller in a dedicated slot, leaving the remaining PCIe Generation 3.0 slots available for other expansion cards

● Modular LAN-On-Motherboard (mLOM) slot that can be used to install a Cisco UCS Virtual Interface Card (VIC) without consuming a PCIe slot, supporting dual 10/25/40-Gbps network connectivity

● Dual embedded Intel x550 10GBASE-T LAN-On-Motherboard (LOM) ports

● Modular M.2 or Secure Digital (SD) cards that can be used for boot

Figure 16. Cisco UCS C240 M5 Rack-Mount Server

Cisco UCS Virtual Interface Cards (VICs)

Cisco UCS VIC 1457

The Cisco UCS VIC 1457 (Figure 17) is a quad-port Small Form-Factor Pluggable (SFP28) mLOM card designed for the M5 generation of Cisco UCS C-Series Rack Servers. The card supports 10/25-Gbps Ethernet or FCoE. The card can present PCIe standards-compliant interfaces to the host, and these can be dynamically configured as either NICs or HBAs.

Cisco UCS VIC 1497

The Cisco UCS VIC 1497 (Figure 18) is a dual-port Quad Small Form-Factor Pluggable (QSFP28) mLOM card designed for the M5 generation of Cisco UCS C-Series Rack Servers. The card supports 40/100-Gbps Ethernet and FCoE. The card can present PCIe standards-compliant interfaces to the host, and these can be dynamically configured as NICs and HBAs.

Cisco Intersight is Cisco’s systems management platform that delivers intuitive computing through cloud-powered intelligence. This platform offers a more intelligent level of management that enables IT organizations to analyze, simplify, and automate their environments in ways that were not possible with prior generations of tools. This capability empowers organizations to achieve significant savings in Total Cost of Ownership (TCO) and to deliver applications faster, so they can support new business initiatives.

Cisco Intersight is a Software as a Service (SaaS) infrastructure management platform that provides single-pane-of-glass management of CDIP infrastructure in the data center. Cisco Intersight scales easily, and frequent updates are implemented without impact to operations. Cisco Intersight Essentials enables customers to centralize configuration management through a unified policy engine, determine compliance with the Cisco UCS Hardware Compatibility List (HCL), and initiate firmware updates. Enhanced capabilities and tight integration with Cisco TAC enable more efficient support. Cisco Intersight automates uploading files to speed troubleshooting. The Intersight recommendation engine provides actionable intelligence for IT operations management. The insights are driven by expert systems and best practices from Cisco.

Cisco Intersight offers flexible deployment either as Software as a Service (SaaS) on Intersight.com or running on your premises with the Cisco Intersight virtual appliance. The virtual appliance provides users with the benefits of Cisco Intersight while allowing more flexibility for those with additional data locality and security requirements.

Cisco Intersight provides the following features for ease of operations and administration for the IT staff:

● Connected TAC

● Security Advisories

● Hardware Compatibility List (HCL)

To learn more about all the features of Intersight go to: https://www.cisco.com/c/en/us/products/servers-unified-computing/intersight/index.html

Connected TAC is an automated transmission of technical support files to the Cisco Technical Assistance Center (TAC) for accelerated troubleshooting.

Cisco Intersight enables Cisco TAC to automatically generate and upload Tech Support Diagnostic files when a Service Request is opened. If you have devices that are connected to Intersight but not claimed, Cisco TAC can only check the connection status and will not be permitted to generate Tech Support files. When enabled, this feature works in conjunction with the Smart Call Home service and with an appropriate service contract. Devices that are configured with Smart Call Home and claimed in Intersight can use Smart Call Home to open a Service Request and have Intersight collect Tech Support diagnostic files.

Figure 20. Cisco Intersight: Connected TAC

To enable Connected TAC, follow these steps:

1. Log in to Intersight.com.

2. Click the Servers tab. Go to Server > Actions tab. From the drop-down list, click Open TAC Case.

3. Clicking Open TAC Case launches the Cisco Support Case Manager URL, where the associated service contracts for the Server or Fabric Interconnect are displayed.

4. Click Continue.

5. Follow the procedure to Open TAC Case.

Cisco Intersight Integration for HCL

Cisco Intersight evaluates the compatibility of your Cisco UCS and Cisco HyperFlex systems to check if the hardware and software have been tested and validated by Cisco or Cisco partners. Cisco Intersight reports validation issues after checking the compatibility of the server model, processor, firmware, adapters, operating system, and drivers, and displays the compliance status with the Hardware Compatibility List (HCL).

To collect the OS and driver information needed to evaluate HCL compliance, you can use either Cisco UCS Tools, a host utility vSphere Installation Bundle (VIB), or OS Discovery Tool, an open-source script.

In Cisco Intersight, you can view the HCL compliance status in the dashboard (as a widget), the Servers table view, and the Server details page.

For more information, go to: https://www.intersight.com/help/features#compliance_with_hardware_compatibility_list_(hcl)

Figure 21. Example of HCL Status and Driver Recommendation for RHEL 7.6

Cisco Intersight sources critical security advisories from the Cisco Security Advisory service to alert users about the endpoint devices that are impacted by the advisories and deferrals. These alerts are displayed as Advisories in Intersight. The Cisco Security Advisory service identifies, monitors, and updates the status of the advisories to provide the latest information on the impacted devices, the severity of the advisory, the impacted products, and any available workarounds. If there are no known workarounds, you can open a support case with Cisco TAC for further assistance. A list of the security advisories is shown in Intersight under Advisories.

Figure 22. Intersight Dashboard

Figure 23. Example: List of PSIRTs Associated with Sample Intersight Account

CDP is an integrated data platform that is easy to deploy, manage, and use. By simplifying operations, CDP reduces the time to onboard new use cases across the organization. It uses machine learning to intelligently auto scale workloads up and down for more cost-effective use of cloud infrastructure.

Cloudera Data Platform Private Cloud Base (CDP PvC Base) is the on-premises version of Cloudera Data Platform. This new product combines the best of both worlds, Cloudera Enterprise Data Hub and Hortonworks Data Platform Enterprise, along with new features and enhancements across the stack. This unified distribution is a scalable and customizable platform where you can securely run many types of workloads.

Figure 24. Cloudera Data Platform – Unity Release

Cloudera Data Platform provides:

● Unified Distribution: Whether you are coming from CDH or HDP, CDP caters to both. It offers richer feature sets and bug fixes with concentrated development and higher velocity.

● Hybrid and On-prem: CDP offers a hybrid and multi-cloud experience; on-prem, it offers the best performance, cost, and security, and is designed for data centers with optimal infrastructure.

● Management: It provides consistent management and control points for deployments.

● Consistency: Security and governance policies can be configured once and applied across all data and workloads.

● Portability: Policies stay with the data, even as it moves across supported infrastructure.

Cloudera Data Platform Private Cloud Base (CDP PvC Base)

CDP Private Cloud Base is the on-premises version of Cloudera Data Platform. This new product combines the best of Cloudera Enterprise Data Hub and Hortonworks Data Platform Enterprise along with new features and enhancements across the stack. This unified distribution is a scalable and customizable platform where you can securely run many types of workloads.

CDP Private Cloud Base supports a variety of hybrid solutions where compute tasks are separated from data storage and where data can be accessed from remote clusters, including workloads created using CDP Private Cloud Experiences. This hybrid approach provides a foundation for containerized applications by managing storage, table schema, authentication, authorization, and governance.

CDP Private Cloud Base is comprised of a variety of components such as Apache HDFS, Apache Hive 3, Apache HBase, and Apache Impala, along with many other components for specialized workloads. You can select any combination of these services to create clusters that address your business requirements and workloads. Several pre-configured packages of services are also available for common workloads.

Cloudera Data Platform Private Cloud Experiences (CDP PVC Experiences)

Cloudera Data Platform (CDP) Private Cloud is the newest on-prem offering of CDP that brings many of the benefits of the public cloud deployments to the on-prem CDP deployments.

CDP Private Cloud provides a disaggregation of compute and storage and allows independent scaling of compute and storage clusters. Through the use of containerized applications deployed on Kubernetes, CDP Private Cloud brings both agility and predictable performance to analytic applications. CDP Private Cloud gets unified security, governance, and metadata management through Cloudera Shared Data Experience (SDX), which is available on a CDP Private Cloud Base cluster.

CDP Private Cloud users can rapidly provision and deploy Cloudera Data Warehousing and Cloudera Machine Learning services through the Management Console, and easily scale them up or down as required.

Shadow IT can now be eliminated when CDP Private Cloud is implemented in Cisco Data Intelligence Platform. CDP Private Cloud offers a cloud-like experience in the customer’s on-prem environment. With disaggregated compute and storage, a complete self-service analytics environment can be implemented, thereby offering better infrastructure utilization.

Also, CDP Private Cloud offers a persona-driven approach for roles such as Data Scientist, Data Engineer, and Data Analyst, thus providing the right tools to users and improving time-to-value.

This CVD doesn’t include Cloudera Data Platform Private Cloud Experiences; it’s explained in detail here: Cisco Data Intelligence Platform with Cloudera Data Platform Private Cloud Experiences

Containerization

Hadoop 3.0 introduced production-ready Docker container support on YARN with GPU isolation and scheduling. This opened up a plethora of opportunities for modern applications, such as microservices and distributed application frameworks comprising thousands of containers, to run AI/ML algorithms on petabytes of data quickly and easily.

With Cloudera Data Platform Private Cloud Experiences, Hadoop workloads can be run as containers on Kubernetes (powered by Red Hat OpenShift Container Platform) allowing disaggregated architecture and cloud native architecture in Hadoop.

This CVD doesn’t include Containerization; it’s explained in detail here: Cisco Data Intelligence Platform with Cloudera Data Platform Private Cloud Experiences

Distributed Deep Learning with Apache Submarine

The Hadoop community initiated the Apache Submarine project to make distributed deep learning/machine learning applications easy to launch, manage, and monitor. These improvements allow distributed deep learning/machine learning applications (such as TensorFlow) to run on Apache Hadoop YARN, Kubernetes, or just a container service. It enables data scientists to focus on algorithms instead of worrying about the underlying infrastructure. Apache Submarine Workbench (work in progress) is a web-based system for data scientists where they can interactively access notebooks, submit/manage jobs, manage models, create model training workflows, access data sets, and more.

This CVD doesn’t include Distributed Deep Learning. AI workloads on GPU are expected to be supported in future releases of Cloudera Data Platform.

Apache Spark 3.0

Apache Spark 3.0 is a highly anticipated release. To meet this expectation, Spark is no longer limited to the CPU for its workloads; it now offers GPU isolation and pooling of GPUs from different servers to accelerate compute. To easily manage the deep learning environment, YARN launches the Spark 3.0 applications with GPU. This prepares other workloads, such as machine learning and ETL, to be accelerated by GPU for Spark workloads. For more information, see the Cisco Blog on Apache Spark 3.0.

GPU support isn’t included in this release of Cloudera Data Platform. It is expected to be supported in future releases.

Red Hat OpenShift Container Platform (RHOCP) Cluster

Cloudera has selected Red Hat OpenShift as the preferred container platform for CDP Private Cloud. With Red Hat OpenShift, CDP Private Cloud delivers powerful, self-service analytics and enterprise-grade performance with the granular security and governance policies that IT leaders demand.

To keep pace in the digital era, businesses must modernize their data strategy for increased agility, ease-of-use, and efficiency. Together, Red Hat OpenShift and CDP Private Cloud help create an essential hybrid, multi-cloud data architecture, enabling teams to rapidly onboard mission-critical applications and run them anywhere, without disrupting existing ones.

This CVD doesn’t include Cloudera Data Platform Private Cloud Experiences and the required Red Hat OpenShift Container Platform to power the Kubernetes. This is explained here: Cisco Data Intelligence Platform with Cloudera Data Platform Private Cloud Experiences

Kubernetes

Extracting intelligence from a data lake in a timely fashion is an absolute necessity for finding emerging business opportunities, accelerating time to market, gaining market share, and increasing overall business agility.

In today’s fast-paced digitization, Kubernetes enables enterprises to rapidly deploy new updates and features at scale while maintaining environmental consistency across test/dev/prod. Kubernetes provides the foundation for cloud-native apps, which can be packaged in container images and ported to diverse platforms. Containers with a microservice architecture, managed and orchestrated by Kubernetes, help organizations embark on a modern development pattern. Moreover, Kubernetes has become the de facto standard for container orchestration and offers the core for an on-prem container cloud for enterprises. It is a single cloud-agnostic infrastructure with a rich open-source ecosystem. It allocates, isolates, and manages resources across many tenants at scale, elastically as needed, thereby delivering efficient infrastructure resource utilization. Figure 25 illustrates how Kubernetes is transforming the use of compute and becoming the standard for running applications.

Figure 25. Compute on Kubernetes is exciting!!!

Not all of the features mentioned above are generally available for a data lake today, but Kubernetes holds the potential for these capabilities.

Spark on Kubernetes

With Spark 2.4.5, in addition to YARN as a scheduler, comes full support for Apache Spark on Kubernetes as a scheduler. This enables a Kubernetes cluster to act as the compute layer running Spark workloads for the data lake, much of which is used in Cloudera Private Cloud applications.

Spark on Kubernetes has considerably advanced the Hadoop ecosystem, since it makes it easier to deploy many public cloud-specific applications and framework use cases on-prem, thus providing the hybridity to stretch to any cloud. Kubernetes addresses gaps that existed in YARN, such as lack of isolation and reproducibility, and allows workloads to be packaged in Docker images. Spark on Kubernetes also inherits all other built-in features such as auto-scaling, detailed metrics, advanced container networking, security, and so on.
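To make the idea of Kubernetes acting as the Spark scheduler more concrete, the sketch below assembles a `spark-submit` command line that targets a Kubernetes API server instead of YARN. It is an illustration only and is not part of this CVD's validated configuration: the helper `build_spark_submit` is hypothetical, and the API server address, container image, and application path are placeholders that must be replaced with values from a real environment.

```python
from typing import List


def build_spark_submit(api_server: str, image: str, app: str,
                       executors: int = 2,
                       namespace: str = "default") -> List[str]:
    """Assemble a spark-submit command line targeting a Kubernetes master."""
    return [
        "spark-submit",
        "--master", f"k8s://{api_server}",      # Kubernetes as the scheduler
        "--deploy-mode", "cluster",
        "--conf", f"spark.kubernetes.namespace={namespace}",
        "--conf", f"spark.kubernetes.container.image={image}",
        "--conf", f"spark.executor.instances={executors}",
        app,
    ]


cmd = build_spark_submit(
    api_server="https://k8s.example.com:6443",        # placeholder endpoint
    image="registry.example.com/spark:latest",        # placeholder image
    app="local:///opt/spark/examples/src/main/python/pi.py",  # placeholder app
)
print(" ".join(cmd))
```

Building the argument list in code rather than a shell string keeps each option separate, which makes it straightforward to pass to `subprocess.run` once the placeholders are filled in.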

Hybrid Architecture

Red Hat OpenShift, the container cloud platform for CDP Private Cloud, is the market-leading Kubernetes-powered container platform. This combination is the first enterprise data cloud with a powerful hybrid architecture that decouples compute and storage for greater agility, ease-of-use, and more efficient use of private and multi-cloud infrastructure resources. With Cloudera’s Shared Data Experience (SDX), security and governance policies can be easily and consistently enforced across data and analytics in private as well as multi-cloud deployments. This hybridity will open myriad opportunities for multi-function integration with other frameworks such as streaming data, batch workloads, analytics, data pipelining/engineering, and machine learning.

Cloud Native Architecture for Data Lake and AI

Cisco Data Intelligence Platform with CDP Private Cloud accelerates the process of becoming cloud-native for your data lake and AI/ML workloads. By leveraging a Kubernetes-powered container cloud, enterprises can now quickly break the silos in monolithic application frameworks and embrace continuous innovation with a microservices architecture and a CI/CD approach. With a cloud-native ecosystem, enterprises can build scalable and elastic modern applications that extend the boundaries from private cloud to hybrid.

Infrastructure and Software Requirements

As illustrated in Figure 26, this CVD was designed with 27 x Cisco UCS C240 M5 running Cloudera Data Platform Private Cloud Base with Apache Ozone.

The cluster configuration consists of the following:

● 2 Cisco UCS 6454 Fabric Interconnects

● 27 Cisco UCS C240 M5 Rack servers

● 2 Cisco R42610 standard racks

● 4 Vertical Power distribution units (PDUs) (Country Specific)

A single rack consists of two vertical PDUs and two Cisco UCS Fabric Interconnects, with 16 Cisco UCS C240 M5 Rack Servers connected to each of the vertical PDUs for redundancy. This ensures availability during a power source failure. As illustrated in Figure 26, 2 x 25 Gigabit Ethernet links from each server are connected to each of the two Fabric Interconnects.

Figure 26. Cisco Data Intelligence Platform with Cloudera Data Platform Private Cloud Base Running Apache Ozone

Please contact your Cisco representative for country-specific information.

The Cisco UCS VIC 1457 provides 10/25Gbps, and the Cisco UCS VIC 1497 provides 40/100Gbps connectivity for the Cisco UCS C-series rack server. For more information see: Cisco UCS C-Series Servers Managing Network Adapters.

In this solution, we configure quad-port mLOM VIC 1457 installed in Cisco UCS C240 M5 server with link 1-2 connected to Fabric A and link 3-4 connected to Fabric B to achieve 50GbE per server.

NVMe for Apache Ozone metadata is configured in RAID1 to provide business continuity in case of hardware failure. This is not needed on pure compute nodes, which use NVMe only for caching. Master Nodes and Data Nodes use NVMe to store Ozone metadata. Compute Nodes use NVMe for shuffle, and the drives can be in JBOD. Mixed Compute Data Nodes use NVMe for both Apache Ozone metadata and shuffle, which requires mounting the Ozone partitions across both drives as RAID1 (800GB), with the remaining space used for shuffle/cache as independent JBOD partitions.
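The NVMe layout rules in the note above can be summarized as a small lookup table keyed by node role. This is a sketch for planning purposes only; the role names, the `NvmeLayout` class, and the `describe` helper are hypothetical and do not correspond to any Cisco or Cloudera tool.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class NvmeLayout:
    purposes: Tuple[str, ...]   # what the NVMe drives are used for
    metadata_raid1: bool        # Ozone metadata mirrored across both drives
    shuffle_jbod: bool          # shuffle/cache on independent JBOD partitions


# Hypothetical role names mapped to the layouts described in the note.
NVME_LAYOUTS: Dict[str, NvmeLayout] = {
    "master": NvmeLayout(("ozone-metadata",), True, False),
    "data": NvmeLayout(("ozone-metadata",), True, False),
    "compute": NvmeLayout(("shuffle",), False, True),
    "compute-data": NvmeLayout(("ozone-metadata", "shuffle"), True, True),
}


def describe(role: str) -> str:
    """Return a one-line summary of the NVMe layout for a node role."""
    layout = NVME_LAYOUTS[role]
    parts = []
    if layout.metadata_raid1:
        parts.append("Ozone metadata on RAID1")
    if layout.shuffle_jbod:
        parts.append("shuffle/cache on JBOD")
    return f"{role}: " + ", ".join(parts)


for role in NVME_LAYOUTS:
    print(describe(role))
```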

Port Configuration on Cisco UCS Fabric Interconnect 6454

Table 4 lists the port configuration on Cisco UCS Fabric Interconnect 6454.

Table 4. Port Configuration on Cisco UCS Fabric Interconnect 6454

| Port Type | Port Number |
| --- | --- |
| Server | 1 - 48 |
| Network | 49 - 54 |
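When scripting cabling checks, the port ranges in Table 4 can be expressed as a simple lookup. The function name `port_role` is hypothetical; the ranges are taken directly from the table.

```python
def port_role(port: int) -> str:
    """Return the Table 4 port type for a Fabric Interconnect 6454 port number."""
    if 1 <= port <= 48:
        return "Server"
    if 49 <= port <= 54:
        return "Network"
    raise ValueError(f"port {port} is outside the 1-54 range of the 6454")


print(port_role(16))   # Server
print(port_role(49))   # Network
```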

Server Configuration and Cabling for Cisco UCS C240 M5

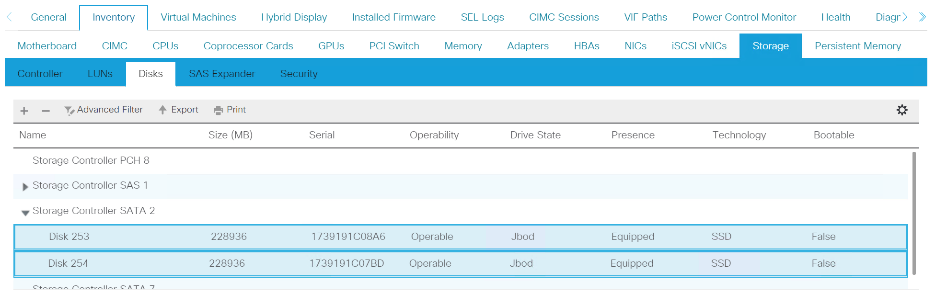

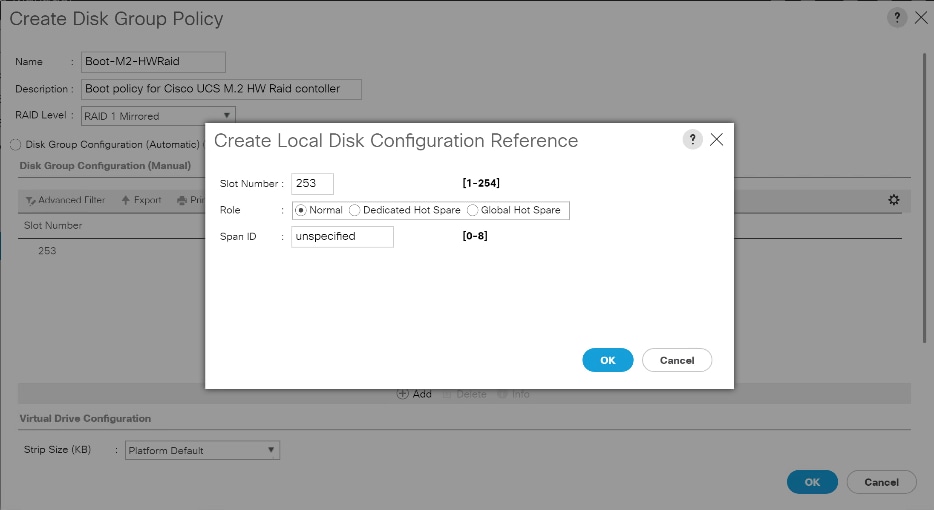

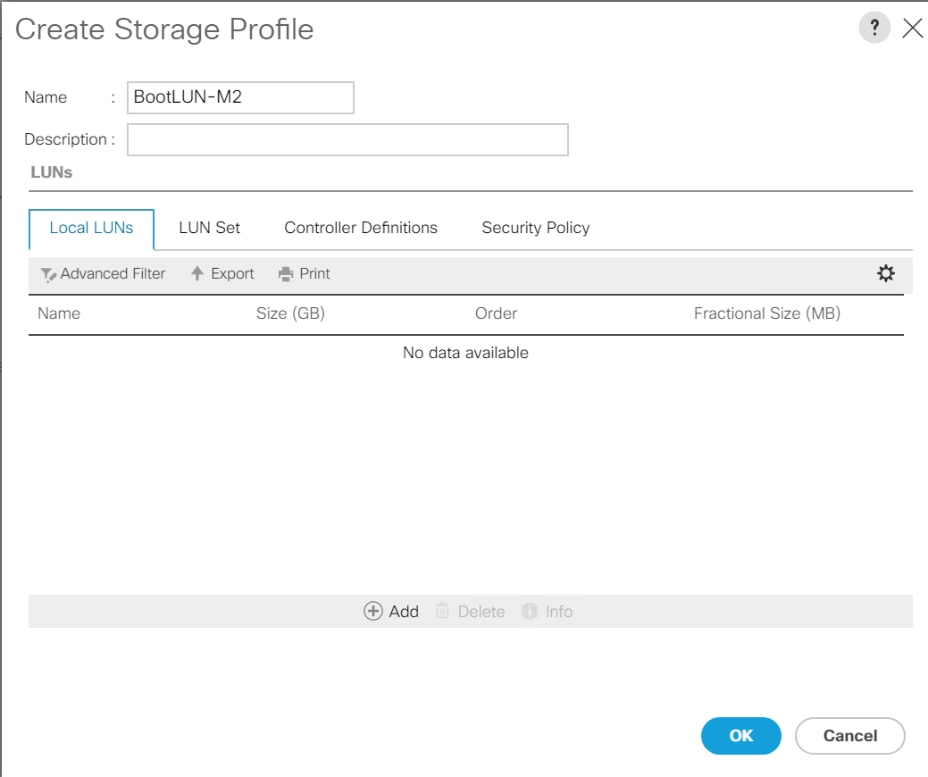

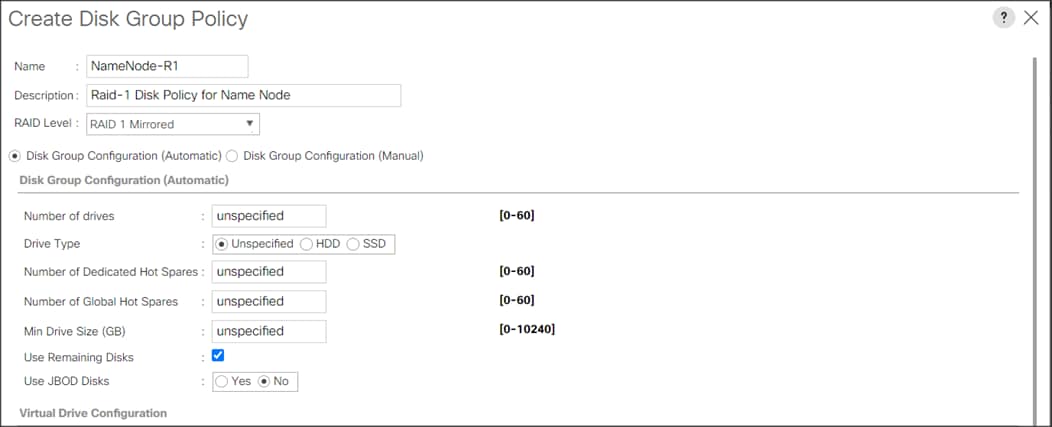

The Cisco UCS C240 M5 Rack Server is equipped with two 2nd Gen Intel Xeon Scalable Family 6230R processors (2 x 26 cores, 2.1 GHz), 384 GB of memory (12 x 32GB @ 2933MHz), a Cisco UCS Virtual Interface Card 1497, 4 x 3.8TB 2.5-inch Enterprise Value 6G SATA SSDs (Intel S4500/S4150), and M.2 with 2 x 960GB SSDs for boot.

Figure 27 illustrates the port connectivity between the Cisco UCS Fabric Interconnect 6454 and Cisco UCS C240 M5 Rack Server with mLOM VIC 1457. Sixteen Cisco UCS C240 M5 servers are installed in this configuration.

For information on physical connectivity and single-wire management, go to: https://www.cisco.com/c/en/us/td/docs/unified_computing/ucs/c-series_integration/ucsm4-0/b_C-Series-Integration_UCSM4-0/b_C-Series-Integration_UCSM4-0_chapter_01.html

Figure 27. Network Connectivity for Cisco UCS C240 M5 Rack Server

With Cisco UCS VIC 1455 and 1457, a port-channel is enabled by default between ports 1 and 2, and another between ports 3 and 4. Up to 14 additional vHBAs or vNICs can be created.

When port-channel mode is set to enabled, the ports on the Cisco Nexus switch should be configured as channel group members.

The Cisco UCS 1455 and 1457 Virtual Interface Cards, in non-port channel mode, provide four vHBAs and four vNICs by default. Up to 10 additional vHBAs or vNICs can be created.

As a best practice, select ports 1 and 3 to connect to a pair of Cisco Nexus switches. Ports 2 and 4 can be added later without any additional changes, if desired.

Switching port-channel mode on or off requires a server reboot.

For detailed configuration through Intersight, see https://www.intersight.com/help/resources/creating_network_policies

Software Distributions and Firmware Versions

The required software distributions and versions are listed in Table 5.

Table 5. Software Distribution and Version

| Layer | Component | Version or Release |
| --- | --- | --- |
| Compute | Cisco UCS C240 M5 | 4.1(3b) |
| Network | Cisco UCS Fabric Interconnect | 4.1(3b) |
| | Cisco UCS VIC1497 Firmware | 5.1(3a) |
| Storage | Cisco 12G Modular RAID Controller | 51.10.0-3612 |
| | SAS Expander | 65.11.20.00 |
| | LSI MegaRAID SAS Driver | 07.710.50.00-rh1 |
| Software | Red Hat Enterprise Linux Server | 7.8 |
| | Cloudera CDP Private Cloud Base | 7.1.5 |
| | Hadoop | 3.1.1 |
| | Spark | 2.4.5 |
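The versions in Table 5 can be kept as a baseline and compared against what an inventory reports. The sketch below is illustrative: the `EXPECTED` mapping mirrors Table 5, while the function name `version_mismatches` and the sample `observed` inventory are hypothetical. How the observed versions are collected is outside the scope of the example.

```python
# Baseline copied from Table 5.
EXPECTED = {
    "Cisco UCS C240 M5": "4.1(3b)",
    "Cisco UCS Fabric Interconnect": "4.1(3b)",
    "Cisco UCS VIC1497 Firmware": "5.1(3a)",
    "Cisco 12G Modular RAID Controller": "51.10.0-3612",
    "SAS Expander": "65.11.20.00",
    "LSI MegaRAID SAS Driver": "07.710.50.00-rh1",
    "Red Hat Enterprise Linux Server": "7.8",
    "Cloudera CDP Private Cloud Base": "7.1.5",
    "Hadoop": "3.1.1",
    "Spark": "2.4.5",
}


def version_mismatches(observed: dict) -> dict:
    """Map each component that differs or is missing to (expected, observed)."""
    return {
        name: (want, observed.get(name))
        for name, want in EXPECTED.items()
        if observed.get(name) != want
    }


# Hypothetical inventory with one component at a different release.
observed = dict(EXPECTED, Spark="2.4.0")
print(version_mismatches(observed))
```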

The latest drivers can be downloaded from the link below: https://software.cisco.com/download/home/283862063/type/283853158/release/4.1(3b)

Deployment Hardware and Software

Cisco Unified Computing System Configuration

This section details the Cisco Unified Computing System (Cisco UCS) configuration that was done as part of the infrastructure build-out. The racking, power, and installation of the Cisco UCS Rack Servers are described in the physical topology section earlier in this document. For initial setup, refer to the Cisco UCS Manager Getting Started Guide. For more information about each step, see the Cisco UCS Manager Configuration Guides.

Configure Cisco UCS Fabric Interconnect

This document assumes you are using Cisco UCS Manager Software version 4.1(3b). To upgrade the Cisco UCS Manager software and the Cisco UCS 6454 Fabric Interconnect software to a higher version of the firmware, see the Cisco UCS Manager Install and Upgrade Guides.

Alternatively, if you intend to clear the existing Cisco UCS Manager configuration, follow these steps:

1. Connect a console cable to the console port on what will become the primary fabric interconnect.

2. If the fabric interconnects were previously deployed and you want to erase them to redeploy, follow these steps:

a. Log in with the existing username and password.

b. #connect local-mgmt

c. #erase config

d. #yes (to confirm)

3. After the fabric interconnect restarts, the out-of-box first-time installation prompt appears. Type console and press Enter.

4. Follow the Initial Configuration steps as outlined in the Cisco UCS Manager Getting Started Guide. When configured, log in to the UCSM IP address through the web interface to perform the base Cisco UCS configuration.

Configure Fabric Interconnects for a Cluster Setup

To configure the Cisco UCS Fabric Interconnects, follow this step:

1. Verify the following physical connections on the fabric interconnect:

● The management Ethernet port (mgmt0) is connected to an external hub, switch, or router.

● The L1 ports on both fabric interconnects are directly connected to each other.

● The L2 ports on both fabric interconnects are directly connected to each other.

Configure Fabric Interconnect A

To configure Fabric Interconnect A, follow these steps:

1. Connect to the console port on the first Cisco UCS 6454 Fabric Interconnect.

2. At the prompt to enter the configuration method, enter console to continue.

3. If asked to either perform a new setup or restore from backup, enter setup to continue.

4. Enter y to continue to set up a new Fabric Interconnect.

5. Enter y to enforce strong passwords.

6. Enter the password for the admin user.

7. Enter the same password again to confirm the password for the admin user.

8. When asked if this fabric interconnect is part of a cluster, answer y to continue.

9. Enter A for the switch fabric.

10. Enter the cluster name for the system name.

11. Enter the Mgmt0 IPv4 address.

12. Enter the Mgmt0 IPv4 netmask.

13. Enter the IPv4 address of the default gateway.

14. Enter the cluster IPv4 address.

15. To configure DNS, answer y.

16. Enter the DNS IPv4 address.

17. Answer y to set up the default domain name.

18. Enter the default domain name.

19. Review the settings that were printed to the console, and if they are correct, answer yes to save the configuration.

20. Wait for the login prompt to make sure the configuration has been saved.
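Before typing the addressing answers at the console, they can be sanity-checked offline. The following is a minimal sketch using Python's standard `ipaddress` module. The function name `check_fi_addressing` and the sample addresses (taken from the documentation ranges) are hypothetical, and the checks assume that the default gateway and the cluster IPv4 address are expected to sit in the Mgmt0 subnet, which is an assumption about a typical deployment rather than a rule stated in this guide.

```python
import ipaddress


def check_fi_addressing(mgmt_ip: str, netmask: str, gateway: str, cluster_ip: str) -> list:
    """Return a list of problems found in the planned Fabric Interconnect answers."""
    # strict=False lets us derive the subnet from a host address plus netmask.
    network = ipaddress.ip_network(f"{mgmt_ip}/{netmask}", strict=False)
    problems = []
    for label, value in (("default gateway", gateway),
                         ("cluster IPv4 address", cluster_ip)):
        if ipaddress.ip_address(value) not in network:
            problems.append(f"{label} {value} is outside {network}")
    if ipaddress.ip_address(cluster_ip) == ipaddress.ip_address(mgmt_ip):
        problems.append("cluster IPv4 address must differ from the Mgmt0 address")
    return problems


# Hypothetical values for illustration only.
print(check_fi_addressing("192.0.2.11", "255.255.255.0", "192.0.2.1", "192.0.2.10"))
```

An empty list means the planned values are mutually consistent under the stated assumptions. It does not confirm that the addresses are free or reachable on the network.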

Configure Fabric Interconnect B

To configure Fabric Interconnect B, follow these steps:

1. Connect to the console port on the second Cisco UCS 6454 Fabric Interconnect.

2. When prompted to enter the configuration method, enter console to continue.

3. The installer detects the presence of the partner Fabric Interconnect and adds this fabric interconnect to the cluster. Enter y to continue the installation.

4. Enter the admin password that was configured for the first Fabric Interconnect.

5. Enter the Mgmt0 IPv4 address.

6. Answer yes to save the configuration.

7. Wait for the login prompt to confirm that the configuration has been saved.

For more information about configuring Cisco UCS 6454 Series Fabric Interconnect, go to: https://www.cisco.com/c/en/us/td/docs/unified_computing/ucs/ucs-manager/GUI-User-Guides/Getting-Started/4-0/b_UCSM_Getting_Started_Guide_4_0.html

To log into Cisco UCS Manager, follow these steps:

1. Open a Web browser and navigate to the Cisco UCS 6454 Fabric Interconnect cluster address.

2. Click the Launch link to download the Cisco UCS Manager software.

3. If prompted to accept security certificates, accept as necessary.

4. When prompted, enter admin for the username and enter the administrative password.

5. Click Login to log into the Cisco UCS Manager.

Upgrade Cisco UCS Manager Software to Version 4.1(3b)

This document assumes you are using Cisco UCS 4.1(3b). Refer to the Cisco UCS 4.1 release documentation to upgrade the Cisco UCS Manager software and the Cisco UCS 6454 Fabric Interconnect software to version 4.1(3b). Also, make sure the Cisco UCS C-Series version 4.1(3b) software bundles are installed on the Fabric Interconnects.

Upgrading Cisco UCS firmware is beyond the scope of this document. For the complete Cisco UCS Install and Upgrade Guides, go to: https://www.cisco.com/c/en/us/support/servers-unified-computing/ucs-manager/products-installation-guides-list.html

To register UCSM with Intersight, follow these steps:

1. Log in to the Cisco UCS Manager WebUI and go to the Admin tab. Select Device Connector from the drop-down list. Click Settings.

2. Enable Device Connector. Select Allow Control in Access Mode.

3. Complete the steps for DNS Configuration, NTP Configuration, and Proxy Configuration as applicable. Click Save.

4. Make sure UCSM can communicate with Intersight.

5. Copy the Device ID and Device Claim Code from the right side of the Device Connector screen.

6. Log in to Intersight.com.

7. Select the Devices tab on the left-side menu; click Claim a New Device.

8. Enter the Device ID and Device Claim Code copied from Cisco UCS Manager. Click Claim.

9. When claimed, UCSM can be launched directly from Intersight.

10. Click Launch UCSM.

For more information, go to: Claiming a Device

For this study, we launched UCSM through Intersight. The UCSM WebUI can also be accessed the traditional way, by entering the IP address of Cisco UCS Manager in a web browser. For more information, go to: https://www.cisco.com/c/en/us/td/docs/unified_computing/ucs/UCS_CVDs/Cisco_UCS_Data_Intelligence_Platform_with_Cloudera_and_CDSW.html

Configure Cisco UCS Manager through Intersight

To configure Cisco UCS Manager, follow these high-level steps:

1. Configure Fabric Interconnects for a Cluster Setup.

2. Set Fabric Interconnects to Fibre Channel End Host Mode.

3. Synchronize Cisco UCS to NTP.

4. Configure Fabric Interconnects for Rack or Chassis and Blade Server Discovery.

5. Configure Global Policies.

6. Configure Server Ports.

7. Configure LAN on Cisco UCS Manager.

8. Configure Ethernet LAN Uplink Ports.

9. Set the QoS system class and jumbo frames on both Cisco UCS Fabric Interconnects.

10. Create Uplink Port Channels to Cisco Nexus Switches.

11. Configure FC SAN Uplink Ports

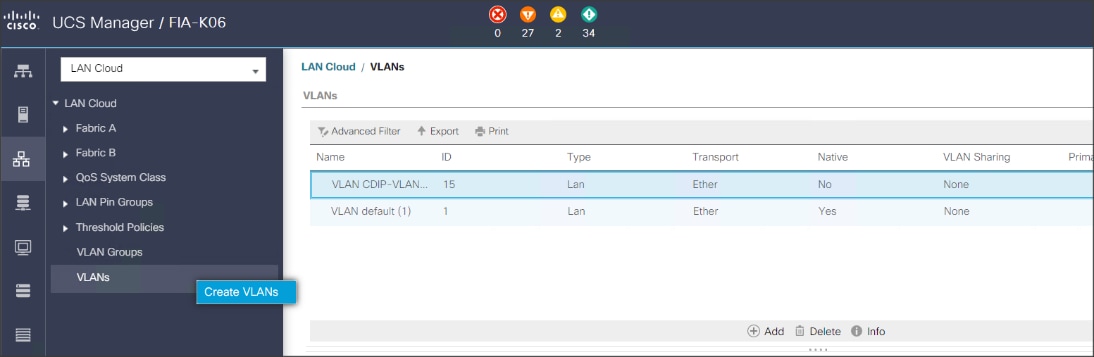

12. Configure VLAN

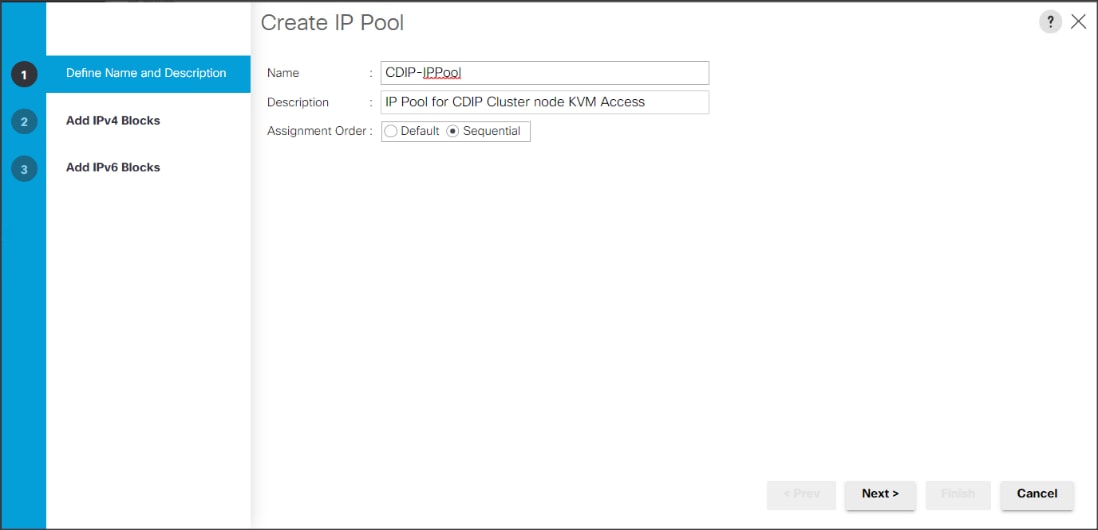

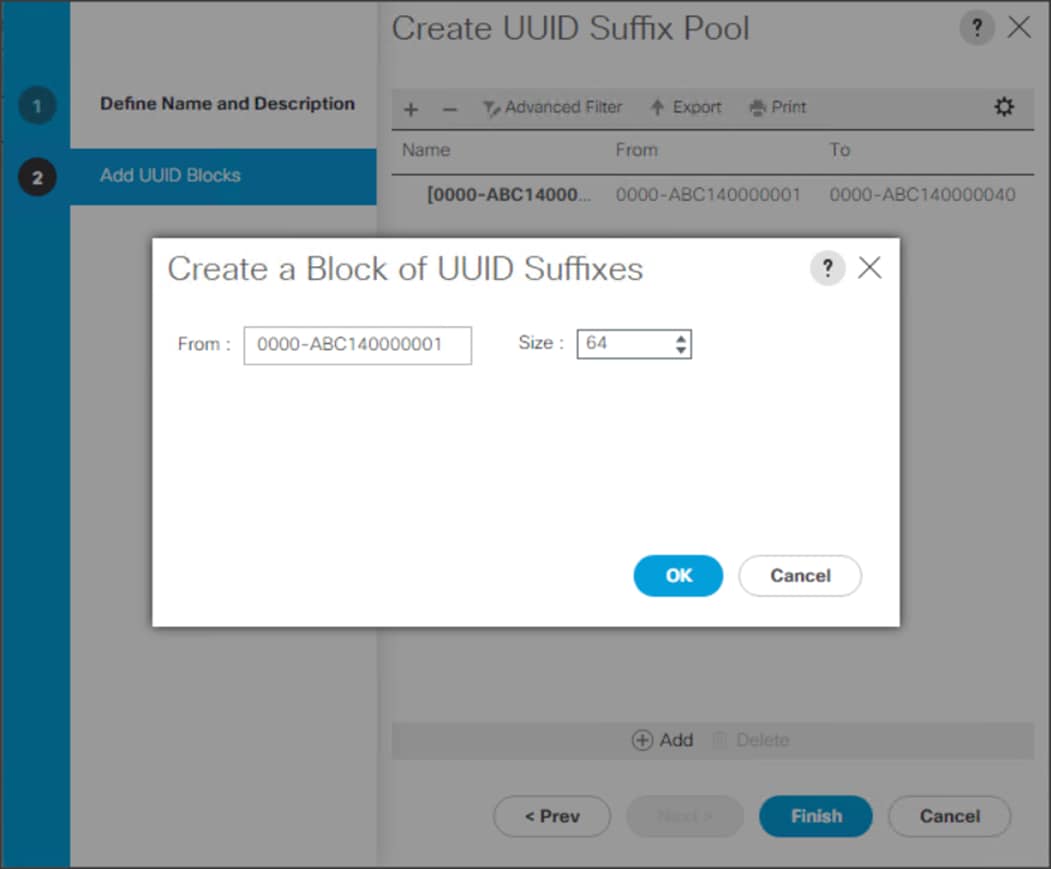

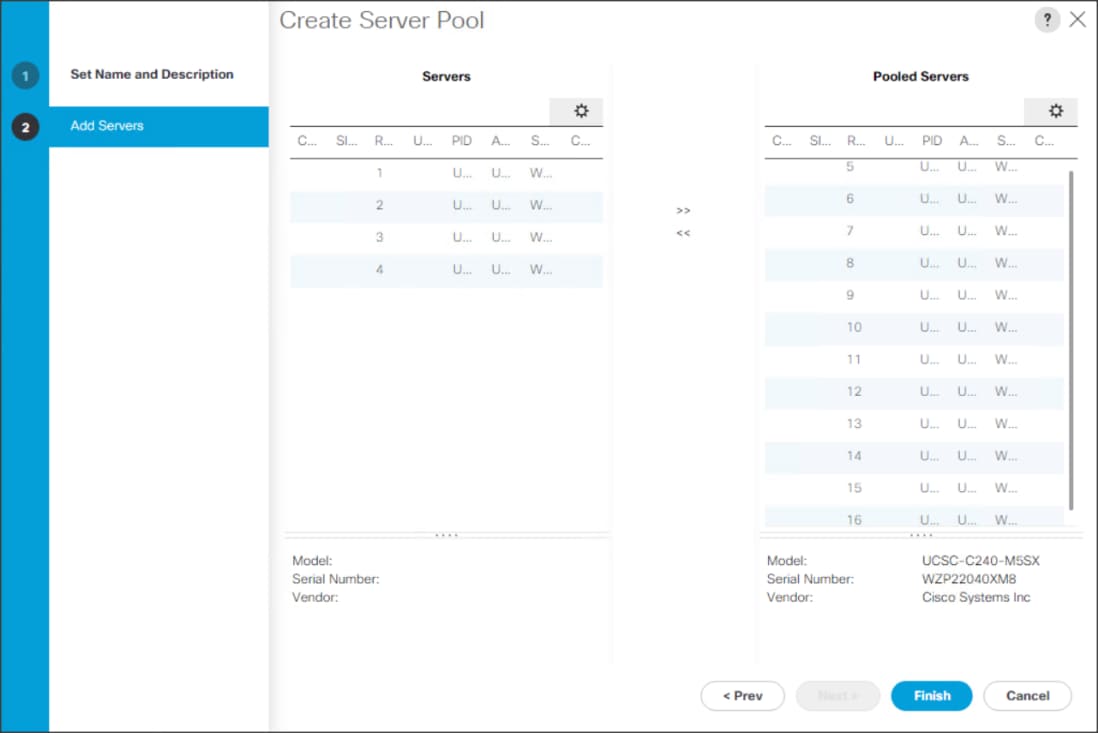

13. Configure IP, UUID, Server, MAC Pool and policy:

a. IP Pool Creation

b. UUID Suffix Pool Creation

c. Server Pool Creation

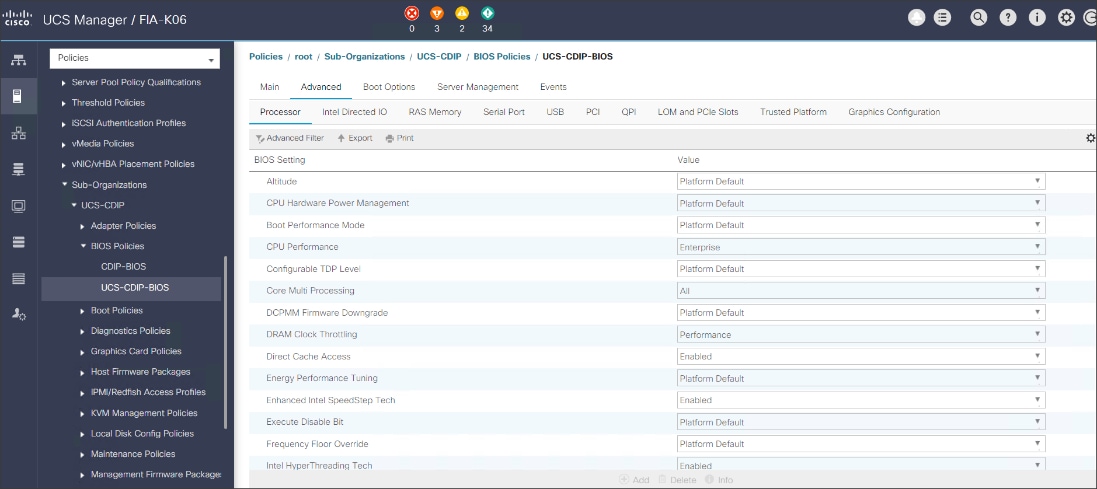

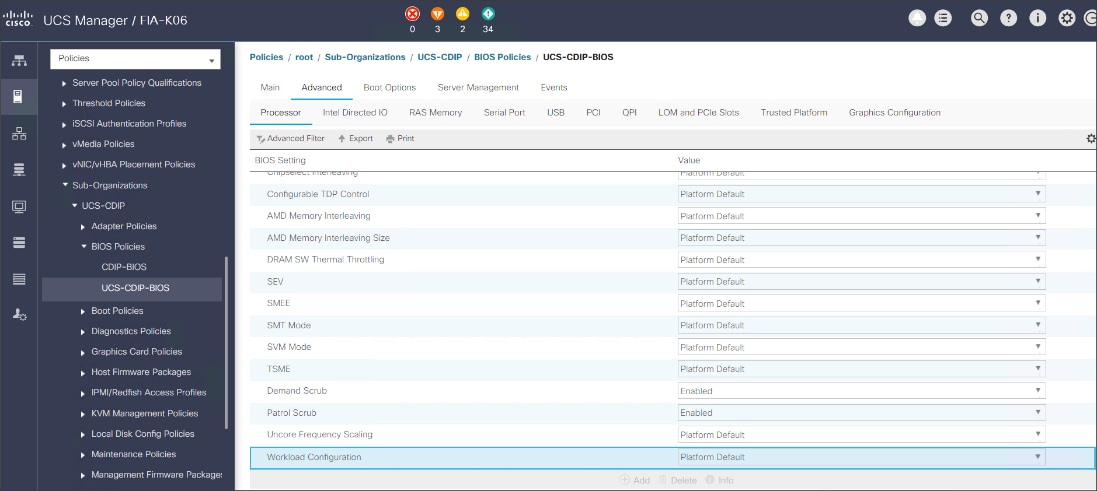

d. Configure Server BIOS Policy.

e. Create Adapter Policy.

f. Configure Default Maintenance Policy.

g. Configure vNIC Template

h. Create Server Boot Policy

Details for each step are discussed in the following sections.

Synchronize Cisco UCSM to NTP

To synchronize the Cisco UCS environment to the NTP server, follow these steps:

1. In Cisco UCS Manager, in the navigation pane, click the Admin tab.

2. Select All > Time zone Management.

3. In the Properties pane, select the appropriate time zone in the Time zone menu.

4. Click Save Changes and then click OK.

5. Click Add NTP Server.

6. Enter the NTP server IP address and click OK.

7. Click OK to finish.

8. Click Save Changes.

Figure 28. Synchronize Cisco UCS Manager to NTP

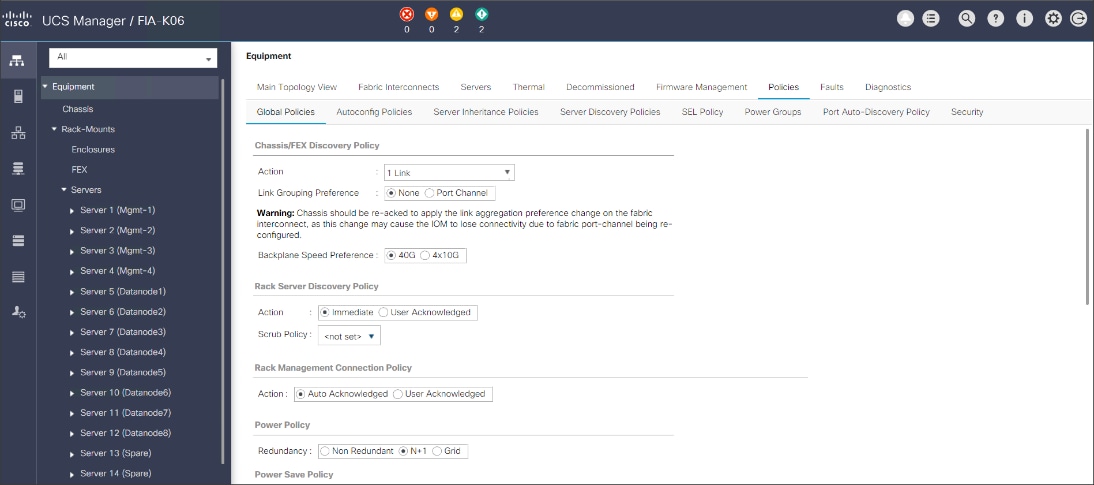

Configure Global Policies

The rack server and chassis discovery policies determine how the system reacts when you add a new rack server or chassis. We recommend using the platform max value as shown. Using platform max helps ensure that Cisco UCS Manager uses the maximum number of IOM uplinks available.

To configure the global policies, follow this step:

1. In Cisco UCS Manager, go to Equipment > Policies (right pane) > Global Policies and configure the global policies.

Figure 29. Global Policies in UCSM

Configure Server Ports

Configure Server Ports to initiate Chassis and Blade discovery. To configure server ports, follow these steps:

1. Go to Equipment > Fabric Interconnects > Fabric Interconnect A > Fixed Module > Ethernet Ports.

2. Select the ports (ports 1-28 in this solution) that are connected to the Cisco UCS VIC 1457 on the Cisco UCS C240 M5 rack servers.

3. Right-click and select Configure as Server Port.

Figure 30. Configure Server Port on Cisco UCS Manager Fabric Interconnect for Server/Chassis Discovery

Configure Uplink Ports

Configure Network Ports to connect to the data center network switch.

In our solution study, we connected to a Cisco Nexus 9000 series switch.

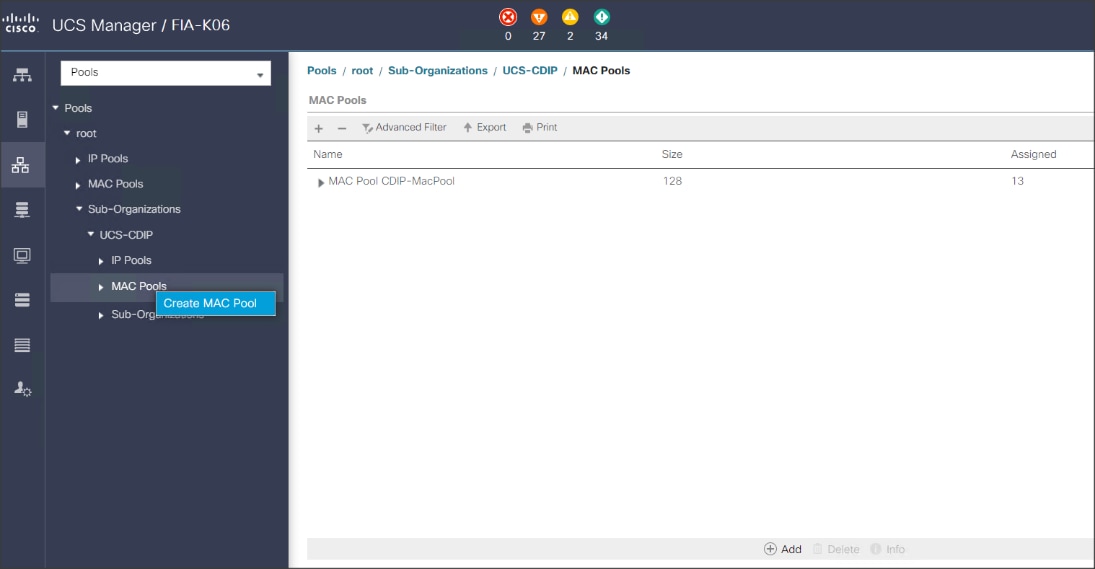

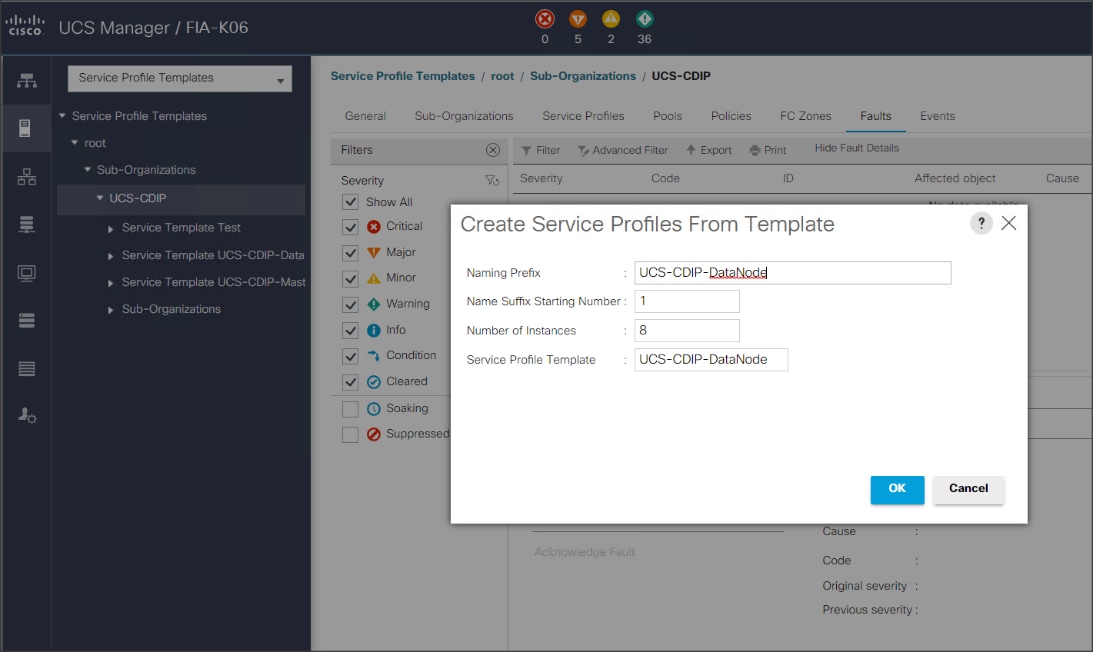

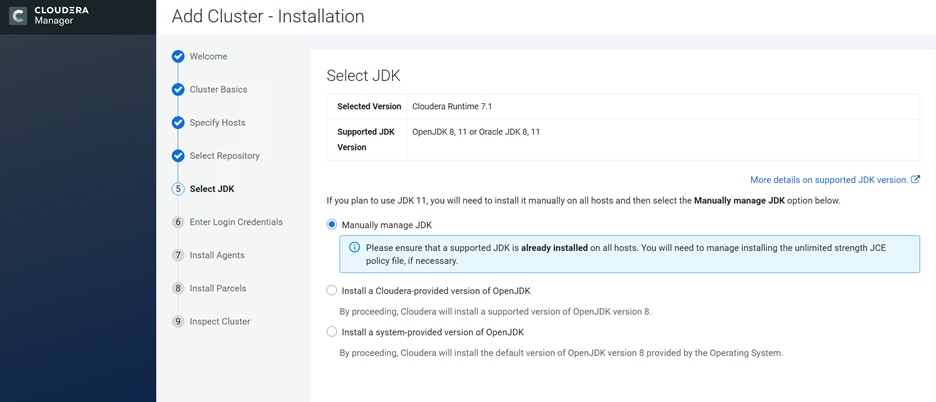
