Cisco Prime Service Catalog 12.1 Reporting Guide

Updated: September 18, 2017

Chapter: Monitoring and Operating Cisco Prime Service Catalog Reports

Monitoring and Operating Cisco Prime Service Catalog Reports

This chapter contains the following topics:

- Configuring Cognos Memory Usage

- Refreshing the Standard Reports Package

- Refreshing the Data Mart

- Understanding the Process Flow for Custom Reports Package

- Migrating Custom Reports

- Customizing the Cognos Framework Manager Data Model

Configuring Cognos Memory Usage

You configure Cognos memory usage by modifying the heap size for Cognos. To modify the heap size for the Cognos server:

Setting the Timeout Interval on IBM Cognos Server

The IBM Cognos session timeout setting should match that of Service Catalog to allow Single Sign-On to work seamlessly.

To set the timeout interval:

Refreshing the Standard Reports Package

All tables in the reporting database that support the Standard Reports Package are truncated and completely refreshed in every ETL cycle. Report contents are refreshed and available for viewing as soon as the ETL cycle has completed. The prebuilt reports cannot be run while the ETL is in progress.

Refreshing the Data Mart

Several Cognos and Service Catalog components are required to refresh the database contents of the Custom Reports Package, which provides the business view of the data mart. The data mart must be loaded with data from the transactional systems on a regular basis in an Extract-Transform-Load (ETL) process. That is, data is extracted from the transactional system; transformed into a format that is optimized for reporting (rather than for online transactions); and then loaded into the data mart.

The ETL process is incremental. Only data that has been changed or created since the last time the data mart was refreshed is processed in the next ETL cycle. Users can continue to access the data mart and run reports while the refresh process is running. However, the response time for some reports may be adversely affected. Running the ETL process has little or no impact on response times in the transactional database.

The refresh process is typically scheduled to run automatically at regular intervals. We recommend that the data mart be refreshed every 24 hours, ideally at a period of limited user activity.

The Service Catalog ETL processes for all packages use Cognos Data Manager Runtime components to generate executables that are deployed onto the reporting server as part of the application installation procedure. These scripts use Cognos SQL to read the data from the OLTP source, allowing for a greater degree of portability of the catalog between heterogeneous database environments. Oracle-specific or SQL Server-specific code is abstracted to views that are created in the OLTP source. The Data Manager scripts also include User Defined Functions (UDFs) to handle the transformation of specially formatted strings stored within the Service Catalog database (which support the internationalization of the software). The UDFs also cleanse HTML tags from the data, if they have been included in dictionary captions or field labels.
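To illustrate the kind of cleansing described here, the following Python sketch strips HTML tags from a caption. It is not the Data Manager UDF itself; the function name `strip_html_tags` and the sample caption are hypothetical, and the sketch relies only on Python's standard `html.parser` module.

```python
from html.parser import HTMLParser


class _TextCollector(HTMLParser):
    """Collects the text nodes of an HTML fragment and ignores the tags."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.parts = []

    def handle_data(self, data):
        # Called for text between tags; character references are already decoded.
        self.parts.append(data)


def strip_html_tags(caption):
    """Return the caption with HTML tags removed (hypothetical helper)."""
    collector = _TextCollector()
    collector.feed(caption)
    collector.close()
    return "".join(collector.parts)


# Example: a field label that was entered with markup.
clean_label = strip_html_tags("<b>Requested</b> For")
```

A parser is used instead of a regular expression so that character references such as `&amp;` are decoded along with the tag removal.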

A custom program is required to extract service form field-level data from the Service Catalog requisition record (such data is stored in a proprietary and compressed format, to optimize OLTP performance) into a standard relational format. This program runs on the Service Catalog application server.

Another custom program is required to create and maintain the Custom Reports Data Project. This script uses the Cognos Framework Manager SDK to dynamically create the Custom Reports Project, based on the services and dictionaries each customer site has chosen as Reportable. This dynamic structure and content is added to standard data mart facts and dimensions to produce the data mart available in the Custom Reports Project.

The generated executables should be collated in a job stream for batch execution. The exact structure of the job stream will vary depending on which Reporting components are installed and configured: prebuilt reports and KPIs; and the custom reports data mart.

We recommend starting a reporting installation with a once-daily refresh of the data mart, typically scheduled during slow times for transactional processing. However, the refresh of the data mart may be scheduled concurrently with online usage of Service Catalog or multiple times per day. Performance for online transactional users would be affected only insofar as the database server load is affected. Some reporting users may notice a brief drop in performance as indexes are rebuilt; however, the effects are generally transient.

Custom Reports Package

All tables that support the data mart available for Advanced Reporting are incrementally refreshed in every ETL cycle. Therefore, in principle, the data mart remains online during the ETL cycle. However, because of the increased database activity and because indexes on the tables are temporarily unavailable, performance may be adversely affected.

Understanding the Process Flow for Custom Reports Package

The process flow used to produce the Custom Reports Package uses the components described above. The most substantive difference is the use of additional Cognos components and custom Cisco-provided code to handle the inclusion of dynamically defined form data (in the form of reportable services and dictionaries) in the data mart.

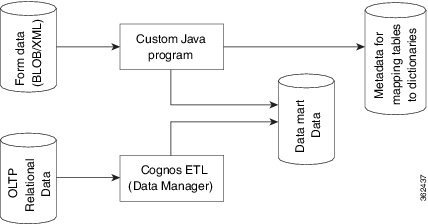

Data is loaded into the custom reports package by means of both a Cognos (Data Manager) ETL script and a custom Java program, as shown in the diagram above.

Form Data Custom ETL

The custom Java program not only loads form data from reportable dictionaries and services into corresponding dimensions in the data mart but also tracks which dictionaries and services have been loaded. On its initial run, this program loads all data in the transactional database into the analytical database that supports the data mart. On subsequent cycles it loads data incrementally; that is, only new or modified data in the transactional database is inserted or updated in the data mart.

In addition to loading the data, this program also checks for new reportable dictionaries and services and updates the list of such objects. This information, labeled as “Metadata for mapping...” in the diagram above, is then used by another custom program. This program uses the Framework Manager API to construct the business view of the data that is available to users in Report Designer and Ad-Hoc Reporting, so that the names assigned to reportable dictionaries and their attributes are accurately displayed in the reporting tools.
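The check for newly reportable objects can be pictured as a set difference between the objects already tracked and the objects currently marked as reportable. The Python sketch below is illustrative only: the actual program is written in Java, and the function name and the dictionary names used here are hypothetical.

```python
def register_new_reportables(known, current):
    """Add newly reportable object names to `known` and return them, sorted.

    `known` is the set of names tracked from earlier cycles; `current` is the
    list of names marked as reportable in this cycle (both hypothetical).
    """
    new_objects = sorted(set(current) - known)
    known.update(new_objects)
    return new_objects


# Example: one dictionary was already tracked, one became reportable since.
tracked = {"Customer_Information"}
added = register_new_reportables(
    tracked, ["Customer_Information", "Laptop_Options"]
)
```

Only the names returned by the function would need new entries in the mapping metadata; objects that were already tracked are left untouched.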

Data Manager ETL

The Data Manager ETL loads statically defined (dimension/fact) data from the OLTP database into the corresponding dimensions and facts in the data mart. The load process is incremental. When this process is run for the first time, it loads all available data from the transactional database. On subsequent runs it loads only data that has been inserted or modified since the last ETL run.

All source tables in the OLTP database have time stamp columns (CreatedOn/ModifiedOn). These columns are updated whenever a new record is inserted or an existing record is modified. The ETL process captures new/modified data by comparing the time stamp columns in the source tables against the date and time the ETL process was last run.
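The time stamp comparison described above can be sketched in Python with an in-memory SQLite database standing in for the OLTP source. The `CreatedOn` and `ModifiedOn` column names come from the text; the `Requisition` table layout, the sample rows, and both function names are assumptions made for illustration, and the real extraction is performed by the generated Data Manager executables.

```python
import sqlite3


def build_demo_source():
    """Create an in-memory stand-in for one OLTP table (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE Requisition ("
        "Id INTEGER PRIMARY KEY, Status TEXT, CreatedOn TEXT, ModifiedOn TEXT)"
    )
    conn.executemany(
        "INSERT INTO Requisition VALUES (?, ?, ?, ?)",
        [
            (1, "Closed", "2017-09-01 08:00:00", "2017-09-01 09:00:00"),
            (2, "Ongoing", "2017-09-01 08:30:00", "2017-09-17 10:00:00"),
            (3, "Ordered", "2017-09-17 11:00:00", "2017-09-17 11:00:00"),
        ],
    )
    return conn


def select_changed_ids(conn, last_run):
    """Return the Ids of rows created or modified after the previous ETL run."""
    # ISO-8601 text time stamps compare correctly as plain strings.
    rows = conn.execute(
        "SELECT Id FROM Requisition "
        "WHERE CreatedOn > ? OR ModifiedOn > ? ORDER BY Id",
        (last_run, last_run),
    )
    return [row[0] for row in rows]
```

With a last-run time of `2017-09-17 00:00:00`, only rows 2 and 3 qualify: row 2 was modified after that time and row 3 was created after it. Passing a time earlier than every time stamp selects all rows, which corresponds to the initial full load.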

The ETL process has been optimized to handle both inserting new rows and updating existing rows in the data mart. For example, when a service request is submitted, the request and all its tasks would be created in the data mart. When the tasks are subsequently updated, the existing task fact is updated to reflect the new information.
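The insert-or-update behavior can be sketched as follows, again using SQLite purely as a stand-in for the data mart. The `TaskFact` table, its columns, and the function names are hypothetical; the sketch shows the general update-then-insert pattern, not the logic of the shipped ETL.

```python
import sqlite3


def create_task_fact_table(conn):
    """Create a minimal, hypothetical task fact table."""
    conn.execute(
        "CREATE TABLE TaskFact ("
        "TaskId INTEGER PRIMARY KEY, Status TEXT, ModifiedOn TEXT)"
    )


def upsert_task_fact(conn, task_id, status, modified_on):
    """Update the task fact if it already exists; otherwise insert it."""
    cur = conn.execute(
        "UPDATE TaskFact SET Status = ?, ModifiedOn = ? WHERE TaskId = ?",
        (status, modified_on, task_id),
    )
    if cur.rowcount == 0:
        # No existing row matched, so this task is new to the data mart.
        conn.execute(
            "INSERT INTO TaskFact (TaskId, Status, ModifiedOn) VALUES (?, ?, ?)",
            (task_id, status, modified_on),
        )
```

Calling `upsert_task_fact` once when the request is submitted and again when the task is updated leaves a single row for that task, holding the most recent status.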

The ETL process runs as follows:

- Select Data from the Transactional Database

- Insert Incremental Data into Staging Area Tables

- Construct Work Area Tables

- Load Data into the Dimension/Fact Tables

Select Data from the Transactional Database

Select new or changed data from the OLTP database, based on extraction views which include the columns required in the data mart and which filter by comparing the time stamps in the source data to the date and time the ETL process was last run.

Insert Incremental Data into Staging Area Tables

Staging tables in the OLAP database (indicated by the prefix STG) temporarily hold the new/changed data from the OLTP database. Staging tables have a one-to-one correspondence with OLTP tables. These tables are truncated on every ETL run, so they contain only new/modified data.
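The truncate-then-load behavior of a staging table can be sketched as below. The `STG` prefix comes from the text; the table name `STG_Requisition`, its columns, and the function names are hypothetical, and SQLite is used only so that the sketch is self-contained.

```python
import sqlite3


def create_staging_table(conn):
    """Create a minimal, hypothetical staging table."""
    conn.execute("CREATE TABLE STG_Requisition (Id INTEGER, Status TEXT)")


def reload_staging(conn, changed_rows):
    """Empty the staging table, then load only this cycle's new/modified rows."""
    # SQLite has no TRUNCATE statement; DELETE without WHERE empties the table.
    conn.execute("DELETE FROM STG_Requisition")
    conn.executemany(
        "INSERT INTO STG_Requisition (Id, Status) VALUES (?, ?)",
        changed_rows,
    )
```

Because the table is emptied at the start of each call, rows staged in an earlier cycle never carry over into the next one.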

Construct Work Area Tables

Work area tables in the OLAP database (indicated by the prefix WRK) hold data extracted and consolidated from previous ETL runs. Work area tables are used only for transforming data for dimensions whose data is derived from multiple tables in the transactional database. The mapping of source to target tables is given in Modifying Form Data Reporting Configuration.

Load Data into the Dimension/Fact Tables

The ETL uses both staging and work tables to insert new/modified data into the appropriate dimension/fact tables in the data mart. Business views are created on top of these tables, and these views are exposed as query subjects in the package.

Migrating Custom Reports

When you upgrade the Prime Service Catalog Reporting solution from Cognos 8.4.0/8.4.1 to Cognos version 10.2.1, the system automatically migrates all existing report specifications and customized reports in My Folders and Public Folders.

Alternatively, if you have administrative privileges and choose to perform the upgrade manually, you can use the reporting solution wizard to migrate all reports.

In either case, we recommend that you take a backup of all existing reports during upgrade.

For more information about performing the upgrade, see the Cisco Prime Service Catalog Installation and Upgrade Guide.

Customizing the Cognos Framework Manager Data Model

Service Catalog includes only runtime licenses for the Framework Manager and Data Manager tools used to populate the data mart. Service Catalog users who have Cognos enterprise development licenses may wish to customize the data mart contents, including additional client-specific data.

The key to a successful customization is taking into account that the business view of the data, configured via Framework Manager, includes both a static and a dynamic component. The static component, specifying the universal facts and dimensions, is stored in the file

<App_Home>\cognos\Reports\CustomReportsDataModel\CustomReportsDataModel.cpf

on the reporting server. The ETL uses that file as the basis for the business view, then generates additional DictionaryData and ServiceData dimensions, depending on which objects have been marked as reportable. Client customizations to the static component may be applied using IBM Cognos Framework Manager. Any such customizations are incorporated into the new business view, generated via the next ETL cycle. Such customizations will have to be reapplied after any application upgrade.
