Deploying Applications in Clustered Mode

Refer to the following sections for deploying applications in cluster mode:

- For the requirements of a clustered setup, see Requirements for Cisco DCNM Clustered Mode.

- To install a DCNM compute, see Installing a DCNM Compute.

- To ensure that the vSwitch networking policies are properly configured, see Networking Policies for OVA Installation - Clustered mode.

- To form the compute cluster, see Enabling the Compute Cluster.

- After the cluster is formed, the compute nodes appear as Discovered in the Applications > Compute tab. A user with admin privileges on DCNM must then add the compute nodes. To add compute nodes from the UI, see Adding Computes into the Cluster Mode.

- The compute cluster is now ready for applications to be deployed. To upload an application, see Add a new application to DCNM. To start, stop, or delete an application, see Stop and Delete Applications.

For additional compute/application related operations, see Application Framework User Interface.

For advanced compute and application health monitoring, see Watch Tower.

Cisco DCNM Cluster Mode

Starting with Cisco DCNM 11.1, a DCNM HA setup (active + standby) is validated for up to 80 switches with Endpoint Locator, Virtual Machine Manager, and config compliance enabled. If a network managed by a single DCNM instance exceeds 80 switches with these features enabled (the maximum qualified scale is 256 switches), we recommend adding 3 compute nodes. This is called clustered mode in Cisco DCNM.
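The sizing rule above can be expressed as a small planning helper. This is a hypothetical Python sketch, not a DCNM feature: the thresholds (80 and 256 switches, 3 compute nodes) come from this section, while rejecting counts above the qualified scale and returning 0 when none of the listed features is enabled are assumptions.

```python
# Hypothetical planning helper; not part of Cisco DCNM.
HA_VALIDATED_SWITCHES = 80    # validated on a DCNM HA pair without computes
MAX_QUALIFIED_SWITCHES = 256  # maximum qualified scale
CLUSTER_COMPUTE_NODES = 3     # compute nodes recommended for clustered mode


def recommended_compute_nodes(switch_count: int, uses_scale_features: bool) -> int:
    """Return the number of compute nodes to add to one DCNM HA instance.

    uses_scale_features is True when Endpoint Locator, Virtual Machine
    Manager, or config compliance is enabled on the instance.
    """
    if switch_count < 0:
        raise ValueError("switch_count must be non-negative")
    if switch_count > MAX_QUALIFIED_SWITCHES:
        # Assumption: treat anything above the qualified scale as unsupported.
        raise ValueError(f"{switch_count} switches exceeds the qualified "
                         f"scale of {MAX_QUALIFIED_SWITCHES}")
    if uses_scale_features and switch_count > HA_VALIDATED_SWITCHES:
        return CLUSTER_COMPUTE_NODES
    # Assumption: without these features, no compute nodes are required.
    return 0


print(recommended_compute_nodes(60, True))   # 0
print(recommended_compute_nodes(200, True))  # 3
```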

Compute nodes are scale-out application hosting nodes that run resource-intensive services for larger fabrics. When compute nodes are added, all containerized services, including Config Compliance, Endpoint Locator, and Virtual Machine Manager, run only on these nodes. In clustered mode, the Elasticsearch time-series database for these features also runs on the compute nodes.

DCNM core functions, however, run only on the DCNM HA nodes. Adding compute nodes beyond 80 switches builds a scale-out model for DCNM and its related services.

From Release 11.2(1), you can configure an IPv6 address for network management on compute clusters. However, DCNM does not support IPv6 addresses for containers; containers must connect to DCNM using IPv4 addresses only.
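Because IPv6 is accepted only for network management, a pre-deployment check can reject IPv6 addresses in any container addressing. The following Python sketch uses the standard `ipaddress` module; the `validate_addressing` function and its inputs are hypothetical, not a DCNM interface.

```python
import ipaddress


def validate_addressing(management_ip: str, container_ips: list[str]) -> None:
    """Hypothetical pre-check: IPv6 is allowed for management only."""
    # Management may be IPv4 or IPv6 (Release 11.2(1) and later).
    ipaddress.ip_address(management_ip)  # raises ValueError if malformed
    for addr in container_ips:
        # Containers must reach DCNM over IPv4.
        if ipaddress.ip_address(addr).version != 4:
            raise ValueError(f"container address {addr} must be IPv4")


validate_addressing("2001:db8::10", ["192.0.2.10", "192.0.2.11"])  # passes
```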

Requirements for Cisco DCNM Clustered Mode

Note: We recommend that you install Cisco DCNM in Native HA mode.

Cisco DCNM LAN Deployment Without Network Insights (NI)

| Node | Deployment Mode | CPU | Memory | Storage | Network |
|---|---|---|---|---|---|
| DCNM | OVA/ISO | 16 vCPUs | 32 GB | 500 GB HDD | 3xNIC |
| Computes | NA | — | — | — | — |

| Node | Deployment Mode | CPU | Memory | Storage | Network |
|---|---|---|---|---|---|
| DCNM | OVA/ISO | 16 vCPUs | 32 GB | 500 GB HDD | 3xNIC |
| Computes x 3 | OVA/ISO | 16 vCPUs | 64 GB | 500 GB HDD | 3xNIC |

Cisco DCNM LAN Deployment With NIA and NIR Software Telemetry

Note: We recommend that you install Cisco DCNM in Native HA mode.

| Node | Deployment Mode | CPU | Memory | Storage | Network |
|---|---|---|---|---|---|
| DCNM | OVA/ISO | 16 vCPUs | 32 GB | 500 GB HDD | 3xNIC |
| Computes x 3 | OVA/ISO | 16 vCPUs | 64 GB | 500 GB HDD | 3xNIC |

| Node | Deployment Mode | CPU | Memory | Storage | Network |
|---|---|---|---|---|---|
| DCNM | OVA/ISO | 16 vCPUs | 32 GB | 500 GB HDD | 3xNIC |
| Computes x 3 | ISO | 32 vCPUs | 256 GB | 2.4 TB HDD | 3xNIC¹ |

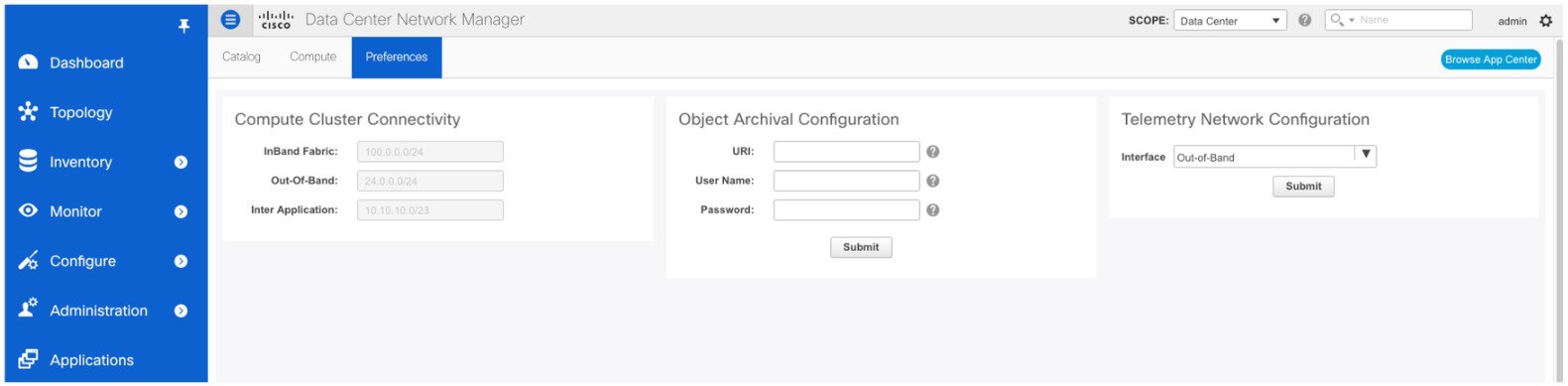
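As a minimal sketch, the Python below checks a candidate node against the minimums in the "Computes x 3" row of the deployment without NI (16 vCPUs, 64 GB memory, 500 GB storage, 3 NICs). The `NodeSpec` type and `shortfalls` helper are hypothetical, not part of DCNM; substitute the row that matches your deployment (for example, 2.4 TB expressed as 2400 GB).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    vcpus: int
    memory_gb: int
    storage_gb: int
    nics: int


# Values copied from the "Computes x 3" row of the table without NI.
COMPUTE_MIN = NodeSpec(vcpus=16, memory_gb=64, storage_gb=500, nics=3)


def shortfalls(node: NodeSpec, minimum: NodeSpec = COMPUTE_MIN) -> list[str]:
    """List every resource where node falls below minimum."""
    problems = []
    for field in ("vcpus", "memory_gb", "storage_gb", "nics"):
        have, need = getattr(node, field), getattr(minimum, field)
        if have < need:
            problems.append(f"{field}: have {have}, need {need}")
    return problems


print(shortfalls(NodeSpec(vcpus=16, memory_gb=32, storage_gb=500, nics=3)))
# ['memory_gb: have 32, need 64']
```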

Subnet Requirements

In general, Eth0 of the Cisco DCNM server is used for management, Eth1 connects Cisco DCNM out-of-band to switch management, and Eth2 provides in-band front-panel connectivity for Cisco DCNM. The same scheme applies to compute nodes. Some services in clustered mode have additional requirements: they need a switch to reach into Cisco DCNM, for example, the Route Reflector connection to Endpoint Locator, or switches streaming telemetry into the Telemetry receiver service of an application. The IP address that the switch connects to must remain sticky across all failure scenarios. For this purpose, you must provide Cisco DCNM with an IP pool for both the Out-of-Band and In-Band subnets when you configure the cluster.
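Before entering the Out-of-Band and In-Band pools, you can sanity-check them against their subnets. The Python sketch below, using the standard `ipaddress` module, verifies that every pool address lies inside the given subnet and does not collide with an address already assigned to a DCNM or compute interface. The function name, its parameters, and the documentation-range addresses are hypothetical.

```python
import ipaddress


def check_pool(subnet: str, pool: list[str], reserved: list[str]) -> None:
    """Hypothetical pre-check for an Out-of-Band or In-Band IP pool.

    subnet:   the Eth1 (OOB) or Eth2 (In-Band) subnet, e.g. "192.0.2.0/24"
    pool:     addresses handed to DCNM for sticky service IPs
    reserved: addresses already used by DCNM or compute interfaces
    """
    net = ipaddress.ip_network(subnet)
    used = {ipaddress.ip_address(a) for a in reserved}
    for addr in pool:
        ip = ipaddress.ip_address(addr)
        if ip not in net:
            raise ValueError(f"{addr} is outside {subnet}")
        if ip in used:
            raise ValueError(f"{addr} is already assigned to an interface")


check_pool("192.0.2.0/24", ["192.0.2.200", "192.0.2.201"], ["192.0.2.10"])
```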

Telemetry NTP Requirements

For telemetry to work correctly, the Cisco Nexus 9000 switches and Cisco DCNM must be time-synchronized (NTP is recommended). The DCNM telemetry manager performs the required NTP configuration as part of enablement. If you need to change the NTP server configuration on the switches manually, ensure that DCNM and the switches always remain time-synchronized. To set up the telemetry network configuration, see .

How Do the Compute Nodes Communicate with DCNM Nodes?

The compute nodes communicate with DCNM nodes using APIs.

How Do the Compute Nodes Communicate with Each Other?

The compute nodes communicate with each other using Eth0 IP addresses. When in clustered mode, it is recommended to have at least 16 IP addresses in the pool for the containers.
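To confirm that a planned container pool meets the recommendation of at least 16 addresses, you can count an inclusive start-end range with Python's standard `ipaddress` module. The range format, the `pool_size` helper, and the example addresses are hypothetical.

```python
import ipaddress

MIN_CONTAINER_POOL = 16  # recommendation from this section


def pool_size(first: str, last: str) -> int:
    """Count addresses in an inclusive first-last IPv4 range."""
    start, end = ipaddress.IPv4Address(first), ipaddress.IPv4Address(last)
    if end < start:
        raise ValueError("range end precedes range start")
    return int(end) - int(start) + 1


size = pool_size("192.0.2.100", "192.0.2.115")
print(size, size >= MIN_CONTAINER_POOL)  # 16 True
```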

Installing a DCNM Compute

Note: With Native HA installations, ensure that the HA status is OK before DCNM is converted to cluster mode.

A Cisco DCNM Compute can be installed using the ISO or OVA of a regular Cisco DCNM image: deploy it directly on bare metal using the ISO, or as a VM using the OVA. After you deploy Cisco DCNM, choose Compute as the install mode in the DCNM web installer for Cisco DCNM Compute nodes. A Compute VM does not run the DCNM processes or the PostgreSQL database; it runs only the minimum set of services required to provision and monitor applications.

If you have a Cisco DCNM Classic LAN deployment, refer to Installing Cisco DCNM Compute Node in the Cisco DCNM Installation and Upgrade Guide for Classic LAN Deployment, Release 11.2(1).

If you have a Cisco DCNM LAN Fabric deployment, refer to Installing Cisco DCNM Compute Node in the Cisco DCNM Installation and Upgrade Guide for LAN Fabric Deployment, Release 11.2(1).

Note: Compute nodes and clustered mode are supported only on these two Cisco DCNM deployments (Classic LAN and LAN Fabric).

Networking Policies for OVA Installation - Clustered mode

For each compute OVA installation, ensure the following networking policies are applied for the corresponding vSwitches of the host:

- Log in to vCenter.

- Click the host where the compute OVA is running.

- Click Configuration > Networking.

- Right-click the port groups corresponding to eth1 and eth2, and select Edit Settings.

  The VM Network - Edit Settings window is displayed.

- In Security settings, for Promiscuous mode, select Accepted.

- If a DVS port group is attached to the compute VM, configure these settings in vCenter > Networking > Port-Group. If a standard vSwitch port group is used, configure these settings in Configuration > Networking > port group on each host that runs a Compute.

Figure 1. Security settings for VSwitch Port-Group

Figure 2. Security settings for DVSwitch Port-group

Note: Ensure that you repeat this procedure on all the hosts where a Compute OVA is running.
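Because the setting must be correct on every host, it can help to audit all hosts at once. The Python sketch below only shows the rule being checked: given a per-host mapping of port group to its Promiscuous mode value, it reports every eth1/eth2 port group not set to Accepted. The inventory format and the host and port-group names are hypothetical; collecting the data from vCenter is outside this sketch.

```python
def port_groups_to_fix(inventory: dict[str, dict[str, str]],
                       required: tuple[str, ...]) -> dict[str, list[str]]:
    """Return, per host, the required port groups whose Promiscuous mode
    is not set to Accepted (missing entries are reported too)."""
    to_fix = {}
    for host, promiscuous in inventory.items():
        bad = [pg for pg in required if promiscuous.get(pg) != "Accepted"]
        if bad:
            to_fix[host] = bad
    return to_fix


# Hypothetical host and port-group names.
inventory = {
    "esxi-01": {"oob-pg": "Accepted", "inband-pg": "Rejected"},
    "esxi-02": {"oob-pg": "Accepted", "inband-pg": "Accepted"},
}
print(port_groups_to_fix(inventory, ("oob-pg", "inband-pg")))
# {'esxi-01': ['inband-pg']}
```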

Adding Computes into the Cluster Mode

Compute is an additional installation mode available in Cisco DCNM Release 11.2(1). It is supported with both small and large installations. Cisco DCNM supports a maximum of three Computes.

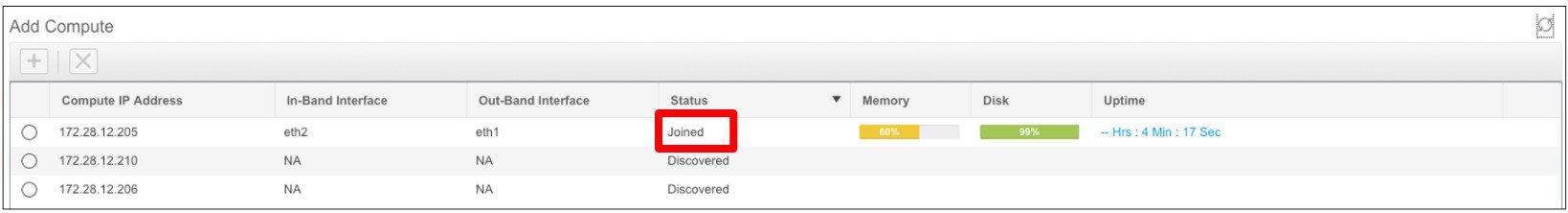

When a Compute is installed with the correct parameters, it appears as Joined in the Status column, while the other two Computes appear as Discovered.
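If you track Compute statuses outside the UI, a summary like the following shows which nodes still need to be added and whether all three have joined. This is a hypothetical Python sketch: it assumes a mapping of compute IP to the Status column value, using the statuses this section describes (Discovered, Joining, Joined, Offline).

```python
CLUSTER_SIZE = 3  # Cisco DCNM supports a maximum of three Computes


def cluster_report(statuses: dict[str, str]) -> dict:
    """Summarize a hypothetical {compute IP: Status column value} mapping."""
    joined = sum(1 for s in statuses.values() if s == "Joined")
    return {
        # Nodes still waiting for an admin to add them from the UI.
        "to_add": sorted(ip for ip, s in statuses.items() if s == "Discovered"),
        # Offline nodes run no applications.
        "offline": sorted(ip for ip, s in statuses.items() if s == "Offline"),
        # True once all three computes have joined the cluster.
        "complete": joined == CLUSTER_SIZE,
    }


print(cluster_report({
    "192.0.2.21": "Joined",
    "192.0.2.22": "Discovered",
    "192.0.2.23": "Discovered",
}))
# {'to_add': ['192.0.2.22', '192.0.2.23'], 'offline': [], 'complete': False}
```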

To add computes into the cluster mode from Cisco DCNM Web UI, perform the following steps:

Procedure

| Step 1 |

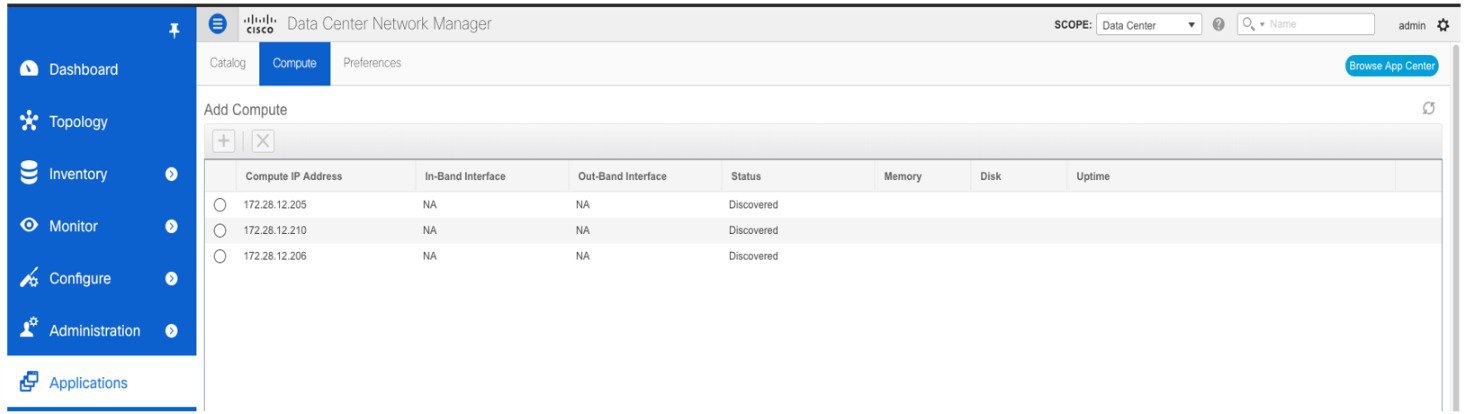

Choose Applications > Compute. The Compute tab displays the computes enabled on the Cisco DCNM. |

||

| Step 2 |

Select a Compute node which is in Discovered status. Click the Add Compute (+) icon.

The Compute window can also be used to monitor the health of computes. The health essentially indicates the amount of memory left in the compute, this is based on applications that are enabled. If a Compute is not properly communicating with the DCNM Server, the status of the Compute appears as Offline, and no applications will be running on Offline Computes. Most applications do not function properly if there are less than three computes, while a short loss of a single Compute node is mostly fine. In such cases, refer to the requirements of the individual applications. |

||

| Step 3 |

In the Add Compute dialog box, verify the Compute IP Address, In-Band Interface, and the Out-Band Interface values.

|

||

| Step 4 |

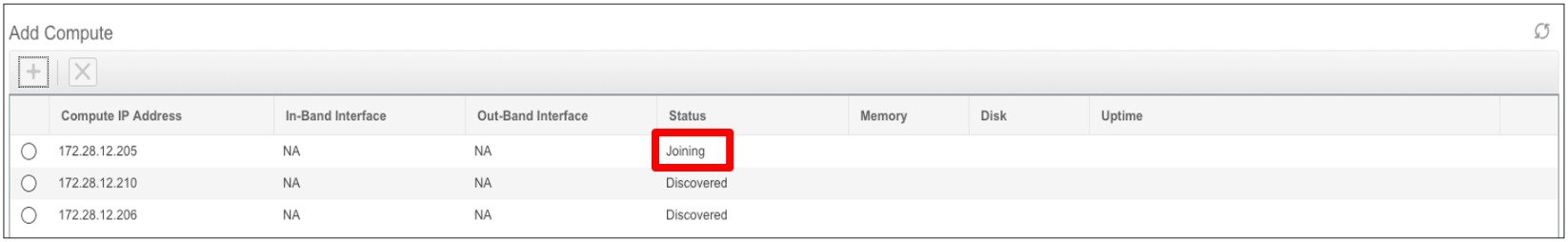

Click OK. The Status for that Compute IP changes to Joining.

You must wait until the Compute IP status shows Joined.

|

||

| Step 5 |

Repeat the above steps to add the remaining compute node. All the Computes appear as Joined.

|
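Step 4 asks you to wait until the status shows Joined. If you script that wait, a polling loop like the following is one way to do it. It is a sketch under stated assumptions: `get_status` is a callable you would have to provide (for example, one that reads the Status column), not a DCNM API, and the 900-second timeout and 10-second interval are arbitrary defaults.

```python
import time
from typing import Callable


def wait_until_joined(get_status: Callable[[str], str], compute_ip: str,
                      timeout_s: float = 900.0, interval_s: float = 10.0,
                      sleep: Callable[[float], None] = time.sleep) -> None:
    """Poll until compute_ip reports Joined.

    get_status is a hypothetical callable supplied by the caller; it is
    not part of DCNM. sleep is injectable so the loop can be tested.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        status = get_status(compute_ip)
        if status == "Joined":
            return
        # Joining is expected after clicking OK; the UI may briefly still
        # show Discovered. Anything else (e.g. Offline) is treated as failure.
        if status not in ("Joining", "Discovered"):
            raise RuntimeError(f"{compute_ip} entered unexpected status {status!r}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"{compute_ip} still {status!r} after {timeout_s}s")
        sleep(interval_s)
```

Injecting `sleep` keeps the loop testable without real delays; in normal use you would leave the default `time.sleep`.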

Telemetry Network and NTP Requirements

For the Network Insights Resource (NIR) application, a UTR microservice running inside NIR receives telemetry traffic from the switches through either the Out-Of-Band (Eth1) or the In-Band (Eth2) interface. By default, telemetry streams over the Out-Of-Band interface; you can change it to the In-Band interface.

Telemetry using Out-of-Band (OOB) network

By default, the telemetry data is streamed through the management interface of the switches to the Cisco DCNM OOB network eth1 interface. This is a global configuration for all fabrics in a Cisco DCNM LAN Fabric deployment, or for all switch groups in a Cisco DCNM Classic LAN deployment. After telemetry is enabled through the Network Insights Resources (NIR) application, the telemetry manager in Cisco DCNM pushes the necessary NTP server configuration to the switches, using the DCNM OOB IP address as the NTP server IP address, as shown below:

```
switch# show run ntp

!Command: show running-config ntp
!Running configuration last done at: Thu Jun 27 18:03:07 2019
!Time: Thu Jun 27 20:32:18 2019

version 7.0(3)I7(6) Bios:version 07.65
ntp server 192.168.126.117 prefer use-vrf management
```
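If you change NTP settings manually, you can confirm that a switch still points at the DCNM OOB address by parsing `show run ntp` output such as the sample above. The Python sketch below extracts each `ntp server` line and its `use-vrf` value; the helper name and the `dcnm_oob_ip` variable are hypothetical, and the parsing only covers the line format shown in the sample.

```python
import re

# Matches top-level "ntp server <address> [options...]" lines.
NTP_LINE = re.compile(r"^ntp server (?P<server>\S+)(?P<opts>.*)$", re.MULTILINE)


def ntp_servers(show_run_ntp: str) -> dict[str, str]:
    """Map each configured NTP server to its VRF ('' if none is given)."""
    servers = {}
    for m in NTP_LINE.finditer(show_run_ntp):
        vrf = re.search(r"\buse-vrf (\S+)", m.group("opts"))
        servers[m.group("server")] = vrf.group(1) if vrf else ""
    return servers


output = """\
!Command: show running-config ntp
version 7.0(3)I7(6) Bios:version 07.65
ntp server 192.168.126.117 prefer use-vrf management
"""
dcnm_oob_ip = "192.168.126.117"  # replace with your DCNM OOB (eth1) address
print(ntp_servers(output).get(dcnm_oob_ip) == "management")  # True
```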

Telemetry using In-Band (IB) network

The switches stream telemetry data through their front-panel ports to Cisco DCNM, provided that the switches have connectivity to the Cisco DCNM In-Band network eth2 interface.
