Jitter is a simple term that describes interpacket delay variance. When multiple packets are sent consecutively at an interval of 10 ms from source to destination, the destination should receive them 10 ms apart (if the network is behaving correctly). However, if there are delays in the network (such as queuing, arriving through alternate routes, and so on), the time interval between packet arrivals might be more or less than 10 ms. A positive jitter value indicates that the packets arrived more than 10 ms apart. A negative jitter value indicates that the packets arrived less than 10 ms apart. If the packets arrive 12 ms apart, the positive jitter is 2 ms; if the packets arrive 8 ms apart, the negative jitter is 2 ms. For delay-sensitive networks, positive jitter values are undesirable, and a jitter value of 0 is ideal.

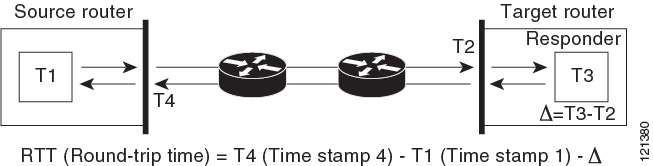
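The arithmetic above can be sketched in a few lines of Python. This is only an illustration of the definition, not how IP SLAs computes jitter internally; the function name `interpacket_jitter` and the sample arrival times are hypothetical.

```python
def interpacket_jitter(arrival_ms, send_interval_ms=10):
    """Return the signed jitter (ms) for each consecutive pair of arrivals.

    Positive: packets arrived further apart than they were sent.
    Negative: packets arrived closer together than they were sent.
    """
    gaps = [later - earlier for earlier, later in zip(arrival_ms, arrival_ms[1:])]
    return [gap - send_interval_ms for gap in gaps]


# Hypothetical arrival times (ms) for packets sent 10 ms apart.
arrivals = [0, 12, 20, 30]
print(interpacket_jitter(arrivals))  # [2, -2, 0]
```

The first gap is 12 ms (positive jitter of 2 ms), the second is 8 ms (negative jitter of 2 ms), and the third matches the send interval exactly.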

In addition to monitoring jitter, the IP SLA UDP jitter operation can be used as a multipurpose data gathering operation. The packets generated by IP SLAs carry sequence information and time stamps from both the source and the operational target, recording when each packet was sent and received. Based on this data, UDP jitter operations measure the following:

- Per-direction jitter (source to destination and destination to source)
- Per-direction packet loss
- Per-direction delay (one-way delay)
- Round-trip delay (average round-trip time)

Because the paths for the sending and receiving of data can be different (asymmetric), you can use the per-direction data to more readily identify where congestion or other problems are occurring in the network.
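As a rough sketch of how per-direction values can be derived from the time stamps, the following Python assumes each probe records four time stamps: sent by the source, received by the target, sent back by the target, and received by the source. That four-stamp layout, the `Probe` type, and the sample values are assumptions made for illustration; the actual IP SLAs packet format is not described here.

```python
from dataclasses import dataclass


@dataclass
class Probe:
    seq: int
    src_sent: int  # stamped by the source clock (ms)
    dst_recv: int  # stamped by the target clock (ms)
    dst_sent: int  # stamped by the target clock (ms)
    src_recv: int  # stamped by the source clock (ms)


def round_trip_ms(p):
    # Total time seen by the source minus the target's processing time.
    return (p.src_recv - p.src_sent) - (p.dst_sent - p.dst_recv)


def jitter_sd(prev, cur):
    # Source to destination: arrival gap at the target minus send gap at the source.
    return (cur.dst_recv - prev.dst_recv) - (cur.src_sent - prev.src_sent)


def jitter_ds(prev, cur):
    # Destination to source: arrival gap at the source minus send gap at the target.
    return (cur.src_recv - prev.src_recv) - (cur.dst_sent - prev.dst_sent)


# Hypothetical probes sent 10 ms apart; the second is delayed on the forward path only.
a = Probe(seq=1, src_sent=0, dst_recv=5, dst_sent=6, src_recv=11)
b = Probe(seq=2, src_sent=10, dst_recv=18, dst_sent=19, src_recv=24)

print(round_trip_ms(a), round_trip_ms(b))  # 10 13
print(jitter_sd(a, b), jitter_ds(a, b))    # 3 0
```

In this made-up sample the round-trip time rises by 3 ms, and the per-direction jitter shows that the extra delay occurred entirely on the source-to-destination path.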

The UDP jitter operation generates synthetic (simulated) UDP traffic: it sends a number of UDP packets, each of a specified size and a specified number of milliseconds apart, from a source router to a target router, and repeats this at a given frequency. By default, ten packet frames, each with a payload size of 10 bytes, are generated 10 ms apart, and the operation is repeated every 60 seconds. You can configure each of these parameters to best simulate the IP service you want to provide.
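To make the default traffic pattern concrete, here is a small Python model of the four parameters. The class and field names are hypothetical and are not device commands or an IP SLAs API; only the default values come from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UdpJitterParams:
    num_packets: int = 10    # packets per operation
    payload_bytes: int = 10  # payload size of each packet
    interval_ms: int = 10    # spacing between packets
    frequency_s: int = 60    # how often the operation repeats

    def send_offsets_ms(self):
        """Offsets (ms) from the start of one operation at which packets are sent."""
        return [i * self.interval_ms for i in range(self.num_packets)]

    def payload_bytes_per_run(self):
        """Total payload bytes sent in one operation."""
        return self.num_packets * self.payload_bytes


defaults = UdpJitterParams()
print(defaults.send_offsets_ms())        # [0, 10, 20, 30, 40, 50, 60, 70, 80, 90]
print(defaults.payload_bytes_per_run())  # 100
```

With the defaults, each operation is a 90 ms burst carrying 100 bytes of payload, repeated once a minute. Changing the fields (for example, larger payloads at a different spacing) models a different traffic profile.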

To provide accurate one-way delay (latency) measurements, time synchronization (as provided by NTP) is required between the source and the target device. Time synchronization is not required for the one-way jitter and packet loss measurements. If the time is not synchronized between the source and target devices, one-way jitter and packet loss data is returned, but values of 0 are returned for the one-way delay measurements provided by the UDP jitter operation.
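One way to see why jitter does not depend on synchronized clocks while one-way delay does: a constant clock offset between the two devices shifts every delay value by the same amount, but cancels out when consecutive delays are subtracted. The Python below is a minimal sketch of that reasoning with hypothetical time stamps and a made-up offset of 1000 ms; it does not reproduce the device's behavior of returning 0.

```python
def one_way_delay(sent_ms, recv_ms):
    # Receive time (target clock) minus send time (source clock), per packet.
    return [r - s for s, r in zip(sent_ms, recv_ms)]


def one_way_jitter(sent_ms, recv_ms):
    # Difference between consecutive one-way delays.
    delays = one_way_delay(sent_ms, recv_ms)
    return [later - earlier for earlier, later in zip(delays, delays[1:])]


sent = [0, 10, 20]        # source clock
true_recv = [5, 18, 25]   # target clock, perfectly synchronized
clock_offset = 1000       # hypothetical unsynchronized target clock
skewed_recv = [t + clock_offset for t in true_recv]

print(one_way_delay(sent, true_recv))     # [5, 8, 5]
print(one_way_delay(sent, skewed_recv))   # [1005, 1008, 1005]
print(one_way_jitter(sent, true_recv))    # [3, -3]
print(one_way_jitter(sent, skewed_recv))  # [3, -3]
```

The delay values are meaningless without synchronization, but the jitter values are identical in both cases.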
