vzAny with PBR Overview

The following sections provide an overview, requirements and guidelines, and configuration steps for enabling vzAny contracts with Policy-Based Redirect (PBR) in your Multi-Site domain. For an overview of vzAny in general and basic vzAny use cases that do not include PBR, see the vzAny Contracts chapter instead.

Use Cases

Prior to release 4.2(3), the following basic vzAny use cases (without PBR) were supported with Multi-Site, all of which are described in the vzAny Contracts chapter:

- Free communication between EPGs within the same VRF.

- Many-to-one communication allowing all EPGs within the same VRF to consume a shared service from a single EPG that is in the same or different VRF.

Beginning with NDO release 4.2(3), the following additional use cases for vzAny with PBR are supported for ACI fabrics running APIC release 6.0(4) or later, which allow redirecting traffic to a logical firewall service connected in each site in one-arm mode:

- Any intra-VRF communication (vzAny-to-vzAny) between two EPGs or External EPGs within the same VRF.

- Many-to-one communication between all the EPGs in a VRF (vzAny) and a specific EPG that is part of the same VRF.

- Many-to-one communication between all the EPGs in a VRF (vzAny) and a specific External EPG that is part of the same VRF.
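The release requirements above (NDO 4.2(3) or later, APIC 6.0(4) or later) can be checked with a few lines of Python. This is a minimal sketch, not an NDO or APIC API: the function names are hypothetical, and the parser assumes release strings of the form `major.minor(maintenance)` with an optional trailing patch letter, such as `6.0(4c)`.

```python
import re

# Minimum releases stated in this section.
MIN_NDO_RELEASE = "4.2(3)"
MIN_APIC_RELEASE = "6.0(4)"


def parse_release(release):
    """Parse a string such as '4.2(3)' or '6.0(4c)' into (major, minor, maintenance).

    Assumption: an optional lowercase patch letter may follow the maintenance
    number; it is ignored for the comparison.
    """
    match = re.match(r"^(\d+)\.(\d+)\((\d+)[a-z]*\)$", release.strip())
    if match is None:
        raise ValueError(f"unrecognized release string: {release!r}")
    return tuple(int(part) for part in match.groups())


def supports_vzany_pbr(ndo_release, apic_releases):
    """Return True if NDO and every site's APIC meet the minimum releases."""
    ndo_ok = parse_release(ndo_release) >= parse_release(MIN_NDO_RELEASE)
    apic_ok = all(
        parse_release(r) >= parse_release(MIN_APIC_RELEASE) for r in apic_releases
    )
    return ndo_ok and apic_ok
```

For example, `supports_vzany_pbr("4.2(3)", ["6.0(4)", "5.2(8)"])` evaluates to `False` because one fabric runs an APIC release earlier than 6.0(4).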

General Workflow for Configuring vzAny with PBR

The following sections describe how to create and configure the individual building blocks (such as templates, EPGs, and contracts) that are required for all of the vzAny with PBR use cases, followed by use-case-specific sections that provide the workflows necessary to put the individual building blocks together for the specific use case you want to configure.

When configuring any of the vzAny with PBR use cases, you will go through the following workflow which includes the new Service Device templates introduced in release 4.2(3) and used to define service graph configurations:

-

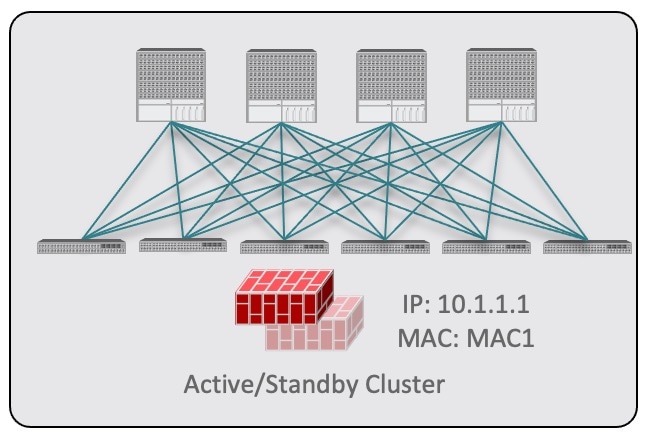

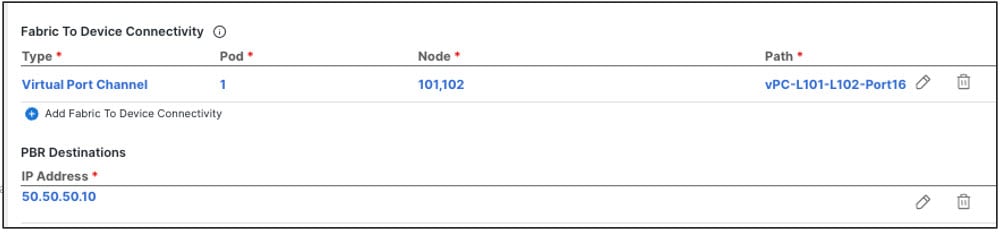

Create a Service Device template and associate it to a specific tenant and to all the sites where the configuration is required, which includes:

-

(Optional) Referencing an IP SLA policy.

The IP SLA policy must be already defined in a Tenant Policy template associated to the same tenant.

-

Creating one or more service node devices in the Service Device template.

Note that when you create a service device configuration, you will need to provide a bridge domain which must already exist in one of the Application templates. The exact BD requirements are listed in the following vzAny with PBR Guidelines and Limitations section.

-

Providing site-level configurations for the service node device defined in the Service Device template and deploying it.

Note

Beginning with release 4.2(3) and the introduction of Service Device templates, there's no Service Graph object that must be explicitly created in Nexus Dashboard Orchestrator for PBR use cases. NDO implicitly creates the service graph and deploys it in the site's APIC.

-

-

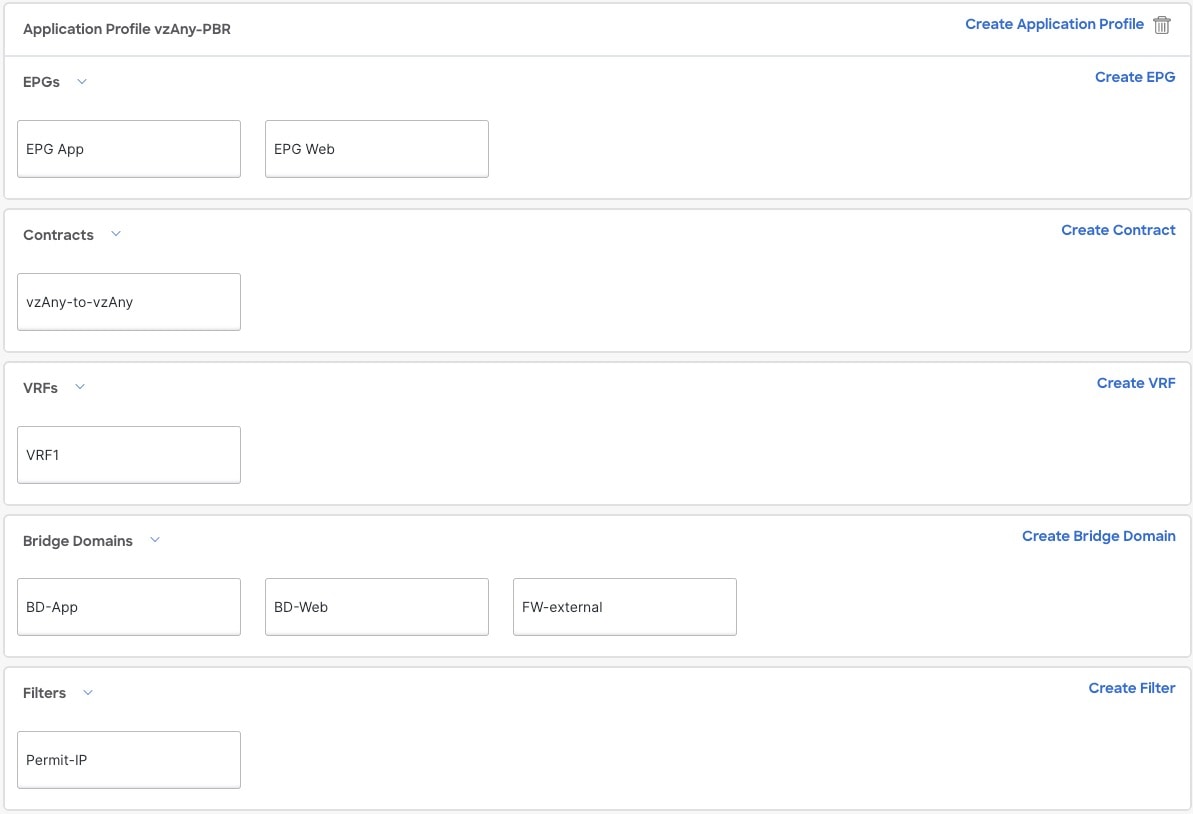

Complete the configuration for the specific tenant associated to the Service Device template that you just created, which includes:

-

Creating a Tenant Application template and assigning it to all sites where the configuration is required.

-

Configuring vzAny VRF settings required to enable PBR and a contract.

-

Configuring the consumer and provider EPGs.

While the service BD must be stretched across sites, the BDs you use for the EPGs can be stretched or site-local.

-

-

Associate the service device you created in Step 1 with the vzAny contract you created in Step 2.

Note |

Refer to the ACI Contract Guide and the ACI PBR White Paper to understand Cisco ACI contract and PBR terminology. |

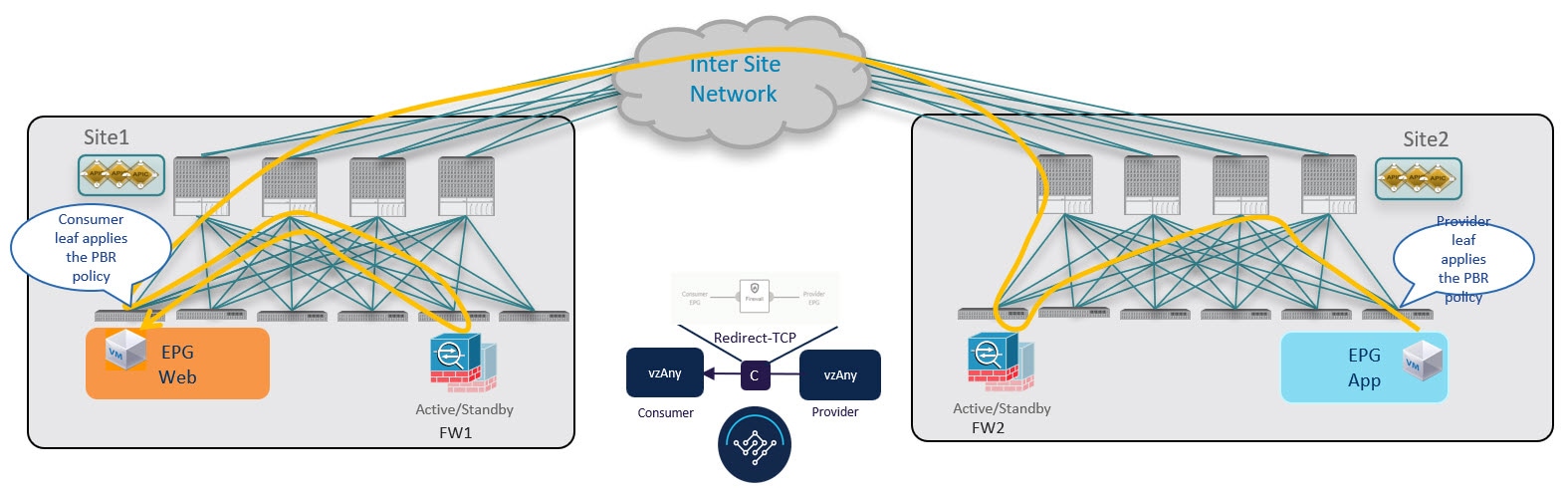

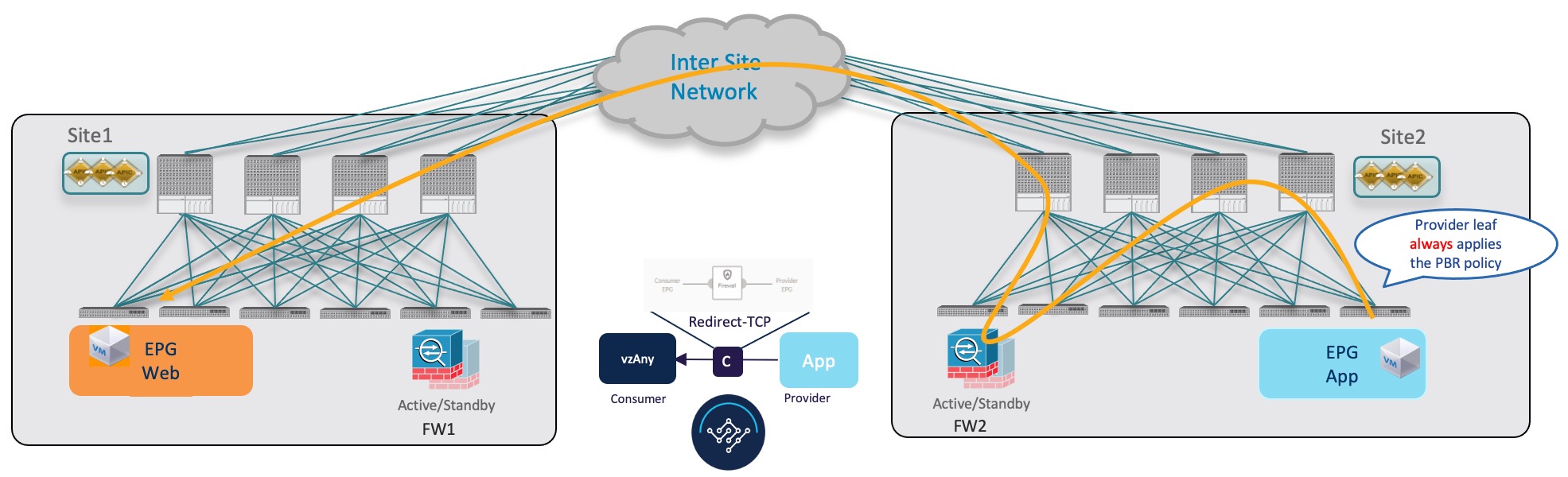

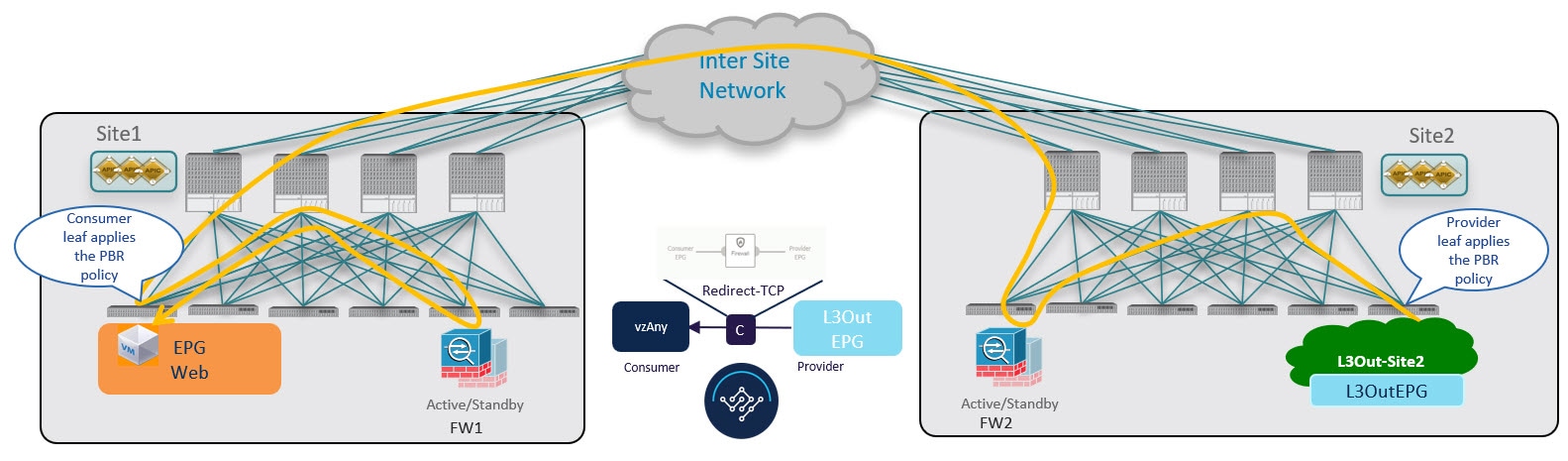
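The prerequisites named in the workflow above (an optional IP SLA policy that must already exist for the same tenant, at least one service node device, and a service BD that exists in an Application template and is stretched across sites) can be captured as a simple pre-deployment check. The sketch below is illustrative only: the classes and the `check_prerequisites` function are a hypothetical data model, not NDO objects or NDO API calls.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class BridgeDomain:
    name: str
    stretched: bool  # True if the BD is stretched across sites


@dataclass
class ServiceDevice:
    name: str
    bd_name: str  # service BD the device is attached to


@dataclass
class ServiceDeviceTemplate:
    tenant: str
    sites: Set[str]
    devices: List[ServiceDevice] = field(default_factory=list)
    ip_sla_policy: Optional[str] = None  # optional, per the workflow


def check_prerequisites(
    template: ServiceDeviceTemplate,
    app_template_bds: Dict[str, BridgeDomain],
    tenant_ip_sla_policies: Set[str],
) -> List[str]:
    """Return a list of problems; an empty list means all checks passed."""
    problems = []
    # An IP SLA policy is optional, but if referenced it must already exist
    # in a Tenant Policy template for the same tenant.
    if (
        template.ip_sla_policy is not None
        and template.ip_sla_policy not in tenant_ip_sla_policies
    ):
        problems.append(f"IP SLA policy '{template.ip_sla_policy}' is not defined")
    # The workflow calls for one or more service node devices.
    if not template.devices:
        problems.append("no service node device is defined")
    for device in template.devices:
        bd = app_template_bds.get(device.bd_name)
        if bd is None:
            problems.append(f"service BD '{device.bd_name}' does not exist")
        elif not bd.stretched:
            problems.append(f"service BD '{device.bd_name}' is not stretched")
    return problems
```

Returning a list of problems rather than raising on the first one lets a caller report every missing building block in a single pass.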

Traffic Flow: Intra-VRF vzAny-to-vzAny

This section summarizes the traffic flow between two EPGs that are part of the logical vzAny construct for a given VRF in different sites. In this use case, vzAny is both the provider and the consumer of a PBR contract.

Note |

In this case, the traffic flow in both directions is redirected through both firewalls in order to avoid asymmetric traffic flows due to independent FW nodes deployed in the two sites. |

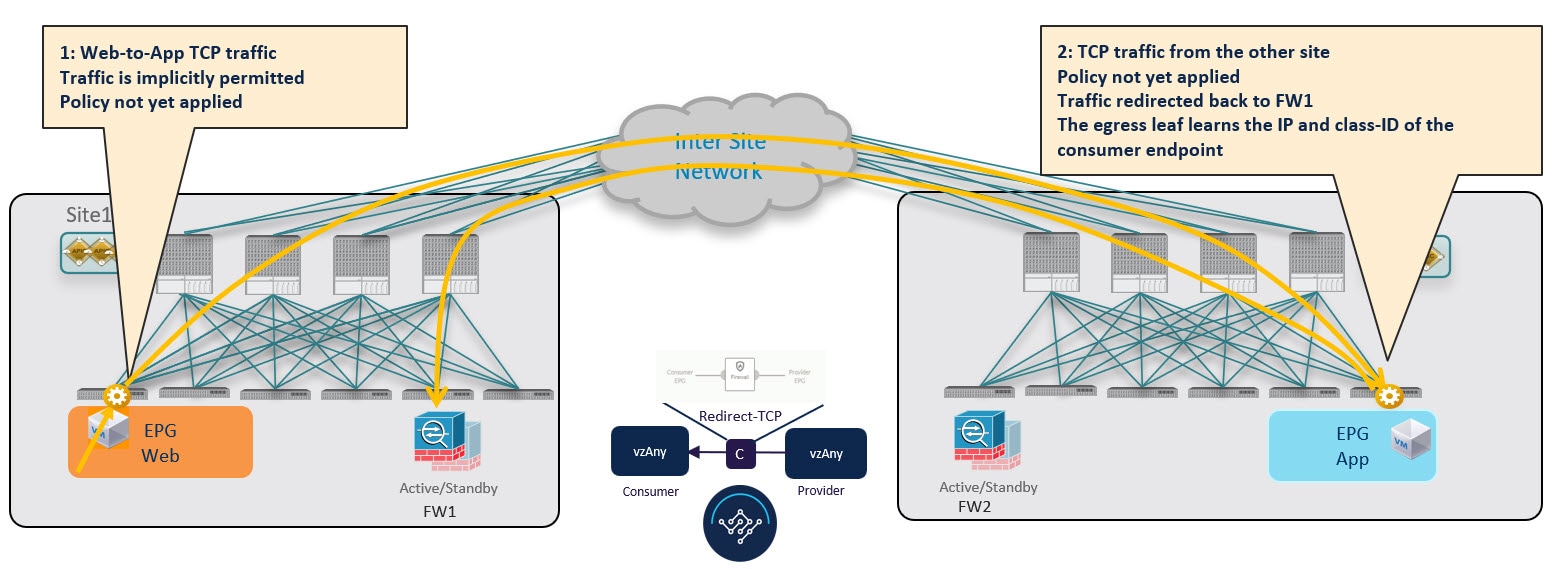

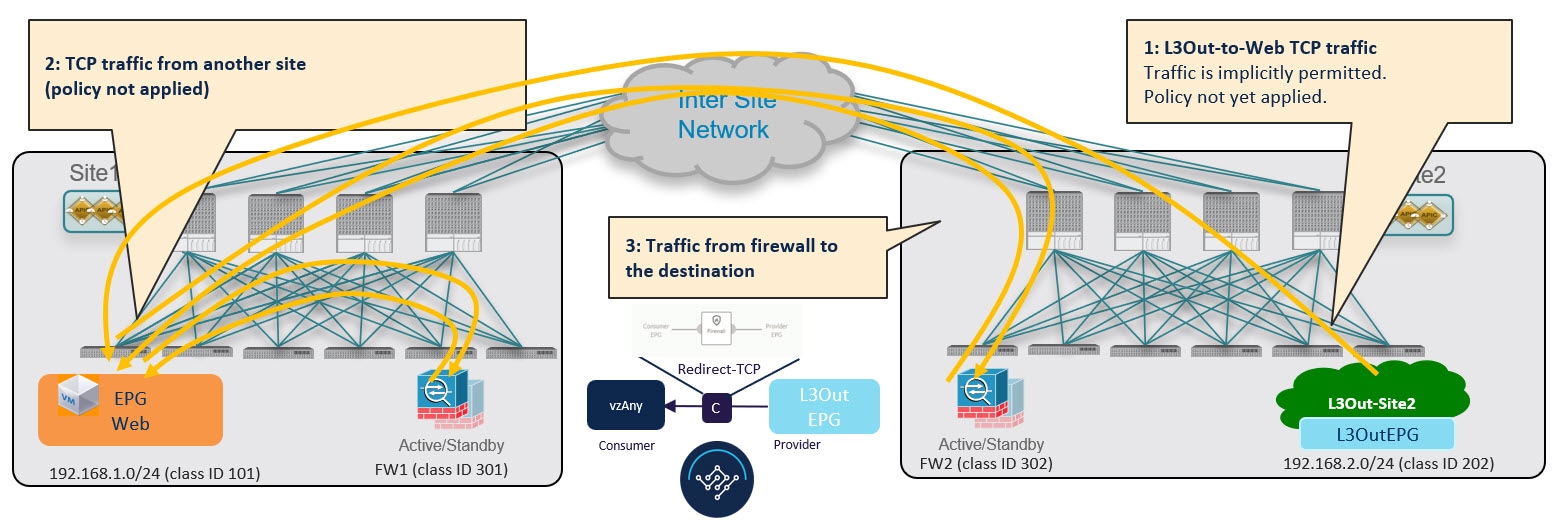

Initial Consumer-to-Provider Traffic Flow and Conversational Learning

The design principle for redirecting traffic to the FW service nodes in both the local site and the remote site is that the PBR policy should always be applied on the ingress leaf switch for both directions of the traffic flow. For this to happen, the ingress leaf switch must know the destination endpoint's policy information (Class-ID). The figure below shows an example where communication is initiated from the consumer endpoint and the ingress (consumer) leaf switch does not yet have the Class-ID information for the destination (provider) endpoint, so the traffic is simply forwarded toward the destination connected to the remote site. This release implements new logic to support this use case: the provider leaf switch that receives the traffic can determine that the flow originated in Site 1 but has not been sent through the firewall service node connected in that site. As a result, after learning the consumer endpoint information (Class-ID), the provider leaf in Site 2 bounces the traffic back toward the firewall in Site 1.

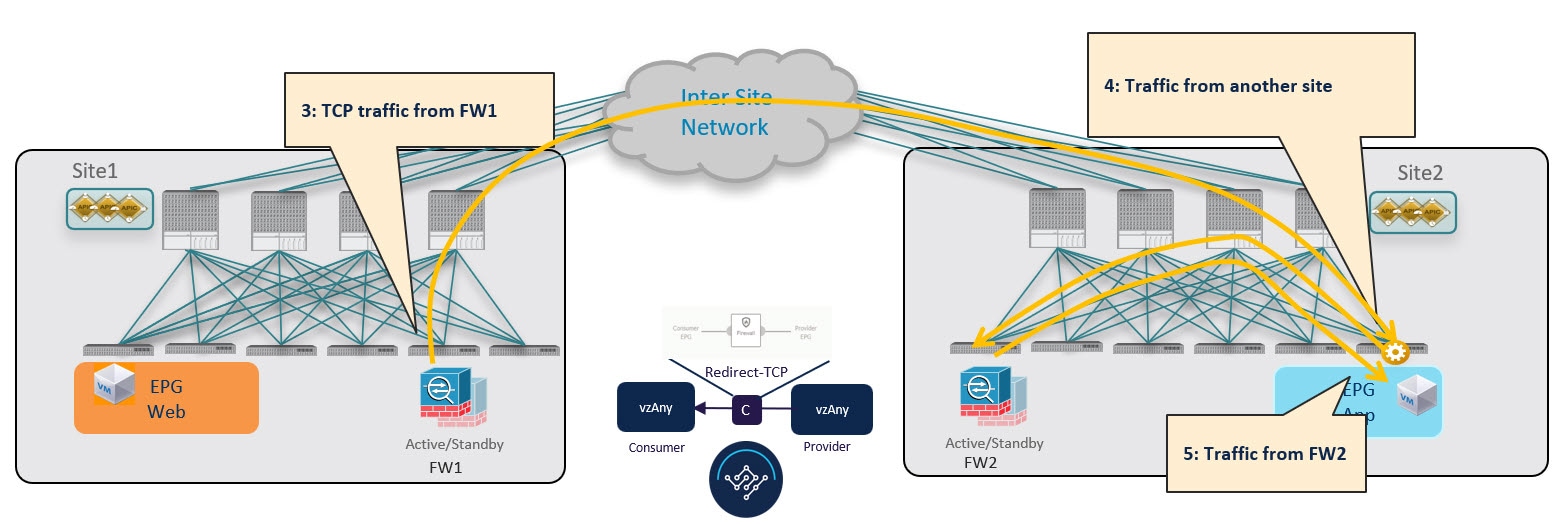

The firewall in Site 1 applies the security policy, then the traffic is forwarded again to the destination leaf switch in Site 2. This leaf is now able to understand that, while the traffic is still coming from Site 1, it now has been sent through the firewall deployed in that site. As a result, the destination leaf switch forwards the packet to its local firewall device for inspection and after that it is delivered to the destination endpoint as shown in the following figure.

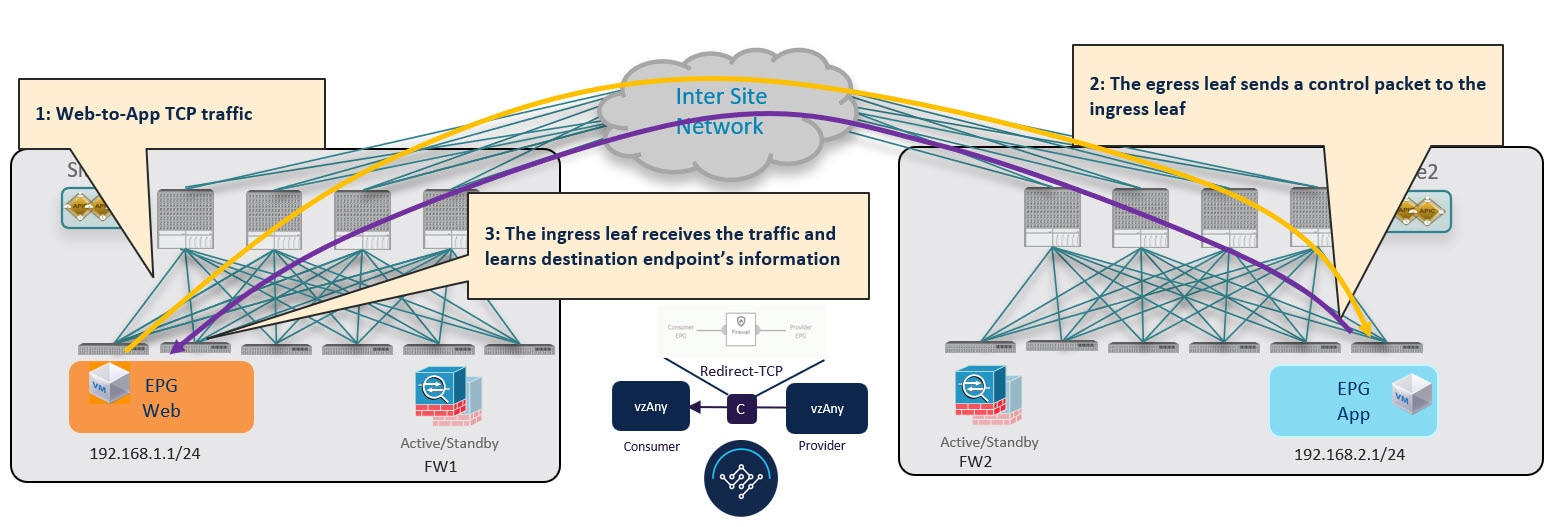

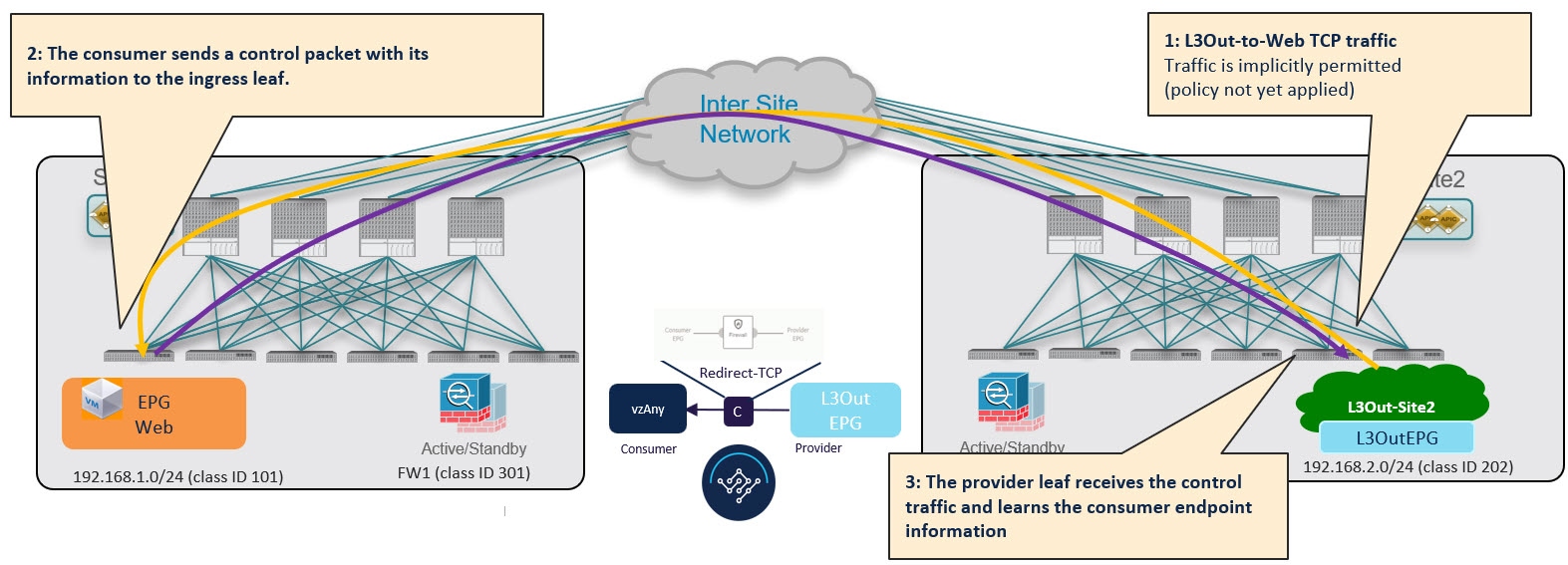

In order to avoid the suboptimal bounce of traffic shown in Conversational Learning, the provider leaf switch generates a special control packet and sends it to the consumer leaf switch in Site 1, so that the consumer leaf can learn the provider endpoint's Class-ID information.

Note |

The same behavior described above for the consumer-to-provider traffic direction applies if the initial flow is established in the provider-to-consumer direction instead. |

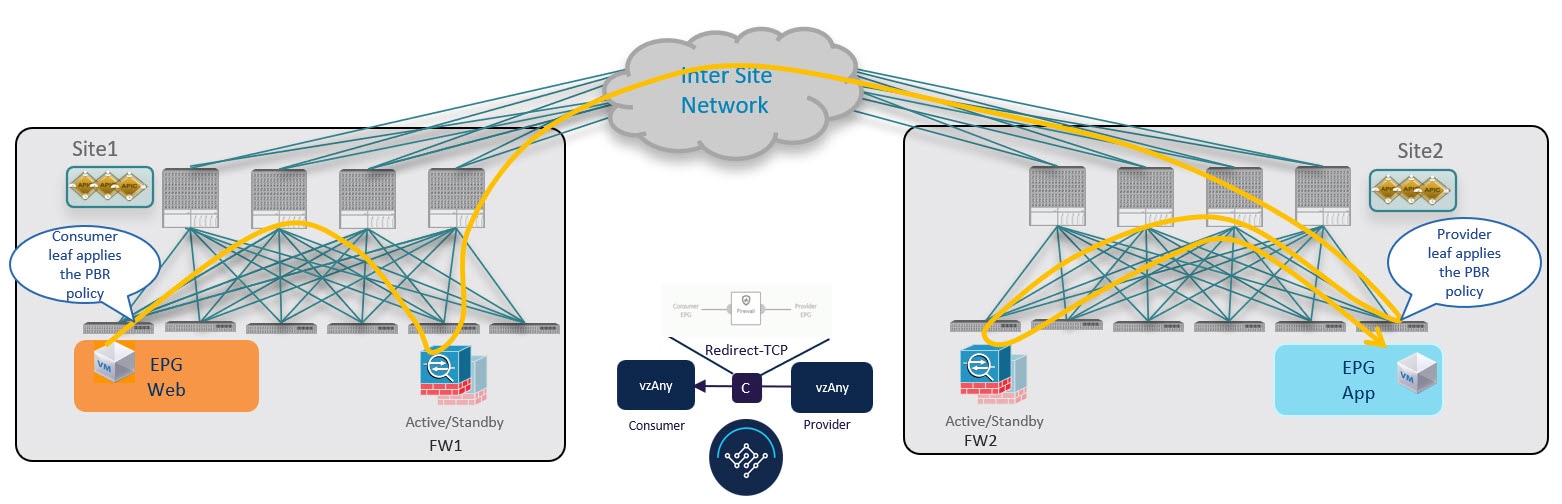

Consumer-to-Provider Traffic Flow (at Steady State)

After the consumer leaf switch has learned the provider endpoint information from the conversational learning stage described above, it can apply policy and redirect traffic to its local firewall for all future traffic.

Provider-to-Consumer Traffic Flow (at Steady State)

After the provider leaf switch has learned the consumer endpoint information either from the direct packet shown in Conversational Learning or based on conversational learning, it can apply policy and redirect traffic to its local firewall for all future traffic:

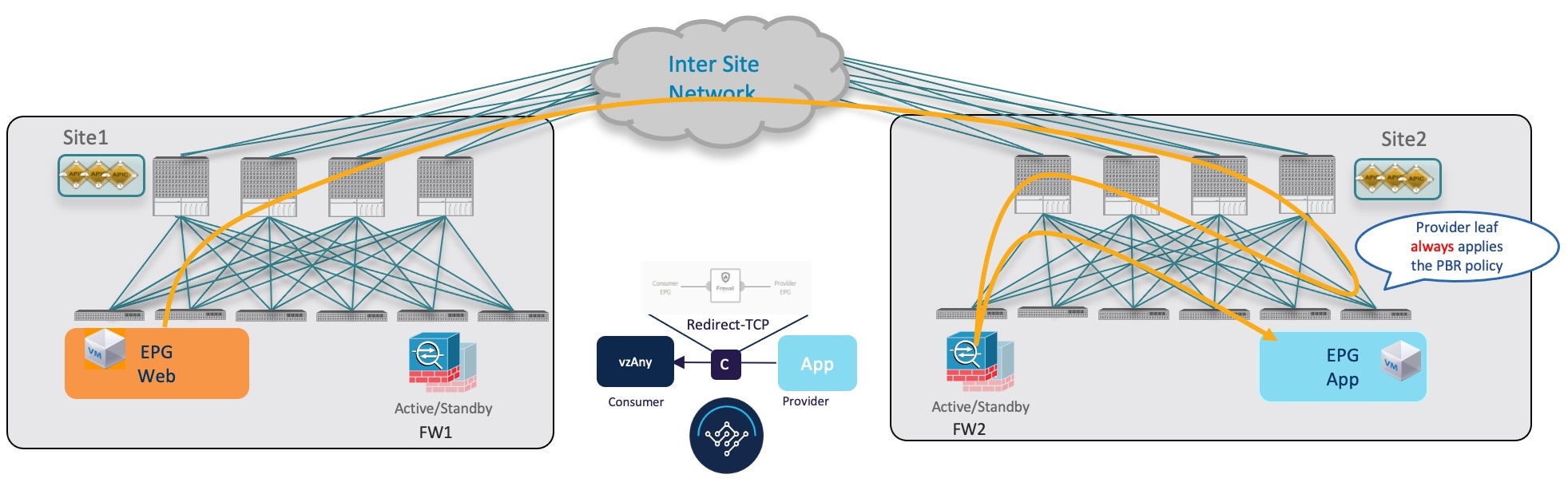
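The difference between the initial flow and the steady-state flow in the vzAny-to-vzAny case can be illustrated with a toy model. The sketch below is not how the leaf switches are implemented; it only mirrors the sequence described in this section for consumer-to-provider traffic. All names (`Leaf`, `send_consumer_to_provider`, the hop labels) are hypothetical, and intermediate fabric hops such as spines are omitted.

```python
from dataclasses import dataclass, field


@dataclass
class Leaf:
    name: str
    # Endpoints whose Class-ID this leaf has learned.
    known_endpoints: set = field(default_factory=set)


def send_consumer_to_provider(consumer_leaf, provider_leaf, consumer_ep, provider_ep):
    """Return the simplified hop list for one consumer-to-provider packet.

    Site 1 hosts the consumer and Site 2 hosts the provider.
    """
    hops = [consumer_ep, consumer_leaf.name]
    if provider_ep in consumer_leaf.known_endpoints:
        # Steady state: the ingress leaf applies PBR and redirects to its local firewall.
        hops += ["fw-site1", provider_leaf.name]
    else:
        # Initial flow: the ingress leaf cannot apply policy and forwards across sites.
        hops.append(provider_leaf.name)
        # The provider leaf learns the consumer and bounces traffic to the Site 1 firewall.
        provider_leaf.known_endpoints.add(consumer_ep)
        hops += ["fw-site1", provider_leaf.name]
        # The control packet from the provider leaf teaches the consumer leaf the Class-ID.
        consumer_leaf.known_endpoints.add(provider_ep)
    # In both cases the provider leaf then redirects to its own local firewall.
    hops += ["fw-site2", provider_ep]
    return hops
```

Calling the function twice with the same two `Leaf` objects shows the bounce through the Site 2 leaf on the first packet only; the second packet goes straight from the consumer leaf to the Site 1 firewall.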

Traffic Flow: Intra-VRF vzAny-to-EPG

This section summarizes the traffic flow between a consumer EPG that is part of the logical vzAny construct for a given VRF and a provider EPG that is part of the same VRF. In this use case, vzAny is the consumer of the PBR contract, whereas a specific EPG is the provider.

Note |

Unlike the vzAny-to-vzAny and vzAny-to-L3Out use cases where traffic always flows through the firewall devices in both sites, vzAny-to-EPG always uses only the device in the provider's site. |

Consumer-to-Provider Traffic Flow

For the vzAny-to-EPG use case, policy is applied on the provider leaf switch only regardless of the traffic direction. So for consumer-to-provider traffic, the consumer EPG sends traffic directly to the provider EPG's leaf switch, which learns the consumer endpoint information (Class-ID) and redirects the traffic to its local firewall for inspection:

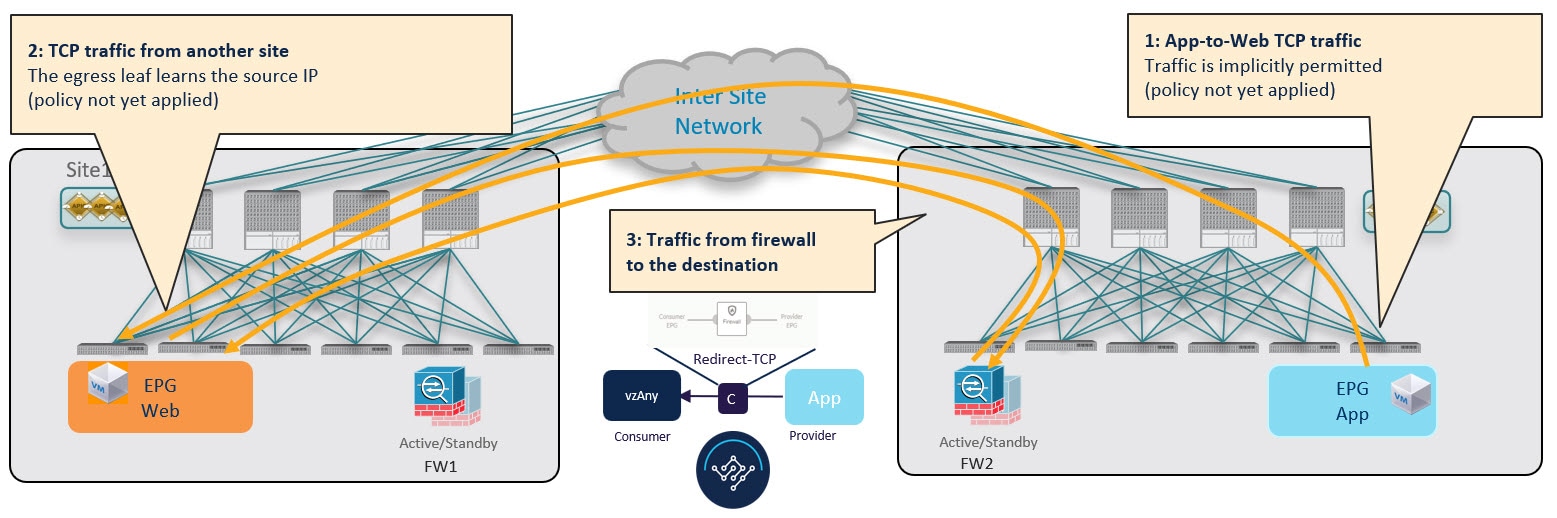

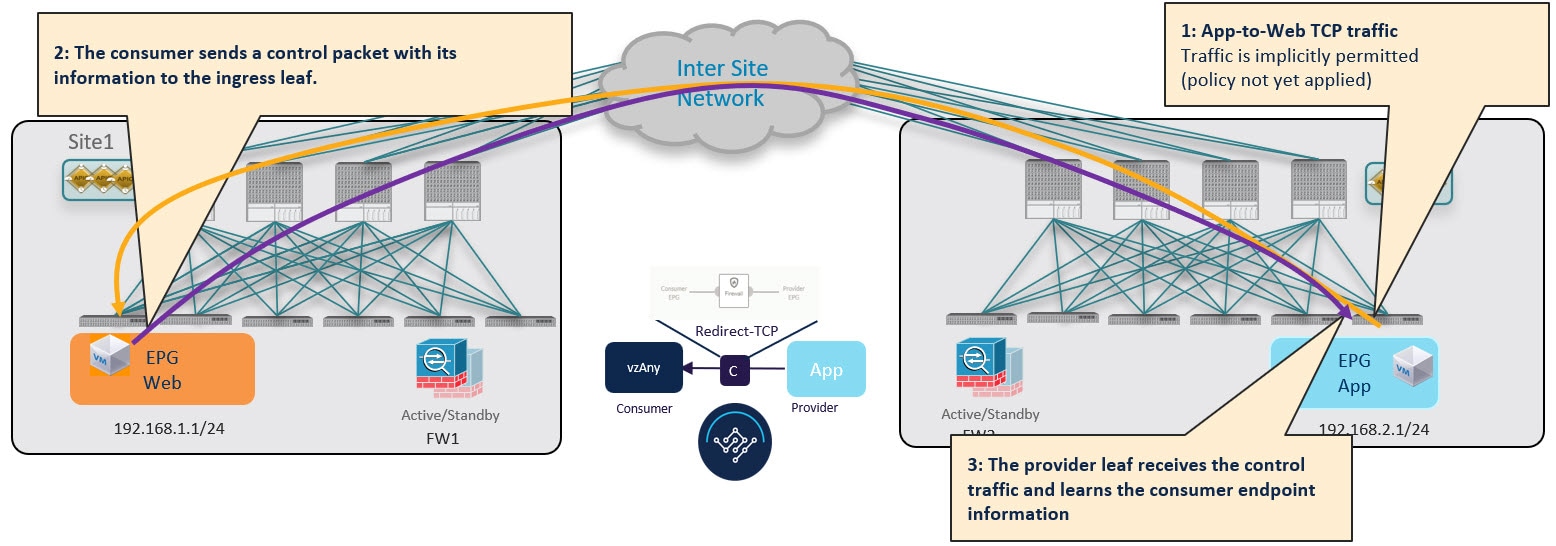

Provider-to-Consumer Traffic Flow (Initial Traffic and Conversational Learning)

If the communication is initiated by the provider endpoint before the provider leaf switch can learn the consumer endpoint information (Class-ID), it cannot apply the policy to redirect traffic to its local firewall, so the traffic is sent across sites to the consumer leaf switch. Because the policy was not applied (indicated by a control bit in the packet), the consumer leaf switch redirects the traffic back to the provider site's firewall for inspection, which finally bounces the traffic back to the consumer endpoint.

While this suboptimal traffic flow can continue indefinitely, the consumer EPG's leaf switch also sends a separate control packet to the provider leaf switch with the consumer endpoint information in order to optimize future traffic and prevent it from bouncing between the two sites.

Provider-to-Consumer Traffic Flow (at Steady State)

After the provider leaf switch has learned the consumer endpoint information either from the direct packet originated from the consumer endpoint shown in vzAny-to-EPG Consumer-to-Provider Traffic Flow or based on conversational learning, it can apply policy and redirect traffic to its local firewall for all future traffic:

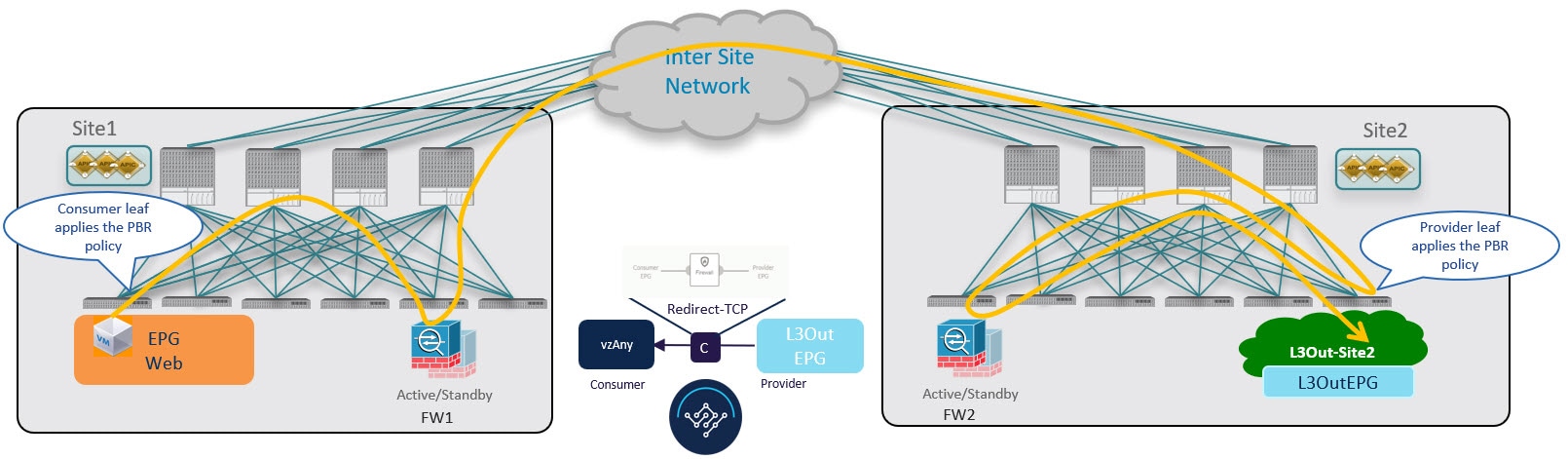
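In the vzAny-to-EPG case, policy is applied only on the provider leaf, so the only state that matters is whether the provider leaf has learned the consumer endpoint. The following toy model mirrors the provider-to-consumer and consumer-to-provider descriptions in this section. It is a sketch under stated assumptions: the class, function, and hop names are hypothetical, intermediate fabric hops are omitted, and the control bit is represented only by a comment.

```python
from dataclasses import dataclass, field


@dataclass
class ProviderLeaf:
    # Consumer endpoints whose Class-ID the provider leaf has learned.
    known_consumers: set = field(default_factory=set)


def consumer_to_provider_path(provider_leaf, consumer_ep):
    """Consumer-initiated traffic: the provider leaf learns the consumer and redirects."""
    provider_leaf.known_consumers.add(consumer_ep)
    return [consumer_ep, "consumer-leaf", "provider-leaf", "fw-provider-site", "provider-ep"]


def provider_to_consumer_path(provider_leaf, consumer_ep):
    """Provider-initiated traffic, before and after the consumer is learned."""
    if consumer_ep in provider_leaf.known_consumers:
        # Steady state: the provider leaf applies policy and uses its local firewall.
        return ["provider-ep", "provider-leaf", "fw-provider-site", "consumer-leaf", consumer_ep]
    # Initial flow: policy is not applied (signaled by a control bit in the packet),
    # so the consumer leaf sends the traffic back to the provider site's firewall.
    path = ["provider-ep", "provider-leaf", "consumer-leaf", "fw-provider-site", consumer_ep]
    # The consumer leaf's control packet lets the provider leaf learn the consumer.
    provider_leaf.known_consumers.add(consumer_ep)
    return path
```

In every path the only firewall hop is `fw-provider-site`, which matches the note that vzAny-to-EPG uses only the device in the provider's site.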

Traffic Flow: Intra-VRF vzAny-to-External-EPG (L3Out EPG)

This section summarizes the traffic flow between an EPG that is part of the logical vzAny construct for a given VRF and an external EPG (L3Out EPG) that is part of the same VRF in another site. In this use case, vzAny is the consumer of a vzAny contract, while an External EPG associated to the L3Out is the provider.

Note |

In this use case, the traffic is always redirected through firewall devices in both sites. |

Consumer-to-Provider Traffic Flow

The ingress leaf switch can always resolve the Class-ID of the destination external EPG and apply the PBR policy, redirecting the traffic to the local FW, so no conversational learning is necessary for traffic in this direction. Because the traffic is received by the provider leaf switch after going through the firewall node in Site 1, it is not possible for the provider leaf switch to learn the consumer endpoint information (Class-ID) from this data-plane communication.

Provider-to-Consumer Traffic Flow (Initial Traffic and Conversational Learning)

Before the provider leaf switch learns the consumer endpoint information, it cannot apply the policy to redirect traffic to its local firewall, so the traffic is sent across sites to the consumer leaf switch. Because the policy was not applied (indicated by a control bit in the packet), the consumer leaf switch redirects the traffic back to the provider site's firewall for inspection, which finally forwards the traffic back to the consumer endpoint.

While this traffic flow can continue indefinitely, the consumer leaf switch also sends a separate control packet to the provider leaf switch with the consumer endpoint information in order to optimize future traffic and prevent it from bouncing between the two sites.

Provider-to-Consumer Traffic Flow (at Steady State)

After the provider leaf switch has learned the consumer endpoint information, it applies the PBR policy and redirects traffic to its local firewall device first. The traffic is then sent across sites to the consumer leaf switch, which redirects it to the firewall device in its own site before delivering it to the consumer endpoint.
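The notes in the three traffic-flow sections differ mainly in which sites' firewalls inspect a flow. As a compact summary, the sketch below maps each use case to the set of sites whose firewall is traversed. The function name and the use-case labels are hypothetical, and the model covers only the cross-site scenarios described in this chapter.

```python
def firewall_sites(use_case, consumer_site, provider_site):
    """Return the set of sites whose firewall inspects the flow.

    Use-case labels are illustrative strings, not NDO identifiers.
    """
    if use_case == "vzany-to-epg":
        # Only the firewall in the provider's site is used.
        return {provider_site}
    if use_case in ("vzany-to-vzany", "vzany-to-l3out"):
        # Traffic is redirected through the firewalls in both sites.
        return {consumer_site, provider_site}
    raise ValueError(f"unknown use case: {use_case!r}")
```

For example, `firewall_sites("vzany-to-epg", "site1", "site2")` yields only `site2`, while the other two use cases yield both sites.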
